Nvidia Patents a System That Teaches Self-Driving Cars to Know When They're Flying Blind

Fog, heavy rain, or a dusty highway can cripple a self-driving car's sensors — and the car often doesn't know it's struggling. Nvidia's latest patent tackles that blind spot directly.

What Nvidia's sensor visibility score actually does for self-driving cars

Imagine you're driving in dense fog. You instinctively slow down, stop changing lanes, and avoid the highway on-ramp — because you know your visibility is limited. Today's self-driving systems often lack that same self-awareness about their own sensor limitations.

Nvidia's patent describes a system where machine learning models continuously analyze incoming sensor data — from cameras, lidar, or radar — and estimate how far those sensors can actually 'see' at any given moment. That estimated distance gets converted into a usability score that tells the car's planning software how much it can trust what it's perceiving.
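The article doesn't say how the distance becomes a score. As a minimal sketch, assume the score compares estimated visibility against the distance the car needs to stop at its current speed. That framing is ours, not the patent's. `VehicleState`, `usability_score`, the 1.5 s reaction time, and the 6 m/s² braking deceleration are all hypothetical.

```python
from dataclasses import dataclass

MPH_TO_MPS = 0.44704  # exact miles-per-hour to metres-per-second factor


@dataclass
class VehicleState:
    speed_mps: float               # current speed in metres per second
    reaction_time_s: float = 1.5   # hypothetical perception-to-brake latency
    decel_mps2: float = 6.0        # hypothetical firm braking deceleration


def stopping_distance(state: VehicleState) -> float:
    """Distance covered during the reaction time plus braking distance v^2 / (2a)."""
    v = state.speed_mps
    return v * state.reaction_time_s + v * v / (2.0 * state.decel_mps2)


def usability_score(visibility_m: float, state: VehicleState) -> float:
    """Hypothetical score in [0, 1]: the share of the needed sight distance the sensors cover."""
    needed = stopping_distance(state)
    if needed <= 0.0:
        return 1.0  # stationary vehicle: any visibility is enough
    return max(0.0, min(1.0, visibility_m / needed))


state = VehicleState(speed_mps=65 * MPH_TO_MPS)
print(round(stopping_distance(state), 1))      # 113.9
print(round(usability_score(60.0, state), 2))  # 0.53
```

Under these assumptions, a car at 65 mph needs roughly 114 m to stop, so a fog bank that limits sensing to 60 m yields a score of about 0.53. Because the score depends on speed, the same fog is less limiting at 25 mph. This is one reason a real system might prefer a relative measure over a raw distance.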

When visibility is high, the car gets full access to all its navigation and planning tools. When visibility drops, certain operations are restricted or disabled, much as a pilot in instrument-only conditions flies under tighter rules than in clear weather. The car doesn't guess; it adapts based on a real-time estimate of its own perceptual limits.

How Nvidia's ML model converts sensor data into a usability score

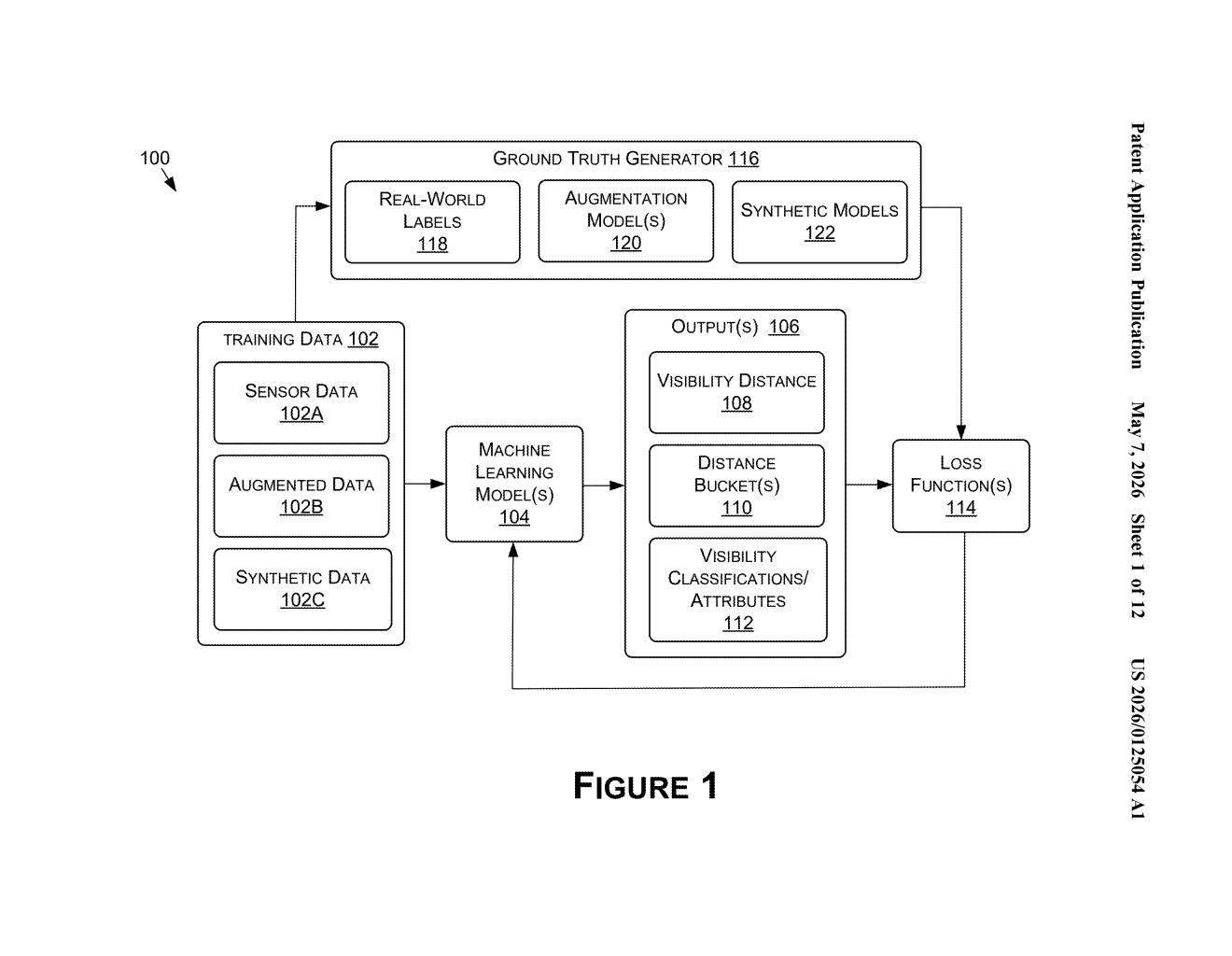

The core of Nvidia's system is a machine learning model (specifically a deep neural network) that takes in raw sensor data and outputs a visibility distance estimate — essentially a number representing how far the sensors can meaningfully detect the environment right now.

That distance isn't just a single value sitting in a log file. The system maps it against a set of predefined visibility thresholds (the patent calls them 'distance buckets') that correspond to different levels of sensor usability. Think of it like triage categories: full capability, reduced capability, minimal capability.
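The bucket lookup itself is simple. The sketch below assumes three buckets matching the triage analogy and uses made-up edges of 50 m and 150 m. The article does not give the patent's actual thresholds, and `Usability`, `BUCKET_EDGES_M`, and `bucket_for` are hypothetical names.

```python
from bisect import bisect_right
from enum import Enum


class Usability(Enum):
    MINIMAL = 0
    REDUCED = 1
    FULL = 2


# Hypothetical bucket edges in metres: <50 m minimal, 50-150 m reduced, >=150 m full.
BUCKET_EDGES_M = [50.0, 150.0]


def bucket_for(visibility_m: float) -> Usability:
    """Map an estimated visibility distance to a usability bucket."""
    # bisect_right counts how many edges are <= the estimate, which is the bucket index.
    return Usability(bisect_right(BUCKET_EDGES_M, visibility_m))


print(bucket_for(30.0))   # Usability.MINIMAL
print(bucket_for(150.0))  # Usability.FULL
```

With `bisect_right`, a reading that lands exactly on an edge goes into the better bucket. A more conservative design could use `bisect_left` so that ties fall into the worse one.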

Based on which bucket the current visibility falls into, the vehicle's planning, navigation, and control operations are adjusted accordingly. A low visibility score might mean the car refuses to execute a lane change, lowers its speed ceiling, or hands off control to a human driver.
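Downstream, the planner only needs a lookup from bucket to permitted behavior. This sketch mirrors the three examples in the paragraph above: lane changes, a speed ceiling, and a handoff to the driver. The `DrivingPolicy` table and its numbers are hypothetical and not taken from the patent.

```python
from dataclasses import dataclass
from enum import Enum


class Usability(Enum):
    MINIMAL = 0
    REDUCED = 1
    FULL = 2


@dataclass(frozen=True)
class DrivingPolicy:
    allow_lane_change: bool
    speed_cap_mps: float    # maximum speed the planner may target
    request_handoff: bool   # ask the human driver to take over


# Hypothetical policy table; the values are illustrative only.
POLICY = {
    Usability.FULL: DrivingPolicy(allow_lane_change=True, speed_cap_mps=33.5, request_handoff=False),
    Usability.REDUCED: DrivingPolicy(allow_lane_change=False, speed_cap_mps=20.0, request_handoff=False),
    Usability.MINIMAL: DrivingPolicy(allow_lane_change=False, speed_cap_mps=10.0, request_handoff=True),
}


def approve_lane_change(requested: bool, bucket: Usability) -> bool:
    """Only allow a requested lane change if the current bucket permits it."""
    return requested and POLICY[bucket].allow_lane_change


def target_speed(desired_mps: float, bucket: Usability) -> float:
    """Clamp the desired speed to the bucket's ceiling."""
    return min(desired_mps, POLICY[bucket].speed_cap_mps)
```

Keeping the restrictions in a data table rather than scattered `if` statements makes them easy to audit and tune per vehicle program.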

Training the model involves a ground truth generator paired with augmentation models that simulate degraded sensor conditions — synthetic fog, rain, lens blur — so the network learns to recognize poor visibility even in edge cases it hasn't seen in the real world. A loss function ties predicted visibility distances to labeled classification outputs during training.
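The training ideas can be illustrated with standard techniques, though the article does not say these are the ones Nvidia uses. The sketch synthesizes fog with the widely used atmospheric scattering (Koschmieder) model. In this setup, choosing a target visibility also fixes the ground-truth label. A hypothetical `visibility_loss` then combines a log-distance regression term with a cross-entropy term over the same assumed buckets. To keep the sketch dependency-free, images are flattened to lists of intensities in [0, 1], each paired with a per-pixel depth.

```python
import math
from bisect import bisect_right

# Hypothetical bucket edges in metres (0 = minimal, 1 = reduced, 2 = full).
BUCKET_EDGES_M = [50.0, 150.0]


def add_fog(clear, depth_m, visibility_m, airlight=1.0, contrast_threshold=0.05):
    """Synthesize fog with I = J*t + A*(1 - t), where t = exp(-beta * depth).

    beta is chosen so that contrast drops to `contrast_threshold` (5%, as in the
    meteorological optical range definition) exactly at `visibility_m`, so the
    augmentation parameter can serve as the ground-truth visibility label.
    """
    beta = -math.log(contrast_threshold) / visibility_m
    foggy = []
    for j, d in zip(clear, depth_m):
        t = math.exp(-beta * d)  # transmission along this pixel's line of sight
        foggy.append(j * t + airlight * (1.0 - t))
    return foggy


def bucket_index(visibility_m):
    """Index of the visibility bucket containing this distance."""
    return bisect_right(BUCKET_EDGES_M, visibility_m)


def visibility_loss(pred_distance_m, pred_bucket_logits, true_distance_m, class_weight=1.0):
    """Hypothetical combined loss: log-distance L1 plus bucket cross-entropy."""
    # Regression: error in log space, so a 20 m miss costs more at 40 m than at 400 m.
    reg = abs(math.log(pred_distance_m) - math.log(true_distance_m))
    # Classification: numerically stable softmax cross-entropy against the true bucket.
    m = max(pred_bucket_logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in pred_bucket_logits))
    ce = log_sum_exp - pred_bucket_logits[bucket_index(true_distance_m)]
    return reg + class_weight * ce
```

Predicting both a distance and a bucket is one reading of the article's description of a loss that ties distances to classification outputs. The actual patent may structure or weight the terms differently.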

What this means for autonomous vehicle safety in bad weather

The practical upshot here is safety: a self-driving system that knows what it doesn't know is meaningfully safer than one that plows ahead with full confidence in degraded conditions. Most current AV perception systems are good at detecting objects; they're less good at flagging when detection itself is unreliable. This patent addresses that gap at a system level.

For Nvidia, whose DRIVE platform powers a wide range of autonomous vehicle programs, this kind of meta-perception layer is a logical next step. If this capability ships in production AV stacks, you'd expect to see it reduce edge-case failures in adverse weather — one of the most common failure modes cited in real-world AV incident reports.

This is genuinely useful work, not patent-filing theater. The problem of sensor self-assessment in degraded conditions is a well-documented gap in autonomous driving safety, and framing it as a discrete ML output that downstream planning systems can consume is a clean, practical design. It's not glamorous, but it's exactly the kind of infrastructure patent that makes a difference when a vehicle hits an unexpected fog bank at 65 mph.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.