Nvidia Patents an AI System That Generates Fake Spear Phishing Emails to Train Detectors

What if the best way to catch a phishing email is to first teach an AI to write thousands of convincing ones? That's exactly the idea behind Nvidia's latest cybersecurity patent.

How Nvidia uses fake phishing emails to fight real ones

Imagine you're a security team trying to train a filter to catch spear phishing emails, the highly targeted kind that impersonate your CEO or reference your actual job title. The problem is that real spear phishing examples are rare and sensitive, so you don't have enough training data to build a sharp detector.

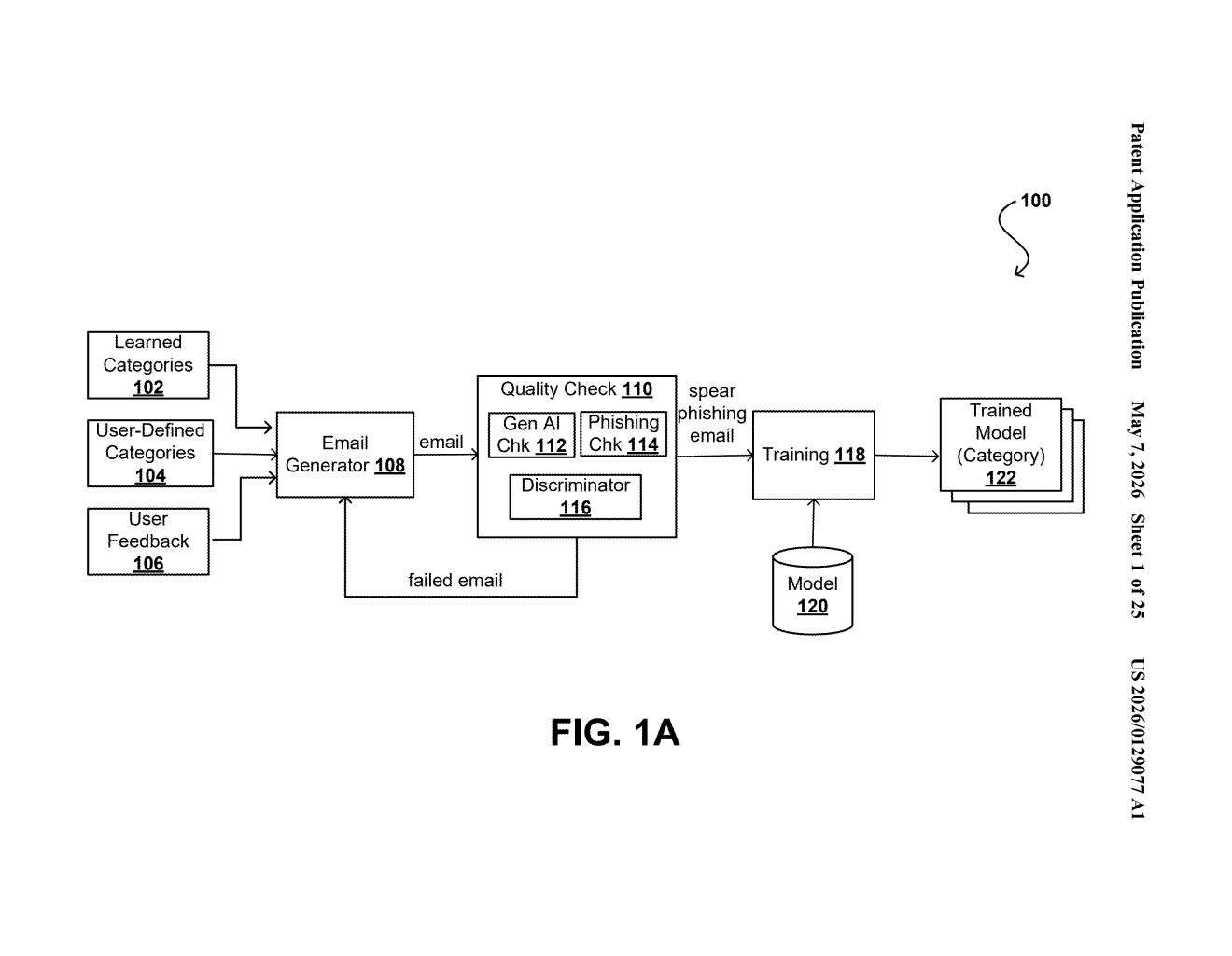

Nvidia's patent proposes a fix: use generative AI to manufacture synthetic phishing emails at scale. The system creates realistic fake attacks — complete with body text, images, and file attachments — tailored to specific categories of recipients (think: finance workers, executives, or IT staff). Any generated message that gets caught by existing spam filters gets discarded or regenerated, so only the sneakiest, most convincing examples make it into the training set.

Those high-quality synthetic examples are then used to train a spear phishing detector tuned for each recipient category. The idea is that a model trained on a large set of realistic, category-specific fake attacks should be much better at spotting real ones than a model trained on generic junk mail.

How the synthetic phishing pipeline builds and filters examples

The system chains together several generative models to build complete synthetic phishing communications. It doesn't just generate text — it also produces images and file attachments that would typically accompany a real spear phishing attempt (think: a fake invoice PDF or a spoofed logo image).

Critically, the training communication that gets assembled doesn't include the full image or file. Instead it uses metadata from those assets — things like file type, size, or structural properties — combined with the text body. This is a clever shortcut: it keeps the training examples lightweight while still teaching the detector what malicious attachment patterns look like.
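To make the metadata idea concrete, here is a minimal sketch of how a training record might be assembled from generated text plus asset metadata. The class names, field names, and the choice of file type and size as metadata are illustrative assumptions, not details taken from the patent.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class AssetMetadata:
    """Lightweight description of a generated image or file (hypothetical fields)."""
    kind: str        # "image" or "file"
    file_type: str   # e.g. "pdf" or "png"
    size_bytes: int


@dataclass(frozen=True)
class TrainingCommunication:
    """Text body plus asset metadata; the raw assets are not stored."""
    recipient_category: str
    body_text: str
    assets: Tuple[AssetMetadata, ...]


def describe_asset(kind: str, file_type: str, payload: bytes) -> AssetMetadata:
    # Only the metadata is kept; the payload itself is dropped here.
    return AssetMetadata(kind=kind, file_type=file_type, size_bytes=len(payload))


def assemble(
    recipient_category: str,
    body_text: str,
    generated_assets: Iterable[Tuple[str, str, bytes]],
) -> TrainingCommunication:
    metas = tuple(describe_asset(k, t, p) for k, t, p in generated_assets)
    return TrainingCommunication(recipient_category, body_text, metas)


# Placeholder bytes stand in for whatever a generative model would produce.
example = assemble(
    "finance",
    "Please review the attached invoice before end of day.",
    [
        ("file", "pdf", b"%PDF-1.7 placeholder bytes"),
        ("image", "png", b"\x89PNG placeholder"),
    ],
)
```

The record stays small no matter how large the generated attachment is, which is the lightweight property described above.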

Before any synthetic email enters the training dataset, a quality-control step runs it past existing email filters. If the fake phishing email gets caught by current defenses, it's either deleted or regenerated. Only messages that slip through — meaning they're realistic enough to fool today's tools — graduate to the training set.
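The quality-control step can be sketched as a generate-check-retry loop. Everything here is a stand-in: `toy_filter` is a crude keyword blocklist playing the role of an existing email filter, the "generator" just picks from canned templates, and the retry limit is an assumption rather than something the patent specifies.

```python
import random
from typing import Callable, List, Optional

# Hypothetical stand-in for an existing spam filter: a crude keyword blocklist.
BLOCKLIST = ("free money", "click here")


def toy_filter(email: str) -> bool:
    """Return True if the 'existing' filter would catch this email."""
    lowered = email.lower()
    return any(phrase in lowered for phrase in BLOCKLIST)


# Hypothetical stand-in for a generative model: picks from canned templates.
TEMPLATES = [
    "Click here to claim your free money",
    "Hi, finance needs the updated vendor bank details before Friday's payment run.",
    "CLICK HERE to verify your mailbox",
    "Following up on the audit request, can you confirm the attached figures today?",
]


def make_toy_generator(seed: int) -> Callable[[], str]:
    rng = random.Random(seed)
    return lambda: rng.choice(TEMPLATES)


def generate_until_bypass(
    generate: Callable[[], str],
    is_caught: Callable[[str], bool],
    max_attempts: int = 5,
) -> Optional[str]:
    """Regenerate until a candidate slips past the filter, or give up."""
    for _ in range(max_attempts):
        candidate = generate()
        if not is_caught(candidate):
            return candidate
    return None  # every attempt was caught, so this slot is discarded


def build_training_set(
    generate: Callable[[], str],
    is_caught: Callable[[str], bool],
    target_size: int,
    max_attempts: int = 5,
) -> List[str]:
    kept = []
    for _ in range(target_size):
        email = generate_until_bypass(generate, is_caught, max_attempts)
        if email is not None:
            kept.append(email)
    return kept


dataset = build_training_set(make_toy_generator(0), toy_filter, target_size=10)
```

By construction, nothing in `dataset` is flagged by `toy_filter`, which mirrors the "only messages that slip through" rule.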

- Recipient-category guidelines: the system tailors attacks to specific job roles or industries

- Multi-modal generation: text, images, and files are all produced by generative models

- Filter-bypass validation: low-quality examples are culled automatically

- Category-specific detection models: the output is a tuned spear phishing classifier per recipient type
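The last bullet, one detector per recipient category, can be illustrated with a deliberately tiny example. The `KeywordDetector` below is a toy word-count scorer chosen only to keep the sketch self-contained; the patent summary here doesn't say what kind of model the detectors are, and the sample emails are made up for illustration.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple

Example = Tuple[str, str, bool]  # (recipient_category, text, is_phishing)


class KeywordDetector:
    """Toy detector: favors words seen more often in phishing than in benign mail."""

    def __init__(self) -> None:
        self.phish: Counter = Counter()
        self.benign: Counter = Counter()

    def fit(self, rows: Iterable[Tuple[str, bool]]) -> "KeywordDetector":
        for text, is_phishing in rows:
            target = self.phish if is_phishing else self.benign
            target.update(text.lower().split())
        return self

    def score(self, text: str) -> int:
        # Counter returns 0 for unseen words, so unknown words are neutral.
        return sum(self.phish[w] - self.benign[w] for w in text.lower().split())

    def predict(self, text: str) -> bool:
        return self.score(text) > 0


def train_per_category(examples: Iterable[Example]) -> Dict[str, KeywordDetector]:
    """Group examples by recipient category and fit one detector per group."""
    grouped = defaultdict(list)
    for category, text, is_phishing in examples:
        grouped[category].append((text, is_phishing))
    return {cat: KeywordDetector().fit(rows) for cat, rows in grouped.items()}


# Made-up sample data: synthetic phishing (True) and benign mail (False).
examples = [
    ("finance", "urgent wire transfer needed for vendor invoice", True),
    ("finance", "quarterly budget review meeting moved to thursday", False),
    ("it", "reset your vpn credentials using the attached form", True),
    ("it", "scheduled maintenance window for the build servers", False),
]

detectors = train_per_category(examples)
```

In this toy setup the finance detector flags a wire-transfer lure that the IT detector has never seen vocabulary for, which is the intuition behind tuning a model to each recipient type.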

Why AI-generated attack data could outpace human red teams

Spear phishing is one of the most effective attack vectors precisely because it's personalized: generic detectors trained on bulk spam don't generalize well to targeted attacks. If Nvidia's approach works, security teams could generate arbitrarily large, high-quality datasets of realistic attacks without waiting for real incidents or running expensive human red-team exercises.

For enterprise security products, this kind of pipeline could significantly lower the cost and time needed to fine-tune detectors for specific industries or roles. It also raises an uncomfortable mirror-image question: the same generative pipeline that creates training data for defenders could, in theory, be repurposed to generate actual attacks at scale — which makes the quality-filtering and access-control design of such systems worth watching closely.

This is a genuinely useful idea in a space that badly needs it — spear phishing detection has always been hamstrung by a lack of labeled, realistic training data. The filter-bypass validation step is the smartest part: it's an automated way of ensuring only the hardest-to-catch examples survive into the training set, which is exactly the adversarial dynamic you want to model. Whether Nvidia turns this into a product or licenses it into enterprise security tooling, it's a more concrete AI-security contribution than most GPU-company patents.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.