Apple Patents a Smart Video Call System That Adds 3D Depth on Vision Pro

When you're on a video call, your iPhone and your Vision Pro are very different screens — so why should they show the exact same thing? Apple's latest patent tackles exactly that mismatch.

What Apple's 2D/3D video call switching actually does

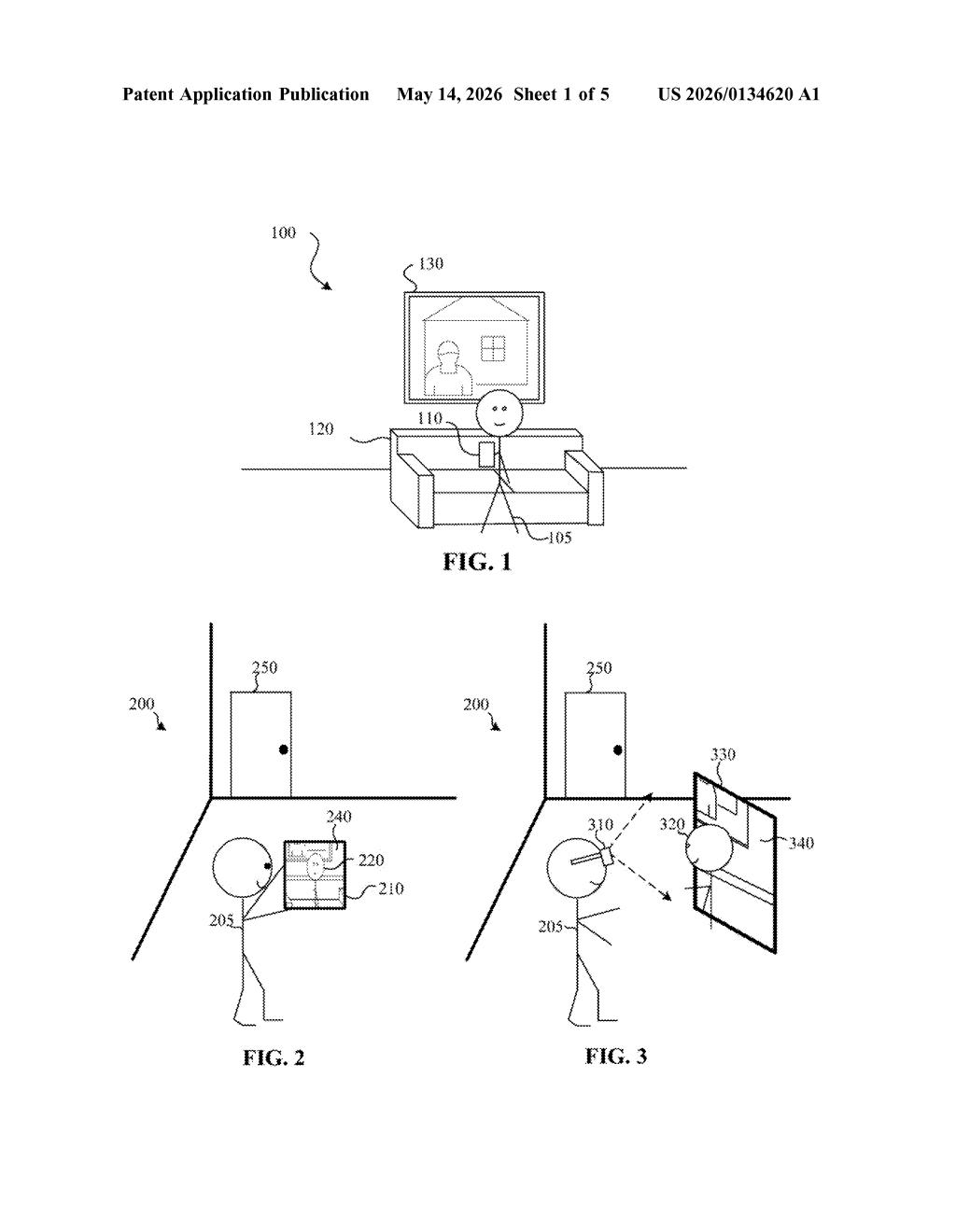

Imagine you're on a group FaceTime call. Your friend is watching on their laptop, but you're watching on a spatial computing headset. Today, you both see the same flat video. This patent describes a system where the sender's device streams both regular 2D video and extra 3D depth data at the same time — and the receiving device decides what to do with it.

If you're on a plain phone or laptop, your device just plays the normal flat video and ignores the 3D data entirely. But if you're wearing a headset like Vision Pro, your device uses that depth data to make the other person look like they have real spatial presence — not just a flat rectangle floating in your room.

There's even a third mode where the person is visually separated from their background, like a live cutout — think of it as background blur taken to its logical extreme. One stream, three possible experiences depending on what screen you're using.

How the receiving device picks its presentation mode

The patent describes a communication architecture where the sending device captures a standard 2D video stream via its camera and simultaneously captures 3D sensor data — likely depth map information from a LiDAR scanner or structured light system — and transmits both during a multi-user session.
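To make the sending side concrete, here is a minimal sketch of what "transmits both" could look like: each 2D frame is bundled with the depth samples captured at the same moment. The names `SessionFrame` and `capture_frame`, the millisecond timestamp, and the assumption that video and depth share one grid are all hypothetical, invented for illustration. The patent as summarized here does not specify a data layout.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SessionFrame:
    """One unit sent during a multi-user session (hypothetical layout)."""
    timestamp_ms: int
    video_pixels: List[List[int]]    # ordinary 2D frame, e.g. grayscale values
    depth_meters: List[List[float]]  # 3D sensor data; assumed same grid as the video


def capture_frame(timestamp_ms: int,
                  camera_pixels: List[List[int]],
                  depth_samples: List[List[float]]) -> SessionFrame:
    """Bundle camera output and depth-sensor output taken at the same instant."""
    same_shape = len(camera_pixels) == len(depth_samples) and all(
        len(p_row) == len(d_row)
        for p_row, d_row in zip(camera_pixels, depth_samples)
    )
    if not same_shape:
        # Assumption for this sketch only: one depth sample per pixel.
        raise ValueError("video and depth grids must have the same shape")
    return SessionFrame(timestamp_ms, camera_pixels, depth_samples)


# A toy 2x2 frame: the right-hand column is a subject about 0.8 m away,
# the left-hand column is a wall about 3 m away.
frame = capture_frame(
    timestamp_ms=0,
    camera_pixels=[[10, 200], [12, 198]],
    depth_samples=[[3.0, 0.8], [3.1, 0.8]],
)
print(frame.timestamp_ms, len(frame.video_pixels), len(frame.depth_meters))
```

The point of the bundle is that the sender never needs to know who is on the other end: every frame carries both kinds of data.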

On the receiving side, the device evaluates a criterion (most likely whether it has stereo-display hardware) and picks one of three presentation modes:

- First mode (flat 2D): The device just plays the video stream normally, ignoring the 3D data entirely. This is what a laptop or smartphone would do.

- Second mode (depth-enhanced): The device uses the 3D data to reconstruct a sense of depth around the person or object in the video, making them appear more volumetric. This targets stereo-capable headsets.

- Third mode (object separation): The system isolates the subject from the rest of the video frame — essentially real-time segmentation — and presents them separately from the background.
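The three modes above can be sketched as a small decision function on the receiving device. This is an illustration under stated assumptions, not Apple's method: the stereo-display check is only the most likely criterion, and the patent summary does not say what triggers the third mode, so the `wants_separated_subject` flag is a hypothetical stand-in. `PresentationMode` and `pick_mode` are made-up names.

```python
from enum import Enum


class PresentationMode(Enum):
    FLAT_2D = "flat 2D"
    DEPTH_ENHANCED = "depth-enhanced"
    OBJECT_SEPARATION = "object separation"


def pick_mode(has_stereo_display: bool,
              wants_separated_subject: bool = False) -> PresentationMode:
    """Choose how to present an incoming stream (hypothetical criteria)."""
    if not has_stereo_display:
        # Laptop or phone: play the 2D video and ignore the 3D data.
        return PresentationMode.FLAT_2D
    if wants_separated_subject:
        # Assumption: separation is chosen by some local setting or context.
        return PresentationMode.OBJECT_SEPARATION
    # Stereo-capable headset: use the 3D data to add depth.
    return PresentationMode.DEPTH_ENHANCED


for stereo, separated in [(False, False), (True, False), (True, True)]:
    print(stereo, separated, pick_mode(stereo, separated).value)
```

Note that the function takes only local facts about the receiver as input. Nothing about the sender changes the outcome, which is what lets one stream serve every kind of device.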

The key insight is that the 3D data travels alongside the 2D stream unconditionally; the intelligence about what to render lives entirely on the receiving device. This keeps the system backward-compatible — older devices just never touch the extra data.

What this means for FaceTime on Vision Pro and beyond

For Apple, this is infrastructure work for a future where Vision Pro and iPhones share the same FaceTime calls but deliver different experiences. Right now, spatial Personas in FaceTime require the other person to also be on a spatial device. A system like this could let a Vision Pro user see a 3D-depth version of someone calling from a regular iPhone, with no headset required on the sender's end. The sender would still need the depth-sensing hardware the patent describes, such as a LiDAR scanner or structured light system, to capture the 3D data in the first place.

The object separation mode is also worth watching. Isolating a person from their background in real time, as a first-class presentation mode rather than a software filter, hints at deeper integration with AR overlays and virtual environments — places where you'd want a person to feel like they're inside your space, not pasted onto a flat screen.

This is quiet but meaningful plumbing for Apple's spatial computing roadmap. It targets a real interoperability headache (how do you make video calls feel spatial on a headset when most callers are still on flat screens?) without requiring everyone to upgrade. The three-mode design is elegant: send everything, render what makes sense. Keep an eye on it as Vision Pro software matures.

Editorial commentary on a publicly published patent application. Not legal advice.