Google Patents a Real-Time Video Distortion Removal System Using Masked Frames

Ever noticed how background-blurring effects in video calls sometimes cause your arm to flicker or your hair to warp? Google has filed a patent application describing a smarter way to fix exactly that, in real time and frame by frame.

What Google's masked-frame distortion fix actually does

Imagine you're on a video call and you've turned on background blur. As you move, the app has to figure out, for every single frame, exactly where you end and the background begins. Get it even slightly wrong and you get ghosting, halos, or that unsettling effect where part of your face briefly vanishes.

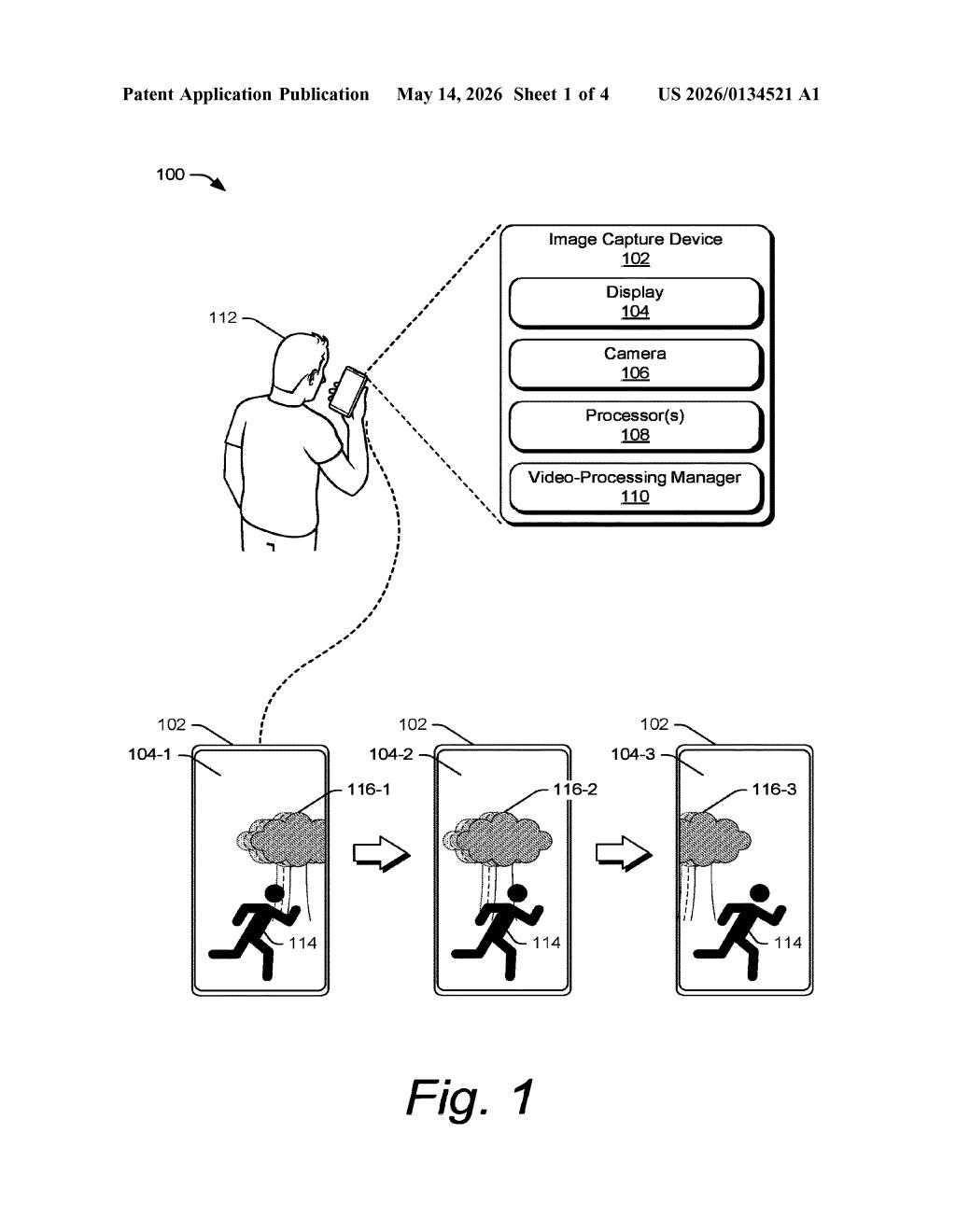

Google's patent describes a system that attacks this problem from three angles at once. It uses an AI model to detect your outline in the current frame, a technique called optical flow to measure how pixels moved since the last frame, and a predicted mask that estimates where you should be now based on that motion. All three get combined into a single, more reliable "final mask" before any editing happens.

Once that mask cleanly separates you from the background, the system can remove distortion (potentially things like lens warping or compression artifacts) from just the foreground subject rather than the whole frame. The result is a cleaner, steadier output video that holds up even when you move quickly.

How the final mask combines ML, motion vectors, and prediction

The patent describes a video-processing manager running on an image-capture device that processes each frame in a pipeline combining three distinct inputs:

- Subject mask: generated by a machine-learned (ML) model that classifies which pixels belong to the foreground subject (you, a person, an object).

- Motion vectors: produced by an optical flow measurement tool (a technique that tracks how individual pixel regions shift between consecutive frames — think of it as measuring the "direction and speed" of every patch of the image).

- Predicted mask: a forward-projected estimate of where the subject mask should be in the current frame, derived by warping the prior frame's mask using those motion vectors.
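
To make the predicted mask concrete, here is a minimal sketch of forward-warping a prior mask with motion vectors. It is an illustration only, not the patent's method: the function name `predict_mask`, the list-of-rows mask layout, and the whole-pixel `(dx, dy)` displacements are all assumptions made for readability. Real optical flow is usually sub-pixel and would need interpolation.

```python
def predict_mask(prev_mask, flow):
    """Forward-warp the previous frame's mask by per-pixel motion vectors.

    prev_mask: list of rows of floats in [0, 1] (1.0 = subject).
    flow: list of rows of (dx, dy) integer displacements, one per pixel of
          the previous frame (hypothetical layout, whole pixels only).
    """
    height, width = len(prev_mask), len(prev_mask[0])
    predicted = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx, dy = flow[y][x]
            nx, ny = x + dx, y + dy
            # Pixels that move outside the frame are simply dropped.
            if 0 <= nx < width and 0 <= ny < height:
                # If two source pixels land on the same spot, keep the
                # stronger subject value.
                predicted[ny][nx] = max(predicted[ny][nx], prev_mask[y][x])
    return predicted


# A subject occupying column 1 moves one pixel to the right.
prev_mask = [
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
flow = [[(1, 0)] * 4 for _ in range(3)]
predicted = predict_mask(prev_mask, flow)
```

In this toy case the subject column shifts from index 1 to index 2, which is where the motion vectors say it should be in the current frame.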

The system fuses all three into a final mask for the current frame. This fusion step is the core innovation — no single source is fully trusted alone. The ML mask can lag on fast motion; the predicted mask can drift; combining them with motion vector guidance corrects for both failure modes.
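
The article's summary does not spell out the fusion rule, so the following is only one plausible sketch. It assumes a per-pixel weighted blend in which the predicted mask gets more weight where motion is fast (where the ML mask may lag) and none where the scene is static (where a stale prediction could drift). The function name `fuse_masks` and the parameters `max_pred_weight` and `motion_scale` are hypothetical, chosen for illustration.

```python
import math


def fuse_masks(ml_mask, predicted_mask, flow,
               max_pred_weight=0.5, motion_scale=4.0):
    """Blend an ML mask and a motion-predicted mask, pixel by pixel.

    The weight on the predicted mask grows linearly with motion magnitude
    and is capped at max_pred_weight (an assumed rule, not the patent's).
    """
    final = []
    for y, ml_row in enumerate(ml_mask):
        row = []
        for x, ml_value in enumerate(ml_row):
            dx, dy = flow[y][x]
            speed = math.hypot(dx, dy)  # motion magnitude in pixels
            w_pred = max_pred_weight * min(speed / motion_scale, 1.0)
            row.append((1.0 - w_pred) * ml_value
                       + w_pred * predicted_mask[y][x])
        final.append(row)
    return final


# Pixel 0 is static, so the ML mask wins outright.
# Pixel 1 moves fast, so the two sources are averaged.
ml_mask = [[1.0, 0.0]]
predicted_mask = [[0.0, 1.0]]
flow = [[(0, 0), (4, 0)]]
final_mask = fuse_masks(ml_mask, predicted_mask, flow)
```

Other fusion rules (confidence-weighted voting, a small learned network) would fit the same description; the point is only that neither mask is taken at face value.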

With the final mask applied, the frame is split into foreground and background. The masked foreground frame is then edited to remove distortion. The patent doesn't specify a single distortion type, which leaves room for lens distortion, compression artifacts, or rolling-shutter effects. The cleaned output frame is then passed downstream.
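
As a rough sketch of that last step, the snippet below applies a correction only to pixels the final mask marks as foreground and leaves the background untouched. The name `edit_foreground`, the 0.5 threshold, and the per-pixel `correct_pixel` callback are all assumptions for illustration. Real distortion removal (lens or rolling-shutter correction, for example) works on neighborhoods or geometry rather than on one pixel at a time.

```python
def edit_foreground(frame, final_mask, correct_pixel, threshold=0.5):
    """Apply correct_pixel to foreground pixels only.

    frame: list of rows of grayscale pixel values.
    final_mask: list of rows of floats in [0, 1], same shape as frame.
    correct_pixel: stand-in for whatever distortion fix is being applied.
    """
    output = []
    for frame_row, mask_row in zip(frame, final_mask):
        output.append([
            correct_pixel(pixel) if weight >= threshold else pixel
            for pixel, weight in zip(frame_row, mask_row)
        ])
    return output


frame = [
    [10, 20],
    [30, 40],
]
final_mask = [
    [0.9, 0.1],
    [0.4, 0.6],
]
# Doubling the value is a placeholder for a real correction.
cleaned = edit_foreground(frame, final_mask, lambda p: p * 2)
```

Here only the two pixels with mask values of 0.9 and 0.6 are changed, which mirrors the idea of editing the masked foreground while passing the rest of the frame through.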

What this means for Google's video calling and camera apps

For Google, this would sit squarely in the stack behind Google Meet, Pixel camera processing, and any feature that requires real-time segmentation: background blur, portrait mode video, or AR overlays. The problem it solves (temporal instability in per-frame masks) is one of the harder practical issues in live video, and it accounts for much of the jitter and flicker users complain about.

The broader implication is that combining ML-based segmentation with classical motion estimation (optical flow) is becoming a standard recipe in on-device video pipelines. If this approach ships in Pixel hardware or the Android camera stack, you'd notice it as smoother, more stable background effects — not as a feature you explicitly turn on.

This is a solid, practical patent in a real problem space. It isn't flashy AI research, but it is the kind of careful pipeline engineering that separates a polished camera experience from a buggy one. The three-input mask fusion approach is genuinely clever, and the fact that it's designed for real-time, on-device processing suggests Google has shipping in mind, not just publishing. Worth paying attention to if you follow Pixel camera development or Google Meet quality improvements.

Editorial commentary on a publicly published patent application. Not legal advice.