Google Patents a Structured Reasoning System for LLM Tool Calls

Before a smart AI assistant books your flight or searches for an apartment, it needs to figure out exactly which tool to call and what to tell it. Google's new patent describes a structured way to make that reasoning process explicit, auditable, and trainable.

How Google's LLM thinks before it calls an API

Imagine you ask an AI assistant to find you a two-bedroom apartment for under $3,000 a month. Behind the scenes, the AI doesn't just guess an answer — it has to figure out which search tool to use, what filters to apply, and in what order to do things. That internal planning step is usually invisible. Google's patent makes it a first-class, structured artifact.

The system works by having a fine-tuned language model produce a series of "reasoning blocks" before it ever sends a request to an external API. Each block is either a text thought ("the user wants a rental under $3k") or a concrete tool call ("call the housing API with these exact parameters"). The model keeps generating blocks until it signals it's done reasoning.

Once that reasoning chain is complete, the model uses it to generate a final, readable response for you. Think of it as the AI showing its work — except the "work" is structured enough that engineers can train on it, debug it, and improve it over time.

Inside Google's tool-use reasoning block pipeline

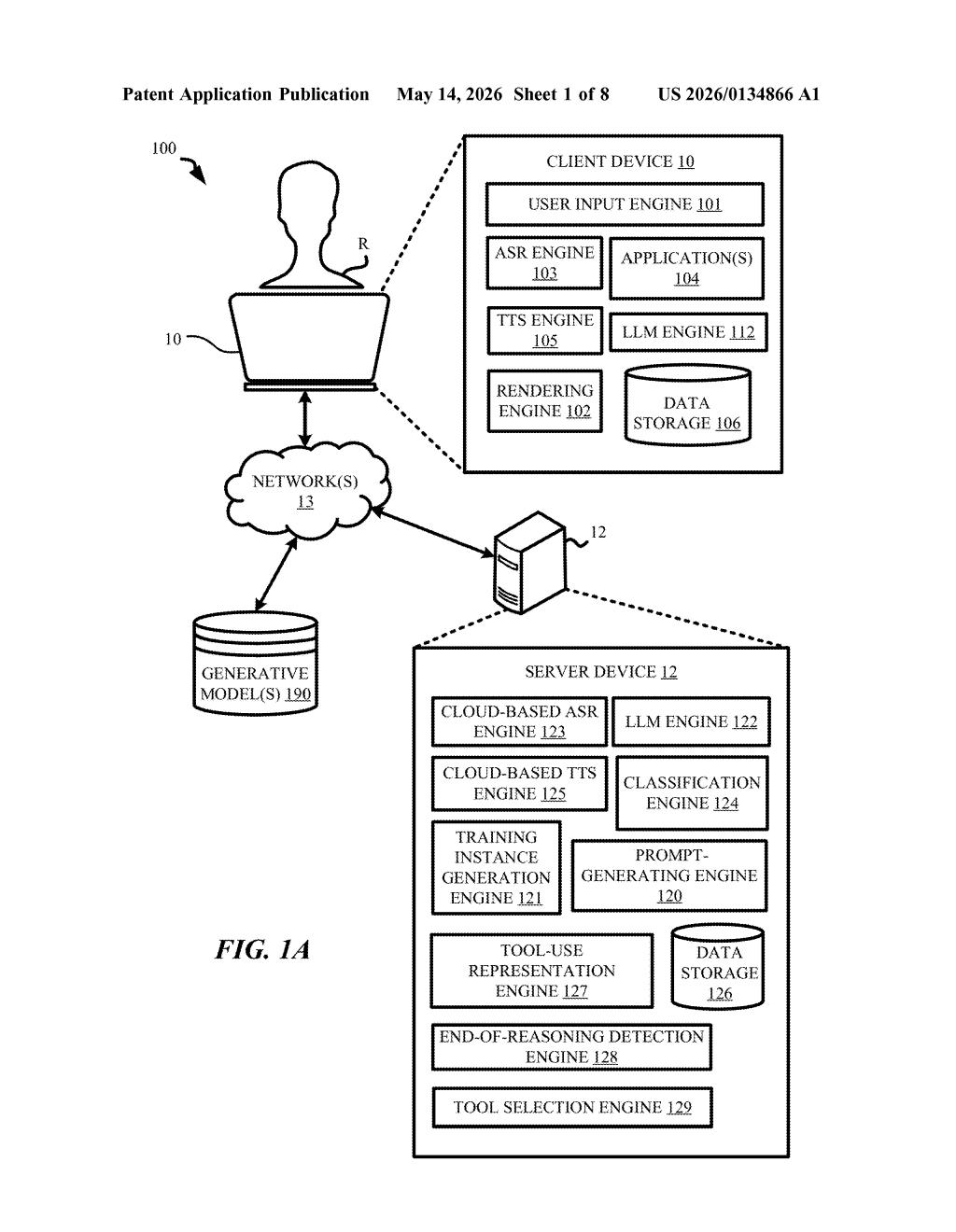

The patent describes a fine-tuning and inference framework for large language models (LLMs) that need to interact with external APIs — things like search engines, booking systems, or databases.

At the core is the concept of a tool-use representation: a structured output the LLM builds iteratively before producing a user-facing answer. This representation is made up of one or more reasoning blocks, which come in two flavors:

- Text reasoning blocks — natural-language thoughts the model generates to work through the problem (similar to chain-of-thought prompting, where you ask a model to reason step by step before answering)

- Tool call reasoning blocks — structured records that specify exactly which API to call, which parameters it takes, and what values to pass
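To make the two block types concrete, here is a minimal Python sketch of how such a representation could be modeled. The patent summary above does not give a schema, so every class name, field name, and the `housing_search` API are assumptions made up for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Union


@dataclass
class TextReasoningBlock:
    """A natural-language thought produced while working through the request."""
    thought: str


@dataclass
class ToolCallReasoningBlock:
    """A structured record of one API call: the API plus parameter name -> value."""
    api_name: str
    arguments: dict[str, Any] = field(default_factory=dict)


# A reasoning block is one of the two flavors described above.
ReasoningBlock = Union[TextReasoningBlock, ToolCallReasoningBlock]


@dataclass
class ToolUseRepresentation:
    """Ordered reasoning blocks built before the user-facing answer."""
    blocks: list[ReasoningBlock] = field(default_factory=list)

    def tool_calls(self) -> list[ToolCallReasoningBlock]:
        """Return only the tool call blocks, in the order they were produced."""
        return [b for b in self.blocks if isinstance(b, ToolCallReasoningBlock)]


# Hypothetical example based on the apartment query from the article.
representation = ToolUseRepresentation(blocks=[
    TextReasoningBlock("The user wants a two-bedroom rental under $3,000 a month."),
    ToolCallReasoningBlock(
        api_name="housing_search",  # hypothetical API name
        arguments={"bedrooms": 2, "max_monthly_rent_usd": 3000},
    ),
])
```

Keeping tool calls as typed records rather than free text is what would let an engineer filter, diff, or score them directly, for instance with `representation.tool_calls()`.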

The model builds this representation iteratively: each prompt it receives yields one more reasoning block, and the loop continues until the model emits an end-of-reasoning signal. Only then does it synthesize the final response.
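The loop can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the patent's implementation: the `<end_of_reasoning>` marker, the way each new prompt is formed (previous prompt plus the latest block), the `max_blocks` safety cap, and the scripted stand-in for the fine-tuned model are all hypothetical.

```python
from typing import Callable

END_OF_REASONING = "<end_of_reasoning>"  # hypothetical marker string


def build_reasoning_chain(
    next_block: Callable[[str], str],
    first_prompt: str,
    max_blocks: int = 16,
) -> list[str]:
    """Collect reasoning blocks until the model emits the end-of-reasoning signal."""
    blocks: list[str] = []
    prompt = first_prompt
    for _ in range(max_blocks):
        block = next_block(prompt)
        if block == END_OF_REASONING:
            return blocks
        blocks.append(block)
        # Assumption: the next prompt is the previous prompt plus the new block.
        prompt = f"{prompt}\n{block}"
    # Safety cap (an assumption): stop if the model never signals completion.
    raise RuntimeError("model did not signal end of reasoning")


def make_scripted_model(script: list[str]) -> Callable[[str], str]:
    """Stand-in for the fine-tuned LLM: replays a fixed list of outputs."""
    outputs = iter(script)
    return lambda prompt: next(outputs)


model = make_scripted_model([
    "THOUGHT: the user wants a rental under $3k",
    "CALL: housing_search(bedrooms=2, max_monthly_rent_usd=3000)",
    END_OF_REASONING,
])
chain = build_reasoning_chain(
    model, "Find me a two-bedroom apartment for under $3,000 a month."
)
```

Here `chain` ends up holding the two blocks emitted before the end signal, and only after the loop returns would a final response be generated from them.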

The first prompt fed into the model combines the raw user query with metadata about available APIs — essentially a catalog of what tools exist and how to use them. This grounds the model's reasoning in real, callable interfaces rather than hallucinated ones.
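As a rough illustration of that first prompt, the sketch below serializes a small API catalog and places it ahead of the user query. The prompt layout, the catalog fields (`name`, `description`, `parameters`), and the `housing_search` entry are assumptions for the example; the article does not say how the patent formats this metadata.

```python
import json


def build_first_prompt(user_query: str, api_catalog: list[dict]) -> str:
    """Combine the raw user query with metadata describing the available APIs."""
    catalog_text = json.dumps(api_catalog, indent=2, sort_keys=True)
    return (
        "Available APIs:\n"
        f"{catalog_text}\n\n"
        f"User query: {user_query}"
    )


# Hypothetical catalog with a single tool the model is allowed to call.
api_catalog = [
    {
        "name": "housing_search",
        "description": "Search rental listings.",
        "parameters": {
            "bedrooms": "integer",
            "max_monthly_rent_usd": "integer",
        },
    }
]

first_prompt = build_first_prompt(
    "Find me a two-bedroom apartment for under $3,000 a month.", api_catalog
)
print(first_prompt)
```

Because the catalog lists the only valid API names and parameters, a downstream check could reject any tool call block that names something outside it.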

What this means for Google's AI assistant strategy

Google's AI products — from Gemini to Google Assistant to Workspace — increasingly rely on LLMs that can take actions, not just answer questions. The harder problem isn't generating fluent text; it's getting a model to reliably pick the right tool, pass the right parameters, and handle multi-step tasks without going off the rails. Structured reasoning blocks give engineers a concrete artifact to train on and evaluate, which is much easier than debugging freeform text outputs.

For you as a user, this could translate to AI assistants that are more reliable when doing things on your behalf — fewer hallucinated bookings, fewer wrong API calls, fewer moments where the assistant confidently does the wrong thing. It also lays groundwork for systems where the reasoning chain itself is shown to users or used for trust and verification.

This is solid, unglamorous infrastructure work: the kind of patent that doesn't make headlines but shapes how production AI agents behave. Structured reasoning before tool calls is already a known technique (ReAct-style prompting and function calling in GPT-4 are familiar examples), so the novelty here lies in the specific fine-tuning and representation details rather than the concept itself. Worth tracking if you care about where Gemini's agentic capabilities are headed.

Editorial commentary on a publicly published patent application. Not legal advice.