Google Patents a Scoring System That Prioritizes Specialist Domains in Search Results

Google is patenting a system that quietly scores every domain on how specialized it is — then loads results from niche-expert sites before generalist ones. It's a direct, algorithmic attack on sites that rank for everything.

How Google decides which websites are true specialists

Imagine you search for a very specific question about, say, sourdough hydration ratios. Google currently might serve you results from big general-purpose sites that rank well across thousands of topics. This patent describes a system designed to change that — by giving an edge to websites that consistently show up for a narrow slice of queries.

Here's how Google figures out which sites are specialists: it looks at a long history of search queries, groups them into categories (cooking, finance, medical, etc.), and tracks which domains users actually engaged with. A site that earns clicks across dozens of unrelated categories gets down-weighted. A site whose engaged traffic clusters tightly around one topic gets a higher specialization score.

The practical result: when you search for something, Google's system could prioritize loading — and rendering on your screen — results from the domain it considers most topically focused for your query's category. It's less about raw link popularity and more about whether a site truly owns a subject area.

How the specialization score penalizes generalist domains

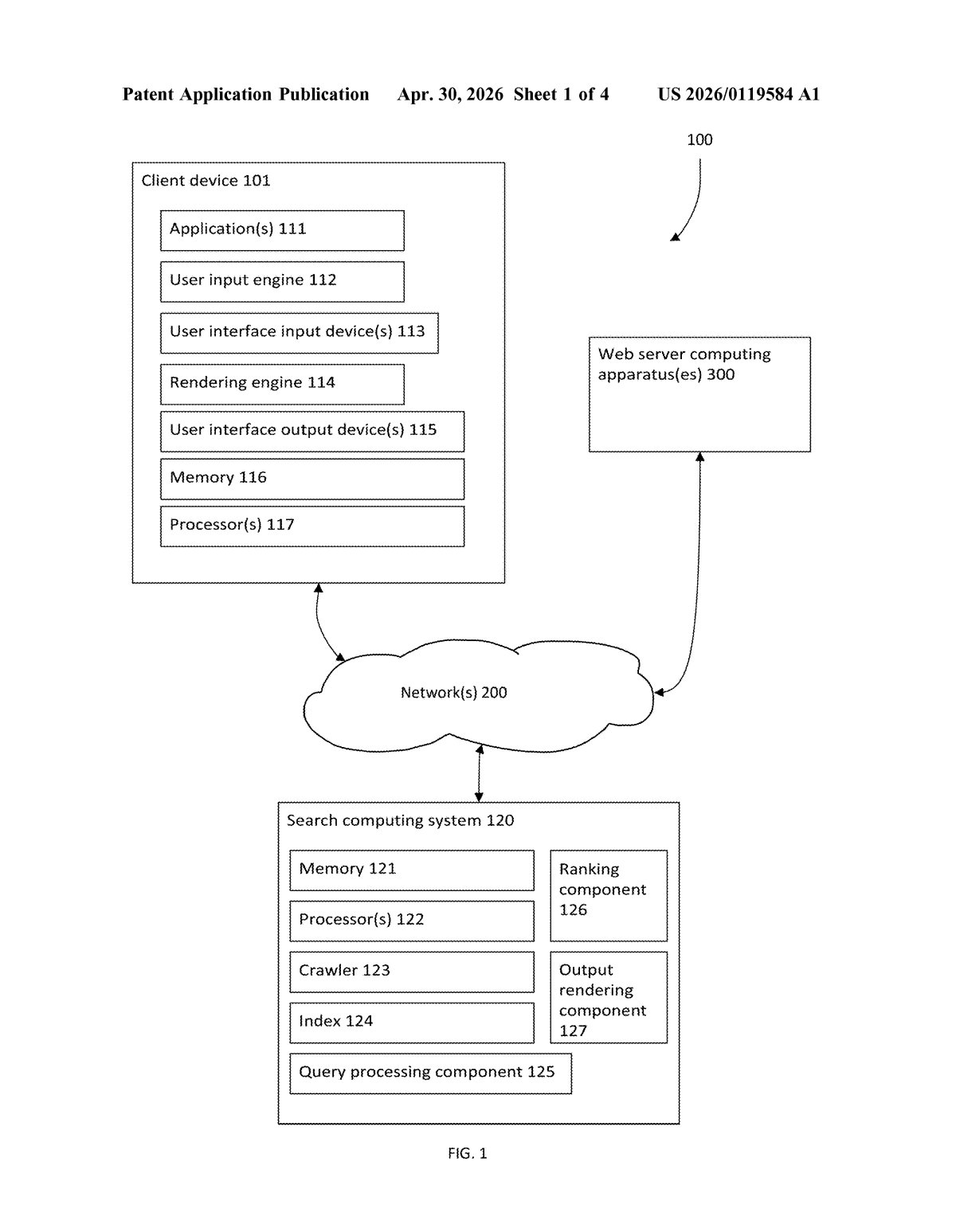

The patent describes an offline analysis pipeline that runs over a historical window of search queries. For each query, Google assigns it to a search query category (think of these like topic buckets — cooking, legal advice, software tools). It then filters down to queries where users actually interacted with results — clicks, dwell time, or other engagement signals — creating a curated subset of high-signal queries.
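The filtering step described above can be sketched roughly as follows. This is a minimal illustration, not Google's implementation: the `QueryRecord` fields, the `engaged_subset` helper, and the click and dwell thresholds are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class QueryRecord:
    text: str
    category: str               # topic bucket assigned to the query
    result_domains: List[str]   # domains that appeared in the results
    clicks: int = 0             # hypothetical engagement signal
    dwell_seconds: float = 0.0  # hypothetical engagement signal

def engaged_subset(log: List[QueryRecord],
                   min_clicks: int = 1,
                   min_dwell_seconds: float = 10.0) -> List[QueryRecord]:
    """Keep only queries where users interacted with the results.

    The thresholds are assumptions; the article does not state real values.
    """
    return [q for q in log
            if q.clicks >= min_clicks or q.dwell_seconds >= min_dwell_seconds]

# Toy history: two queries with engagement, one without.
history = [
    QueryRecord("sourdough hydration ratio", "cooking", ["breadlab.example"], clicks=2),
    QueryRecord("best index funds", "finance", ["megasite.example"]),
    QueryRecord("rye starter feeding", "cooking", ["breadlab.example"], dwell_seconds=45.0),
]
subset = engaged_subset(history)
print([q.text for q in subset])
```

Only the two cooking queries survive here, because the finance query recorded no clicks or dwell time.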

For each domain that appeared in results for that subset, Google calculates a specialization score using two factors:

- Magnitude: how many results from the domain appeared across those queries (raw presence)

- Inverse correspondence: an inverted measure of how widely a domain's appearances are spread across query categories. The more categories a domain appears in, the lower this factor, which penalizes breadth.

The score is essentially asking: does this domain show up a lot, but only for one kind of question? If yes, it's classified as a specialist and gets a priority flag. The system then uses that flag to influence render order at the user interface level — meaning specialist domains can be surfaced and loaded before generalist ones, even before the full result set is assembled.
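To make the two factors concrete, here is a small sketch of one way they could be combined. The exact formula is an assumption: it multiplies magnitude by the reciprocal of the number of distinct categories, which matches the description above but may not be how the patent defines it. The `specialization_scores` function, the sample domains, and `SPECIALIST_THRESHOLD` are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

def specialization_scores(
    engaged_queries: Iterable[Tuple[str, List[str]]]
) -> Dict[str, float]:
    """Score each domain from (category, result_domains) pairs.

    Assumed formula: magnitude * (1 / number of distinct categories).
    """
    magnitude = defaultdict(int)   # how many results each domain contributed
    categories = defaultdict(set)  # which categories each domain appeared in
    for category, domains in engaged_queries:
        for domain in domains:
            magnitude[domain] += 1
            categories[domain].add(category)
    return {d: magnitude[d] * (1.0 / len(categories[d])) for d in magnitude}

sample = [
    ("cooking", ["breadlab.example", "megasite.example"]),
    ("cooking", ["breadlab.example"]),
    ("cooking", ["breadlab.example", "megasite.example"]),
    ("finance", ["megasite.example"]),
    ("travel", ["megasite.example"]),
]
scores = specialization_scores(sample)

# Hypothetical cutoff for the priority flag.
SPECIALIST_THRESHOLD = 2.0
specialists = {d for d, s in scores.items() if s >= SPECIALIST_THRESHOLD}
print(scores, specialists)
```

In this toy data, `breadlab.example` appears three times in one category and scores 3.0, while `megasite.example` appears four times across three categories and scores about 1.33. The generalist has more raw presence but still loses because its appearances are spread out.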

This is distinct from just reranking blue links. The patent specifically mentions prioritizing rendering, which suggests the optimization touches how quickly results visually appear to users, not just where they rank in the list.
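One simple way to picture render prioritization is a stable reordering that moves flagged domains to the front while leaving everything else in its existing rank order. The sketch below is illustrative only: the `render_order` function and the result dictionaries are hypothetical, and the actual mechanism may work quite differently (for example, by streaming specialist results to the page earlier).

```python
from typing import Dict, List, Set

def render_order(results: List[Dict[str, str]],
                 specialists: Set[str]) -> List[Dict[str, str]]:
    """Return results with specialist domains first.

    sorted() is stable and False sorts before True, so flagged domains
    move ahead while ties keep their original relative order.
    """
    return sorted(results, key=lambda r: r["domain"] not in specialists)

ranked = [
    {"domain": "megasite.example", "title": "Bread basics"},
    {"domain": "breadlab.example", "title": "Hydration ratios explained"},
    {"domain": "recipes-hub.example", "title": "Easy sourdough"},
]
ordered = render_order(ranked, specialists={"breadlab.example"})
print([r["domain"] for r in ordered])
```

Using a stable sort keeps the underlying ranking intact for non-specialist results, so the priority flag acts as a tiebreak-style boost instead of a full rerank.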

What this means for publishers and SEO strategy

For publishers and SEO practitioners, this patent suggests Google is exploring ways to algorithmically distinguish true domain experts from large content farms that happen to rank across many verticals. If this scoring reaches production search, a site that publishes broadly (finance, health, travel, tech) could find its results loading more slowly or appearing lower, even if it has strong traditional signals like backlinks and authority.

For everyday users, this is ostensibly a quality improvement: you'd theoretically get a cooking site that only does cooking, faster, over a megasite that covers everything. Whether that holds in practice depends heavily on how Google tunes its category taxonomy and engagement thresholds — both of which are invisible to the public.

This patent is worth taking seriously. Google has been publicly hammered for years over search quality: Reddit threads, HN discussions, and SEO discourse are full of complaints that generalist content farms dominate results. A scoring mechanism that penalizes domain breadth is a concrete, algorithmic answer to that criticism. How well it works in production will depend on the accuracy of the category taxonomy, but the intent is clear and the approach is coherent.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.