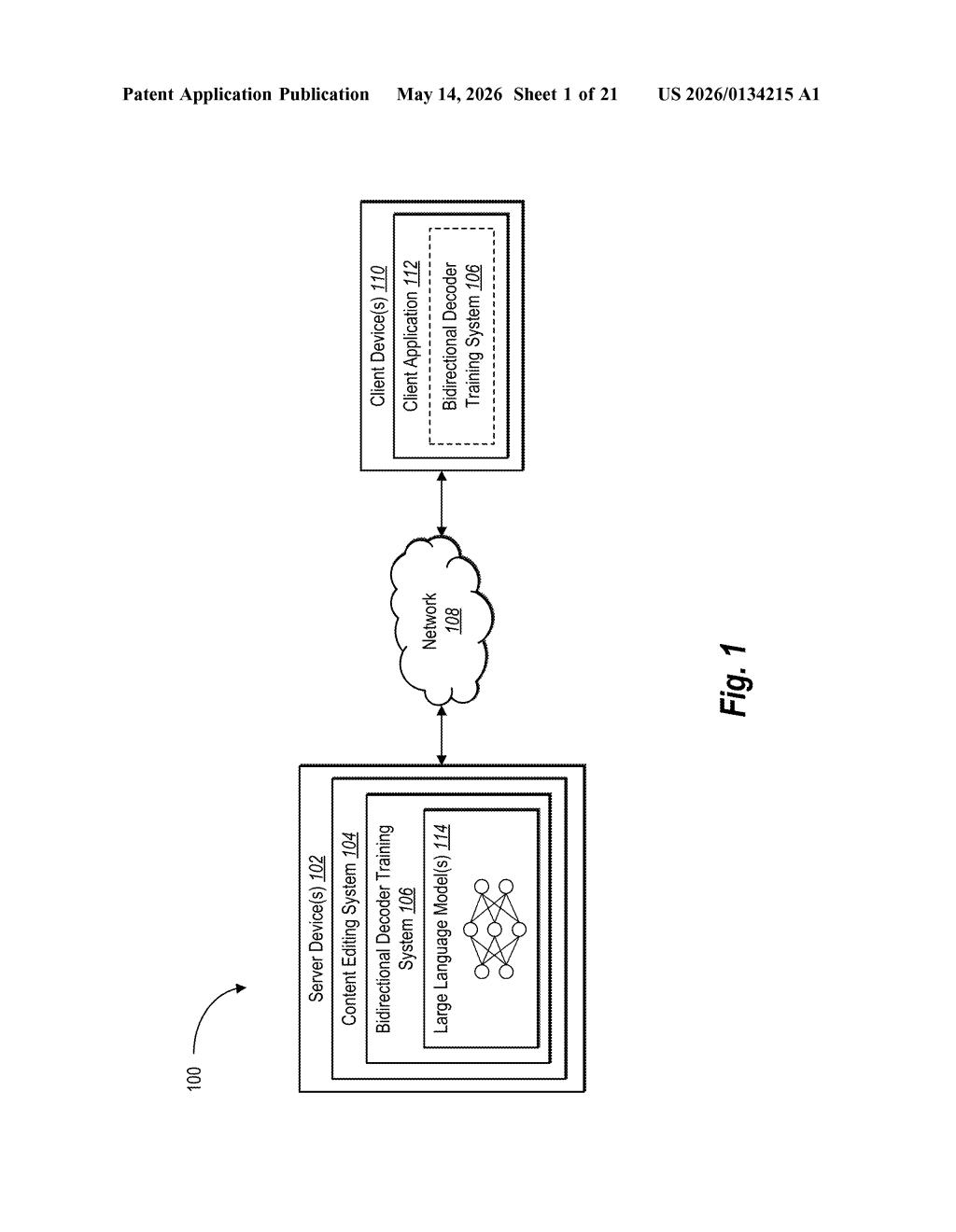

Adobe Patent Application Describes a Hybrid Attention Method to Boost Large Language Model Capabilities

Most AI language models are trained either to understand text or to generate it. Adobe's new patent application describes a training method that tries to do both at once, using a clever mix of two fundamentally different attention styles.

What Adobe's hybrid attention training actually does

Imagine you're studying for an exam two different ways: sometimes you read a passage and try to understand the whole thing at once, and other times you write an essay word by word without peeking ahead. Each approach builds different mental muscles. Most AI language models are trained only one way or the other.

Adobe's patent describes a training recipe that combines both approaches. It divides the text a model learns from into two types of tokens: context tokens, which the model reads with full awareness of the surrounding text, and span tokens, which the model must predict one at a time, like filling in a blank word by word. The model is then trained in two stages, gradually layering in more complex learning signals.

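The goal is a single model that can both understand context deeply and generate fluent text. That combination is tricky to achieve because the two skills normally pull a model's training in opposite directions: understanding benefits from seeing the whole passage, while generation requires predicting each token without seeing what comes next.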

How causal and bidirectional attention tokens are mixed in training

At the core of this patent is a distinction between two types of attention — the mechanism that lets a language model decide which words to focus on when processing text.

- Bidirectional attention (used in models like BERT) lets the model look at all surrounding words simultaneously — great for understanding meaning in context.

- Causal attention (used in GPT-style models) only lets the model look at words that came before — great for generating text one token at a time.
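To make the difference concrete, here is a minimal sketch in plain Python (no ML library assumed) that builds both kinds of attention mask as grids of True/False values, where row i is the token doing the looking and column j is a token it may look at. The function names are illustrative, not taken from the patent.

```python
def bidirectional_mask(n):
    """BERT-style: every token may attend to every token, before or after it."""
    return [[True] * n for _ in range(n)]


def causal_mask(n):
    """GPT-style: token i may attend only to tokens 0..i (no peeking ahead)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def show(mask):
    """Print a mask as a grid: '1' = may attend, '.' = blocked."""
    for row in mask:
        print("".join("1" if allowed else "." for allowed in row))


show(causal_mask(4))
```

Printed for four tokens, the causal mask is lower-triangular (`1...`, `11..`, `111.`, `1111`): the first token sees only itself, and the last sees everything before it. The bidirectional mask would print as all 1s.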

Adobe's method creates two token sets from the same input. Context tokens use bidirectional attention only, letting the model absorb full context. Span tokens use both causal and bidirectional attention, training the model to generate text while still being aware of surrounding context.
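The article does not spell out exactly how the two token sets are wired together, so the sketch below is only one plausible arrangement. It borrows the well-known prefix-LM masking pattern rather than anything confirmed from Adobe's filing. It assumes context tokens come first and span tokens follow; context tokens attend bidirectionally to each other, and span tokens see all context tokens plus earlier span tokens. `hybrid_mask` and `show` are hypothetical helpers.

```python
def hybrid_mask(num_context, num_span):
    """Hypothetical prefix-LM-style mask (an assumption, not the patent's exact design).

    Layout assumption: positions 0..num_context-1 are context tokens,
    the remaining num_span positions are span tokens.
    Rows are the attending token, columns the token being attended to.
    """
    n = num_context + num_span
    mask = [[False] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if j < num_context:
                # Any token may see every context token (bidirectional over context).
                mask[i][j] = True
            elif i >= num_context:
                # Span tokens see span tokens at or before their own position (causal).
                mask[i][j] = j <= i
            # Otherwise: a context token looking at a span token stays blocked,
            # so the answers being predicted cannot leak into the context.
    return mask


def show(mask):
    """Print a mask as a grid: '1' = may attend, '.' = blocked."""
    for row in mask:
        print("".join("1" if allowed else "." for allowed in row))


show(hybrid_mask(3, 2))
```

For three context tokens and two span tokens this prints `111..` three times, then `1111.` and `11111`: a fully open block for context, a blocked region that keeps context tokens from peeking at the span, and a causal triangle inside the span.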

Training happens in two stages. In Stage 1, the model is optimized using two loss functions (think of a loss function as a scorecard measuring how wrong the model's predictions are) — one for context tokens, one for span tokens. In Stage 2, a third loss function is added that focuses again on context tokens, further refining the model's comprehension abilities. This staged approach lets the model build foundational skills before tackling harder, combined objectives.
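In code, the two-stage schedule amounts to changing which loss terms are summed. The sketch below assumes each loss is an ordinary cross-entropy and that all terms are weighted equally; the filing's actual loss definitions, weights, and stage-switching rule are not described in this article, and `total_loss` and `extra_context_loss` are hypothetical names.

```python
import math


def cross_entropy(correct_token_probs):
    """Mean negative log-probability the model gave the correct tokens (toy loss)."""
    return -sum(math.log(p) for p in correct_token_probs) / len(correct_token_probs)


def total_loss(stage, context_loss, span_loss, extra_context_loss,
               weights=(1.0, 1.0, 1.0)):
    """Hypothetical two-stage schedule: Stage 1 sums two losses, Stage 2 adds a third."""
    if stage not in (1, 2):
        raise ValueError("stage must be 1 or 2")
    w_ctx, w_span, w_extra = weights
    # Stage 1: only the context-token and span-token losses contribute.
    loss = w_ctx * context_loss + w_span * span_loss
    # Stage 2: an additional context-focused loss is layered on top.
    if stage == 2:
        loss += w_extra * extra_context_loss
    return loss


# Made-up probabilities a model assigned to the correct tokens.
context_loss = cross_entropy([0.5, 0.25])
span_loss = cross_entropy([0.5])
extra_context_loss = cross_entropy([0.25])

stage1 = total_loss(1, context_loss, span_loss, extra_context_loss)
stage2 = total_loss(2, context_loss, span_loss, extra_context_loss)
print(f"stage 1: {stage1:.3f}, stage 2: {stage2:.3f}")
```

The `weights` parameter is where a real implementation would tune how strongly each objective pulls on training; equal weights here are purely illustrative.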

What this means for Adobe's AI-powered creative tools

For Adobe, whose AI features range from image generation in Firefly to document summarization and Q&A in Acrobat AI Assistant, a single model that excels at both comprehension and generation would be practically valuable. Many AI workflows stitch together separate models, one to interpret a user's prompt and another to generate output, which adds latency and complexity.

If this training method works as described, it could allow Adobe to ship more capable, unified AI models inside products like Acrobat AI Assistant or Firefly without the engineering overhead of chaining multiple specialized systems. For you as a user, that could translate to more coherent, context-aware responses when you ask an AI to summarize, rewrite, or generate content.

This is a legitimate and technically interesting contribution to the ongoing debate in NLP about whether you can unify encoder-style understanding with decoder-style generation in a single training regime. Adobe's team includes serious researchers, and the two-stage training curriculum with mixed attention masks is the kind of careful systems work that doesn't make headlines but can make models better. It's worth keeping an eye on if you follow foundation model training techniques.

Editorial commentary on a publicly published patent application. Not legal advice.