Microsoft Patents On-Device Video Dubbing That Keeps Translated Speech in Sync

Anyone who's watched a dubbed foreign film knows the lip-sync horror: mouths still moving after the words have stopped. Microsoft is patenting a smarter approach that generates multiple translations of different lengths and picks the one that best fits the original audio's timing.

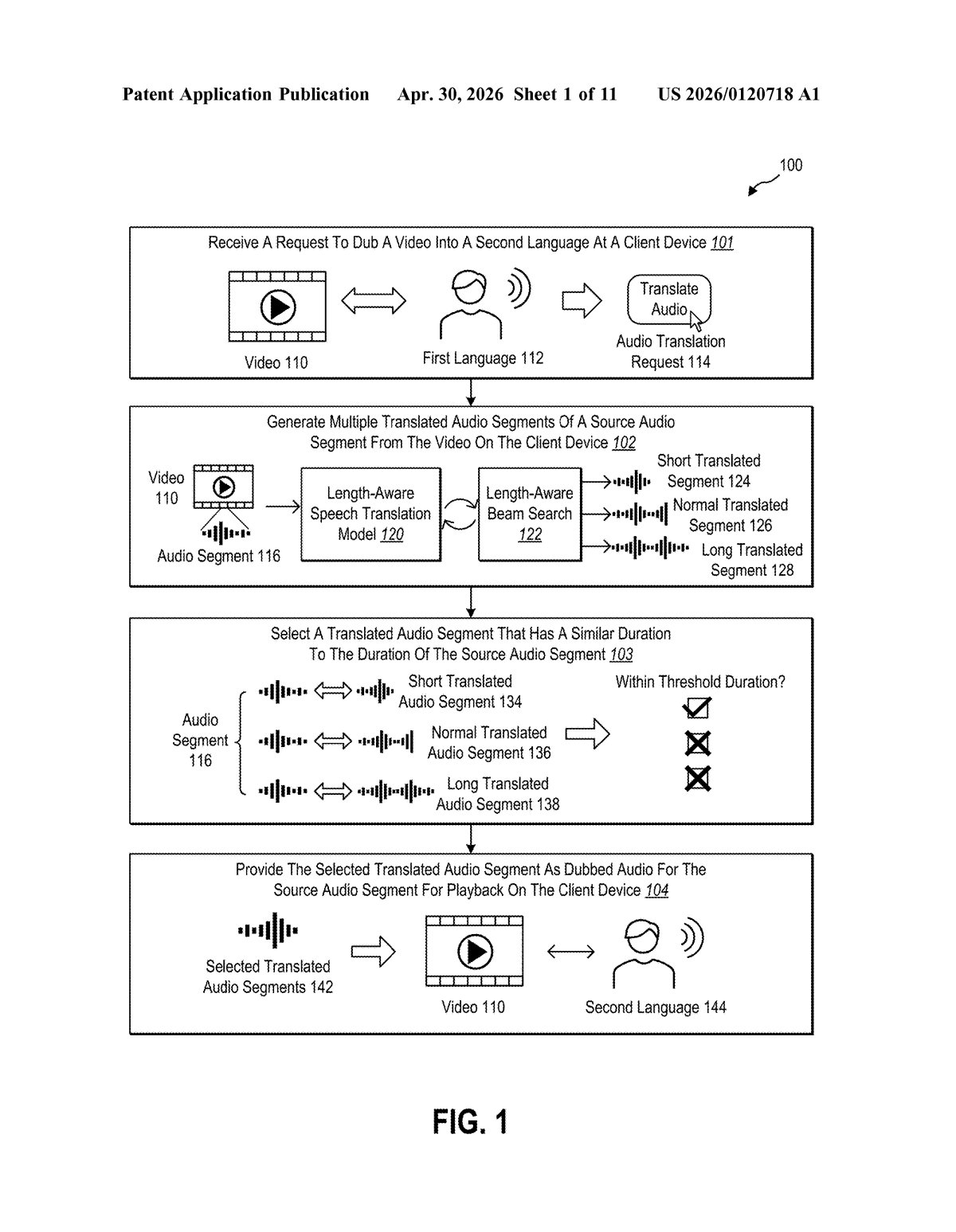

What Microsoft's length-aware dubbing system actually does

Imagine you're watching a video in Spanish, but you want to hear it in English. A simple translation might nail the meaning but completely blow the timing — the English sentence ends two seconds before the speaker's lips stop moving, or it's still talking when the next scene cuts in. That mismatch is jarring, and it's one of the main reasons auto-dubbed video feels fake.

Microsoft's approach is to generate several different English translations of each spoken phrase — some shorter, some longer — and then pick the one whose duration best matches the original. It's a bit like a tailor adjusting a hem: instead of forcing one fixed translation to fit, you have options at different lengths and you choose the closest fit.

The whole process runs on your device, not in the cloud, which matters for speed and privacy. The system is designed to work in real time, so you'd theoretically get dubbed audio that tracks the video naturally, phrase by phrase, without a server round-trip adding delay.

How the duration-ratio selection picks the right translation

The core of this patent is a length-aware speech translation model — an AI model that doesn't just produce one translation of an audio clip, but produces multiple translated versions intentionally sized to different durations. Think of it as asking the model: "Give me a short version, a medium version, and a longer version of this phrase in the target language."

Once those candidates exist, the system compares each one's duration against the original audio segment's duration by computing duration ratios (translated duration ÷ original duration). A ratio close to 1.0 means the translation roughly matches the original timing. The system then checks each ratio against a threshold and selects the translated segment whose ratio comes closest to that 1.0 target.
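
To make the ratio idea concrete, here's a minimal Python sketch of the selection step. It's an illustration only, not the patent's implementation: the `Candidate` class, the `pick_best_fit` function, the `tolerance` value of 0.15, and the sample durations are all made up for this example, and it treats the threshold as a maximum allowed distance from 1.0, which is one plausible reading.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str          # translated phrase (illustrative)
    duration_s: float  # estimated spoken duration in seconds (illustrative)


def pick_best_fit(source_duration_s, candidates, target_ratio=1.0, tolerance=0.15):
    """Return (candidate, ratio) for the ratio closest to target_ratio.

    Returns None if no candidate falls within the tolerance.
    The tolerance is an assumed way of applying a threshold.
    """
    if source_duration_s <= 0:
        raise ValueError("source duration must be positive")
    # Duration ratio = translated duration / original duration.
    scored = [(c, c.duration_s / source_duration_s) for c in candidates]
    # Keep only candidates whose ratio is close enough to the target.
    within = [(c, r) for c, r in scored if abs(r - target_ratio) <= tolerance]
    if not within:
        return None
    return min(within, key=lambda pair: abs(pair[1] - target_ratio))


# Hypothetical short, medium, and long translations of one phrase.
candidates = [
    Candidate("Thanks a lot.", 1.0),
    Candidate("Thank you very much.", 1.9),
    Candidate("Thank you so very much indeed.", 2.6),
]

best = pick_best_fit(2.0, candidates)  # original segment lasts 2.0 seconds
print(f"{best[0].text} (ratio {best[1]:.2f})")  # Thank you very much. (ratio 0.95)
```

With a 2-second original, the three candidates score 0.50, 0.95, and 1.30, so the medium version wins. If nothing lands inside the tolerance, this sketch returns `None` and leaves the fallback to the caller.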

To efficiently search across all those candidate translations, the patent describes using beam search (a classic AI decoding technique that keeps track of the top-N most promising outputs at each step, rather than exploring every possible word sequence). This keeps the compute load manageable even on a local device.
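
If beam search is new to you, the toy Python sketch below shows the general technique. It's not Microsoft's decoder: the `NEXT` table is a hand-written stand-in for a real translation model, and the tokens and probabilities in it are invented. What it does show is how keeping only the top few partial sequences at each step still leaves you with several finished outputs of different lengths.

```python
import math

# Hypothetical toy "model": probability of each next token given the last one.
NEXT = {
    "<s>": {"thank": 0.6, "thanks": 0.4},
    "thank": {"you": 0.9, "</s>": 0.1},
    "thanks": {"</s>": 0.7, "a": 0.3},
    "you": {"</s>": 0.6, "very": 0.4},
    "a": {"lot": 1.0},
    "lot": {"</s>": 1.0},
    "very": {"much": 1.0},
    "much": {"</s>": 1.0},
}


def beam_search(beam_width=3, max_steps=6):
    """Return finished (text, probability) pairs, most probable first."""
    beams = [(0.0, ["<s>"])]  # each entry: (log-probability, tokens so far)
    finished = []
    for _ in range(max_steps):
        # Extend every live hypothesis by every possible next token.
        expanded = []
        for score, tokens in beams:
            for token, prob in NEXT[tokens[-1]].items():
                expanded.append((score + math.log(prob), tokens + [token]))
        # Prune: keep only the beam_width highest-scoring hypotheses.
        expanded.sort(key=lambda item: item[0], reverse=True)
        beams = []
        for score, tokens in expanded[:beam_width]:
            if tokens[-1] == "</s>":
                finished.append((score, tokens))  # complete sentence
            else:
                beams.append((score, tokens))     # still being extended
        if not beams:
            break
    finished.sort(key=lambda item: item[0], reverse=True)
    # Strip the <s> and </s> markers and convert log-probability back.
    return [(" ".join(tokens[1:-1]), math.exp(score)) for score, tokens in finished]


for text, prob in beam_search(beam_width=3):
    print(f"{prob:.3f}  {text}")
```

With a beam width of 3, this toy returns four finished phrases ranging from one word to four. A downstream step like the duration-ratio check could then choose among them. With a beam width of 1 it collapses to greedy decoding and returns a single phrase.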

Key components described include:

- A length-aware speech translation model running entirely on the client device

- A duration-ratio comparator that scores each candidate translation against the source segment

- A beam search decoder that generates multiple length-varied outputs efficiently

- A real-time pipeline that assembles the selected translated audio for playback in sync with the video

What this means for real-time video translation on your device

The practical payoff here is on-device real-time dubbing that doesn't rely on a cloud service, which means lower latency, no data leaving your machine, and the ability to work offline. For Microsoft, this could slot naturally into products like Teams, Stream, or any scenario where live or recorded video needs instant translation for a multilingual audience.

For you as a viewer, the difference between a well-timed dub and a poorly timed one is the difference between actually following a video and constantly being distracted by out-of-sync audio. If this works as described, it could make auto-dubbing feel much closer to a professional localization job, and potentially push it from a novelty into something people actually use daily.

This is a genuinely clever framing of the dubbing problem. Instead of trying to compress or stretch a single translation to fit — which tends to produce either chipmunk-speed speech or awkward pauses — generating multiple length variants and selecting the best fit is a much cleaner solution. The on-device angle also gives Microsoft a competitive edge over cloud-only approaches, particularly in enterprise settings where data privacy is a real constraint.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.