Microsoft Patents Plain-English AI Search Across Security Log Files

Writing SQL-style queries to hunt through security logs is a specialized skill, and even for analysts who have it, it slows investigations down. Microsoft's new patent describes an AI system that lets you ask a question in plain English and get back a meaningful answer about potential cyber threats.

How Microsoft's AI lets analysts skip the query language

Imagine your company's servers generate thousands of log lines every hour — records of who logged in, what files were accessed, which connections were made. When something suspicious happens, a security analyst normally has to write a precise, structured query (think: database code) just to search through those logs. That's slow, error-prone, and requires specialized skills.

Microsoft's patent describes a system designed to remove that barrier. You type a plain-English question, something like "Did any account access sensitive files after hours this week?", and the AI searches the raw logs, finds the most relevant entries, and generates a readable answer that flags any potential threat.

Critically, the system doesn't require logs to be cleaned up and loaded into a database first. It works directly on raw security data, which is how logs usually exist in the real world — messy, varied, and hard to pre-process at scale.

How embedding distances rank the most relevant log lines

The core mechanic here is embedding-based semantic search — a technique where both your search query and each log line are converted into lists of numbers (called vectors) that capture their meaning. Lines whose meaning is close to your query end up with vectors that are mathematically nearby.
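
To make "mathematically nearby" concrete, here is a minimal sketch using cosine distance, one common way to compare embeddings. The patent summary above does not say which distance metric is used, so treat that choice as an assumption. The three-number vectors are invented for illustration; real embeddings typically have hundreds or thousands of dimensions.

```python
import math

def cosine_distance(a, b):
    """Return 1 - cosine similarity: near 0 for similar directions, near 1 for unrelated ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

# Hypothetical toy vectors, made up for this example.
query_vec = [0.9, 0.1, 0.0]          # e.g. "unauthorized login attempts"
login_failure_vec = [0.8, 0.2, 0.1]  # a log line about a failed login
disk_cleanup_vec = [0.0, 0.1, 0.9]   # a log line about routine disk cleanup

near = cosine_distance(query_vec, login_failure_vec)
far = cosine_distance(query_vec, disk_cleanup_vec)
print(f"login failure: {near:.3f}, disk cleanup: {far:.3f}")
```

The failed-login vector points in nearly the same direction as the query, so its distance is small; the disk-cleanup vector points elsewhere, so its distance is close to 1.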

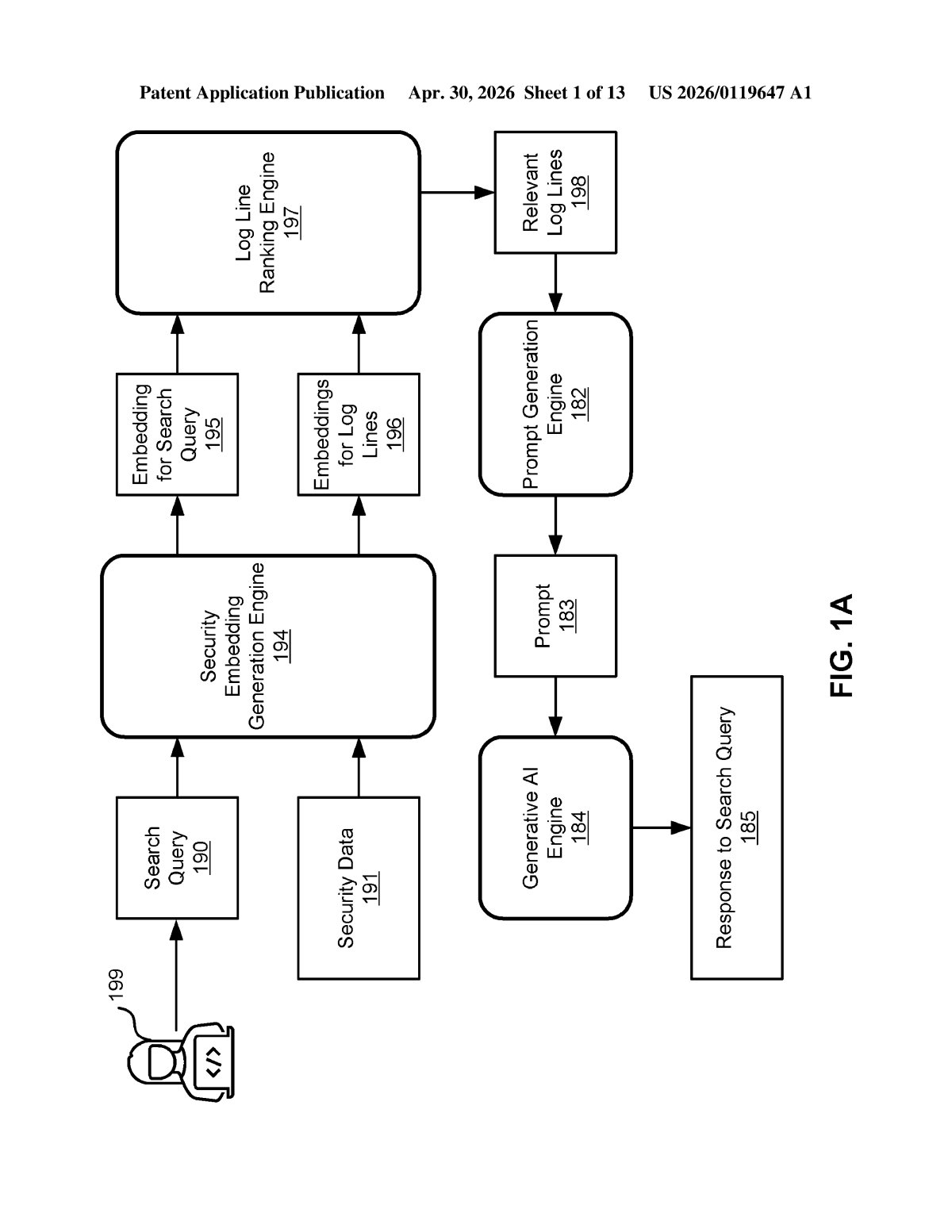

Here's the step-by-step flow the patent describes:

- The system receives a natural language search query (e.g., "unauthorized login attempts from foreign IPs") and converts it into a query embedding.

- Each log line in the security dataset is also converted into its own log line embedding.

- The system calculates the embedding distance between the query and each log line (a measure of how semantically close the two are) and ranks the log lines by that distance.

- The most relevant log lines are selected — with a cap based on a threshold prompt length (so the AI isn't fed more text than it can handle at once).

- Those lines are assembled into a prompt, fed to a generative model (an LLM), and a human-readable response is returned that identifies the cyber threat.
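
The steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the patent's implementation: `toy_embed` is a hypothetical stand-in that counts words where a real system would call a learned embedding model, the prompt cap is measured in characters where a real system would likely count tokens, the sample log lines are invented, and the final call to a generative model is left out.

```python
import math
import re
from collections import Counter

def toy_embed(text):
    """Hypothetical stand-in embedding: lowercase word counts as a sparse vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine_distance(a, b):
    """1 - cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 1.0  # treat an empty vector as maximally distant
    return 1.0 - dot / (norm_a * norm_b)

def select_log_lines(query, log_lines, max_prompt_chars):
    """Rank log lines by distance to the query, then keep the closest ones that fit the cap."""
    query_vec = toy_embed(query)
    ranked = sorted(log_lines, key=lambda line: cosine_distance(query_vec, toy_embed(line)))
    selected, used = [], 0
    for line in ranked:
        cost = len(line) + 1  # +1 for the newline that joins lines in the prompt
        if used + cost > max_prompt_chars:
            break
        selected.append(line)
        used += cost
    return selected

def build_prompt(query, selected_lines):
    """Assemble the selected log lines and the question into one prompt string."""
    context = "\n".join(selected_lines)
    return f"Security log excerpts:\n{context}\n\nQuestion: {query}\nAnswer:"

# Invented sample data; the IP address is from a range reserved for documentation.
logs = [
    "2024-05-01T02:14:07Z sshd failed login for user admin from 203.0.113.7",
    "2024-05-01T02:14:09Z sshd failed login for user root from 203.0.113.7",
    "2024-05-01T09:00:00Z cron job backup completed successfully",
]
query = "failed login attempts for admin"
selected = select_log_lines(query, logs, max_prompt_chars=160)
prompt = build_prompt(query, selected)
print(prompt)
```

With a 160-character cap, the two failed-login lines fit and the unrelated cron line is dropped. The resulting prompt is what would be handed to the generative model to produce the readable answer.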

The underlying LLM is described as being trained specifically on security language — the shorthand, abbreviations, and structured formats found in real log files — combined with natural language. That dual training is what lets it bridge the gap between terse log syntax and conversational questions.

What this means for overloaded security operations teams

Security operations centers (SOCs) are notoriously understaffed and overloaded. The bottleneck isn't always finding skilled analysts — it's the time those analysts spend translating business questions into technical queries. A system that lets a junior analyst — or even a non-technical stakeholder — ask "what happened last night?" and get a useful answer could meaningfully compress response times during an active incident.

This also matters because it works on unparsed, raw log data, which is the practical reality at most organizations. Many log-search tools require you to ingest and normalize data into a structured format first, a process that can take hours or days. Skipping that step is a real operational advantage, not just a convenience feature.

This is genuinely useful, unglamorous infrastructure work. The combination of embedding-based log ranking plus a security-domain-tuned LLM addresses a real pain point that security teams deal with daily. It would fit naturally alongside Microsoft's Sentinel and Defender product lines, and the 'no ingestion required' angle is the detail that makes this more than just another AI-on-logs story.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.