Meta Patents Radar-Based Facial Expression Tracking for VR Headsets

Meta has filed a patent application for a system that uses radar, the same basic technology behind your car's collision-avoidance sensors, to detect your facial expressions from inside a VR headset, with no visible-light camera required.

How Meta reads your face without a camera

Imagine putting on a VR headset and having your avatar mirror your facial expressions in real time — your smirk, your raised eyebrow, your surprised look — without any cameras pointed at your face. That's what Meta is working toward with this patent.

Instead of cameras, the headset fires tiny radar signals at the area around your face. When those signals bounce back, the system compares the return pattern against a stored library of known expression signatures to figure out what your face is doing. It's a bit like sonar, but for detecting whether you're frowning instead of smiling.

The privacy angle is interesting here: radar doesn't capture image data the way a camera does, so there's no literal photo of your face being processed. Whether that matters to you probably depends on how much you trust the system doing the matching.

How radar signals map face movements inside the headset

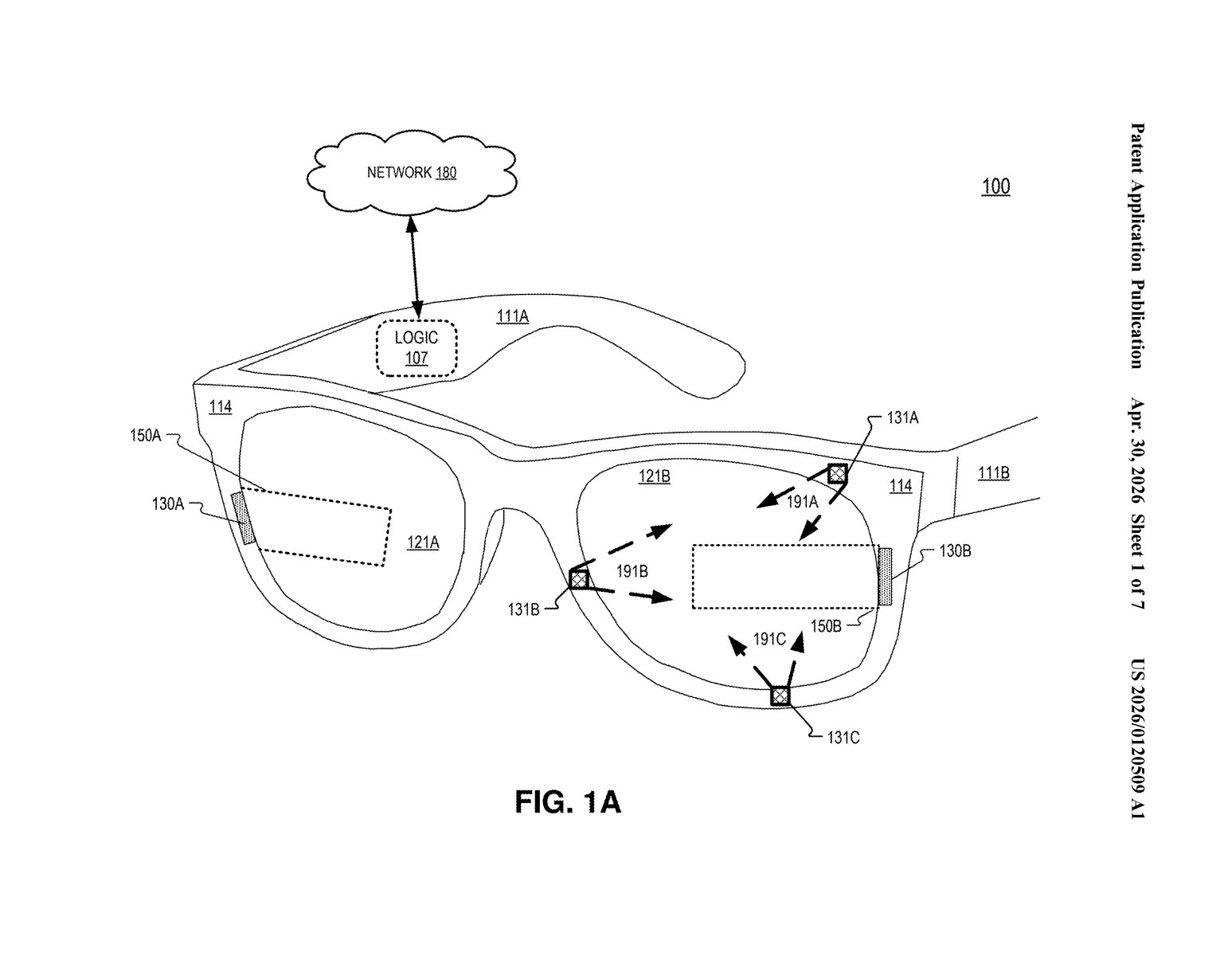

The patent describes a head-mounted device (read: VR or mixed-reality headset) equipped with a radar transmit antenna and a radar receiver antenna aimed at the wearer's face region.

Here's the basic loop:

- The transmit antenna sends out a radar signal toward the face.

- The signal bounces off facial features and returns to the receiver antenna.

- That return signal is compared against an expression database — a pre-built library of radar signatures corresponding to specific facial expressions.

- When a match is found, the system knows which expression the wearer is making.

The matching process is essentially pattern recognition on radar echoes. Different facial configurations — puffed cheeks, furrowed brows, open mouth — produce subtly different radar return profiles, and the system is trained to tell them apart.
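To make the matching step concrete, here is a minimal sketch of nearest-match lookup against a signature library. It is an illustration only, not the method claimed in the patent: the names `EXPRESSION_DB` and `match_expression`, the four-number profiles, the Euclidean distance measure, and the `max_distance` threshold are all assumptions invented for this example.

```python
import math

# Hypothetical expression database: label -> reference radar return profile.
# The numbers are made up for illustration; real profiles would come from
# measured radar echoes and would be far richer than four values.
EXPRESSION_DB = {
    "neutral": [0.20, 0.50, 0.30, 0.10],
    "smile": [0.60, 0.40, 0.20, 0.30],
    "frown": [0.10, 0.30, 0.70, 0.40],
}


def distance(a, b):
    """Euclidean distance between two equal-length profiles."""
    if len(a) != len(b):
        raise ValueError("profiles must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def match_expression(return_profile, database=EXPRESSION_DB, max_distance=0.25):
    """Return the closest expression label, or None if nothing is close enough."""
    best_label, best_dist = None, float("inf")
    for label, reference in database.items():
        d = distance(return_profile, reference)
        if d < best_dist:
            best_label, best_dist = label, d
    # Reject weak matches instead of forcing every echo onto some expression.
    return best_label if best_dist <= max_distance else None


measured = [0.58, 0.42, 0.22, 0.28]  # made-up echo close to the "smile" entry
print(match_expression(measured))  # prints "smile"
```

The threshold is the design choice worth noticing: without it, every return would be mapped to some expression, even when the echo looks like nothing in the library.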

The current filing is a continuation of an earlier application (No. 18/105,595, filed in February 2023) that has already issued as Patent No. 12,400,478, so Meta has been developing this line of technology for at least a couple of years. As a continuation, it extends or refines that earlier work.

What this means for avatar realism in Meta's VR future

Avatar expressiveness is one of the big unsolved problems in social VR, and one of the reasons Meta's Horizon Worlds has felt flat compared to the hype. If a radar system can read facial expressions accurately and continuously, avatars in apps built on Presence Platform or running on future Quest headsets could become dramatically more lifelike during calls and social experiences, without requiring users to consent to having their faces photographed.

For you as a user, this could mean more expressive virtual meetings, better social presence in multiplayer games, and potentially a privacy-friendlier alternative to camera-based face tracking. The catch is that a radar signature of your face is arguably still biometric data, and how Meta would store and use that expression database is a question the patent doesn't answer.

This is a genuinely clever approach to a real problem: getting facial expression data without the social and regulatory baggage of in-headset cameras. The original 2023 filing has already issued as Patent No. 12,400,478, and this filing is a continuation of it, which suggests Meta is actively building out this IP family rather than placeholder-patenting an idea. Worth tracking as Quest hardware evolves.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.