Nvidia Patents a Two-Stage AI Pipeline for Teaching Robots to Grab Objects

Getting a robot to reliably pick up an arbitrary object remains one of the hardest open problems in robotics, and Nvidia has filed a patent application for an AI pipeline that tackles it by splitting the job into two distinct learned stages.

How Nvidia's robot grasp filter actually works

Imagine you're teaching a robot arm to pick up a coffee mug. The robot needs to figure out not just where to grab it, but how — the angle, the grip, the approach. Get any of those wrong and the mug ends up on the floor.

Nvidia's patent describes a two-step AI approach to this problem. First, one AI model looks at sensor data (think cameras or depth sensors) and generates a batch of candidate grasps, essentially brainstorming a list of ways the robot could grab the object. Then a second AI model acts as a quality filter, reviewing that list and keeping only the grasps that are likely to work.

The surviving grasps are assembled into a final grasping plan, and the robot executes it. By splitting 'generate ideas' and 'pick the best idea' into two separate trained models, the system is designed to be more reliable than a single do-everything model.

Inside Nvidia's two-model grasp pose pipeline

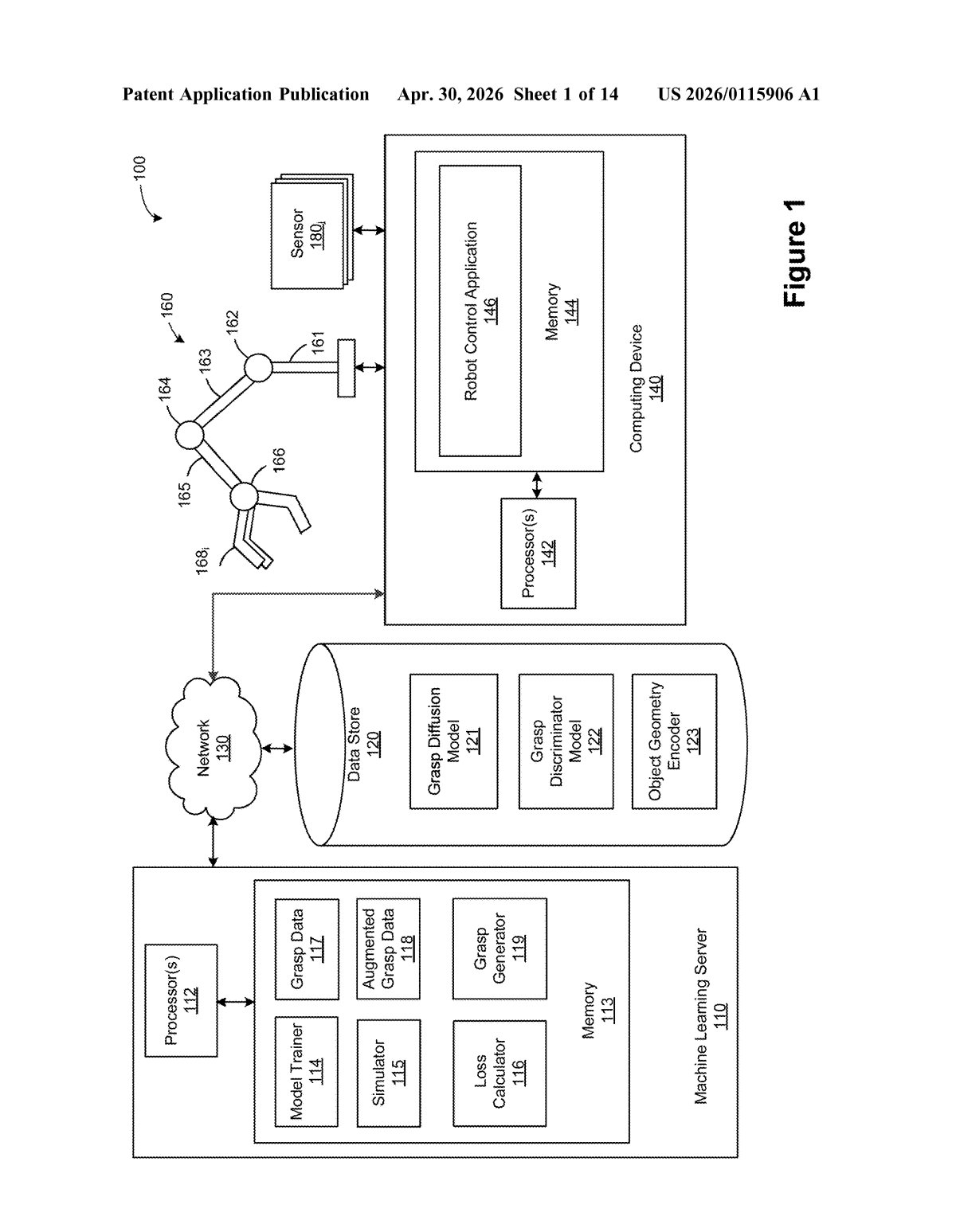

The patent describes a computer-implemented method for robotic grasping built around two distinct machine learning models working in sequence.

- Stage 1 — Pose generation: A first trained ML model takes in raw sensor data — likely point clouds from depth cameras or similar 3D input — and outputs a set of candidate grasp poses (a grasp pose is a specific combination of position and orientation for the robot's hand or gripper).

- Stage 2 — Pose filtering: The claim language is a little circular (it references the first model for both stages, which may reflect a drafting quirk), but the intent is clear: a filtering model evaluates those candidate poses and selects a refined subset — removing unlikely or physically infeasible options.

- Planning and execution: The filtered poses feed into a grasping plan, which the robot then executes.
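The three steps above can be sketched in a few lines of Python. This is only an illustration of the generate-then-filter shape, not the patent's implementation: the `GraspPose` layout, the random candidate sampler, the top-down scoring heuristic, and the threshold are all assumptions standing in for the trained models the filing describes.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class GraspPose:
    """A candidate grasp: gripper position plus orientation (hypothetical layout)."""
    position: tuple   # (x, y, z) in metres, robot base frame
    approach: tuple   # unit vector the gripper moves along


def generate_candidates(points, n, rng):
    """Stand-in for the first trained model: propose poses at observed points."""
    poses = []
    for _ in range(n):
        position = rng.choice(points)
        # Random approach direction, normalised to unit length.
        d = [rng.gauss(0, 1) for _ in range(3)]
        norm = math.sqrt(sum(c * c for c in d)) or 1.0
        poses.append(GraspPose(position, tuple(c / norm for c in d)))
    return poses


def score(pose):
    """Stand-in for the filtering model (assumption: prefer top-down approaches).

    A real filter would be learned; this heuristic only illustrates the interface.
    """
    return -pose.approach[2]  # 1.0 means the gripper points straight down


def filter_poses(poses, threshold):
    """Keep only candidates whose score clears the threshold."""
    return [p for p in poses if score(p) >= threshold]


def plan_grasp(poses):
    """Toy 'plan': order the surviving poses from best to worst score."""
    return sorted(poses, key=score, reverse=True)


rng = random.Random(0)
cloud = [(0.40, 0.00, 0.10), (0.42, 0.01, 0.12), (0.41, -0.02, 0.11)]
candidates = generate_candidates(cloud, 50, rng)   # Stage 1
kept = filter_poses(candidates, threshold=0.5)     # Stage 2
plan = plan_grasp(kept)                            # Planning
```

Because the generator and the filter only share the list of `GraspPose` objects, either one could be swapped for a different model without touching the other.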

The key architectural idea is decomposition: rather than asking one monolithic model to go from raw sensor data to action, Nvidia's system breaks the task into generation and selection, a propose-then-rank pattern familiar from other areas of machine learning. In principle, this makes each sub-model easier to train and evaluate independently.
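To make "evaluate independently" concrete, here is one way the two stages could be scored separately. The patent does not specify any evaluation method; the metric names, the `is_good` ground-truth check, and the toy data are all assumptions for illustration.

```python
def generator_coverage(candidate_sets, is_good):
    """Fraction of scenes where the generator proposed at least one workable grasp."""
    hits = sum(1 for cands in candidate_sets if any(is_good(c) for c in cands))
    return hits / len(candidate_sets)


def filter_precision(kept_sets, is_good):
    """Fraction of grasps the filter kept that are actually workable."""
    kept = [c for cands in kept_sets for c in cands]
    if not kept:
        return 0.0
    return sum(1 for c in kept if is_good(c)) / len(kept)


# Toy data: each grasp is (label, would_succeed); one inner list per scene.
proposed = [
    [("a", True), ("b", False)],
    [("c", False), ("d", False)],
    [("e", True), ("f", True)],
]
kept = [
    [("a", True)],
    [("d", False)],
    [("e", True), ("f", True)],
]


def is_good(grasp):
    return grasp[1]


coverage = generator_coverage(proposed, is_good)   # 2 of 3 scenes covered
precision = filter_precision(kept, is_good)        # 3 of 4 kept grasps are good
```

A low coverage number points at the generator, while a low precision number points at the filter, which is the practical benefit of keeping the stages separate.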

The inventors — several of whom are well-known robotics researchers — appear to be formalizing techniques from Nvidia's Isaac robotics platform into patentable method claims.

What this means for the next wave of robot arms

Robot manipulation is a major bottleneck holding back practical automation in warehouses, factories, and homes. Most current systems are brittle: they work for a narrow set of known objects under controlled lighting, and fall apart when conditions change. A learned two-stage pipeline that can generalize across arbitrary objects from sensor data alone is the kind of capability that could help close that gap.

For Nvidia, this fits squarely into its Isaac robotics push — the company wants to be the platform layer that robot makers build on, the same way it became the platform layer for AI training. If Nvidia can patent and productize core manipulation algorithms, it strengthens the moat around that platform considerably. You might not see this directly, but the robot that eventually sorts packages or restocks grocery shelves could be running it.

This is a focused, technically credible filing from a team with serious robotics credentials; Dieter Fox alone has co-authored some of the most-cited work in robot perception. The two-stage generate-then-filter architecture is a well-motivated design choice, not just padding for a patent portfolio. It's worth watching because it signals Nvidia is thinking hard about making manipulation a software product, not just a research problem.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.