Qualcomm's New Patent Wants Your Phone to Bundle Related Videos Before You Hit Send

Sharing a bunch of related clips from a night out usually means hunting through your camera roll and stitching things together manually. Qualcomm's new patent wants your phone to do that grouping and compressing automatically the moment you decide to share.

What Qualcomm's auto-media-grouping system actually does

Imagine you've just recorded five short clips from a concert — same venue, same lighting, same general vibe. Normally, sharing all of them means selecting each one individually, maybe trimming, and sending a string of files your friend has to open separately. This patent describes a system that handles all of that for you.

When you tap "share," your phone's processor looks at the media in your camera roll, finds clips that are similar to what you just captured — based on characteristics like color palette, scene content, or timing — and groups them together. It then compresses that group into a single combined file and sends it as one tidy package.

The key detail is that "similar" clips don't have to live on your device. The system can also pull in matching videos that are accessible over a network, which broadens the pool of candidates while still producing a coherent, shareable result, all without you digging through folders.

How Qualcomm's processor matches and compresses similar clips

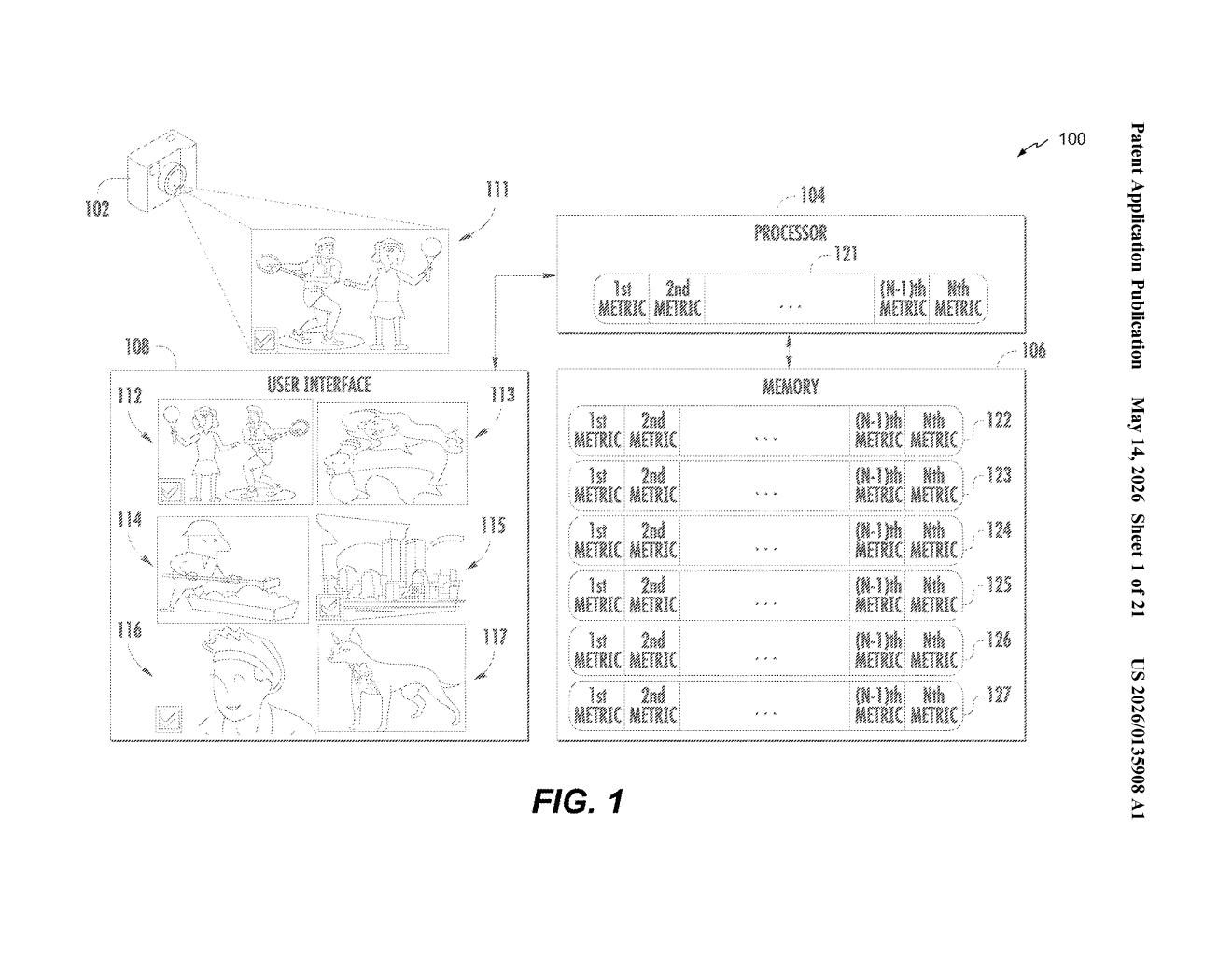

The patent describes a mobile device — think a Snapdragon-powered smartphone — with an image capture device, processor, memory, and display all in one form factor. The core workflow kicks in when a user chooses to share media.

Here's the sequence the processor follows:

- Capture and store a first set of media items (photos or videos) using the on-device camera.

- Analyze characteristics of each item — the patent references a "first set of characteristics" and a "second set of characteristics," which could include things like color histograms, motion vectors, timestamps, or scene descriptors.

- Select a group of media items whose characteristics are sufficiently similar to each other — essentially clustering related content automatically.

- Compress the group into a single combined media item (think a merged video or bundled file), then transmit it to a remote device.
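
The select-then-compress steps above can be sketched in a few lines of Python. This is only an illustration under loud assumptions: the patent does not name a similarity measure or a container format, so cosine similarity over a made-up feature vector and a ZIP archive stand in for both here. `MediaItem`, `select_group`, `bundle`, and the 0.9 threshold are all hypothetical names and values, not anything from the filing.

```python
import io
import math
import zipfile
from dataclasses import dataclass


@dataclass
class MediaItem:
    name: str       # hypothetical file name
    features: list  # hypothetical characteristic vector, e.g. a coarse color histogram
    data: bytes     # raw media bytes


def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def select_group(anchor, candidates, threshold=0.9):
    """Return the anchor plus every candidate whose features are close enough to it."""
    similar = [c for c in candidates
               if cosine_similarity(anchor.features, c.features) >= threshold]
    return [anchor] + similar


def bundle(group):
    """Compress the group into one in-memory ZIP archive.

    The ZIP is an assumed stand-in for the patent's "combined media item".
    """
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        for item in group:
            zf.writestr(item.name, item.data)
    return buf.getvalue()


# Toy data: two concert clips with similar features, one unrelated beach clip.
clips = [
    MediaItem("concert_1.mp4", [0.8, 0.1, 0.1], b"a" * 1000),
    MediaItem("concert_2.mp4", [0.7, 0.2, 0.1], b"b" * 1000),
    MediaItem("beach.mp4", [0.1, 0.2, 0.9], b"c" * 1000),
]

group = select_group(clips[0], clips[1:])
payload = bundle(group)  # a single bytes object, ready to hand to a transmit step
print([item.name for item in group])  # ['concert_1.mp4', 'concert_2.mp4']
```

Comparing every candidate against the just-captured clip keeps the sketch simple. A real implementation could just as plausibly cluster the whole camera roll, and would likely merge the clips into one video rather than archive them.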

The second video described in the patent's abstract can also come from a network source, not just local storage, which means the system could in principle reach into cloud albums or shared drives to find a matching clip. The similarity comparison is what drives the selection, though the patent doesn't fully specify the algorithm used to measure similarity.
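
Since the filing leaves the similarity measure open, here is one plausible shape for it, purely as an assumption: blend color-histogram overlap with capture-time closeness, then rank local and network candidates in a single pool. The `Clip` fields, the 0.7 color weight, and the one-hour time window are invented for illustration and do not come from the patent.

```python
from dataclasses import dataclass


@dataclass
class Clip:
    name: str
    source: str       # "local" or "network" (hypothetical labels)
    histogram: list   # normalized color histogram, entries sum to 1.0
    timestamp: float  # capture time in seconds


def histogram_intersection(h1, h2):
    """Overlap of two normalized histograms: 1.0 = identical, 0.0 = disjoint."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def time_proximity(t1, t2, window=3600.0):
    """Linear falloff: 1.0 at the same instant, 0.0 once clips are `window` seconds apart."""
    return max(0.0, 1.0 - abs(t1 - t2) / window)


def similarity(a, b, color_weight=0.7):
    """Weighted blend of color overlap and time closeness (the weight is arbitrary)."""
    color = histogram_intersection(a.histogram, b.histogram)
    timing = time_proximity(a.timestamp, b.timestamp)
    return color_weight * color + (1.0 - color_weight) * timing


def rank_candidates(captured, local, network):
    """Merge local and network clips into one pool, most similar first."""
    pool = list(local) + list(network)
    return sorted(pool, key=lambda c: similarity(captured, c), reverse=True)


captured = Clip("new.mp4", "local", [0.5, 0.3, 0.2], 1000.0)
local = [Clip("old_trip.mp4", "local", [0.1, 0.1, 0.8], 900000.0)]
network = [Clip("friend_angle.mp4", "network", [0.4, 0.4, 0.2], 1600.0)]

ranked = rank_candidates(captured, local, network)
print(ranked[0].name, ranked[0].source)  # friend_angle.mp4 network
```

In this toy pool the network clip outranks the local one because it shares both the color profile and the capture window, which is the scenario the network-source language seems to anticipate.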

What this means for mobile video sharing on Snapdragon devices

For everyday users, this is a quality-of-life improvement for the chaotic post-event share. Instead of manually curating which clips belong together, your phone reasons about content similarity and packages things up automatically. That's especially useful as video clips get longer and camera rolls get deeper.

For Qualcomm specifically, this is the kind of on-device intelligence that differentiates a Snapdragon platform from a commodity chip. If this capability ships as part of a reference design or Android feature set, it gives OEM partners — Samsung, OnePlus, Xiaomi — something concrete to market around smart camera UX, without requiring a cloud round-trip for every sharing decision.

This is a solid, practical patent: not flashy AI, but genuinely useful friction removal for a task most smartphone users do constantly. The network-accessible second video is the most interesting wrinkle, suggesting Qualcomm is thinking beyond the local camera roll. The only open question is whether it ships as a feature or stays a filing.

Editorial commentary on a publicly published patent application. Not legal advice.