Zoox Patents a Smarter Ground Detection System for Self-Driving Cars

Self-driving cars need to know exactly where the ground is — but LiDAR sensors can lie. Zoox's new patent describes a clever filter that uses already-trusted ground data to throw out sensor readings that couldn't possibly be real.

How Zoox's LiDAR ground filter actually works

Imagine you're driving toward a dip in the road. Your car's laser sensors are constantly firing beams at the ground to figure out where the surface is, but some of those beams bounce back with weird readings that put the ground somewhere it physically can't be. That's a real problem for a self-driving car trying to navigate safely.

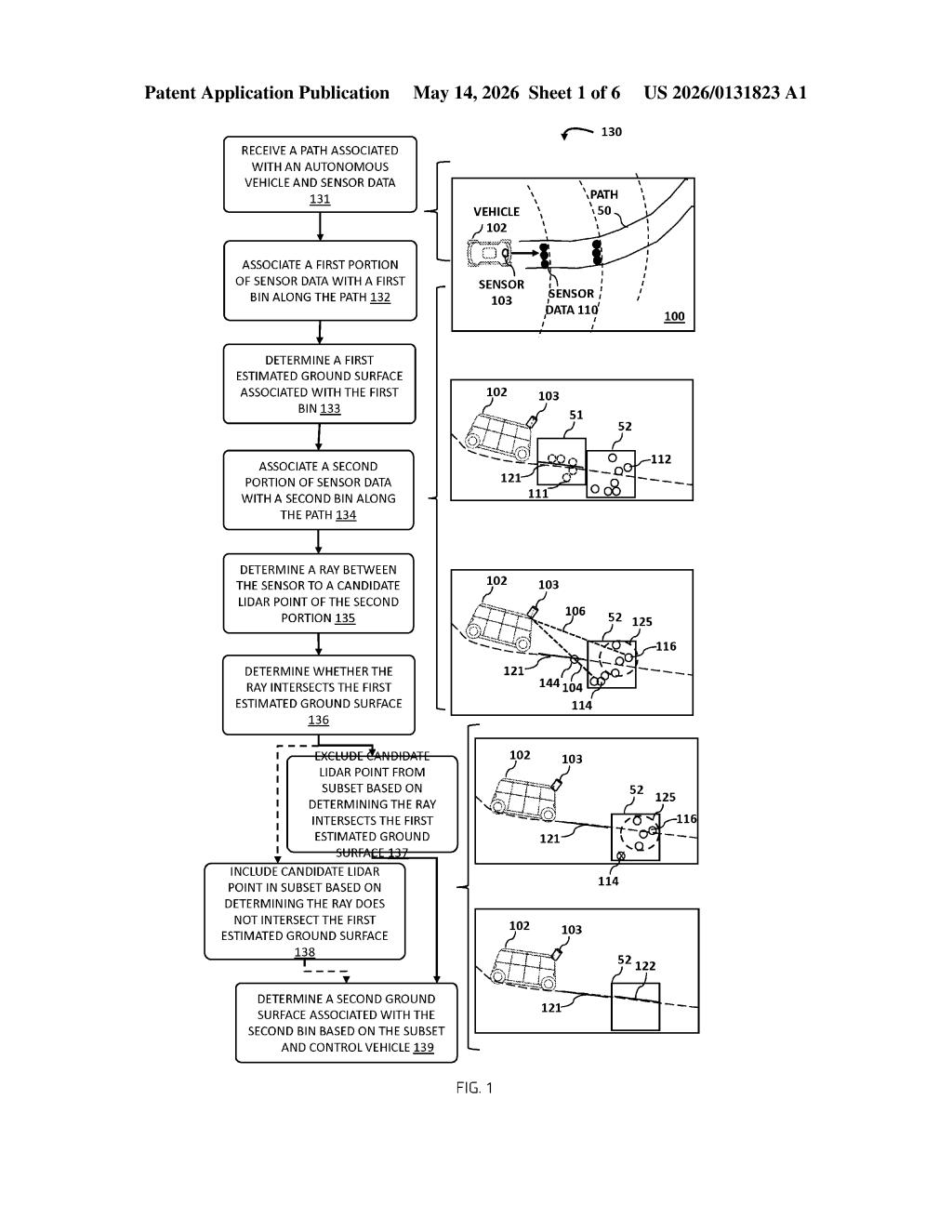

Zoox's patent tackles this by breaking the road ahead into segments, called bins. The vehicle first builds a trusted map of the ground for an earlier segment, then uses that map as a sanity check for sensor data coming from the next segment ahead. If a laser beam (ray) from the sensor to a new data point would have to pass through the already-mapped ground to get there, that point is almost certainly garbage — and it gets thrown out.

What's left is a cleaner, more reliable picture of the road surface, which the car then uses to make driving decisions. It's a bit like using what you already know about the world to spot when your own eyes are lying to you.

How the ray-intersection test filters bad LiDAR points

The system works with LiDAR, the laser rangefinder that most autonomous vehicles use to build a 3D picture of their surroundings. LiDAR is powerful but noisy: reflections, dust, and awkward surface geometry can produce spurious data points (phantom readings that don't correspond to real surfaces).

Zoox's approach divides the vehicle's planned path into sequential bins — think of them like slices of road ahead. For each bin, the system estimates a ground plane (a mathematical surface representing where the ground is). The key insight is using the ground surface from an earlier bin as a filter for the next bin.
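To make the binning idea concrete, here is a minimal sketch, not the patent's implementation. It assumes a simplified 2D side view in which each LiDAR point is an (x, z) pair (distance ahead along the path, height) and each bin's ground is approximated by a straight line rather than a full surface. The names `bin_points`, `fit_ground_line`, and `bin_length` are hypothetical.

```python
from collections import defaultdict


def bin_points(points, bin_length):
    """Group (x, z) points by the slice of road they fall in.

    x is distance ahead along the path and z is height, both in meters.
    This 2D side view is an illustrative assumption.
    """
    bins = defaultdict(list)
    for x, z in points:
        bins[int(x // bin_length)].append((x, z))
    return dict(bins)


def fit_ground_line(points):
    """Least-squares line z = slope * x + intercept through the points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_z = sum(z for _, z in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    if sxx == 0:
        # All points share one x value, so fall back to a flat line.
        return 0.0, mean_z
    sxz = sum((x - mean_x) * (z - mean_z) for x, z in points)
    slope = sxz / sxx
    return slope, mean_z - slope * mean_x


points = [(0.5, 0.0), (1.5, 0.0), (2.5, -0.1), (3.5, -0.2)]
bins = bin_points(points, bin_length=2.0)
ground_by_bin = {index: fit_ground_line(pts) for index, pts in bins.items()}
```

A real system would fit a surface in 3D and handle sparse or empty bins; the line fit here only illustrates the "one ground estimate per slice" structure.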

For every candidate LiDAR point in the new bin, the system draws a ray — a straight line from the sensor to that point. Then it asks: does that ray pass through the ground surface already established for the previous bin? Physically, that would be impossible for a genuine ground-level reading, because the beam would have to travel underground to reach it.

- If the ray does intersect the prior ground surface → exclude the point as likely erroneous

- If the ray does not intersect it → keep the point and use it to estimate the new ground surface

The filtered subset of valid points then feeds the second ground-surface estimate, which in turn drives vehicle control decisions like speed and path adjustments.
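The keep-or-exclude rule above can be sketched as follows. This is a simplified illustration under stated assumptions, not the method as claimed in the patent: it uses a 2D side view, represents the previous bin's ground as a straight line over a range of x values, and treats a ray as intersecting the ground if it dips below that line by more than a small tolerance. The names `ray_hits_prior_ground`, `filter_points`, and `tol` are hypothetical.

```python
def ray_hits_prior_ground(sensor, point, ground, tol=0.02):
    """Return True if the sensor-to-point ray dips below the prior ground.

    sensor and point are (x, z) pairs in meters. ground is a tuple
    (x_start, x_end, slope, intercept) describing the previous bin's
    ground as the line z = slope * x + intercept over [x_start, x_end].
    """
    sx, sz = sensor
    px, pz = point
    x_start, x_end, slope, intercept = ground

    # Part of the previous bin that the ray actually passes over.
    lo = max(x_start, min(sx, px))
    hi = min(x_end, max(sx, px))
    if px == sx or lo > hi:
        return False

    def clearance(x):
        # Height of the ray above the prior ground line at position x.
        t = (x - sx) / (px - sx)
        ray_z = sz + t * (pz - sz)
        return ray_z - (slope * x + intercept)

    # Clearance is linear in x, so its minimum is at one of the two ends.
    return min(clearance(lo), clearance(hi)) < -tol


def filter_points(sensor, points, ground, tol=0.02):
    """Keep only points whose ray does not pass through the prior ground."""
    return [p for p in points
            if not ray_hits_prior_ground(sensor, p, ground, tol)]


sensor = (0.0, 2.0)                  # sensor 2 m above the road at x = 0
prior_ground = (0.0, 10.0, 0.0, 0.0)  # flat ground from x = 0 to x = 10
candidates = [(12.0, -0.2), (12.0, -0.5), (11.0, 0.1)]
kept = filter_points(sensor, candidates, prior_ground)
```

In this example the point at (12.0, -0.5) is dropped, because the straight line from the sensor to it would pass slightly below the flat ground near x = 10, while the other two points are kept and would feed the next ground estimate.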

What this means for AV reliability on complex terrain

Ground estimation is foundational to autonomous vehicle safety: get it wrong, and the car might brake for a phantom pothole or, worse, miss a real one. LiDAR noise is commonly filtered with statistical methods, which can struggle at the boundaries between different terrain types (a slope leading into a flat intersection, for example). Zoox's ray-intersection approach adds a geometric consistency check grounded in physics, which is harder to fool.

For Zoox specifically — which operates a fully driverless robotaxi service in Las Vegas — robust terrain estimation in varied real-world conditions isn't academic. This kind of incremental, principled improvement to perception pipelines is exactly the unglamorous work that separates reliable commercial AV operations from demo vehicles.

This is solid, practical engineering — not flashy AI, but the kind of careful geometric reasoning that makes perception systems more robust in the real world. The ray-intersection trick is elegant precisely because it's grounded in physical constraints that bad sensor points can't satisfy. For a company running a live robotaxi service, patents like this matter more than headline-grabbing neural network filings.

Editorial commentary on a publicly published patent application. Not legal advice.