Samsung Patents a Pre-Read Trick That Beats Cache Lookup Latency

What if your memory system stopped waiting to find out if data is cached, and just started fetching it anyway — in parallel? That's the core idea behind Samsung's latest memory patent.

How Samsung's memory device reads ahead of itself

Imagine asking a librarian for a book. Normally, they first check the "fast shelf" (the cache) to see if it's already close by, and only then decide whether to go to the back warehouse. That waiting step costs time.

Samsung's patent describes a smarter librarian. The moment you ask for the book, they send an assistant to the warehouse at the same time as checking the fast shelf. If the fast shelf has it, great — you get the cached copy and the warehouse trip is canceled. If it doesn't, the warehouse copy is already on its way. Either path ends faster than the old approach.

The key component is something called a pre-request manager — a small piece of logic inside the memory device that kicks off a parallel retrieval operation before the cache has finished making up its mind. It's a speculative bet that pays off whenever there's a cache miss, and costs almost nothing when there isn't.

How the pre-request manager races the cache lookup

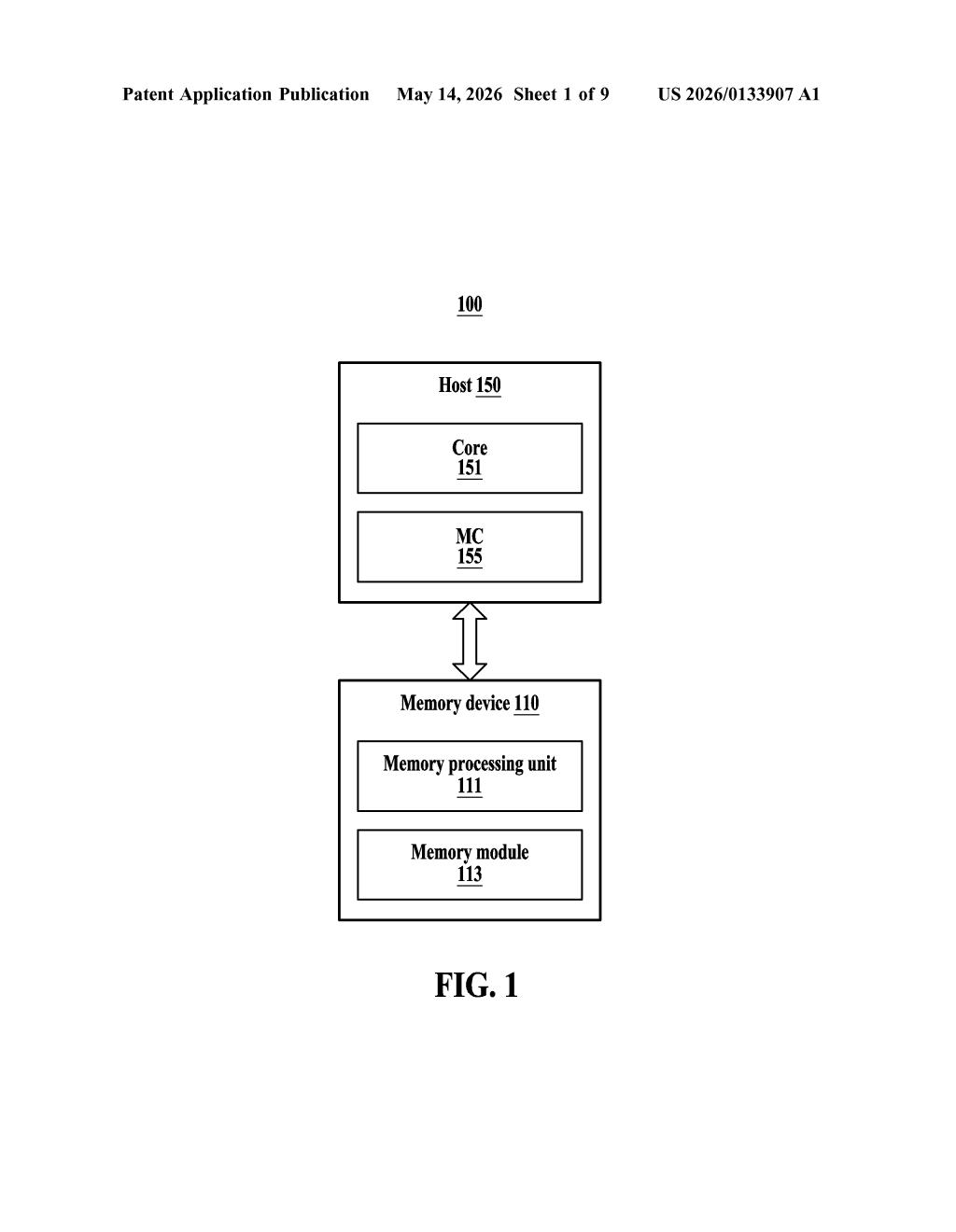

The patent describes a memory device with three main components working together:

- Cache — performs the standard lookup to determine whether requested data is already stored in fast-access memory (a "cache hit") or needs to be fetched from the slower main memory module (a "cache miss").

- Pre-request manager — the novel piece. Before the cache finishes its hit/miss determination, this manager sends a pre-request (either the original read request or a copy of it) to the Memory Processing Unit, telling it to start preparing the data retrieval now.

- Memory Processing Unit (MPU) — executes at least one of the operations needed to locate and retrieve data from the memory module, doing so speculatively in parallel with the cache check.

The outcome depends on what the cache decides. If it's a cache miss, the MPU has already done meaningful work, so the data arrives faster. If it's a cache hit, the cached data is returned to the host instead, and the MPU's speculative work is effectively discarded — a small wasted effort, but acceptable given the latency savings on misses.

This is a form of speculative execution applied to memory hierarchies — a well-known CPU technique now being pushed down into the memory device itself.

What this means for memory-intensive workloads

Cache miss latency is one of the biggest bottlenecks in memory-intensive workloads — think AI inference, database queries, and high-bandwidth compute. By overlapping the cache lookup with the start of a memory fetch, Samsung's design shaves off a meaningful slice of idle wait time without requiring the host processor to do anything differently.

For Samsung's memory business — which competes directly with SK Hynix and Micron in HBM and enterprise DRAM — even incremental latency wins at the device level can translate into real differentiation in data center benchmarks. If this technique ships inside a future HBM or CXL memory product, you'd benefit from it without ever knowing it's there.

This is solid, unglamorous engineering. Speculative execution is a decades-old idea, but applying it inside the memory device itself — rather than the CPU — is a meaningful architectural push. Samsung is essentially moving intelligence into the memory stack, which fits a broader industry trend toward compute-near-memory designs. Worth watching as a signal of where Samsung's memory roadmap is heading.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.