Microsoft Patents an AI System That Figures Out What's Missing From Your Photos

Most AI image tools wait for you to tell them what to add. Microsoft is patenting a system that looks at your image and decides what's missing — and where it should go — on its own.

What Microsoft's object-placement AI actually does

Imagine you're decorating a virtual room and you're not sure what would look right in it. Microsoft's patent describes an AI that looks at the photo and says, "there should be a laptop on that table," without you ever asking for one.

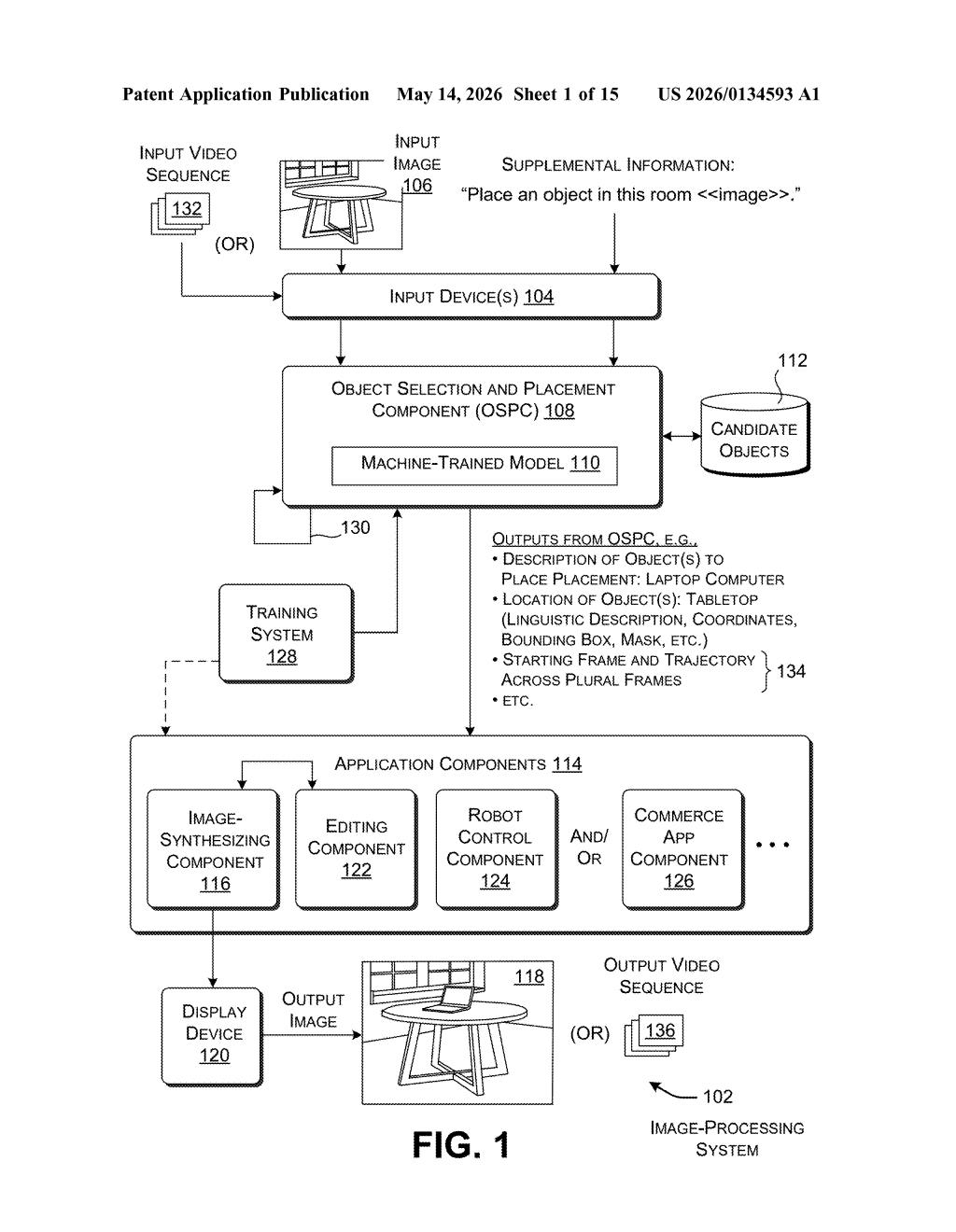

The system uses a trained model to analyze an input image and produce two things: a suggestion of what object belongs in the scene, and a suggestion of where exactly to place it. It can then synthesize a new version of the image with those objects added in.

This isn't just for photos. The patent also extends the idea to video, where the AI can track an object's placement across multiple frames. Possible applications include e-commerce (auto-staging product shots), image editing tools, and even robot navigation — where a robot might need to figure out what objects it should be holding or placing.

How the model learns by erasing and predicting objects

The core of the patent is a machine-trained model called the Object Selection and Placement Component (OSPC). Given an input image, it outputs two pieces of information: which object should be placed in the scene, and where it should go (expressed as coordinates, a bounding box, a mask, or even a plain-language description like "tabletop").
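To make that two-part output concrete, here is a minimal Python sketch of how a caller might represent it. Every name here (PlacementSuggestion, BoundingBox, describe, and so on) is hypothetical and chosen for illustration; the patent lists the location formats but does not prescribe a data structure.

```python
from dataclasses import dataclass
from typing import Tuple, Union


@dataclass(frozen=True)
class Point:
    x: float
    y: float


@dataclass(frozen=True)
class BoundingBox:
    left: float
    top: float
    width: float
    height: float


@dataclass(frozen=True)
class Mask:
    # Row-major grid; True marks pixels the object would cover.
    rows: Tuple[Tuple[bool, ...], ...]


@dataclass(frozen=True)
class RegionDescription:
    text: str  # plain-language location, e.g. "tabletop"


# The "where" half of the output can take any of the four forms.
Location = Union[Point, BoundingBox, Mask, RegionDescription]


@dataclass(frozen=True)
class PlacementSuggestion:
    object_label: str   # the "what" half, e.g. "laptop"
    location: Location  # the "where" half


def describe(suggestion: PlacementSuggestion) -> str:
    """Render a suggestion as a short human-readable sentence."""
    loc = suggestion.location
    if isinstance(loc, Point):
        where = f"point ({loc.x}, {loc.y})"
    elif isinstance(loc, BoundingBox):
        where = f"box at ({loc.left}, {loc.top}) sized {loc.width}x{loc.height}"
    elif isinstance(loc, Mask):
        covered = sum(cell for row in loc.rows for cell in row)
        where = f"mask covering {covered} pixels"
    else:
        where = f"region '{loc.text}'"
    return f"place {suggestion.object_label} at {where}"
```

Keeping the label and the location in one record mirrors the article's framing: a single prediction answers both "what" and "where," and downstream code can branch on whichever location format it receives.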

The clever part is how the model is trained. Rather than labeling images with instructions like "put a chair here," the training process works by erasure:

- Take a real image that already contains objects.

- Remove one or more of those objects.

- Ask the model to make two predictions: which object was removed, given where it was, and where the removed object was, given only what it was.

- Adjust the model's weights based on how wrong it was, and repeat.
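The steps above can be sketched as a toy training loop in Python. This is an illustration under heavy assumptions, not the patent's implementation: scenes are tiny dictionaries instead of images, the "model" is a small softmax classifier, and only one direction is trained (predicting the erased object from its location and the remaining context). All names (SCENES, erase_one, train, and so on) are hypothetical.

```python
import math
import random

# Hypothetical toy scenes: each maps a coarse location to the object found there.
SCENES = [
    {"desk": "laptop", "windowsill": "plant", "floor": "rug"},
    {"desk": "laptop", "floor": "rug"},
    {"desk": "laptop", "windowsill": "plant"},
    {"windowsill": "plant", "floor": "rug"},
]
LABELS = sorted({obj for scene in SCENES for obj in scene.values()})


def erase_one(scene, rng):
    """Remove one object; return (remaining scene, erased location, erased label)."""
    location = rng.choice(sorted(scene))
    remaining = {loc: obj for loc, obj in scene.items() if loc != location}
    return remaining, location, scene[location]


def features(remaining, location):
    """Sparse features: the queried location plus the objects still visible."""
    return ["loc=" + location] + ["ctx=" + obj for obj in sorted(remaining.values())]


def predict_probs(weights, feats):
    """Softmax over object labels, scoring each label by summed feature weights."""
    scores = [sum(weights.get((f, label), 0.0) for f in feats) for label in LABELS]
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps=500, lr=0.5, seed=0):
    """Repeat: erase an object, predict it, nudge weights by the error."""
    rng = random.Random(seed)
    weights = {}
    for _ in range(steps):
        remaining, location, target = erase_one(rng.choice(SCENES), rng)
        feats = features(remaining, location)
        probs = predict_probs(weights, feats)
        # Cross-entropy gradient: predicted probability minus one-hot target.
        for label, p in zip(LABELS, probs):
            grad = p - (1.0 if label == target else 0.0)
            for f in feats:
                weights[(f, label)] = weights.get((f, label), 0.0) - lr * grad
    return weights


def predict_object(weights, remaining, location):
    """Return the most probable object for an empty location in a scene."""
    probs = predict_probs(weights, features(remaining, location))
    return LABELS[probs.index(max(probs))]
```

After training, a call such as predict_object(train(), {"floor": "rug"}, "desk") asks what belongs on an empty desk. The reverse task, predicting the location from the object's identity, would follow the same pattern with the roles of label and location swapped.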

This self-supervised training loop (meaning it doesn't require manually labeled data) teaches the model to understand the relationship between scene context and object placement. The model learns, for example, that laptops belong on desks and plants belong near windows.

The patent also describes downstream applications: an image-synthesizing component that renders the final composite, a commerce app component for product staging, and a robot control component that could use placement predictions to guide a physical robot.
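As a rough picture of what the image-synthesizing step consumes and produces, the sketch below pastes a small object into a toy image at a predicted position. It is a deliberately naive stand-in: the patent's component would presumably render something far more realistic, and the names composite and render, along with the character-grid "image," are assumptions made for illustration.

```python
from typing import List

Image = List[List[str]]  # toy image: each cell is a one-character "pixel"


def composite(background: Image, sprite: Image, top: int, left: int) -> Image:
    """Return a copy of background with sprite pasted at (top, left).

    Sprite cells equal to " " are treated as transparent.
    """
    if top < 0 or left < 0:
        raise ValueError("placement must be inside the image")
    fits_rows = top + len(sprite) <= len(background)
    fits_cols = all(left + len(row) <= len(background[0]) for row in sprite)
    if not (fits_rows and fits_cols):
        raise ValueError("sprite does not fit at that placement")
    result = [row[:] for row in background]  # leave the input untouched
    for dy, sprite_row in enumerate(sprite):
        for dx, pixel in enumerate(sprite_row):
            if pixel != " ":
                result[top + dy][left + dx] = pixel
    return result


def render(image: Image) -> str:
    """Join the pixel grid into a printable multi-line string."""
    return "\n".join("".join(row) for row in image)


# Usage: a room with a table along the bottom row, and a two-pixel "laptop".
room = [list("......"), list("......"), list("TTTTTT")]
laptop = [list("LL")]
staged = composite(room, laptop, top=1, left=2)
```

Here the (top, left) pair plays the role of the model's placement prediction, and the sprite stands in for the suggested object. Returning a new image rather than editing in place keeps the original available for comparison.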

What this means for AI image editing and robotics

For image editing tools like Photoshop or Microsoft's own Designer, this kind of model could cut out much of the manual work. Instead of hunting for the right stock asset and positioning it by hand, the tool could suggest and place contextually appropriate objects for you. That's a meaningful quality-of-life improvement for non-designers.

The robotics angle is the more surprising one. If a robot can look at a scene and infer what object should be there — and where — that's a building block for more autonomous task planning. The patent's breadth, covering both image editing and robot control under one framework, suggests Microsoft is thinking about this as a general-purpose spatial reasoning capability rather than a narrow photo tool.

This is a well-constructed self-supervised learning approach with clear practical uses. The erasure-based training trick is elegant: it sidesteps the need for expensive labeled datasets, and a model trained this way has to learn which objects fit which scenes in order to do well. Whether it ships in Copilot, Designer, or something else entirely, the underlying method is solid enough to pay attention to.

Editorial commentary on a publicly published patent application. Not legal advice.