Nvidia Patents an AI System That Learns Where Objects Belong Before Placing Them

Dropping a new object into a photo sounds simple — until the AI places a car on the ceiling. Nvidia's new patent describes a smarter approach: teaching the system to understand spatial context before it ever draws a single pixel.

How Nvidia's AI figures out where objects should go

Imagine you're editing a photo and want to add a chair to a living room scene. A naive AI might plop it in midair or half-inside a wall, because it doesn't really understand the rules of a room. Nvidia's patent is about solving exactly that.

The system first reads the semantic layout of an image — which pixels are floor, which are wall, which are already occupied by furniture — and uses that to figure out a sensible region where a new object could realistically sit. It then generates the object's shape to match that region. Crucially, it also looks at other objects already in the scene when deciding where to put something new, so objects don't awkwardly overlap or ignore each other.
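To make the idea of "reading the semantic layout" concrete, here is a minimal sketch. It is not the patent's method: the patent describes a learned network, while this uses a hand-written rule as a stand-in. The grid, the `W`/`F`/`S` labels, and the `valid_positions` helper are all hypothetical names chosen for illustration.

```python
# Toy semantic layout (assumed labels): W = wall, F = floor, S = sofa already in the scene.
LAYOUT = [
    "WWWWWWWW",
    "WWWWWWWW",
    "WWWSSSWW",
    "FFFSSSFF",
    "FFFFFFFF",
]

def valid_positions(layout, obj_w, obj_h):
    """Return (row, col) top-left corners where an obj_h x obj_w box
    covers no existing furniture and rests its bottom row on floor.
    A hand-written rule standing in for a learned placement network."""
    rows, cols = len(layout), len(layout[0])
    hits = []
    for r in range(rows - obj_h + 1):
        for c in range(cols - obj_w + 1):
            # Every cell the new object would cover.
            cells = [layout[r + dr][c + dc]
                     for dr in range(obj_h) for dc in range(obj_w)]
            # The cells under the object's bottom edge.
            bottom = layout[r + obj_h - 1][c:c + obj_w]
            if "S" not in cells and all(ch == "F" for ch in bottom):
                hits.append((r, c))
    return hits

positions = valid_positions(LAYOUT, obj_w=2, obj_h=2)
print(positions)
```

Positions along the wall and on open floor pass the check, while anything that would overlap the sofa or hang in front of bare wall is rejected.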

The result is a pipeline that doesn't just copy-paste objects — it reasons about space. That's a meaningful step toward AI editing that looks like a human made it.

How the two-generator pipeline places objects into scenes

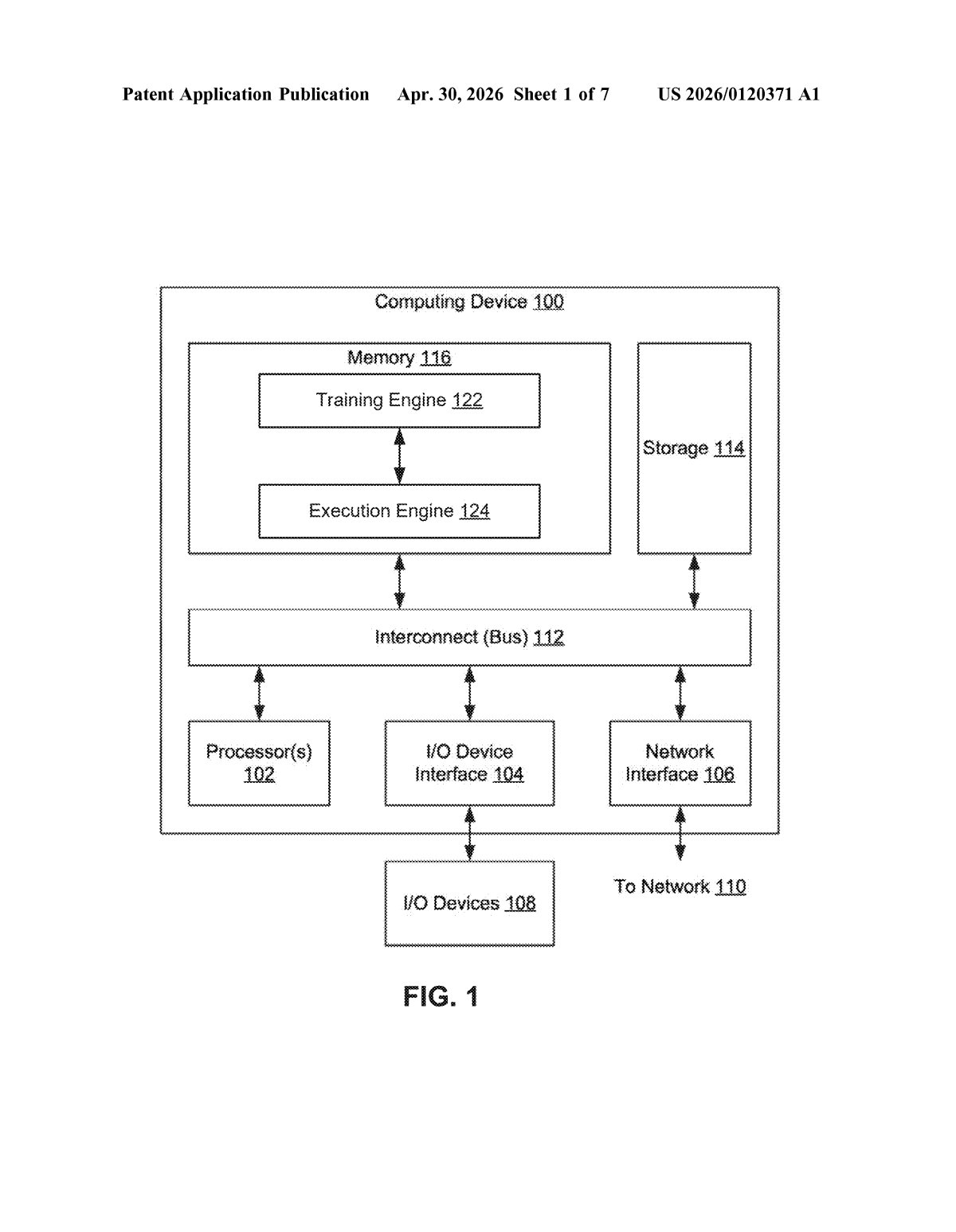

The patent describes a two-stage generative pipeline built around semantic segmentation maps (pixel-level labels that say "this area is road," "this area is sofa," etc.).

- Stage 1 (location reasoning): A first neural network reads the segmentation map and outputs an affine transformation, a mapping that scales, shifts, and can rotate a reference box into a bounding box. In effect, it produces a rectangle that says "put the object here, at this size and orientation." Importantly, the system determines valid placement zones for multiple objects simultaneously, so it can account for how objects relate to one another spatially.

- Stage 2 (shape generation): A second neural network takes that bounding box plus the original segmentation map and generates the precise silhouette or shape of the object to be inserted, fitting it to the designated region.

- Composite insertion: The generated shape and the bounding box together tell the system exactly where and how to render the final object into the image.
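The list above can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not Nvidia's implementation: the six affine parameters and the shape mask are hard-coded here where the patent would have two neural networks produce them, and `affine_to_box` and `composite` are hypothetical helper names.

```python
def affine_to_box(a, b, tx, c, d, ty):
    """Push the unit square's corners through
    x' = a*x + b*y + tx,  y' = c*x + d*y + ty
    and return the axis-aligned box (x0, y0, x1, y1) containing them."""
    corners = [(0, 0), (1, 0), (0, 1), (1, 1)]
    xs = [a * x + b * y + tx for x, y in corners]
    ys = [c * x + d * y + ty for x, y in corners]
    return min(xs), min(ys), max(xs), max(ys)

def composite(label_map, shape_mask, box, label):
    """Write `label` into a copy of label_map wherever the shape mask,
    stretched to fill `box`, is set. Assumes the box lies inside the map."""
    x0, y0, x1, y1 = (int(round(v)) for v in box)
    out = [row[:] for row in label_map]
    mh, mw = len(shape_mask), len(shape_mask[0])
    for y in range(y0, y1):
        for x in range(x0, x1):
            # Nearest-neighbour lookup from box coordinates into the mask.
            my = (y - y0) * mh // (y1 - y0)
            mx = (x - x0) * mw // (x1 - x0)
            if shape_mask[my][mx]:
                out[y][x] = label
    return out

# Stand-in for Stage 1 output: scale to 2 wide by 3 tall, shift to (3, 1).
box = affine_to_box(2, 0, 3, 0, 3, 1)

# Stand-in for Stage 2 output: a small chair-like silhouette.
shape_mask = [[0, 1],
              [1, 1],
              [1, 1]]

label_map = [list("......") for _ in range(4)]
result = composite(label_map, shape_mask, box, "C")
for row in result:
    print("".join(row))
```

Splitting the work this way means the box can be chosen without knowing the object's exact outline, and the outline can be generated without re-deciding where it goes.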

The key novelty is in the first independent claim: when placing a first object, the system explicitly conditions on the location of a second object already in the scene. That means it's not placing things in isolation — it's modeling spatial relationships, which is how real-world scenes actually work.
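A rough way to picture that conditioning is a scoring function that takes the existing object's box as an input. The sketch below is a hand-written heuristic, assumed purely for illustration; the patent describes a learned model, and the `score` rule, box coordinates, and candidate list here are all hypothetical.

```python
def overlap_area(a, b):
    """Intersection area of two boxes given as (x0, y0, x1, y1)."""
    w = min(a[2], b[2]) - max(a[0], b[0])
    h = min(a[3], b[3]) - max(a[1], b[1])
    return max(w, 0) * max(h, 0)

def center(box):
    return ((box[0] + box[2]) / 2, (box[1] + box[3]) / 2)

def score(candidate, other):
    """Higher is better. Reject any overlap with `other`, then prefer
    candidates whose centre is closer to it (Manhattan distance)."""
    if overlap_area(candidate, other) > 0:
        return float("-inf")
    (cx, cy), (ox, oy) = center(candidate), center(other)
    return -(abs(cx - ox) + abs(cy - oy))

# Second object already in the scene (assumed coordinates).
table = (4, 2, 8, 4)

# Candidate boxes for the first object, e.g. a chair.
candidates = [(0, 2, 2, 4), (5, 2, 7, 4), (8, 2, 10, 4)]

best = max(candidates, key=lambda c: score(c, table))
print(best)
```

The candidate sitting inside the table is rejected outright, and of the two that remain, the one beside the table beats the one across the room. Change the table's position and the preferred chair position changes with it, which is the point of conditioning on the second object.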

What this means for AI image editing and synthetic data

For AI image generation and editing, plausible object placement remains a hard problem. Many diffusion-based tools still struggle with scene geometry: generated objects float, clip through surfaces, or ignore perspective. A dedicated placement-reasoning module like this could be plugged into a larger pipeline to handle that specific failure mode.

There's also a big synthetic data angle here. Nvidia trains AI models for robotics, autonomous vehicles, and computer vision — all of which need massive labeled datasets. A system that can insert objects into scenes in geometrically plausible ways would let Nvidia's teams generate far more realistic training images without expensive real-world photography. That's arguably the more immediate commercial use case.

This is a focused, technically credible filing — not a moonshot. The two-generator architecture is a clean decomposition of a real problem (location first, shape second), and conditioning on sibling-object locations is a genuine insight. It's most interesting as a synthetic data tool in Nvidia's own AI training pipelines, and that alone makes it worth tracking.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.