Nvidia Patents a Neural Network System That Auto-Corrects Database Queries

What if your database search could learn from its own bad results and try again — automatically? That's the core idea behind Nvidia's latest patent on neural-network-guided query refinement.

How Nvidia's self-refining database search actually works

Imagine you search a database for something — say, images of a car turning left — and the results come back slightly off. Maybe they're too blurry, or the angle is wrong. Normally, you'd have to manually tweak your search and try again. Nvidia's patent describes a system that does that tweaking for you, automatically.

The system uses a neural network to look at what the database actually returned, compare it against what you were probably looking for, and generate a refined query (a second attempt adjusted in light of the first results) without any human intervention. It's like having an assistant who reads the room after a bad first attempt and quietly rewrites the question.

This kind of feedback loop is especially useful when you're searching large, complex datasets — the sort used in training AI models, or powering real-time systems that need to pull exactly the right data on the first useful try.

How the neural network loops on prior query results

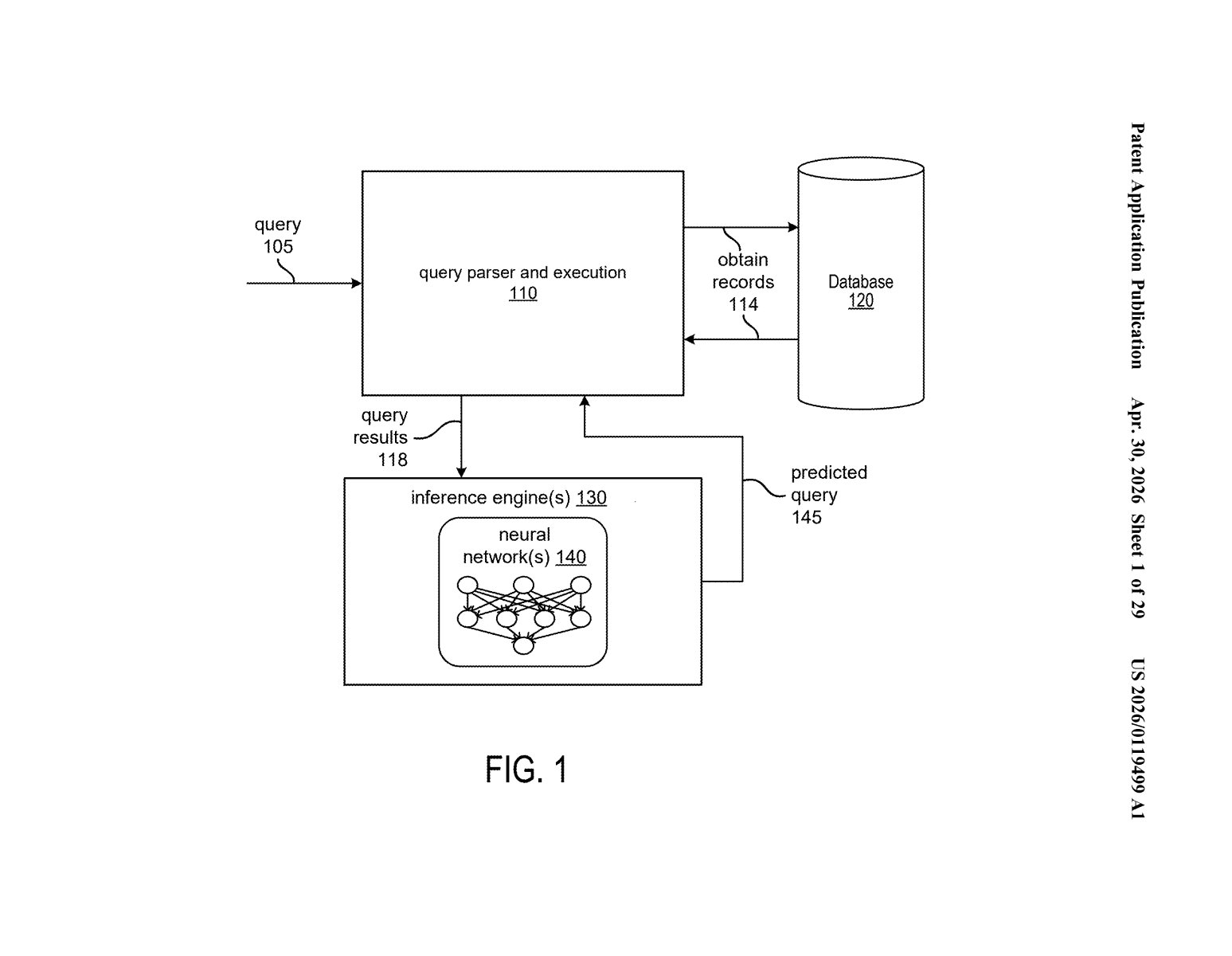

At its core, the patent describes a processor with circuits that drive one or more neural networks to predict a refinement of a database query based on what the previous query returned. The feedback from earlier results becomes the input signal for the next, better query.

The system appears to rely on embeddings — a technique where objects (images, text snippets, sensor readings) are converted into numerical vectors that capture their meaning or content. Those vectors are stored and searched in a database. When a query returns results that are close but not quite right, the neural network analyzes the gap and proposes a corrected query vector.
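To make the embedding idea concrete, here is a minimal sketch of a vector search in plain Python. Everything in it is an illustrative assumption, not something taken from the patent: the three-dimensional vectors, the item names in `INDEX`, and the helper functions `cosine` and `search` are all made up for the example. Real systems use learned embeddings with hundreds of dimensions and a dedicated vector database.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def search(index, query, k=2):
    """Return the names of the k stored items most similar to the query."""
    ranked = sorted(index.items(), key=lambda kv: cosine(query, kv[1]), reverse=True)
    return [name for name, _ in ranked[:k]]

# Hypothetical toy embeddings: (left-turn-ness, right-turn-ness, blurriness).
INDEX = {
    "car_left_turn_sharp": [0.9, 0.1, 0.0],
    "car_left_turn_blurry": [0.7, 0.1, 0.6],
    "car_right_turn": [0.1, 0.9, 0.0],
    "pedestrian": [0.0, 0.2, 0.9],
}

# A query that leans toward left turns but accidentally also toward blur.
top = search(INDEX, [0.6, 0.2, 0.5], k=2)
print(top)
```

In this toy setup the blurry frame ranks first, which is the "close but not quite right" situation the patent is trying to fix.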

The patent diagram references a feedback generator component, suggesting a structured loop:

- A query is sent to the database
- Results come back and are evaluated
- The feedback generator passes those results to the neural network
- The network predicts a refined query and the cycle repeats
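The loop above can be sketched in a few lines of Python. This is a sketch under heavy assumptions: the patent's neural network is swapped out for a hand-written Rocchio-style update (a classic relevance-feedback rule that nudges the query vector toward good results and away from bad ones), and the evaluation step is a hypothetical `is_relevant` callback. The names `refine`, `query_with_feedback`, and the toy `INDEX` are invented for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def search(index, query, k):
    """Return the names of the k stored items most similar to the query."""
    ranked = sorted(index.items(), key=lambda kv: cosine(query, kv[1]), reverse=True)
    return [name for name, _ in ranked[:k]]

def mean(vectors, dim):
    """Component-wise mean; a zero vector if the list is empty."""
    if not vectors:
        return [0.0] * dim
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def refine(query, results, index, is_relevant, beta=0.75, gamma=0.25):
    """ASSUMPTION: Rocchio-style stand-in for the neural network's prediction."""
    good = mean([index[n] for n in results if is_relevant(n)], len(query))
    bad = mean([index[n] for n in results if not is_relevant(n)], len(query))
    return [q + beta * g - gamma * b for q, g, b in zip(query, good, bad)]

def query_with_feedback(index, query, is_relevant, k=2, max_rounds=5):
    """Search, evaluate, refine, repeat. Returns (results, refinements used)."""
    results = search(index, query, k)
    for rounds in range(max_rounds):
        if is_relevant(results[0]):  # evaluation step: is the top hit acceptable?
            return results, rounds
        query = refine(query, results, index, is_relevant)  # feedback step
        results = search(index, query, k)
    return results, max_rounds

# Hypothetical toy embeddings: (left-turn-ness, right-turn-ness, blurriness).
INDEX = {
    "car_left_turn_sharp": [0.9, 0.1, 0.0],
    "car_left_turn_blurry": [0.7, 0.1, 0.6],
    "car_right_turn": [0.1, 0.9, 0.0],
    "pedestrian": [0.0, 0.2, 0.9],
}

# Hypothetical evaluator: only the sharp left-turn frame counts as a good hit.
results, rounds = query_with_feedback(
    INDEX, [0.6, 0.2, 0.5], lambda name: name == "car_left_turn_sharp"
)
print(results, rounds)
```

With these toy numbers, one refinement is enough to push the sharp frame above the blurry one. A learned model would presumably replace `refine` with a network trained to predict the better query, which is the part the patent actually claims.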

This is architecturally similar to the iterative retrieval methods used in modern retrieval-augmented generation (RAG) systems, where AI models look up external data before answering. Here, though, it is framed as a general database-querying framework, not one limited to language models.

What this means for AI-driven search and robotics data pipelines

For Nvidia, whose hardware powers massive AI training pipelines and robotics systems, fast and accurate data retrieval is foundational. Training a self-driving model or a robotic manipulation system means querying millions of labeled sensor frames. A query system that self-corrects could dramatically cut the manual curation work that slows those pipelines down.

More broadly, this kind of iterative neural search has real implications for any enterprise database where the gap between what you ask and what you actually need is wide — think medical imaging archives, legal document retrieval, or e-commerce product search. If Nvidia builds this into its AI infrastructure stack, it could become a quiet but meaningful efficiency multiplier.

This is a solid infrastructure patent that solves a real, unglamorous problem: databases return imperfect results, and fixing that usually requires human effort. Nvidia's framing of this as a feedback-loop neural architecture is smart and aligns well with its push into AI-driven data pipelines for robotics and simulation. It's not flashy, but it's the kind of foundational piece that shows up inside bigger systems before anyone notices.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.