Microsoft Patents an Automatic Quality Filter for Medical Imaging AI

Garbage in, garbage out — and in medical AI, garbage can mean a model that misses tumors. Microsoft has patented a system that automatically identifies and quarantines low-quality or anomalous medical scans before they ever touch a training pipeline.

How Microsoft's system spots a 'bad' MRI before it causes damage

Imagine a hospital sends 10,000 MRI scans to train an AI diagnostic model. Most scans are clean, but a few are blurry, mislabeled, or just plain weird — a patient moved mid-scan, or the wrong protocol was used. If those bad scans sneak into training, the whole model gets a little worse. Multiply that across dozens of hospitals and you have a real problem.

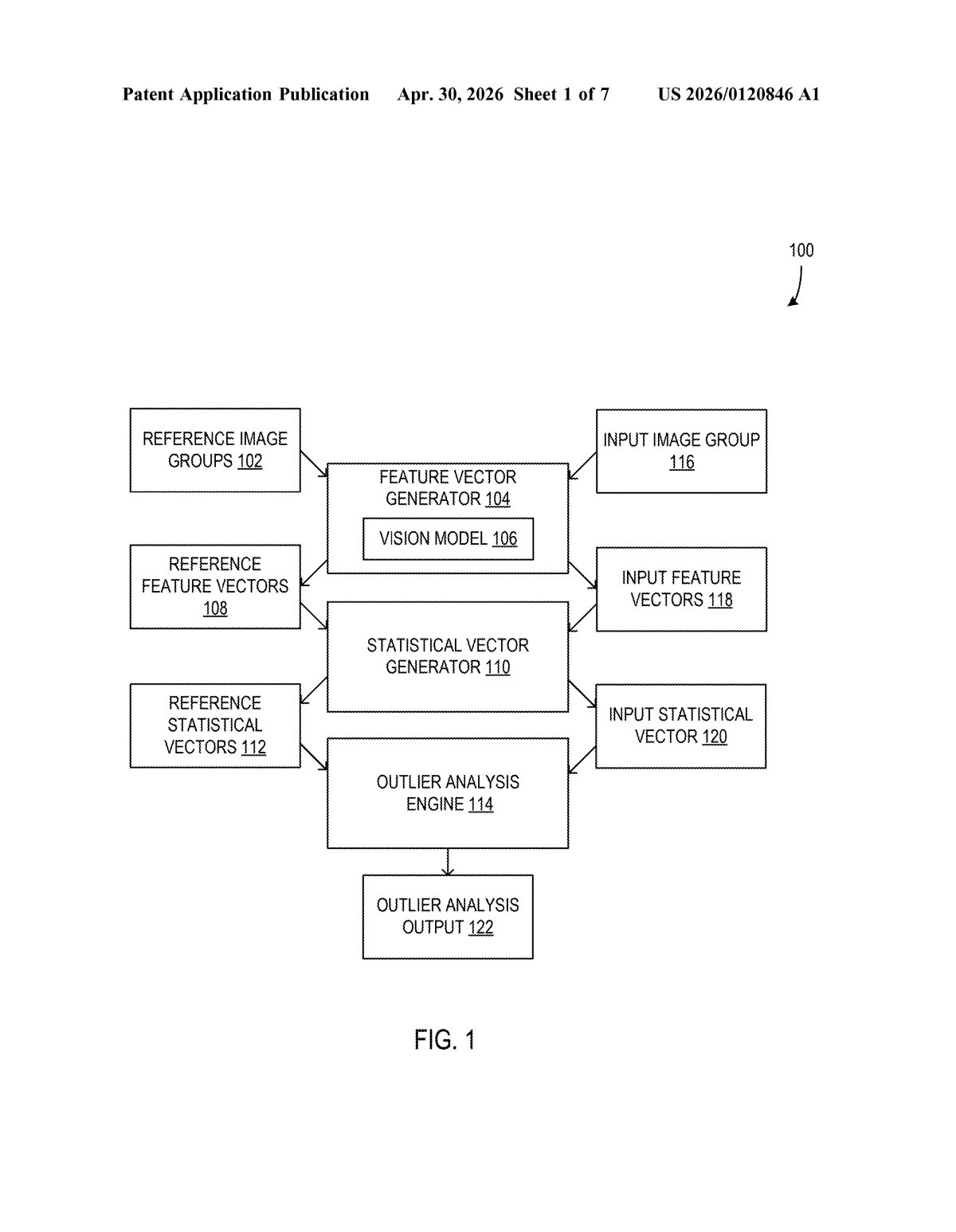

Microsoft's patent describes a system that acts like a quality control inspector before any AI training begins. It converts each scan into a compact numerical fingerprint (a feature vector), then compares those fingerprints against a reference library of known-good scans. If a new scan's fingerprint looks statistically out of place — an outlier — the system flags it and automatically removes it from the dataset.

The key idea is that this all happens without a human radiologist reviewing each scan manually. You get automatic, scalable quality control that keeps AI training data clean.

How the outlier analysis pipeline flags a suspicious scan

The system operates on medical imaging studies — collections of images from a single patient exam, like a multi-slice CT or a series of MRI frames — rather than on individual images.

Here's how the pipeline works:

- Feature extraction: Each image in a study is run through a model that produces a feature vector — essentially a high-dimensional numerical summary of what the image looks like (texture, intensity distribution, structural patterns).

- Statistical aggregation: The per-image feature vectors for a study are combined into a single statistical vector for that study (likely via mean, variance, or similar summary statistics). This gives you one compact representation of the entire exam.

- Outlier scoring: That study-level statistical vector is compared against a library of reference statistical vectors derived from known-good studies of the same imaging category (e.g., chest CTs, brain MRIs). A standard outlier-detection measure (Mahalanobis distance is one plausible choice) determines how far the new study falls from the distribution of reference studies.

- Automated action: If the study is flagged as an outlier, it is automatically excluded from the target dataset before downstream analysis or model training runs.
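The aggregation and scoring steps above can be sketched in a few lines of Python. This is a minimal illustration, not the patent's method: the function names (`aggregate_study`, `outlier_score`, `is_outlier`), the mean-plus-variance summary, and the threshold value are all assumptions. To stay within the standard library, the score is a standardized Euclidean distance, which is Mahalanobis distance under the simplifying assumption of a diagonal covariance matrix.

```python
import math
from statistics import mean, pvariance
from typing import List

Vector = List[float]


def aggregate_study(image_features: List[Vector]) -> Vector:
    """Collapse per-image feature vectors into one study-level vector.

    Assumption: the summary is the per-dimension mean followed by the
    per-dimension population variance. The patent may use other statistics.
    """
    dims = list(zip(*image_features))  # transpose: one tuple per feature dimension
    return [mean(d) for d in dims] + [pvariance(d) for d in dims]


def outlier_score(study_vec: Vector, reference_vecs: List[Vector]) -> float:
    """Standardized Euclidean distance from the reference mean.

    Each squared deviation is divided by that dimension's reference variance,
    so dimensions with naturally wide spread do not dominate the score.
    """
    eps = 1e-9  # avoids division by zero when a reference dimension is constant
    total = 0.0
    for x, ref_dim in zip(study_vec, zip(*reference_vecs)):
        mu = mean(ref_dim)
        var = pvariance(ref_dim) + eps
        total += (x - mu) ** 2 / var
    return math.sqrt(total)


def is_outlier(study_vec: Vector, reference_vecs: List[Vector], threshold: float) -> bool:
    """Flag the study when its score exceeds a caller-chosen (hypothetical) threshold."""
    return outlier_score(study_vec, reference_vecs) > threshold


# Toy usage: two images with two features each become one 4-value study vector.
study_vector = aggregate_study([[1.0, 2.0], [3.0, 6.0]])

# Toy reference library of already-aggregated, known-good study vectors.
reference_library = [[1.0, 10.0], [2.0, 12.0], [3.0, 14.0]]
flagged = is_outlier([10.0, 12.0], reference_library, threshold=3.0)
```

A real system would need a threshold calibrated per imaging category, since typical scores grow with the number of feature dimensions.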

The system described in the patent is category-aware: it compares a study only against references from the same imaging category, so a brain MRI isn't flagged just because it looks different from chest X-rays.
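One way to picture the category-aware behavior is a reference library keyed by imaging category, with each study scored only against its own category's entries. The sketch below is illustrative only: the `Study` type, the category labels, the `filter_dataset` function, the toy distance score, and the decision to set aside studies with no matching reference library are all assumptions, not details taken from the patent.

```python
import math
from typing import Callable, Dict, List, NamedTuple, Tuple

Vector = List[float]


class Study(NamedTuple):
    study_id: str
    category: str        # hypothetical label, e.g. "brain_mri" or "chest_ct"
    stat_vector: Vector  # study-level statistical vector


def distance_from_mean(vec: Vector, refs: List[Vector]) -> float:
    """Toy score: Euclidean distance from the per-dimension reference mean."""
    centroid = [sum(dim) / len(dim) for dim in zip(*refs)]
    return math.sqrt(sum((x - c) ** 2 for x, c in zip(vec, centroid)))


def filter_dataset(
    studies: List[Study],
    references: Dict[str, List[Vector]],
    threshold: float,
    score: Callable[[Vector, List[Vector]], float] = distance_from_mean,
) -> Tuple[List[Study], List[Study], List[Study]]:
    """Return (kept, excluded, unscored), scoring each study within its own category."""
    kept: List[Study] = []
    excluded: List[Study] = []
    unscored: List[Study] = []
    for study in studies:
        refs = references.get(study.category)
        if not refs:
            # Assumed policy: no reference library means no automatic decision.
            unscored.append(study)
        elif score(study.stat_vector, refs) > threshold:
            excluded.append(study)
        else:
            kept.append(study)
    return kept, excluded, unscored


# Toy data: the same vector is normal for chest CTs but an outlier for brain MRIs.
references = {
    "brain_mri": [[0.0, 0.0], [2.0, 2.0]],
    "chest_ct": [[100.0, 100.0], [102.0, 102.0]],
}
studies = [
    Study("a", "brain_mri", [1.0, 1.0]),
    Study("b", "brain_mri", [101.0, 101.0]),
    Study("c", "chest_ct", [101.0, 101.0]),
    Study("d", "knee_xray", [0.0, 0.0]),
]
kept, excluded, unscored = filter_dataset(studies, references, threshold=10.0)
```

In this toy data, studies "b" and "c" carry identical vectors, yet only "b" is excluded because it is judged against brain MRI references rather than chest CT ones.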

What this means for AI trained on hospital imaging data

Medical AI is only as good as the data it learns from, and curating that data at scale is one of the biggest unsexy problems in the field. Right now, bad scans are either caught by manual review, which is expensive, or not caught at all, which is dangerous.

Microsoft has invested heavily in healthcare cloud and clinical AI products (Azure Health Data Services, Nuance DAX). A system like this could slot directly into a data ingestion pipeline, silently cleaning datasets before they reach any training job. For you as a patient, cleaner training data means diagnostic AI that's less likely to have learned bad habits from corrupted inputs, which is a genuine patient-safety argument, not just an engineering nicety.

This is unglamorous infrastructure work aimed at a real problem in medical AI. It won't make headlines, but if it ships into Azure's health data pipelines, it would quietly raise the floor on every medical AI model trained there. Worth tracking for anyone following Microsoft's healthcare cloud strategy.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.