Samsung Patents a Self-Calibrating Radar Receiver That Fixes Its Own Signal Distortion

Radar chips are only as accurate as their analog circuitry — and that circuitry is never perfect. Samsung's new patent describes a radar transceiver that quietly measures its own imperfections and corrects for them, without ever needing to be plugged into external test equipment.

What Samsung's built-in radar self-calibration actually does

Imagine a microphone that automatically figures out it's slightly off-tune and adjusts itself before every recording session. Samsung is trying to do something similar for radar.

Many radar receivers split the incoming signal into two channels, called I and Q, that are supposed to be perfectly matched in amplitude and exactly 90 degrees apart in phase. In the real world, tiny manufacturing differences mean they never quite are. Those mismatches, plus small constant voltage errors called DC offsets, blur the radar's picture of the world. Normally you'd fix this on a factory test bench with specialized equipment.

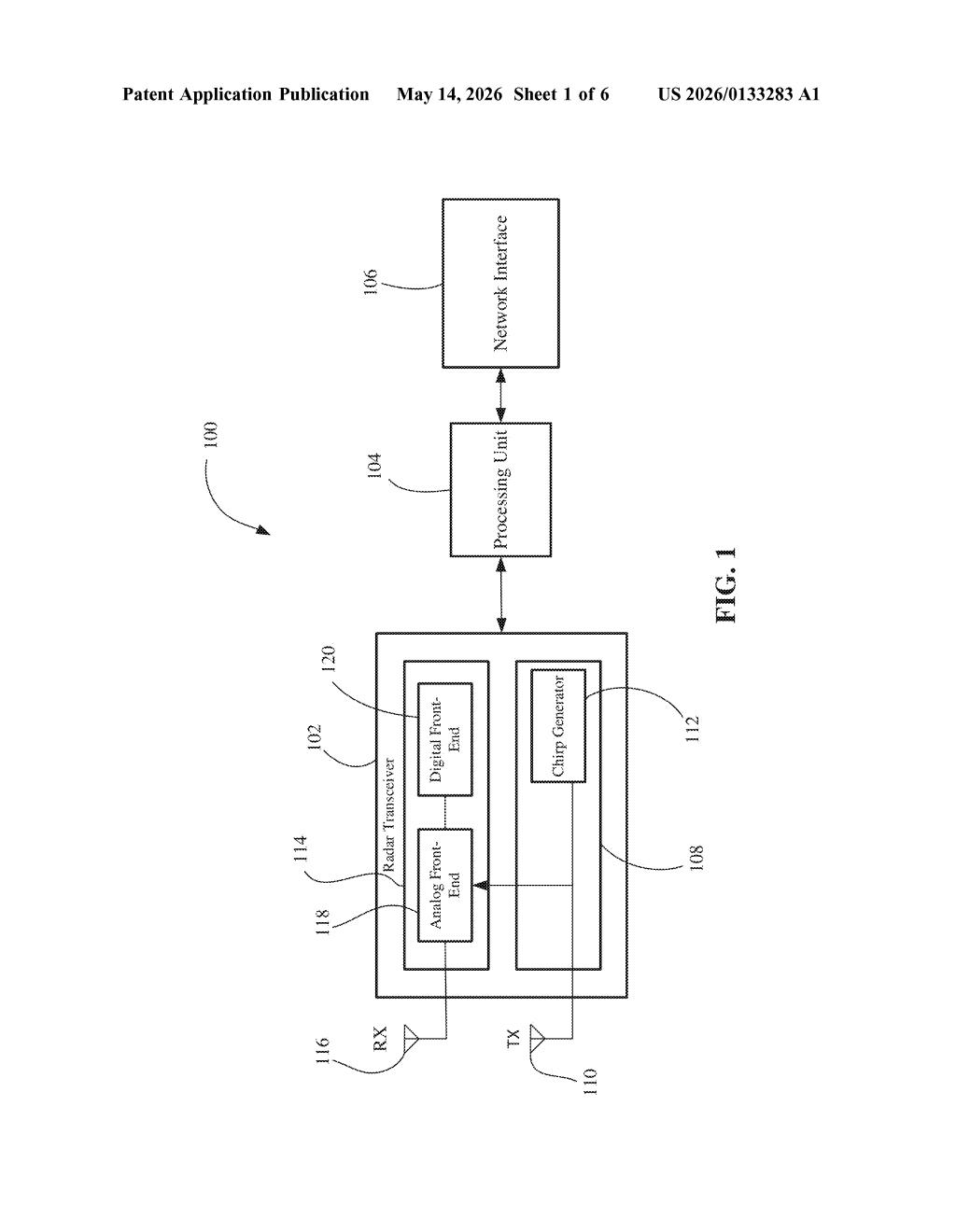

Samsung's approach skips the test bench entirely. The radar chip sends a known test signal through its own internal circuitry, measures the distortion it sees, and works out how much correction to apply, all on its own. Those corrections then stay active when the radar is doing its real job, like detecting objects around a car or a phone.

How the loopback chirp estimates and corrects I/Q errors

The patent describes a quadrature receiver (the part of a radar that splits incoming signals into two channels, I and Q, to capture both amplitude and phase) that can self-diagnose and self-correct two classic problems: I/Q mismatch and DC offsets.
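To make those two problems concrete, here is a minimal Python sketch of a common textbook impairment model. It is not taken from the patent. It assumes the I channel is ideal, the Q channel has a gain error `gain` and a phase error `phase_err`, and a constant offset `dc` rides on both channels. The function names, the 256-sample capture, and the 5 percent, 3 degree, and offset values are all made up for illustration.

```python
import cmath
import math

def impaired_tone(n_samples, cycles, gain=1.0, phase_err=0.0, dc=0j):
    """Complex baseband tone with Q-channel gain/phase error and a DC offset.

    Assumed model: I = cos(theta) + dc.real,
                   Q = gain * sin(theta + phase_err) + dc.imag.
    """
    out = []
    for n in range(n_samples):
        theta = 2 * math.pi * cycles * n / n_samples
        i = math.cos(theta) + dc.real
        q = gain * math.sin(theta + phase_err) + dc.imag
        out.append(complex(i, q))
    return out

def dft_bin(x, k):
    """One DFT bin, normalized by length; a negative k selects the mirror side."""
    n_samples = len(x)
    return sum(v * cmath.exp(-2j * math.pi * k * n / n_samples)
               for n, v in enumerate(x)) / n_samples

# Illustrative impairments: 5% gain error, 3 degree phase error, small DC offset.
x = impaired_tone(256, 20, gain=1.05, phase_err=math.radians(3), dc=0.02 - 0.01j)

beat = dft_bin(x, 20)     # the wanted tone
image = dft_bin(x, -20)   # mirror tone that exists only because I and Q mismatch
dc = dft_bin(x, 0)        # offset shows up at zero frequency

# Image rejection ratio: how far the mirror tone sits below the wanted tone.
irr_db = 20 * math.log10(abs(beat) / abs(image))
```

With these invented numbers the image lands roughly 29 dB below the beat tone, while a perfectly matched receiver (the default arguments) produces no image at all. The tone is placed on an exact DFT bin to keep the arithmetic clean; a real capture would need windowing or a finer frequency estimate.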

Here's the core mechanism:

- The radar transmitter sends a chirp signal (a short tone that sweeps across frequencies — the standard waveform in modern FMCW radar) back into its own receiver via an internal loopback path.

- The digital front-end then measures the beat frequency signal (the difference tone that appears when the received chirp mixes with the transmitted one), its image (a mirror artifact caused by I/Q imbalance), and any DC offset riding on top.

- From those three measurements, the system computes how much amplitude correction, phase correction, and DC subtraction to apply.
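The three steps above can be sketched in Python. This is an illustration under a simple textbook model (ideal I channel, Q channel with gain and phase error, additive DC), not the patent's actual algorithm. The names `estimate_corrections` and `apply_corrections`, the synthetic capture, and the impairment values are all hypothetical.

```python
import cmath
import math

def dft_bin(x, k):
    """One DFT bin, normalized by length; a negative k selects the image side."""
    n_samples = len(x)
    return sum(v * cmath.exp(-2j * math.pi * k * n / n_samples)
               for n, v in enumerate(x)) / n_samples

def estimate_corrections(x, k):
    """Estimate (gain, phase_err, dc) from the beat, image, and DC bins."""
    beat = dft_bin(x, k)
    image = dft_bin(x, -k)
    dc = dft_bin(x, 0)
    # Under the assumed model, conj(image) / beat depends only on the mismatch.
    rho = image.conjugate() / beat
    w = (1 - rho) / (1 + rho)   # equals gain * exp(1j * phase_err)
    return abs(w), cmath.phase(w), dc

def apply_corrections(x, gain, phase_err, dc):
    """Subtract DC, then rescale and de-skew the Q channel."""
    out = []
    for v in x:
        v = v - dc
        i = v.real
        q = v.imag / (gain * math.cos(phase_err)) - i * math.tan(phase_err)
        out.append(complex(i, q))
    return out

# Synthetic loopback capture with made-up impairments (assumptions).
N, K = 256, 20
TRUE_GAIN, TRUE_PHASE, TRUE_DC = 1.05, math.radians(3), 0.02 - 0.01j
capture = []
for n in range(N):
    theta = 2 * math.pi * K * n / N
    capture.append(complex(math.cos(theta) + TRUE_DC.real,
                           TRUE_GAIN * math.sin(theta + TRUE_PHASE) + TRUE_DC.imag))

gain, phase_err, dc = estimate_corrections(capture, K)
fixed = apply_corrections(capture, gain, phase_err, dc)
residual_image = abs(dft_bin(fixed, -K))
```

In this noise-free toy case the estimates match the injected impairments and the image tone vanishes after correction. Real hardware adds noise, spectral leakage, and frequency-dependent mismatch, which is presumably where the patent's more detailed methods come in.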

The patent covers both multi-iteration approaches (refine the estimate over several passes) and single-iteration approaches (estimate everything from one capture). Once the corrections are computed, they are built into the signal processing pipeline and applied continuously during normal operation, when the radar is scanning for real targets.
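One way to picture the difference between the two styles is a feedback loop with a step size. The Python sketch below is a generic damped-update scheme, assumed for illustration and not drawn from the patent's claims: each pass applies the current correction to a fresh loopback capture, measures what mismatch is left, and nudges the estimate by a fraction `mu`. Setting `mu` to 1 with a single pass gives the one-shot case. All names, the noise level `sigma`, and the impairment values are hypothetical.

```python
import cmath
import math
import random

def dft_bin(x, k):
    """One DFT bin, normalized by length; a negative k selects the image side."""
    n = len(x)
    return sum(v * cmath.exp(-2j * math.pi * k * i / n) for i, v in enumerate(x)) / n

def loopback_capture(rng, w_true, dc_true, sigma, n=256, k=20):
    """Synthetic capture: I = cos, Q = Re(w)*sin + Im(w)*cos, plus DC and noise."""
    out = []
    for i in range(n):
        th = 2 * math.pi * k * i / n
        i_s = math.cos(th) + dc_true.real + rng.gauss(0, sigma)
        q_s = (w_true.real * math.sin(th) + w_true.imag * math.cos(th)
               + dc_true.imag + rng.gauss(0, sigma))
        out.append(complex(i_s, q_s))
    return out

def correct(x, w_hat, dc_hat):
    """Subtract the DC estimate, then undo the estimated Q-channel error."""
    out = []
    for v in x:
        v = v - dc_hat
        out.append(complex(v.real, (v.imag - w_hat.imag * v.real) / w_hat.real))
    return out

def calibrate(passes, mu, rng, w_true, dc_true, sigma, k=20):
    """Refine (w_hat, dc_hat) over several loopback passes with step size mu."""
    w_hat, dc_hat = 1 + 0j, 0j   # start from "no correction"
    for _ in range(passes):
        x = loopback_capture(rng, w_true, dc_true, sigma, k=k)
        y = correct(x, w_hat, dc_hat)
        # Residual mismatch still visible after the current correction.
        rho = dft_bin(y, -k).conjugate() / dft_bin(y, k)
        w_res = (1 - rho) / (1 + rho)
        p_hat, q_hat = w_hat.real, w_hat.imag
        w_hat = complex(p_hat * (1 + mu * (w_res.real - 1)),
                        q_hat + mu * w_res.imag * p_hat)
        dc_hat += mu * (dft_bin(x, 0) - dc_hat)
    return w_hat, dc_hat

W_TRUE = cmath.rect(1.05, math.radians(3))   # gain * exp(1j * phase error)
DC_TRUE = 0.02 - 0.01j

one_shot = calibrate(1, 1.0, random.Random(0), W_TRUE, DC_TRUE, sigma=0.0)
multi = calibrate(20, 0.5, random.Random(0), W_TRUE, DC_TRUE, sigma=0.01)
```

The trade-off in this toy version is the usual one: a single pass is fastest but inherits all the noise of that one capture, while a smaller `mu` over more passes averages the noise down at the cost of calibration time.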

What self-calibrating radar means for consumer and automotive devices

For automotive radar and millimeter-wave sensing in consumer devices, calibration accuracy directly affects how well the system distinguishes real objects from ghost echoes. Right now, factory calibration is a time-consuming step that adds cost, and its results go stale as hardware ages or temperature changes. A chip that recalibrates itself on demand, or even periodically in the field, sidesteps both problems.

This also matters for miniaturization. As radar moves into phones, wearables, and in-cabin vehicle sensors, there's no room (or budget) for external calibration rigs. Samsung's approach embeds the calibration logic entirely in the digital signal processing layer, which means it can live on the same chip as the radar front-end with minimal extra silicon.

This is solid, unglamorous engineering work solving a real manufacturing pain point in radar design. It's not a moonshot — I/Q calibration is a well-studied problem — but Samsung's angle of doing it fully in-situ, without external equipment, and covering both multi-pass and single-pass methods in one patent, suggests this is headed toward a production chip rather than a research exercise. Worth tracking if you follow automotive or indoor sensing radar.


Editorial commentary on a publicly published patent application. Not legal advice.