Intel Patents a Way to Keep Robots from Drifting Off Course

Even the best robot sensors drift. Intel's new patent describes a way to fuse two sensors: one estimates the robot's position, and the other supplies an error signal that is weighted and used to correct that estimate, so the robot keeps a more reliable fix on where it actually is.

How Intel's robot positioning correction actually works

Imagine a warehouse robot that thinks it's lined up perfectly with a shelf, but it's actually a few centimeters off. That gap between where the robot thinks it is and where it actually is can cause missed picks, collisions, or worse. This is called position error, and it's one of the trickiest ongoing problems in robotics.

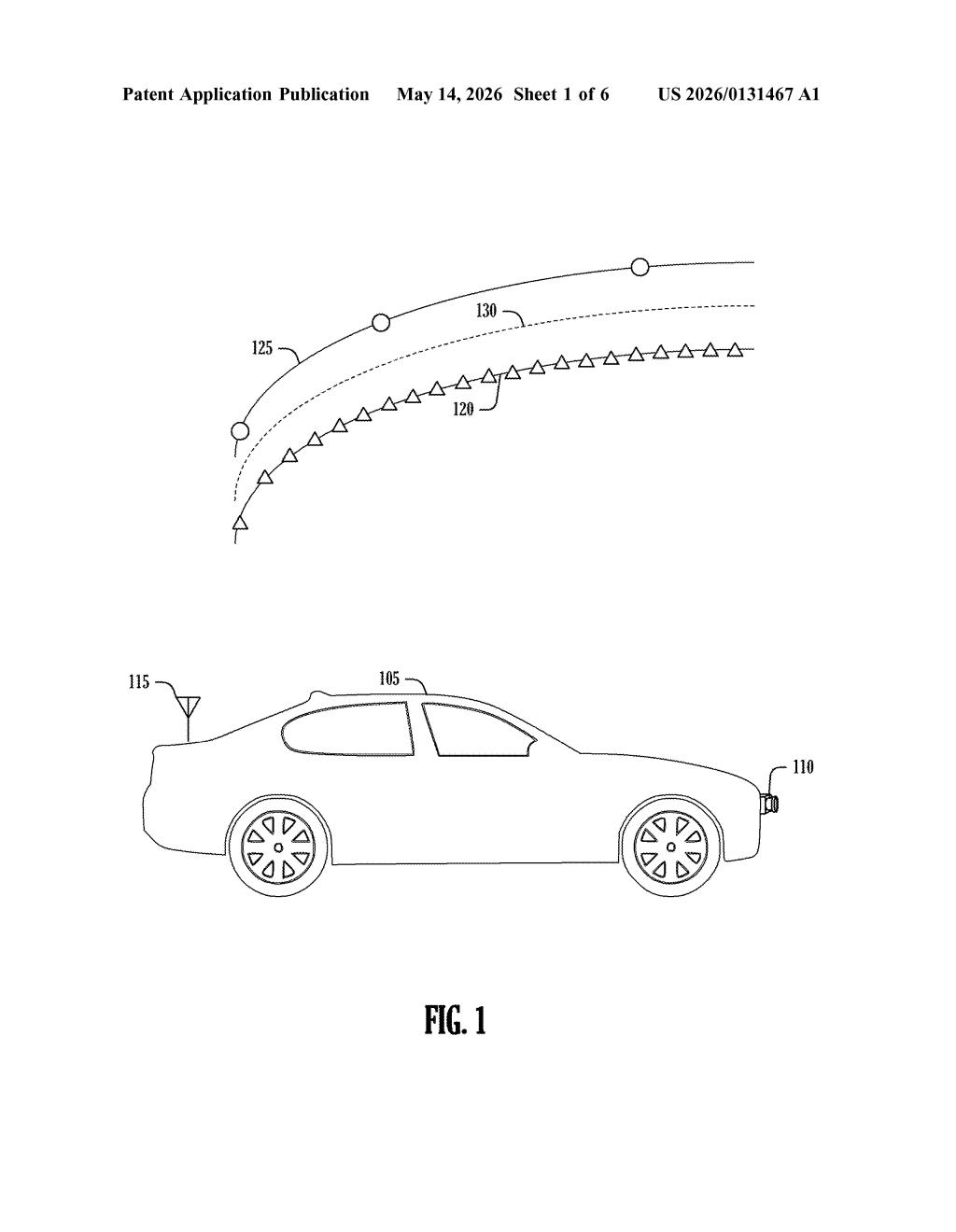

Intel's patent describes a system that uses two different sensors working together. The first sensor builds a picture of the robot's environment to establish a starting position. The second sensor contributes an error value — a measurement of how far off things might be. The system then assigns a weight to that error (basically deciding how much to trust it), combines everything, and produces a corrected position the robot can actually act on.

Think of it like a GPS that also listens to your car's wheel odometer — when one source gets noisy, the other helps keep you on track. Intel's approach formalizes that kind of cross-checking into a structured, trainable pipeline.

How Intel weights sensor errors into a corrected pose

The patent outlines a pipeline with two key inputs and one corrected output. A first sensor (likely a camera, LiDAR, or depth sensor) generates a dense 3D map of the robot's surroundings, which an artificial neural network processes to estimate the robot's current pose (position plus orientation in space).

A second sensor then contributes an error value: a signal indicating how far the first sensor's position estimate may have drifted. Crucially, the system doesn't take this error value at face value. It selects a weight for it, scaling the error's influence before combining it with the base position estimate.
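To make the weighting step concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the method claimed in the patent: the `Pose2D` type, the `correct_pose` function, the 2D pose, the 0-to-1 weight range, and the sign convention (error = estimated minus true) are all hypothetical choices made for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    """Hypothetical planar pose: position in meters, heading in radians."""
    x: float
    y: float
    theta: float


def correct_pose(base: Pose2D, error: Pose2D, weight: float) -> Pose2D:
    """Subtract a weighted error from the base pose estimate.

    Assumes error = (estimated - true), so subtracting it moves the
    estimate toward the true pose. weight = 0 ignores the second sensor;
    weight = 1 trusts its error value completely.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be in [0, 1]")
    theta = base.theta - weight * error.theta
    # Wrap the heading back into (-pi, pi].
    theta = math.atan2(math.sin(theta), math.cos(theta))
    return Pose2D(
        x=base.x - weight * error.x,
        y=base.y - weight * error.y,
        theta=theta,
    )


base = Pose2D(x=2.0, y=1.0, theta=0.0)      # from the first sensor
error = Pose2D(x=0.5, y=-0.25, theta=0.0)   # from the second sensor
print(correct_pose(base, error, weight=0.5))
# Pose2D(x=1.75, y=1.125, theta=0.0)
```

With a weight of 0.5, only half of the reported error is applied, which is the "how much to trust it" idea in its simplest form.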

The result is a corrected position — a fused, more reliable location estimate that the robot then uses as the basis for whatever it needs to do next: picking an object, navigating a path, avoiding an obstacle. The mention of a pose trajectory in the abstract suggests the system tracks this correction over time, not just at a single moment.
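If the correction is tracked over time, as the trajectory language suggests, one simple reading is that each time step gets its own error value and its own weight. The sketch below assumes exactly that; `correct_trajectory` and its tuple-based inputs are hypothetical names for illustration, and the patent may structure this differently.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in meters


def correct_trajectory(
    base_poses: List[Point],
    errors: List[Point],
    weights: List[float],
) -> List[Point]:
    """Apply a per-step weighted error correction along a trajectory.

    Assumes each error is (estimated - true) for the same time step,
    so the weighted error is subtracted from the base estimate.
    """
    if not (len(base_poses) == len(errors) == len(weights)):
        raise ValueError("inputs must have the same length")
    return [
        (bx - w * ex, by - w * ey)
        for (bx, by), (ex, ey), w in zip(base_poses, errors, weights)
    ]


# Drift grows along x as the robot moves; the weight varies per step.
base_poses = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
errors = [(0.0, 0.0), (0.5, 0.0), (1.0, 0.0)]
weights = [1.0, 0.5, 0.5]
print(correct_trajectory(base_poses, errors, weights))
# [(0.0, 0.0), (0.75, 0.0), (1.5, 0.0)]
```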

The neural network component is key: rather than hand-tuning how much to trust each sensor, the weighting can be learned from data — making the correction adaptive to different environments and sensor conditions.
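As a rough picture of what "learned from data" can mean, the sketch below fits a single scalar weight by least squares from logged examples with known ground truth. This is a deliberately simple stand-in, not a neural network and not the training procedure from the patent, which the article does not describe. The function `fit_weight`, the 1D positions, and the sample numbers are all assumptions for illustration.

```python
from typing import Sequence


def fit_weight(
    base: Sequence[float],
    error: Sequence[float],
    truth: Sequence[float],
) -> float:
    """Fit the scalar weight w that minimizes sum((base - w*error - truth)^2).

    Setting the derivative with respect to w to zero gives the closed form
    w = sum(error * (base - truth)) / sum(error^2).
    """
    num = 0.0
    den = 0.0
    for b, e, t in zip(base, error, truth):
        num += e * (b - t)
        den += e * e
    if den == 0.0:
        raise ValueError("error values are all zero; weight is undetermined")
    return num / den


# Hypothetical log: the second sensor reports twice the actual drift,
# so the best weight should come out at 0.5.
truth = [1.0, 2.0, 3.0]
base = [1.5, 2.25, 4.0]    # first-sensor estimates (truth plus drift)
error = [1.0, 0.5, 2.0]    # second-sensor error values
print(fit_weight(base, error, truth))
# 0.5
```

A learned model would typically go further and predict a different weight for each situation (lighting, speed, sensor health), but the underlying goal is the same: choose the weight that makes the corrected position match reality most closely on recorded data.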

What this means for industrial and autonomous robots

For industrial robots, autonomous vehicles, and warehouse automation, position accuracy is directly tied to reliability and safety. A robot that can self-correct its location estimate using a second sensor — rather than stopping and re-localizing from scratch — can operate faster and more robustly in messy, real-world environments where sensors get noisy, lighting changes, or reflective surfaces fool cameras.

Intel's angle here is notable: the company has been pushing into robotics and edge AI through its hardware platforms, and a patent like this could underpin software or silicon optimized for on-device sensor fusion. If the neural network weighting runs efficiently on Intel hardware, that's a real differentiator for robot OEMs choosing a compute platform.

This is solid, focused engineering work rather than a splashy concept. Sensor fusion for robot localization is a well-trodden field, but Intel's contribution — using a learned weight to modulate a second sensor's error signal before fusing it with a neural-network-derived pose — is a sensible and practical refinement. It's the kind of patent that quietly ends up in production robotics stacks.

Editorial commentary on a publicly published patent application. Not legal advice.