Adobe Patents a Complexity-Aware System for Smarter AI Inpainting

Adobe is patenting a way for its AI image-editing tools to first measure how visually complex a scene is — then automatically pick the best inpainting model to fill in the gap. It's less 'one model to rule them all' and more 'the right tool for the job.'

What Adobe's complexity-based fill selection actually does

Imagine you're editing a photo and you want to erase an object — maybe a stranger in the background or a power line. You'd brush over it, hit 'fill,' and let the AI figure out what should be there instead. Simple backgrounds, like a clear blue sky, are easy. But a crowded street scene with buildings, signs, and people? That's a much harder problem.

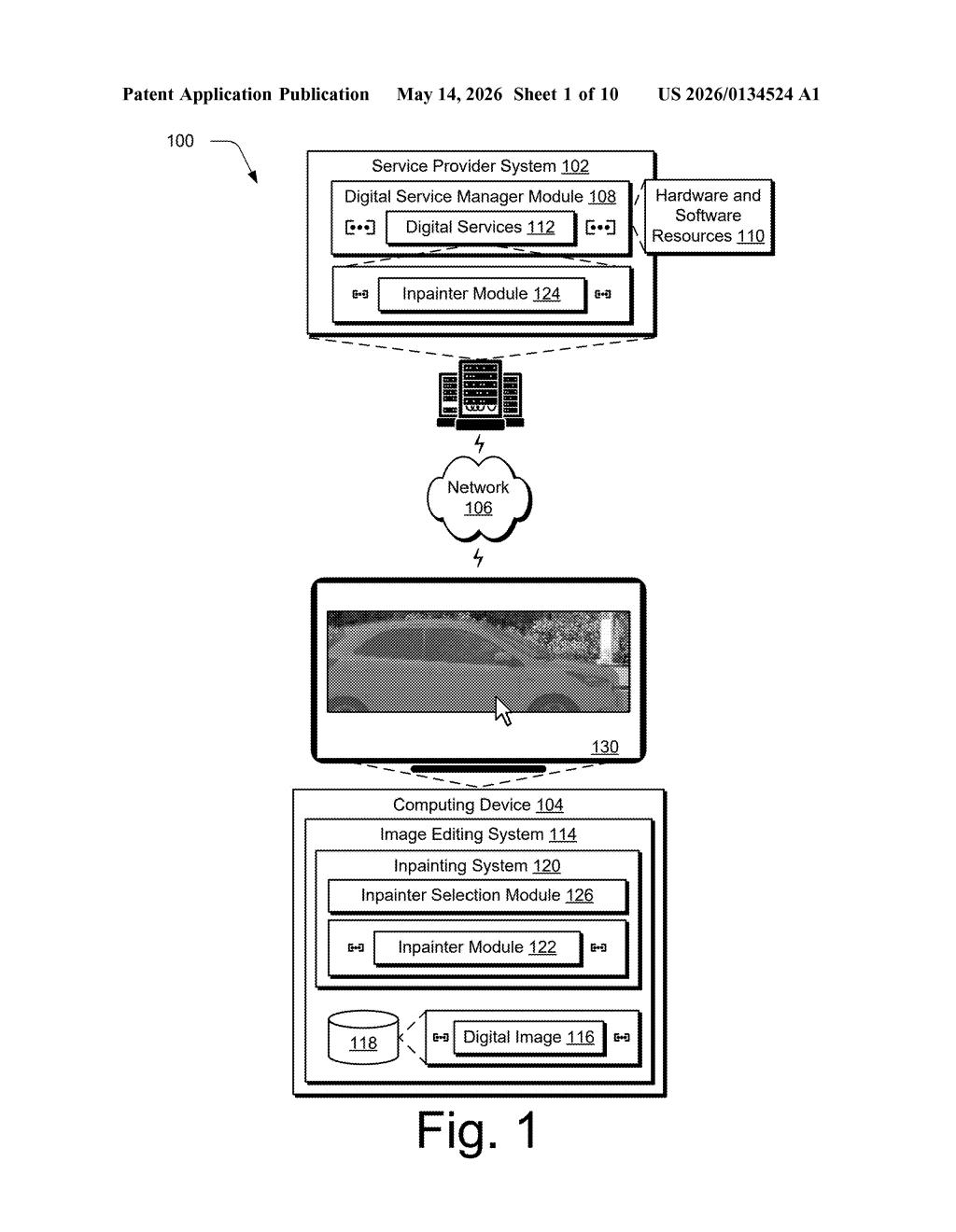

Adobe's patent describes a system that measures how complicated the area you want to fill is before picking which AI model handles it. It uses semantic segmentation, which labels every pixel in your image by what it is (sky, face, car, tree), to calculate a complexity score.
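To make the idea of a complexity score concrete, here is a minimal sketch. The patent does not publish a formula, so the metric below (Shannon entropy of the labels inside the fill region) is an assumption chosen for illustration, and `region_complexity`, `label_map`, and `mask` are hypothetical names.

```python
import math
from collections import Counter

def region_complexity(label_map, mask):
    """Shannon entropy (in bits) of the semantic labels inside the masked region.

    label_map: 2D list of per-pixel labels, e.g. "sky" or "car".
    mask:      2D list of booleans, True where the user brushed.
    Assumed metric for illustration; not taken from the patent.
    """
    labels = [
        label
        for label_row, mask_row in zip(label_map, mask)
        for label, selected in zip(label_row, mask_row)
        if selected
    ]
    if not labels:
        return 0.0
    total = len(labels)
    # One uniform label gives 0 bits; four equally common labels give 2 bits.
    return sum(
        (n / total) * math.log2(total / n) for n in Counter(labels).values()
    )

sky = [["sky", "sky"], ["sky", "sky"]]
street = [["car", "sign"], ["person", "road"]]
brushed = [[True, True], [True, True]]

print(region_complexity(sky, brushed))     # 0.0
print(region_complexity(street, brushed))  # 2.0
```

A clear sky scores zero because every brushed pixel carries the same label, while the toy street scene scores higher because four labels compete for the same patch.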

Depending on that score, the system routes your fill request to the best-suited inpainting module from a pool of options. A simple patch of grass might go to a fast, lightweight model. A tangle of overlapping objects might get handed to a more powerful (and slower) one. You just see the result — the routing happens automatically.
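The routing step can be pictured as a simple threshold lookup. This is a sketch under stated assumptions: the module names, the thresholds, and the `Inpainter` and `select_inpainter` helpers are all invented here, and the patent does not say how its pool is organized.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Inpainter:
    name: str
    max_complexity: float  # hypothetical highest score this module should handle

# Hypothetical pool, from cheapest to most capable.
POOL = [
    Inpainter("fast_patch", 0.5),
    Inpainter("mid_weight", 1.5),
    Inpainter("large_generative", float("inf")),
]

def select_inpainter(score, pool=POOL):
    """Return the cheapest module whose ceiling covers the score."""
    for module in sorted(pool, key=lambda m: m.max_complexity):
        if score <= module.max_complexity:
            return module
    raise ValueError("no module in the pool covers this score")

print(select_inpainter(0.2).name)  # fast_patch
print(select_inpainter(2.0).name)  # large_generative
```

Giving the last module an infinite ceiling means every request lands somewhere, so the user never sees a routing failure.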

How Adobe's system scores complexity and routes to an inpainter

The patent describes a pipeline with three key stages:

- Semantic segmentation: The system first analyzes the full digital image and generates a map where every pixel is labeled by category — sky, human, vehicle, foliage, and so on. Think of it as an X-ray that shows what's in the scene.

- Complexity detection: Using those labels, the system calculates an amount of complexity for the region that needs to be filled. A region containing many different labels, overlapping objects, or fine-grained detail would score higher than a monotone or simple region.

- Inpainter selection: Based on that complexity score, the system picks one or more modules from a pool of inpainter modules — each presumably trained or optimized for different types of scenes — and uses the selected module(s) to generate the fill.
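The three stages above can be sketched end to end. Everything here is a stand-in: `segment` just returns a pre-labeled toy grid instead of running a real segmentation model, the score (distinct labels plus the share of neighboring pixel pairs whose labels differ) is an assumed metric, and the overlapping score ranges in `POOL` are invented to show how "one or more modules" might be selected.

```python
def segment(image):
    # Stage 1 placeholder: a real system would run a segmentation model.
    # The toy "image" is already a grid of labels, so return it unchanged.
    return image

def detect_complexity(labels, mask):
    # Stage 2 stand-in: number of distinct labels in the region, plus the
    # fraction of adjacent masked pixel pairs whose labels differ.
    seen = set()
    pairs = differing = 0
    for y, row in enumerate(labels):
        for x, label in enumerate(row):
            if not mask[y][x]:
                continue
            seen.add(label)
            for ny, nx in ((y, x + 1), (y + 1, x)):  # right and down neighbors
                if ny < len(labels) and nx < len(row) and mask[ny][nx]:
                    pairs += 1
                    differing += labels[ny][nx] != label
    return len(seen) + (differing / pairs if pairs else 0.0)

# Stage 3 pool: (name, lowest score, highest score) for each hypothetical module.
POOL = [
    ("light", 0.0, 2.5),
    ("medium", 2.0, 4.0),
    ("heavy", 3.5, float("inf")),
]

def select_inpainters(score):
    # Ranges overlap on purpose, so a borderline score can pick two modules.
    return [name for name, low, high in POOL if low <= score <= high]

def plan_fill(image, mask):
    labels = segment(image)
    score = detect_complexity(labels, mask)
    return score, select_inpainters(score)

brushed = [[True, True], [True, True]]
print(plan_fill([["sky", "sky"], ["sky", "sky"]], brushed))       # (1.0, ['light'])
print(plan_fill([["sky", "sky"], ["sky", "tree"]], brushed))      # (2.5, ['light', 'medium'])
print(plan_fill([["car", "sign"], ["person", "road"]], brushed))  # (5.0, ['heavy'])
```

How the outputs of several selected modules would be combined is not covered by this sketch.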

The claim is intentionally broad: it doesn't lock in a specific AI architecture or segmentation model. What it protects is the decision logic — the idea of using semantic complexity as the selection criterion when routing fill jobs across multiple inpainting backends. The fill result is then surfaced directly in the editing UI.

What this means for Photoshop's Generative Fill pipeline

For users of tools like Photoshop's Generative Fill, this could mean better results with less manual fiddling. A single general-purpose model has to handle everything from erasing a pimple to reconstructing a demolished building facade. A routing system like this could reduce the 'AI smear' artifacts that appear when a heavyweight model is overkill for a simple patch, or when a lightweight model is hopelessly out of its depth.

For Adobe strategically, this is about managing compute costs and output quality simultaneously. Running a large generative model on every fill request, even trivially simple ones, is expensive. If a rules-based or lighter-weight inpainter could handle, say, 60% of jobs (an illustrative figure, not one from the patent), that would be real infrastructure savings at scale.
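A back-of-the-envelope calculation shows how that 60% figure could translate into savings. All the numbers below are made up for illustration: the heavy model's cost is normalized to 1 unit per request, the light model is assumed to cost a tenth of that, and the segmentation-and-scoring step is assumed to add a small overhead to every request.

```python
def routed_cost(light_share, light_cost, heavy_cost, routing_overhead=0.0):
    """Average cost per request when a share of jobs goes to the light model."""
    return (
        routing_overhead
        + light_share * light_cost
        + (1 - light_share) * heavy_cost
    )

baseline = 1.0  # every request hits the heavy model (normalized cost)
routed = routed_cost(
    light_share=0.60,       # illustrative share from the article
    light_cost=0.10,        # assumed: light model is 10x cheaper
    heavy_cost=baseline,
    routing_overhead=0.05,  # assumed cost of segmenting and scoring
)
savings = 1 - routed / baseline
print(f"average cost: {routed:.2f}, savings: {savings:.0%}")
```

Under these assumptions the average cost drops to roughly half the baseline. The overhead term matters: if scoring the region costs nearly as much as the heavy model itself, the savings shrink or disappear.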

This is a genuinely practical patent, not a moonshot. The core insight — that inpainting difficulty correlates with semantic complexity, and that you should pick your tool accordingly — is obvious in hindsight but valuable to formalize. Adobe is essentially building a smart dispatcher for its AI editing stack, which matters a lot when you're running millions of fill requests a day on Creative Cloud.


Editorial commentary on a publicly published patent application. Not legal advice.