Google Patents Real-Time View Synthesis for Streaming 3D Video

Google has filed a patent application for a system that takes several ordinary 2D camera images and, in real time, reconstructs them into stereoscopic 3D video rendered for exactly where you're sitting. In effect it's a live volumetric video pipeline, and it has to run fast enough to stream.

How Google rebuilds 3D scenes from flat camera images

Imagine watching a live concert through a VR headset. The cameras around the stage are all flat — they each capture a normal 2D image. But for you to feel like you're actually there, the system needs to figure out what the scene looks like from your specific vantage point, including a slightly different image for your left eye versus your right eye. That's the problem Google's patent is trying to solve.

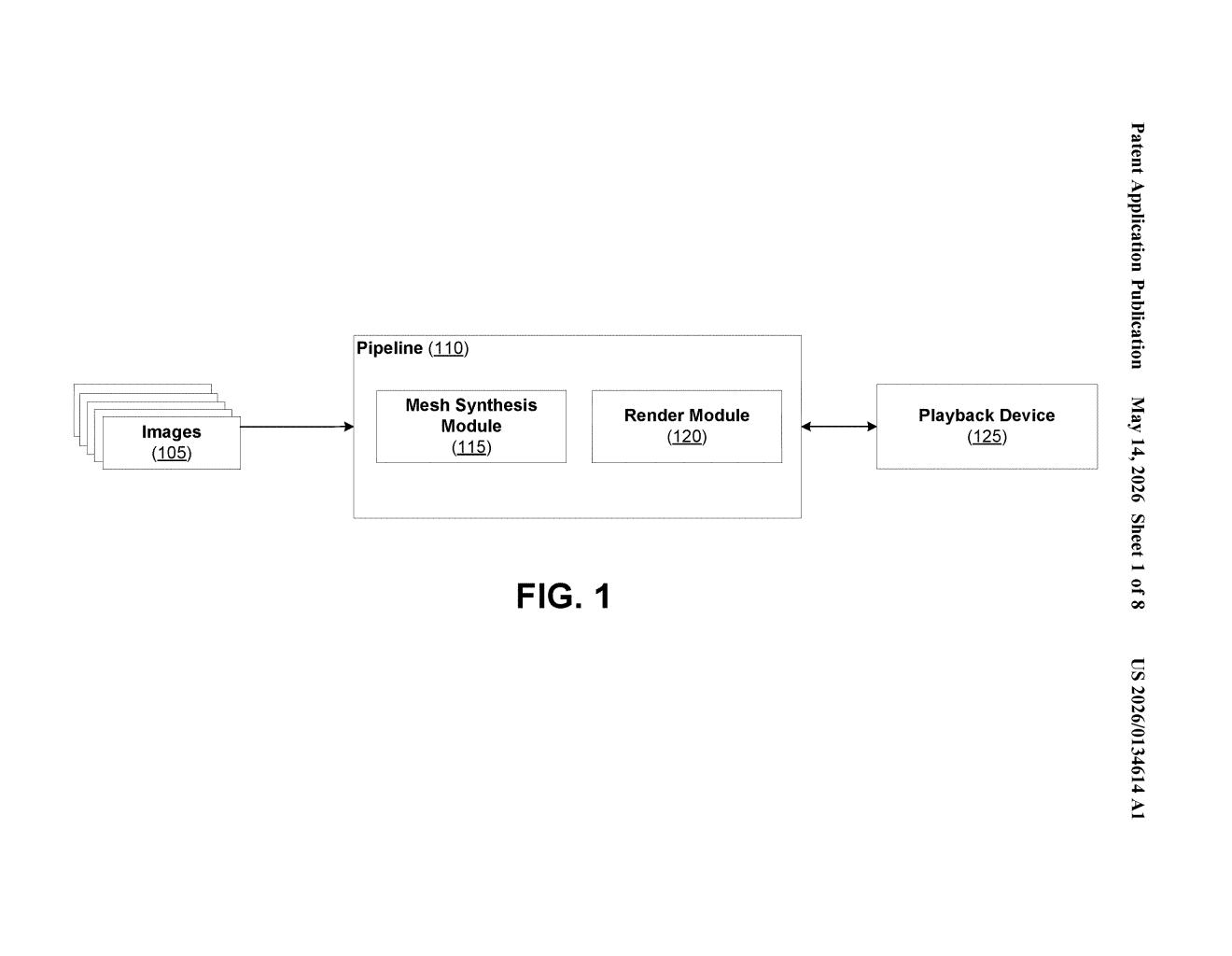

What Google describes is a pipeline that takes those multiple 2D camera images, converts each one into a 3D mesh (think a textured surface map of the scene), blends them together into a single unified mesh, and then renders two separate images from that mesh, one for each eye, matched to your viewing position in real time.
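To make the mesh step concrete, here is a minimal Python sketch under several assumptions the patent summary doesn't state: each camera also supplies a per-pixel depth map (how depth is obtained isn't described), cameras differ only by a translation (no rotation), and "fusion" is plain concatenation. A production system would also merge overlapping surfaces and reconcile seams between cameras. `Mesh`, `depth_to_mesh`, and `fuse_meshes` are hypothetical names for illustration only.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Color = Tuple[int, int, int]

@dataclass
class Mesh:  # hypothetical container, not from the patent
    vertices: List[Vec3] = field(default_factory=list)  # world-space points
    colors: List[Color] = field(default_factory=list)   # one color per vertex
    triangles: List[Tuple[int, int, int]] = field(default_factory=list)  # vertex indices

def depth_to_mesh(depth, image, fx, fy, cx, cy, cam_offset=(0.0, 0.0, 0.0)) -> Mesh:
    """Unproject every pixel through a pinhole camera (assumes a known depth map
    and a translation-only camera pose), then join neighboring pixels into
    two triangles per pixel quad."""
    h, w = len(depth), len(depth[0])
    ox, oy, oz = cam_offset
    mesh = Mesh()
    for v in range(h):
        for u in range(w):
            z = depth[v][u]
            x = (u - cx) * z / fx  # pinhole back-projection
            y = (v - cy) * z / fy
            mesh.vertices.append((x + ox, y + oy, z + oz))  # shift into world space
            mesh.colors.append(image[v][u])
    for v in range(h - 1):
        for u in range(w - 1):
            i = v * w + u  # index of the quad's top-left vertex
            mesh.triangles.append((i, i + 1, i + w))
            mesh.triangles.append((i + 1, i + w + 1, i + w))
    return mesh

def fuse_meshes(meshes: List[Mesh]) -> Mesh:
    """Naive fusion: concatenate geometry and re-index each mesh's triangles."""
    fused = Mesh()
    for m in meshes:
        base = len(fused.vertices)
        fused.vertices.extend(m.vertices)
        fused.colors.extend(m.colors)
        fused.triangles.extend((a + base, b + base, c + base) for a, b, c in m.triangles)
    return fused

if __name__ == "__main__":
    # Two tiny 2x2 "cameras" viewing a flat surface 2 m away, placed 10 cm apart.
    depth = [[2.0, 2.0], [2.0, 2.0]]
    image = [[(255, 0, 0), (255, 0, 0)], [(0, 0, 255), (0, 0, 255)]]
    a = depth_to_mesh(depth, image, fx=1.0, fy=1.0, cx=0.5, cy=0.5)
    b = depth_to_mesh(depth, image, fx=1.0, fy=1.0, cx=0.5, cy=0.5, cam_offset=(0.1, 0.0, 0.0))
    fused = fuse_meshes([a, b])
    print(len(fused.vertices), len(fused.triangles))  # 8 4
```

Concatenation keeps the sketch short but leaves duplicate surfaces wherever cameras overlap; volumetric approaches that average depth from all cameras avoid that at higher compute cost.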

The key word is real time. This isn't a post-production effect you apply after filming. It's meant to work while the video is actively streaming to a playback device, which makes it significantly harder to pull off.
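A quick frame-budget calculation shows why real time is the hard part. The stage timings below are hypothetical placeholders, not figures from the patent, and the check assumes the stages run back to back with no pipelining across frames and no time reserved for network transfer or decoding.

```python
# Hypothetical per-stage timings in milliseconds (illustrative only, not from the patent).
STAGES_MS = {
    "mesh_generation": 4.0,
    "mesh_fusion": 3.0,
    "render_left_eye": 2.5,
    "render_right_eye": 2.5,
}

def frame_budget_ms(fps: float) -> float:
    """Time available per frame if each frame must finish before the next arrives."""
    return 1000.0 / fps

def check_budget(stages_ms: dict, fps: float) -> tuple:
    """Sum stage times sequentially (no pipelining) and compare with the budget."""
    total = sum(stages_ms.values())
    budget = frame_budget_ms(fps)
    return total, budget, total <= budget

for fps in (30, 60, 90):
    total, budget, ok = check_budget(STAGES_MS, fps)
    print(f"{fps} fps: budget {budget:.1f} ms, pipeline {total:.1f} ms, fits={ok}")
# 30 fps: budget 33.3 ms, pipeline 12.0 ms, fits=True
# 60 fps: budget 16.7 ms, pipeline 12.0 ms, fits=True
# 90 fps: budget 11.1 ms, pipeline 12.0 ms, fits=False
```

The same 12 ms of work fits comfortably at 30 fps but misses at 90 fps, a refresh rate common on VR headsets. Overlapping stages across consecutive frames can raise throughput, but each frame still experiences the full end-to-end latency.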

How Google's mesh pipeline generates stereo depth per viewer

The patent describes a multi-stage rendering pipeline built for streaming 3D video. Here's how the stages break down:

- Input: The system receives multiple 2D images that together represent a single frame of 3D video — think of this as a rig of cameras capturing the same moment from different angles.

- Mesh generation: Each 2D image is used to generate a geometric mesh (a 3D surface of connected triangles that captures the scene's depth, with the image's colors mapped onto it as texture). These per-image meshes are then fused into a single synthesized mesh, a unified representation of the scene's geometry.

- Stereo rendering: From that synthesized mesh, the system produces two outputs: a left-eye image with a depth map and a right-eye image with a depth map. The depth map encodes how far each pixel is from the viewer — critical for convincing stereoscopic 3D.

- Viewer-adaptive viewpoint: Crucially, both images are rendered from a viewpoint based on the receiver (the device playing back the stream), meaning the output adjusts to where the viewer is positioned rather than sticking to a fixed camera angle.
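The viewer-adaptive step has to start from the viewer's tracked head position. A minimal sketch, assuming head tracking reports a position plus a yaw angle and using an assumed typical adult interpupillary distance (IPD) of 63 mm, offsets each eye by half the IPD along the head's sideways axis. `eye_positions` and `DEFAULT_IPD_M` are hypothetical names, not an API from the patent.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

DEFAULT_IPD_M = 0.063  # assumed typical adult interpupillary distance, in meters

def eye_positions(head: Vec3, yaw_rad: float, ipd_m: float = DEFAULT_IPD_M) -> Tuple[Vec3, Vec3]:
    """Hypothetical helper: return (left_eye, right_eye) world positions.

    Convention assumed for this sketch: y is the vertical axis, yaw rotates
    about y, and at yaw 0 the viewer's right-hand direction is +x.
    """
    # The head's sideways ("right") axis after rotating by yaw about y.
    right = (math.cos(yaw_rad), 0.0, -math.sin(yaw_rad))
    half = ipd_m / 2.0
    hx, hy, hz = head
    left_eye = (hx - half * right[0], hy - half * right[1], hz - half * right[2])
    right_eye = (hx + half * right[0], hy + half * right[1], hz + half * right[2])
    return left_eye, right_eye

if __name__ == "__main__":
    # Each tracked frame: compute both eye positions and render one view per eye.
    left, right = eye_positions((0.0, 1.6, 0.0), yaw_rad=math.radians(30))
    print(left, right, math.dist(left, right))
```

Because the two eye positions are recomputed every frame, leaning or turning changes both rendered viewpoints, which is what distinguishes this from a fixed-camera stream.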

This last point is what separates it from simple 360-degree video. The pipeline is doing actual novel view synthesis: computing what the scene would look like from a position that no physical camera occupied.
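At its core, novel view synthesis means rendering the fused geometry from a camera position no physical camera occupied. This sketch uses z-buffered point splatting, a deliberately simplified stand-in for full triangle rasterization: each colored vertex is projected through a virtual pinhole camera, and the nearest point per pixel wins, producing both a color image and a depth map. It assumes an unrotated camera (x right, y down, z forward) and stores z-depth along the viewing axis; `render_view` and its parameters are hypothetical.

```python
import math
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Color = Tuple[int, int, int]

def render_view(vertices: List[Vec3], colors: List[Color], eye: Vec3,
                width: int, height: int, focal: float):
    """Hypothetical renderer: z-buffered point splatting from a virtual
    pinhole camera at `eye`. Returns (image, depth_map)."""
    cx, cy = width / 2.0, height / 2.0
    depth = [[math.inf] * width for _ in range(height)]
    image: List[List[Optional[Color]]] = [[None] * width for _ in range(height)]
    ex, ey, ez = eye
    for (x, y, z), color in zip(vertices, colors):
        px, py, pz = x - ex, y - ey, z - ez  # point relative to the virtual camera
        if pz <= 0:  # behind the camera, so not visible
            continue
        u = math.floor(focal * px / pz + cx)  # perspective projection to pixels
        v = math.floor(focal * py / pz + cy)
        if 0 <= u < width and 0 <= v < height and pz < depth[v][u]:
            depth[v][u] = pz  # z-buffer test: keep the closest surface
            image[v][u] = color
    return image, depth

if __name__ == "__main__":
    red, blue = (255, 0, 0), (0, 0, 255)
    pts = [(0.0, 0.0, 2.0), (0.0, 0.0, 1.0)]  # far blue point, near red point on one ray
    cols = [blue, red]
    img, dep = render_view(pts, cols, eye=(0.0, 0.0, 0.0), width=3, height=3, focal=2.0)
    print(img[1][1], dep[1][1])  # (255, 0, 0) 1.0  (near point hides the far one)
    img, dep = render_view(pts, cols, eye=(0.5, 0.0, 0.0), width=3, height=3, focal=2.0)
    print(img[1][0], img[1][1])  # (255, 0, 0) (0, 0, 255)  (parallax separates them)
```

Calling `render_view` once with a left-eye position and once with a right-eye position yields the image-plus-depth-map pair the pipeline needs; the second demo call shows how moving the viewpoint reveals geometry that a single fixed camera would keep hidden.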

What this means for live 3D streaming and VR headsets

For VR headsets and 3D displays, the holy grail is live content that responds to your head position. Standard 360 video only tracks head rotation: turn your head and the view pans, but lean or step sideways and nothing shifts with you, so the illusion breaks. Google's pipeline, if it works at streaming latency, would make live sports, concerts, or telepresence feel genuinely volumetric rather than like a flat sphere wrapped around you.

Google has been publicly investing in immersive video through projects like Immersive Stream for XR and volumetric capture research. This patent fits squarely into that thread. It also lands at an interesting moment: Apple Vision Pro has raised consumer expectations for spatial video quality, and the race to deliver live volumetric content — not just pre-rendered — is very much on.

This is a genuinely interesting patent because the hard part of volumetric video has always been real-time delivery — anyone can render beautiful 3D scenes offline. Google is staking out IP on the streaming pipeline specifically, which is the piece that has to work at scale for live events. Whether the mesh-fusion approach holds up under network jitter and compute constraints is a real engineering question, but the patent direction is clearly aimed at a problem worth solving.


Editorial commentary on a publicly published patent application. Not legal advice.