X Development Patents an AI Agent That Automatically Evaluates Scientific Experiments

Imagine having an AI assistant that doesn't just run your experiments: it reads the hypothesis, evaluates the results, and tells you what it found. That's the system X Development describes in a published patent application.

What X Development's AI experiment evaluator actually does

Picture a lab notebook that reads itself. You write down a hypothesis — say, "this protein folds differently at higher temperatures" — and instead of waiting for a human scientist to crunch the data, an AI agent reviews the experiment, checks the results, and writes up its own observations.

That's the core idea behind this patent application from X Development (Alphabet's moonshot lab). It describes a platform where experiments are stored as structured records, each one tagged with a hypothesis and a definition of how the experiment was run. An AI-based agent then evaluates those records and generates notes about whether the hypothesis holds up.

This isn't about replacing scientists — it's about handling the volume problem. Modern biology and synthetic biology labs can run thousands of experiments in parallel. No human team can keep up with reading every result. An AI evaluator could flag what's interesting and let researchers focus on the findings that actually matter.

How the AI agent reads hypotheses and generates observations

The system stores an experiment data set — a collection of records where each record captures two things: the hypothesis being tested and the experiment definition (the structured description of how the experiment was designed and executed).
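To make the record structure concrete, here is a minimal Python sketch of one way such a data set could be modeled. The class names, field names, and sample values are assumptions for illustration; the filing only says each record carries a hypothesis and an experiment definition.

```python
from dataclasses import dataclass, field


@dataclass
class ExperimentDefinition:
    """How the experiment was designed and executed (fields are hypothetical)."""
    protocol: str
    parameters: dict = field(default_factory=dict)


@dataclass
class ExperimentRecord:
    """One record: the hypothesis under test plus the experiment definition."""
    record_id: str
    hypothesis: str
    definition: ExperimentDefinition


# The "experiment data set" is modeled here as a plain list of records.
experiment_data_set = [
    ExperimentRecord(
        record_id="exp-001",
        hypothesis="This protein folds differently at higher temperatures",
        definition=ExperimentDefinition(
            protocol="thermal-shift assay",  # illustrative value only
            parameters={"temperatures_c": [25, 37, 50]},
        ),
    ),
]

for record in experiment_data_set:
    print(record.record_id, "|", record.hypothesis)
```

Keeping the hypothesis and the definition on the same record is what would let an evaluator tie every observation back to the claim being tested.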

An AI-based agent is then configured to loop through those records, perform an evaluation of each one, and produce at least one observation tied back to the original hypothesis. Think of it as automated peer review at the record level — the agent checks whether what was observed aligns with, contradicts, or is ambiguous relative to what was predicted.
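The evaluation loop described above can be sketched in a few lines of Python. This is not the method from the filing: the rule-based evaluator below is a deliberately simple stand-in for the AI agent, and the `predicted_direction`, `measured_effect`, and `noise_floor` fields are hypothetical, since the source does not say how results are stored or compared.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Iterable, List


class Verdict(Enum):
    SUPPORTS = "supports"
    CONTRADICTS = "contradicts"
    AMBIGUOUS = "ambiguous"


@dataclass
class ExperimentRecord:
    record_id: str
    hypothesis: str
    definition: str
    # Hypothetical result fields, assumed for this sketch only.
    predicted_direction: int   # +1 or -1: the direction the hypothesis predicts
    measured_effect: float     # signed effect size observed in the experiment
    noise_floor: float         # effects smaller than this are inconclusive


@dataclass
class Observation:
    record_id: str
    hypothesis: str
    verdict: Verdict
    note: str


def rule_based_evaluator(record: ExperimentRecord) -> Observation:
    """Placeholder for the AI agent: compare the measured effect to the prediction."""
    if abs(record.measured_effect) < record.noise_floor:
        verdict = Verdict.AMBIGUOUS
    elif (record.measured_effect > 0) == (record.predicted_direction > 0):
        verdict = Verdict.SUPPORTS
    else:
        verdict = Verdict.CONTRADICTS
    note = (f"measured effect {record.measured_effect:+.2f}, "
            f"noise floor {record.noise_floor:.2f}")
    return Observation(record.record_id, record.hypothesis, verdict, note)


def evaluate_data_set(
    records: Iterable[ExperimentRecord],
    evaluator: Callable[[ExperimentRecord], Observation],
) -> List[Observation]:
    """Visit every record and collect one observation tied to its hypothesis."""
    return [evaluator(record) for record in records]


records = [
    ExperimentRecord("exp-001", "Heat raises unfolding", "assay A", +1, 2.4, 0.5),
    ExperimentRecord("exp-002", "Additive raises yield", "assay B", +1, -1.1, 0.5),
    ExperimentRecord("exp-003", "Low pH lowers activity", "assay C", -1, 0.2, 0.5),
]
observations = evaluate_data_set(records, rule_based_evaluator)
for obs in observations:
    print(obs.record_id, obs.verdict.value, "|", obs.note)
```

Passing the evaluator in as a function keeps the loop independent of whatever does the judging, so a model-backed agent could replace the rule without changing how records are traversed or how observations are linked to hypotheses.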

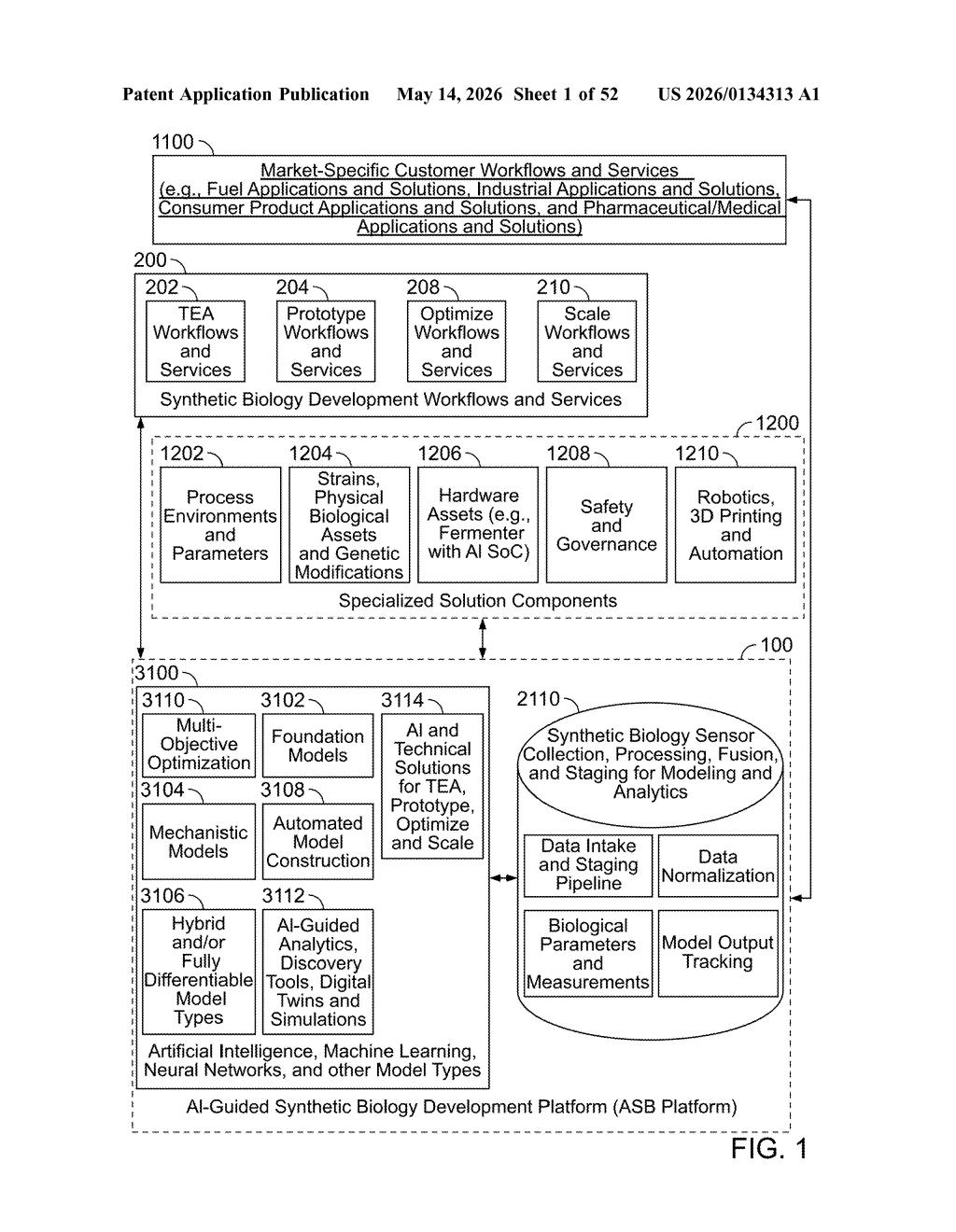

The abstract hints at a broader platform context that includes:

- Synthetic biology and sensor pipelines
- Digital twins and simulations
- Data normalization and model output tracking
- Robotics and 3D printing automation

This suggests the patent is meant to anchor a larger lab automation ecosystem — one where AI agents don't just analyze experiments in isolation but feed findings back into optimization workflows and staging pipelines for future experiments.

What this means for automated scientific research pipelines

X Development sits inside Alphabet and has historically worked on high-risk, long-horizon bets, several of them in biology and life sciences (Verily, for example, began as an X project). A system that automates hypothesis evaluation at scale is a direct enabler of high-throughput biology, where the bottleneck isn't running experiments but making sense of them fast enough to iterate.

For you as a researcher or a company building lab software, this filing signals that Alphabet is thinking seriously about the full AI-in-science stack — not just ML models for drug discovery, but the infrastructure layer underneath: structured experiment records, automated observation generation, and workflow optimization that ties it all together.

This is a foundational infrastructure patent, not a flashy demo — but that's actually what makes it worth watching. X Development is quietly staking out the scaffolding layer of AI-assisted science, and a system that structures hypothesis-experiment-observation loops is exactly the kind of boring-but-critical plumbing that ends up mattering enormously at scale.

Editorial commentary on a publicly published patent application. Not legal advice.