Microsoft Patents a Neural Prior Model for Photorealistic 3D Avatars

Microsoft is filing patents on a system that can generate a photorealistic, animatable 3D avatar of you from a single photo — no studio, no body scan, no lengthy setup required. The trick is a neural network trained on thousands of 3D head models that already 'knows' what human faces look like before it ever sees yours.

How Microsoft builds your 3D avatar from one image

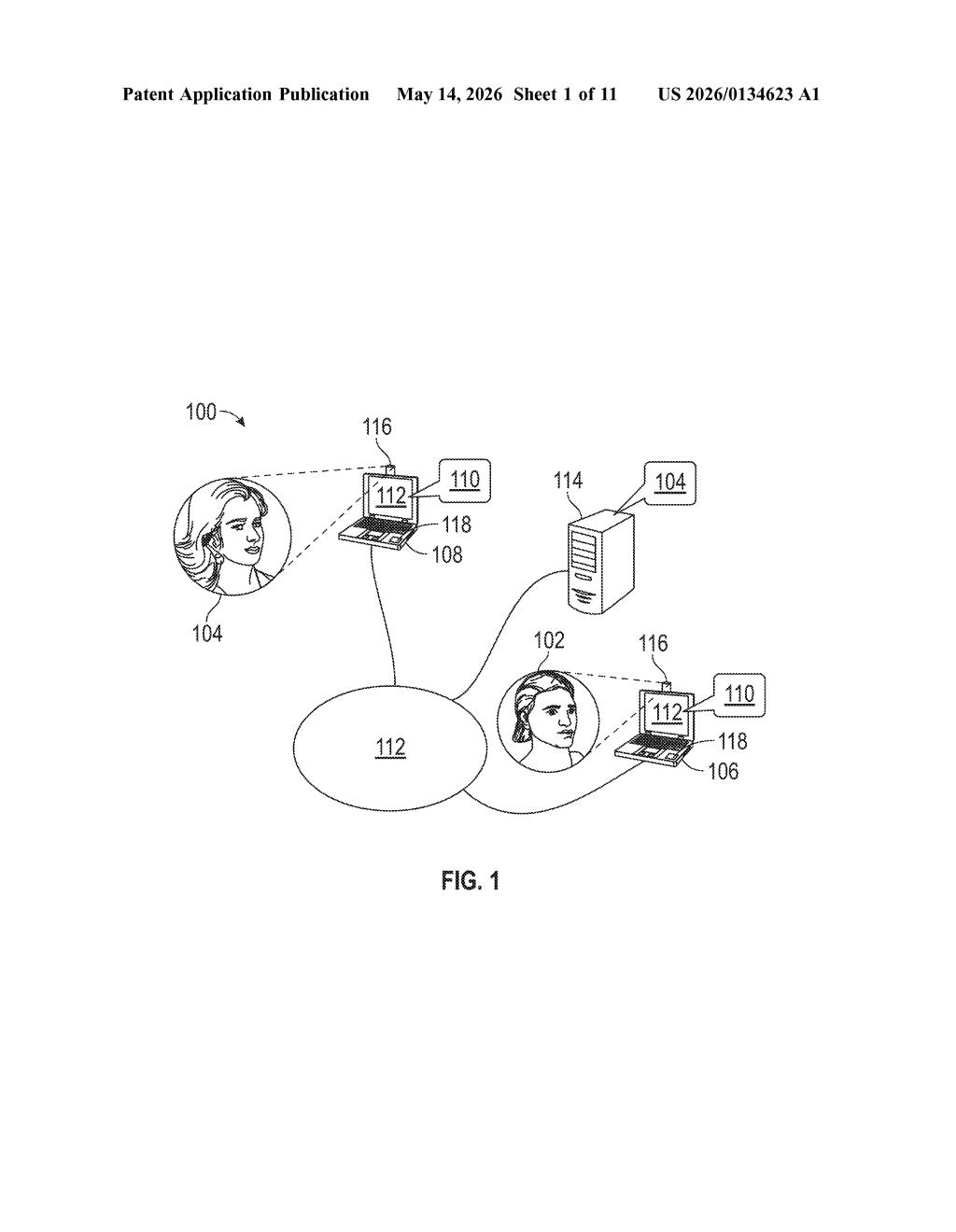

Imagine joining a video call and instead of your webcam feed, a smooth, animated 3D version of your face appears — one that moves when you talk, reacts when you emote, and looks genuinely like you. The catch has always been that building that kind of avatar normally takes dozens of photos, specialized cameras, or a lot of manual work.

Microsoft's approach flips that around. Instead of learning everything about your face from scratch, the system starts with a prior model — a neural network pre-trained on thousands of 3D head scans that already understands what human faces generally look like. When you upload a single image, it figures out what makes your face uniquely yours and stores just those differences.

The result is a 3D avatar that can be rendered from any angle and animated in real time using your voice or facial expressions. The applications Microsoft calls out include video conferencing, VR, gaming, and entertainment — basically anywhere you'd want a digital version of yourself.

How the prior model, identity vector, and Gaussian primitives fit together

At its core, the system uses a rendering technique called Gaussian splatting — instead of building a 3D mesh (like a traditional character model), it represents a face as thousands of tiny 3D blobs called primitives, each with its own position, size, rotation, color, and opacity. Splatting these blobs together from a given camera angle produces a photorealistic 2D image very quickly.

The prior model is a deep neural network trained on a large dataset of 3D head scans. It produces two key outputs: a canonical template (a kind of average human head, represented as Gaussian primitives) and per-primitive feature vectors (semantic codes that describe what each primitive represents — an eyelid, a cheekbone, a lip corner). Crucially, primitives with similar feature vectors are mapped to similar attributes, so the model understands the geometry of faces in a general sense.

When you enroll, the system processes your image through the prior model to generate an identity vector — a compact numerical fingerprint of what makes your face distinct. A decoder network then uses that vector plus the feature vectors to produce an initial set of Gaussian primitive attributes tailored to you.

From there, the system runs a fine-tuning pass:

- It adjusts primitive positions, scales, rotations, colors, and opacities to better match your photo.

- It applies distance constraints to prevent the refined primitives from drifting too far from the prior's baseline (keeping the result plausible).

- Finally, it projects the primitives to a target viewing angle and composites them into a 2D image — all fast enough for real-time rendering.

What this means for video calls, VR, and Teams meetings

For everyday users, this is about making high-quality avatar-based communication feel effortless. If Microsoft can ship something like this in Teams or Xbox, you wouldn't need to buy a depth camera or sit through a calibration session — a single selfie could be enough to get a convincing animated stand-in for video calls or VR meetings.

On the technical side, the Gaussian splatting approach is meaningfully faster than traditional neural radiance fields (NeRF), which have long been considered the gold standard for photorealistic novel-view synthesis but are notoriously slow to train and render. By pre-training a strong prior and encoding personal identity as a compact offset, Microsoft is betting it can hit real-time performance without sacrificing visual quality — which is the exact tradeoff that has kept photorealistic avatars out of consumer products so far.

This is a genuinely interesting patent because it attacks a real bottleneck: the cold-start problem in avatar creation. The combination of a learned prior, Gaussian splatting, and identity-offset fine-tuning is a sensible architecture that reflects where serious academic research in this space has been heading. Whether Microsoft ships it in any recognizable product form is a separate question, but this isn't a speculative moonshot — it's applied engineering on a problem that matters for remote work and social VR.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.