Microsoft Patents an AI System That Scores URLs Before Indexing Them

Every second, search engines discover millions of new URLs — and most of them are junk. Microsoft's new patent describes an AI system that can score a URL's likely value before a crawler even bothers to visit it.

How Microsoft's AI decides which URLs are worth indexing

Imagine you're a librarian and someone hands you a billion slips of paper, each with a different web address on it. You can't possibly read every page behind those links — so how do you decide which ones belong in the library catalog?

That's exactly the problem Microsoft is solving here. Their system trains an AI model on the structure and syntax of URLs — the patterns in how web addresses are written — so it can learn what a "good" URL tends to look like versus a spammy or low-quality one. Think of it like a spell-checker, but instead of checking words, it's checking web addresses.

Once that foundation model is built, Microsoft layers on a prediction classifier — a second AI component that outputs a score for any new URL. If the score clears a threshold, the URL gets added to the search index. If not, the crawler skips it. The whole thing is designed to be fast, operating before any page content is fetched.

How the URL language model feeds the prediction classifier

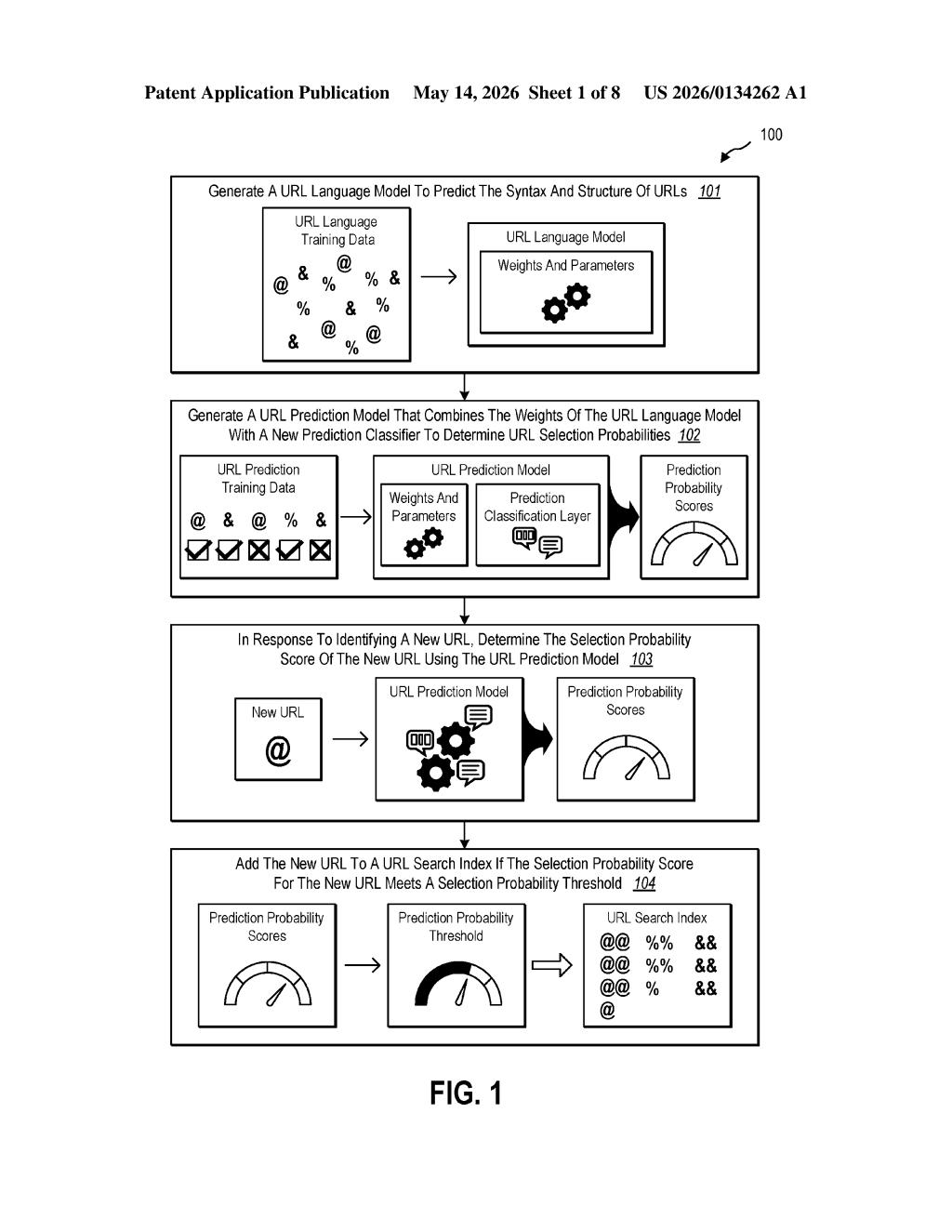

The system works in two sequential training phases, then a live scoring phase.

Phase 1 — URL Language Model: Microsoft trains a generative AI model exclusively on URL strings. The goal isn't to understand the pages behind the URLs — it's to learn the language of URLs themselves: how paths, subdomains, query parameters, and file extensions tend to be structured on high-quality versus low-quality sites. This is analogous to how a large language model learns grammar from text, except here the "grammar" is URL syntax.

Phase 2 — URL Prediction Model: The tuned weights from the language model are then combined with a new prediction classification layer (a lightweight neural network head that converts internal representations into a probability score). This transfer-learning approach — reusing the URL language model's weights rather than training from scratch — keeps the second model efficient.

Phase 3 — Live scoring: When the crawler discovers a new URL it has never seen before, the prediction model outputs a selection probability score. That score is compared against a configurable threshold. URLs that clear the bar get added to the search index; others are deprioritized or skipped entirely.

The patent emphasizes speed: the whole evaluation happens without fetching page content, making it dramatically cheaper than traditional crawl-then-evaluate pipelines.

What this means for Bing's crawl efficiency and search quality

For a search engine like Bing, crawl budget — the finite resources available to discover and process web pages — is one of the most expensive operational constraints. If you can filter out low-value URLs before dispatching a crawler, you save compute, bandwidth, and storage at massive scale. This patent is essentially Microsoft's attempt to automate that triage with a dedicated AI layer.

For you as a web publisher or SEO practitioner, this is a reminder that search engines are increasingly making indexing decisions based on signals that have nothing to do with your page content. A URL's structure — its length, path depth, query parameter patterns — may carry more weight in whether your page even gets indexed than previously assumed.

This is genuinely interesting search infrastructure work, not a flashy consumer AI story. The idea of training a model specifically on URL syntax — treating URL structure as its own 'language' worth learning — is a clever framing that sidesteps expensive page-fetch costs. It's the kind of pragmatic, cost-reduction engineering that actually ships inside large-scale web crawlers.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.