Samsung Patents a Motion-Trajectory System for Fixing Blurry Video Frames

Nearly every video has a few frames ruined by motion blur: a fast pan, a sudden gesture, a poorly timed shutter. Samsung's new patent describes a smarter way to fix those frames by borrowing motion data from the sharp ones around them.

What Samsung's motion-guided video deblur actually does

Imagine you're filming a birthday party and someone dashes across the frame. Most of the clip is sharp, but a couple of frames come out blurry because the camera's shutter couldn't freeze the motion fast enough. Many existing tools try to fix each blurry frame in isolation, which often produces smeared or artificial-looking results.

Samsung's approach works differently. Instead of guessing what a blurry frame should look like, the system first studies the sharp frames right before and after the blur — tracking exactly how each pixel moved across those clear images. It then uses that real motion path to predict how pixels were moving during the blurry frame.

With that predicted motion in hand, the device can run a much more informed deblurring pass, essentially "undoing" the blur along the actual path the subject traveled. The result should look more natural than the output of a generic sharpening filter, because it's guided by real motion evidence from your own video.

How Samsung maps pixel paths across sharp and blurry frames

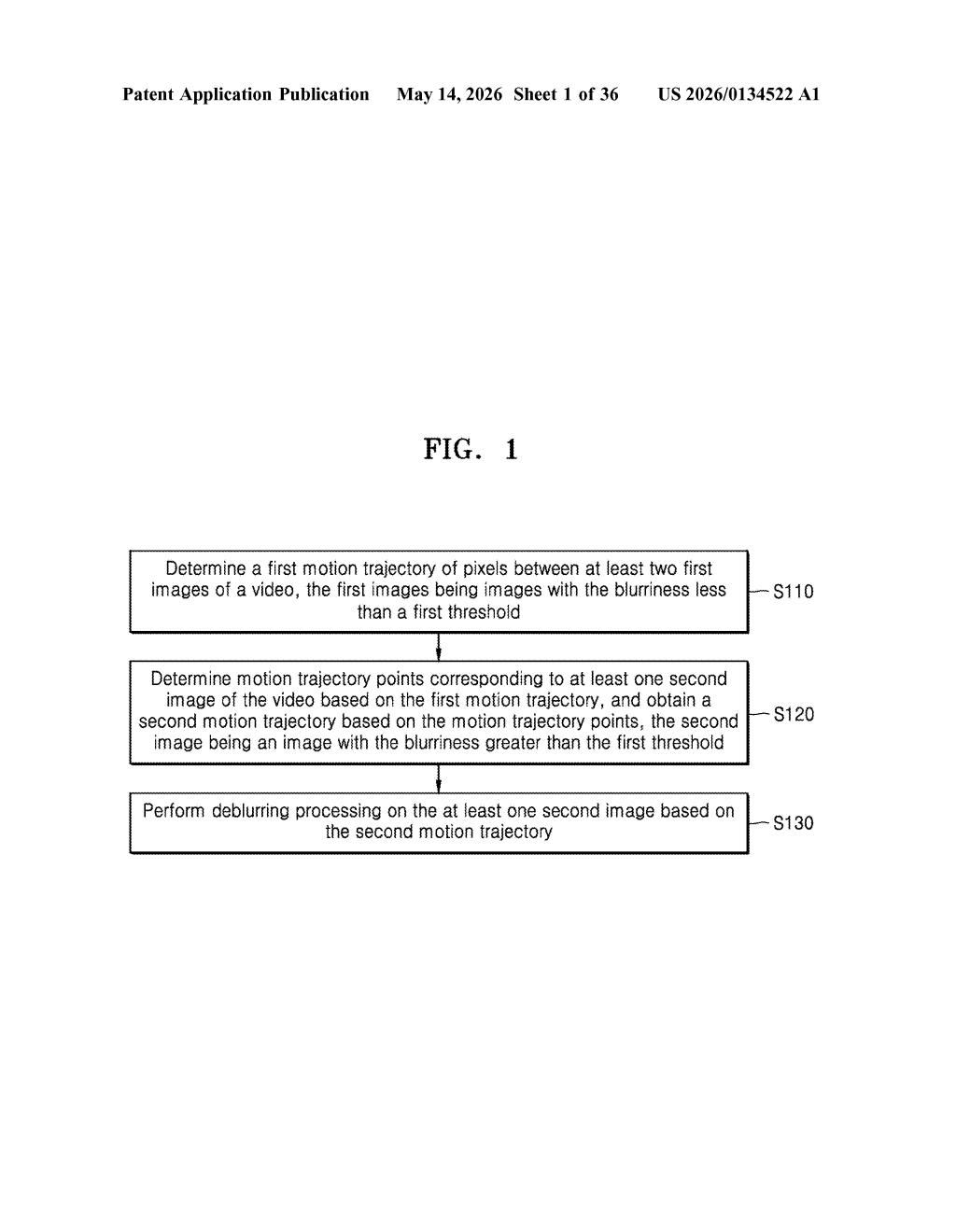

The patent describes a four-step pipeline running on-device during or after video capture.

Step 1 — First motion trajectory: The system analyzes at least two sharp (low-blur) frames from the video and maps the pixel-level motion between them. Think of this as drawing a trail showing where each part of the scene was heading.

Step 2 — Trajectory point estimation: Using that established motion path, the system extrapolates or interpolates motion trajectory points for a blurry frame that sits nearby in the timeline — essentially asking "if pixels were moving this way before and after, where were they during this frame?"

Step 3 — Second motion trajectory: Those estimated points are assembled into a second motion trajectory — a frame-specific motion map that describes the blur kernel (the directional smear caused by movement during exposure) for that particular image.

Step 4 — Deblurring: The blurry frame is processed using this trajectory as a guide. Rather than applying a generic sharpening filter, the algorithm can invert the specific motion blur that actually occurred, producing a cleaner result.
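The four steps above can be sketched in a few lines of NumPy. This is a minimal illustration under loud assumptions, not the patent's method: it treats motion as a single global shift rather than per-pixel trajectories, assumes constant velocity between the sharp frames, and swaps in two textbook techniques (phase correlation for motion estimation and Wiener deconvolution for deblurring). All function names and parameters here (`estimate_shift`, `frame_gap`, `exposure`, `noise_to_signal`) are hypothetical.

```python
import numpy as np

def estimate_shift(frame_a, frame_b):
    """Step 1 (simplified): one global (dy, dx) shift via phase correlation."""
    cross = np.fft.fft2(frame_b) * np.conj(np.fft.fft2(frame_a))
    cross /= np.abs(cross) + 1e-12          # keep phase only
    corr = np.fft.ifft2(cross).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    return np.array([p - n if p > n // 2 else p
                     for p, n in zip(peak, corr.shape)], dtype=float)

def trajectory_points(shift, frame_gap, exposure, n_points=32):
    """Step 2: positions (relative to mid-exposure) sampled across the blurry
    frame's exposure, assuming constant velocity between the sharp frames."""
    velocity = shift / frame_gap            # pixels per frame interval
    times = np.linspace(-exposure / 2, exposure / 2, n_points)
    return times[:, None] * velocity[None, :]

def kernel_from_trajectory(points, size=9):
    """Step 3: rasterize the trajectory points into a normalized blur kernel."""
    kernel = np.zeros((size, size))
    idx = np.rint(points).astype(int) + size // 2
    for y, x in idx:
        if 0 <= y < size and 0 <= x < size:
            kernel[y, x] += 1.0
    return kernel / kernel.sum()

def kernel_spectrum(kernel, shape):
    """FFT of the kernel, zero-padded to `shape` with its centre moved to (0, 0)."""
    padded = np.zeros(shape)
    k = kernel.shape[0]
    padded[:k, :k] = kernel
    padded = np.roll(padded, (-(k // 2), -(k // 2)), axis=(0, 1))
    return np.fft.fft2(padded)

def wiener_deblur(blurry, kernel, noise_to_signal=1e-3):
    """Step 4: Wiener deconvolution with the trajectory-derived kernel."""
    H = kernel_spectrum(kernel, blurry.shape)
    G = np.fft.fft2(blurry)
    return np.fft.ifft2(np.conj(H) * G / (np.abs(H) ** 2 + noise_to_signal)).real

# Synthetic check: a scene moving 4 px per frame to the right.
rng = np.random.default_rng(0)
scene = rng.random((64, 64))
frame_a = scene                                   # sharp, time t
frame_b = np.roll(scene, (0, 8), axis=(0, 1))     # sharp, time t + 2
target = np.roll(scene, (0, 4), axis=(0, 1))      # ideal sharp frame at t + 1

# Simulate the blurry middle frame from the known ground-truth motion.
true_kernel = kernel_from_trajectory(
    trajectory_points(np.array([0.0, 8.0]), frame_gap=2.0, exposure=1.0))
blurry = np.fft.ifft2(np.fft.fft2(target) *
                      kernel_spectrum(true_kernel, target.shape)).real

# Run the four steps using only the two sharp frames and the blurry one.
shift = estimate_shift(frame_a, frame_b)
points = trajectory_points(shift, frame_gap=2.0, exposure=1.0)
kernel = kernel_from_trajectory(points)
restored = wiener_deblur(blurry, kernel)

err_blurry = np.abs(blurry - target).mean()
err_restored = np.abs(restored - target).mean()
print(f"shift={shift}, error before={err_blurry:.4f}, after={err_restored:.4f}")
```

The synthetic test is deliberately friendly: the blur is circular, noise-free, and matches the constant-velocity assumption exactly. Real footage would need per-pixel motion, boundary handling, and a noise estimate, none of which this sketch attempts.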

The patent notes that the target frame's blurriness is explicitly greater than that of the reference frames. In other words, the system is designed to handle cases where exposure time or motion caused significant degradation, not just minor softness.
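The article does not say how the patent measures blurriness, so the following is only one plausible way to separate reference frames from target frames. It uses the variance of a Laplacian response, a common sharpness heuristic that is an assumption here rather than anything the patent specifies. The names `sharpness_score` and `split_by_sharpness` and the `ratio` threshold are hypothetical.

```python
import numpy as np

def sharpness_score(gray):
    """Variance of a 4-neighbour Laplacian; higher generally means sharper."""
    g = np.asarray(gray, dtype=float)
    lap = (-4.0 * g
           + np.roll(g, 1, axis=0) + np.roll(g, -1, axis=0)
           + np.roll(g, 1, axis=1) + np.roll(g, -1, axis=1))
    return lap.var()

def split_by_sharpness(frames, ratio=0.5):
    """Label a frame blurry when its score falls below ratio * median score."""
    scores = np.array([sharpness_score(f) for f in frames])
    threshold = ratio * np.median(scores)
    sharp = [i for i, s in enumerate(scores) if s >= threshold]
    blurry = [i for i, s in enumerate(scores) if s < threshold]
    return sharp, blurry

# Synthetic clip: three sharp frames and one horizontally smeared frame.
rng = np.random.default_rng(1)
scene = rng.random((48, 48))
smeared = np.mean([np.roll(scene, dx, axis=1) for dx in range(-2, 3)], axis=0)
frames = [scene, np.roll(scene, 3, axis=1), smeared, np.roll(scene, 9, axis=1)]

sharp_idx, blurry_idx = split_by_sharpness(frames)
print("sharp frames:", sharp_idx, "blurry frames:", blurry_idx)
```

A relative threshold against the clip's median is used because absolute Laplacian variance depends heavily on scene content. A production pipeline might instead rely on exposure metadata or gyroscope data, which the article does not cover.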

What this means for Samsung's video capture pipeline

For Samsung, which ships cameras in hundreds of millions of Galaxy phones and tablets each year, even small improvements to video quality translate into a meaningful competitive edge. This kind of motion-aware deblurring is especially relevant for slow-motion video, low-light shooting, or any scenario where a few frames get wrecked while the rest of the clip looks fine.

The broader implication is a shift from reactive image correction (fix the blur after the fact with no context) toward context-aware correction (use what the camera already knows about the scene's motion). If this ships in Galaxy camera firmware or Samsung's video processing stack, it could noticeably reduce the number of ruined frames in everyday clips — without asking users to do anything differently.

This is solid, practical camera engineering: not a flashy AI demo, but exactly the kind of incremental pipeline work that separates good phone video from great phone video. The core insight (use sharp neighboring frames to infer the blur kernel of a damaged frame) is sensible and well scoped. Whether it produces visibly better results than existing optical-flow-based deblurring methods depends on implementation details the patent doesn't fully reveal, but the approach is sound.

Editorial commentary on a publicly published patent application. Not legal advice.