IBM Patents a System for Ranking AI Answers by Accuracy

What if your AI assistant didn't just give you one answer — but quietly ran several, graded them, and only showed you the best one? That's the core idea behind IBM's latest patent.

How IBM scores and filters LLM responses

Imagine you ask an AI assistant a complex question about tax law or medical dosing. The AI generates an answer, but how do you know it's the best answer, or even a correct one? By default, most AI assistants return a single generated response with no built-in check on its quality.

IBM's patent describes a system that asks the AI the same question multiple times, collects the different responses, and evaluates each one for accuracy. It does this by extracting the relevant domain context (the subject matter and key concepts) from both your original question and each answer. It then runs a semantic comparison, a meaning-level check rather than a keyword match, to see how well each answer lines up with what you actually asked.

Each response gets a weighted score, and the system ranks all the outputs from most to least accurate before serving you a final, higher-confidence answer. Think of it like having a fact-checker in the loop who never sleeps.
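To make the scoring idea concrete, here is a minimal sketch, not the patent's method. It assumes cosine similarity over simple word counts as a stand-in for the semantic comparison, which a real system would more likely do with learned embeddings. The function names (`term_counts`, `cosine_similarity`, `rank_answers`) and the sample query and answers are hypothetical.

```python
import math
import re
from collections import Counter


def term_counts(text: str) -> Counter:
    """Lowercase the text and count its word tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_answers(query: str, answers: list[str]) -> list[tuple[float, str]]:
    """Score each answer against the query and sort from highest to lowest."""
    q = term_counts(query)
    scored = [(cosine_similarity(q, term_counts(ans)), ans) for ans in answers]
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


# Assumption: word overlap is only a rough proxy for meaning-level similarity.
query = "What is the standard deduction for a single filer?"
answers = [
    "The standard deduction for a single filer is set each year by the IRS.",
    "Bananas are a good source of potassium.",
    "A single filer can claim the standard deduction instead of itemizing.",
]
ranked = rank_answers(query, answers)
for score, answer in ranked:
    print(f"{score:.2f}  {answer}")
```

The off-topic answer lands at the bottom of the ranking. Note that this kind of score measures how well an answer matches the question, which is not the same as checking that the answer is factually right.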

How domain context and weights sort LLM outputs

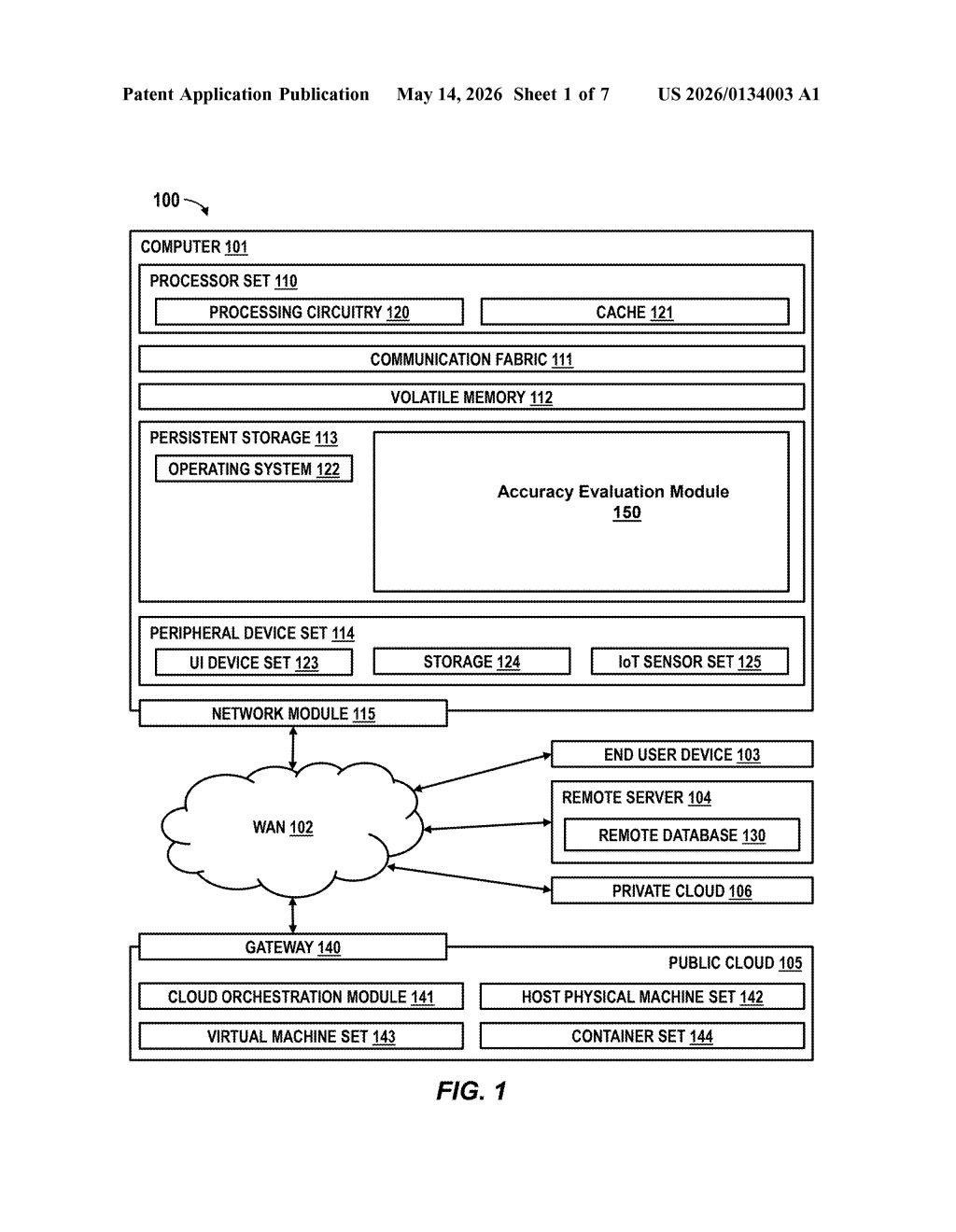

The patent describes a pipeline with several distinct stages that work together to filter and rank LLM-generated responses before they reach the end user.

- Query intake and multi-output generation: The system receives a user query and prompts the LLM to produce multiple distinct outputs in response — not just one.

- Domain context extraction: From both the original query and each generated output, the system extracts domain context — the topical scope, key entities, and subject-matter framing. This is the signal used to judge relevance and correctness.

- Model input generation and semantic analysis: The extracted contexts are fed into an input module that builds a comparison baseline. A semantic analysis (meaning-level comparison, not just keyword matching) is then run between each LLM output and that baseline to assess alignment.

- Weighted ranking: Each output receives a weight based on how well it performs in the semantic comparison. The outputs are ranked accordingly, and the top-ranked response becomes the final answer delivered to the user.
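The four stages above can be sketched end to end as follows. This is an illustration under stated assumptions, not IBM's implementation: context extraction is reduced to a set of non-stopword terms, the comparison baseline is simply the query's context, the semantic analysis is a Jaccard set overlap, and weights are scores normalized to sum to 1. Every name here (`extract_context`, `rank_outputs`, `fake_llm`, `RankedOutput`) is hypothetical, and `fake_llm` returns canned text in place of a real model.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical stopword list, kept tiny for illustration.
STOPWORDS = {"a", "an", "and", "are", "for", "in", "is", "of", "the", "to", "what"}


@dataclass
class RankedOutput:
    text: str
    weight: float


def extract_context(text: str) -> set[str]:
    """Stand-in for domain context extraction: the set of non-stopword terms."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return {t for t in tokens if t not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Set overlap in [0, 1]; a crude proxy for a semantic comparison."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_outputs(
    query: str,
    generate: Callable[[str, int], list[str]],
    n: int = 3,
) -> list[RankedOutput]:
    # Stage 1: ask the model for several outputs, not just one.
    outputs = generate(query, n)
    # Stage 2: extract context from the query and from each output.
    query_ctx = extract_context(query)
    output_ctxs = [extract_context(o) for o in outputs]
    # Stage 3: build the baseline (assumed here to be the query context)
    # and compare each output against it.
    baseline = query_ctx
    scores = [jaccard(ctx, baseline) for ctx in output_ctxs]
    # Stage 4: normalize scores into weights and rank, best first.
    total = sum(scores)
    weights = [s / total if total else 0.0 for s in scores]
    ranked = [RankedOutput(o, w) for o, w in zip(outputs, weights)]
    return sorted(ranked, key=lambda r: r.weight, reverse=True)


def fake_llm(query: str, n: int) -> list[str]:
    """Canned responses standing in for repeated calls to a real LLM."""
    canned = [
        "Ibuprofen dosing for adults depends on the indication and product label.",
        "Adults should follow the ibuprofen product label for dosing.",
        "The weather is sunny today.",
    ]
    return canned[:n]


ranked = rank_outputs("What is the ibuprofen dosing for adults?", fake_llm)
best = ranked[0]  # the top-ranked output is what the user would see
print(f"{best.weight:.2f}  {best.text}")
```

Passing the generator in as a plain callable keeps the ranking logic independent of any particular model, so the same wrapper could sit in front of any LLM that can return several candidates.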

The patent doesn't specify a particular LLM architecture, so the system appears designed to sit on top of existing models as an evaluation layer, which would make it model-agnostic in principle.

What this means for enterprise AI reliability

For enterprise deployments such as customer support bots, internal knowledge assistants, or clinical decision-support tools, hallucination and inconsistency are among the biggest blockers to real adoption. IBM's approach attempts to build a self-auditing layer that catches bad outputs before they reach users, without requiring humans in the loop for every query.

This fits squarely into IBM's watsonx AI platform strategy, where reliability and governance are core selling points to large enterprise clients. If this kind of accuracy-ranking layer ships in a product, it could make AI assistants meaningfully more trustworthy in high-stakes domains like finance, healthcare, and legal services — exactly the verticals IBM targets.

This is a solid, practically motivated patent — the problem it addresses (LLM outputs being inconsistent and hard to validate automatically) is very real, and IBM's enterprise customers genuinely need solutions like this. It's not a flashy research breakthrough, but a weighted-ranking wrapper around multi-sample LLM outputs is exactly the kind of unglamorous infrastructure that quietly makes AI deployable in regulated industries.

Editorial commentary on a publicly published patent application. Not legal advice.