IBM Patents a Multi-Agent System That Rewrites Bad AI Prompts Automatically

Every AI system eventually produces a wrong or unhelpful answer — the question is what happens next. IBM's new patent describes a system where the model itself figures out why a prompt failed and rewrites it to do better, using a council of AI agents to agree on exactly what went wrong.

How IBM's self-correcting prompt rewriter works

Imagine you ask a chatbot a complicated question and it gives you a confusing, off-target response. Normally, you'd have to rephrase the question yourself and try again. IBM's patent proposes automating that whole back-and-forth loop.

The system works by catching a bad output, then asking a group of AI agents to collectively score how wrong it was. Those agents debate the score across multiple rounds until they reach a consensus — think of it like a panel of judges independently rating a performance, then adjusting their scores after hearing each other's reasoning.

Once the error is scored, the same AI model breaks your original prompt down into its logical building blocks — an "argument structure" — and figures out which piece caused the problem. It swaps out that piece for a better version, rebuilds the prompt, and tries again. The goal is a revised prompt that scores lower on the "error scale" — meaning a better answer, found automatically.

How the agent scoring loop breaks down and repairs a prompt

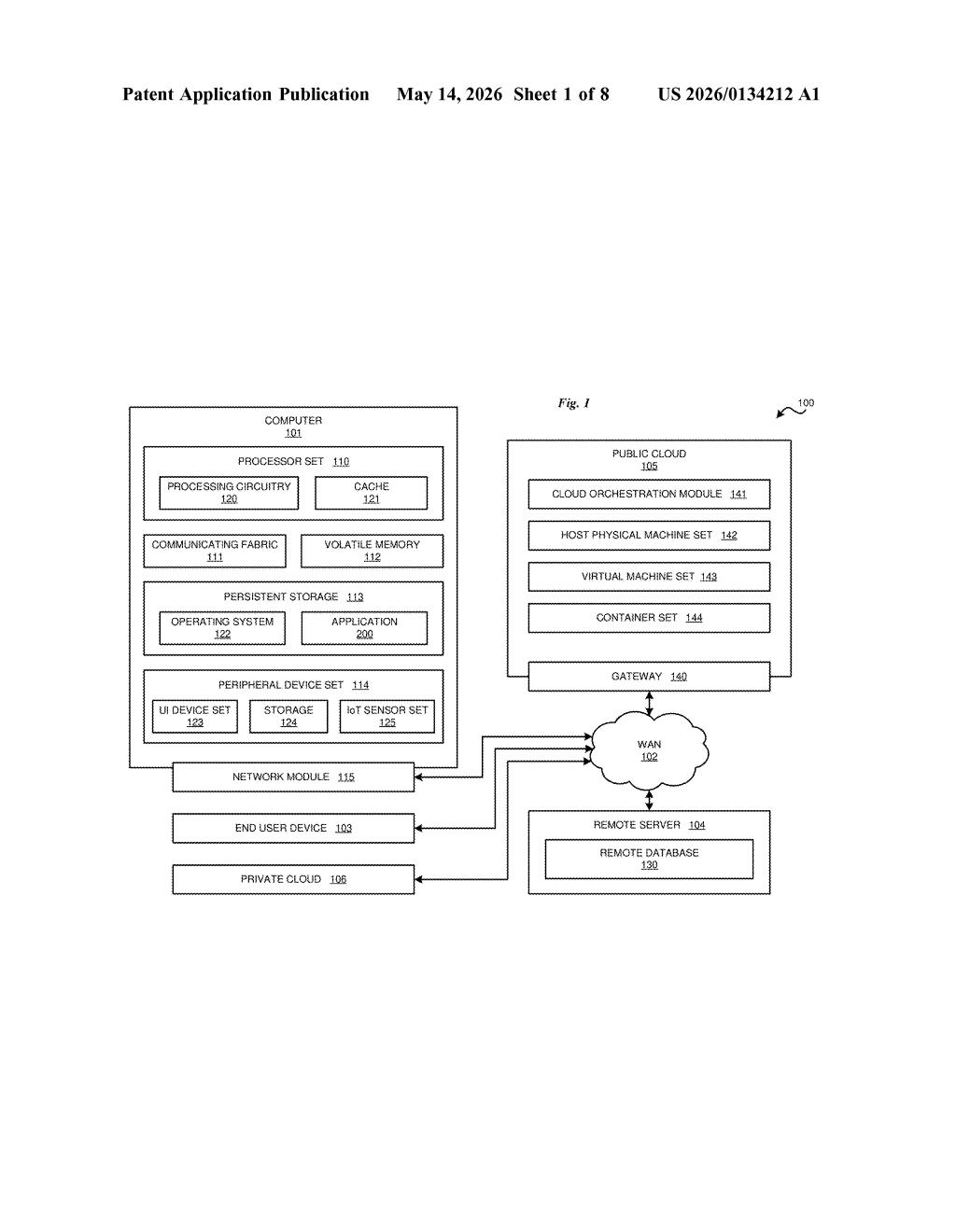

The patent describes a multistage, self-correcting pipeline for large language models (LLMs). When a model produces output that contains an error, the system doesn't just discard it — it systematically diagnoses and repairs the prompt that caused the error.

The first stage is error scoring via a multi-agent deliberation loop. A plurality of agents (multiple specialized AI sub-models) each independently score the error. Crucially, they iterate: each agent adjusts its own score in light of the other agents' scores, much as jurors might revise their individual opinions after group deliberation. The final composite score represents a calibrated measure of how badly the prompt failed.
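To make the deliberation loop concrete, here is a minimal sketch. The article does not say how the patent's agents actually revise their scores, so the update rule (each agent moves part of the way toward its peers' average) is an assumption. So are the name `consensus_score` and the parameters `pull`, `tolerance`, and `max_rounds`.

```python
from statistics import mean

def consensus_score(initial_scores, pull=0.5, tolerance=0.01, max_rounds=20):
    """Nudge each agent's score toward its peers' average until they agree.

    Assumed update rule for illustration only; not taken from the patent.
    """
    scores = list(initial_scores)
    if len(scores) < 2:
        raise ValueError("need at least two agents to deliberate")
    rounds = 0
    # Keep deliberating while the agents still disagree by more than `tolerance`.
    while max(scores) - min(scores) > tolerance and rounds < max_rounds:
        total, n = sum(scores), len(scores)
        # All agents update at once, using the previous round's scores.
        scores = [s + pull * ((total - s) / (n - 1) - s) for s in scores]
        rounds += 1
    # The composite score is the average of the (near-)agreed scores.
    return mean(scores), rounds

# Three hypothetical agents start far apart on a 0-to-1 error scale.
composite, rounds = consensus_score([0.9, 0.6, 0.3])
```

With this symmetric rule the agents simply converge on the plain average of their starting scores. A real system would presumably have each agent re-reason about the output between rounds, which is where the deliberation would add value over simple averaging.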

The second stage is argument decomposition. The LLM is instructed to parse the original prompt into a structured representation, an argument structure, which breaks it into discrete logical components (premises, constraints, goals, etc.). Repair then proceeds in three steps:

- Identify: Using the error score and the argument structure, the model pinpoints which component of the argument is most responsible for the bad output.

- Replace: The model generates a substitute component predicted to reduce the error score.

- Rebuild: The revised argument is reassembled into a new, corrected prompt.
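The identify, replace, and rebuild steps can be sketched on a toy argument structure. Everything here is hypothetical: the `Component` type, the per-component `blame` values, and the substitute text are hard-coded for illustration, whereas in the system described the LLM would produce them.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class Component:
    role: str  # e.g. "goal", "constraint", "premise"
    text: str

def identify(blame):
    """Identify: index of the component with the highest blame value."""
    return max(range(len(blame)), key=blame.__getitem__)

def repair(components, blame, substitute_text):
    """Replace: swap the most-blamed component's text, leaving the rest intact."""
    worst = identify(blame)
    revised = list(components)
    revised[worst] = replace(components[worst], text=substitute_text)
    return revised

def rebuild(components):
    """Rebuild: reassemble the components into a single prompt string."""
    return " ".join(c.text for c in components)

structure = [
    Component("goal", "Summarize the contract."),
    Component("constraint", "Keep it short."),
    Component("premise", "The reader is a lawyer."),
]
blame = [0.1, 0.7, 0.2]  # hypothetical: the vague constraint is blamed most
revised = repair(structure, blame, "Use at most 100 words.")
new_prompt = rebuild(revised)
```

Keeping the components immutable means the original structure survives alongside the revised one, which makes it easy to compare the two prompts afterwards.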

The repair cycle can repeat: the system keeps refining until the revised prompt produces output that scores below an acceptable error threshold.
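The outer refinement loop might look roughly like the sketch below. The scorer and reviser are toy stand-ins (a vague-word counter and a word swap) so the example runs on its own. In the system described, scoring would come from the agent panel and revision from the LLM. The names `refine`, `toy_score`, and `toy_revise`, the threshold value, and the iteration cap are all assumptions.

```python
VAGUE = {"stuff", "things", "something"}  # toy notion of a "bad" word

def toy_score(prompt):
    """Stand-in error score: the fraction of words that are vague."""
    words = prompt.lower().split()
    return sum(w in VAGUE for w in words) / len(words)

def toy_revise(prompt, score):
    """Stand-in repair: replace the first vague word with 'details'."""
    words = prompt.split()
    for i, w in enumerate(words):
        if w.lower() in VAGUE:
            words[i] = "details"
            break
    return " ".join(words)

def refine(prompt, score_prompt, revise_prompt, threshold=0.25, max_iterations=5):
    """Revise until the error score drops below `threshold` or the cap is hit."""
    history = [(prompt, score_prompt(prompt))]
    while history[-1][1] >= threshold and len(history) <= max_iterations:
        prompt = revise_prompt(prompt, history[-1][1])
        history.append((prompt, score_prompt(prompt)))
    return history  # every attempt and its score, oldest first

history = refine("Tell me stuff about things", toy_score, toy_revise)
final_prompt, final_score = history[-1]
```

Returning the full history rather than only the final prompt keeps a record of each attempt and its score. The iteration cap guards against a prompt that never improves enough to cross the threshold.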

What automated prompt correction means for enterprise AI

For enterprises running AI in high-stakes multistage workflows — think automated document processing, customer service pipelines, or code generation — bad prompts are a silent failure mode. Engineers spend enormous time manually tuning prompts, often without visibility into why a particular phrasing fails. IBM's system would turn that into an automated, auditable process.

This also has implications for agentic AI systems — the wave of AI "agents" that are supposed to complete multi-step tasks autonomously. If an agent misfires midway through a workflow, this kind of self-correction loop could recover without human intervention. That's a meaningful reliability upgrade for anyone building serious AI automation, and it plays directly into IBM's enterprise software strategy with watsonx.

This is genuinely interesting infrastructure work, not flashy demo-ware. The multi-agent consensus scoring mechanism is the real novelty here: it's a more principled approach to error quantification than the single-model self-critique loops you see in most academic prompt-repair papers. IBM is clearly positioning this for enterprise AI reliability, where "the model got confused" is an unacceptable answer.

Editorial commentary on a publicly published patent application. Not legal advice.