IBM Patents a Gesture-Based Authentication System Using Machine Learning

Forget passwords or fingerprints — IBM is exploring whether the way *you* move your hand in space could be enough to prove you're you. The patent combines gesture recognition with user-specific biometric identification in a single ML-driven step.

What IBM's gesture authentication actually does

Imagine you want to approve a bank transfer on your phone or laptop. Instead of typing a PIN or pressing your thumb to a sensor, you perform a specific movement in the air — a swipe, a wave, a custom gesture — and the system not only recognizes the gesture itself but also verifies that you are the one performing it.

That's the core idea behind this IBM patent. A machine learning model watches a set of gestures and answers two questions at once: Is this the correct gesture for this action, and is it being performed by the right person? Both checks have to pass before anything happens.

This matters because current gesture systems usually just recognize the shape of a movement, so anyone who copies your gesture could trigger the same action. IBM's approach treats the way you move as a personal signature, so the gesture and the way you perform it together act as the password.

How IBM's ML model ties gestures to a specific user

The patent describes a three-step process built around a machine learning model:

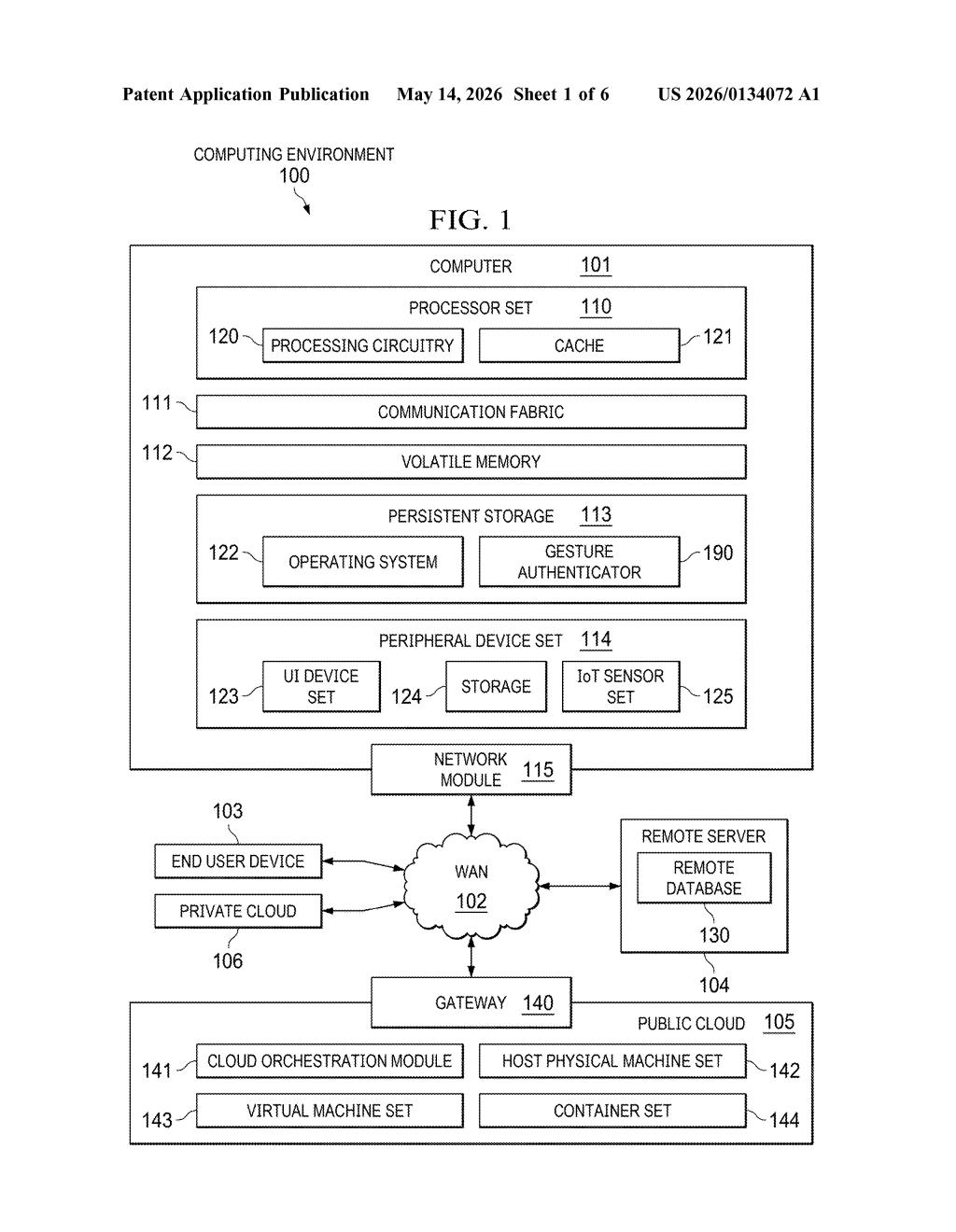

- Gesture detection: A sensor captures a set of spatial gestures performed by a user. This could be hand movements tracked by a camera, depth sensor, or wearable device.

- Dual authentication check: The ML model evaluates two things simultaneously — whether the gesture sequence matches a known authenticated action, and whether the unique motion characteristics (speed, trajectory, pressure, timing) match the enrolled profile of the specific user.

- Conditional action execution: The computer system only carries out the target action — like a funds transfer, an app unlock, or a command — when both conditions are confirmed true.
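
The three steps above can be sketched as a simple gate. This is a minimal illustration, not the patent's implementation: the `ModelOutput` type, the two scores, and the 0.9 thresholds are all hypothetical, and the patent does not say whether the model emits separate scores or a single decision.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelOutput:
    # Hypothetical model output; both scores are assumed to lie in [0, 1].
    gesture_score: float  # how well the movement matches the gesture bound to the action
    user_score: float     # how well the motion dynamics match the enrolled user


def authorize(output: ModelOutput,
              gesture_threshold: float = 0.9,
              user_threshold: float = 0.9) -> bool:
    """Return True only when both the gesture check and the user check pass."""
    gesture_ok = output.gesture_score >= gesture_threshold
    user_ok = output.user_score >= user_threshold
    return gesture_ok and user_ok


def run_if_authorized(output: ModelOutput, action: Callable[[], str]) -> str:
    """Execute the target action only after both conditions are confirmed."""
    if authorize(output):
        return action()
    return "denied"
```

A correct gesture performed by the wrong person (high `gesture_score`, low `user_score`) is rejected, which is the case a shape-only gesture recognizer would let through.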

The key insight is that gesture dynamics (the precise, personal way someone moves) function as a behavioral biometric, much as keystroke dynamics can identify you by how you type, not just what you type. The ML model is presumably trained on per-user gesture samples during an enrollment phase, though the patent doesn't fully specify the model architecture.
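
To make the enrollment idea concrete, here is a rough sketch of how a per-user profile could be built from a few gesture samples and then checked against a new attempt. Everything here is an assumption for illustration: the patent does not specify features or a model, so this swaps in a simple statistical profile (mean and standard deviation of duration, path length, and mean speed) with a z-score test in place of whatever ML model IBM has in mind. The names `features`, `enroll`, `matches_user`, and the `max_z` cutoff are hypothetical.

```python
import math
import statistics
from typing import List, Sequence, Tuple

Sample = Tuple[float, float, float, float]  # (time_s, x, y, z), an assumed sensor format
Profile = List[Tuple[float, float]]         # per-feature (mean, standard deviation)


def features(traj: Sequence[Sample]) -> List[float]:
    """Summarize one gesture as [duration, path_length, mean_speed]."""
    duration = traj[-1][0] - traj[0][0]
    path = sum(math.dist(a[1:], b[1:]) for a, b in zip(traj, traj[1:]))
    return [duration, path, path / duration]


def enroll(trajs: Sequence[Sequence[Sample]]) -> Profile:
    """Build a profile from several enrollment gestures (at least two)."""
    columns = zip(*(features(t) for t in trajs))
    return [(statistics.mean(c), statistics.stdev(c)) for c in columns]


def matches_user(profile: Profile, traj: Sequence[Sample], max_z: float = 3.0) -> bool:
    """Accept only if every feature is within max_z standard deviations."""
    for (mean, std), value in zip(profile, features(traj)):
        z = abs(value - mean) / max(std, 1e-6)  # guard against zero spread
        if z > max_z:
            return False
    return True
```

Two people tracing the same shape would produce similar path lengths but often different durations and speeds, which is the kind of difference a dynamics-based check relies on. A real system would need far richer features and a learned model to be robust.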

The patent's figures reference a funds transfer use case, suggesting the inventors had financial authorization scenarios in mind — a high-stakes context where both gesture recognition and identity verification need to be airtight.

What this means for passwordless and biometric security

Biometric authentication is already a crowded field (Face ID, fingerprint sensors, voice recognition), but behavioral biometrics tied to gesture dynamics is a less-explored angle, especially in spatial computing and AR/VR environments where touchscreens aren't the primary input. If you're wearing a headset or using a camera-equipped kiosk, gesture authentication could replace PINs in a natural, touch-free way.

For IBM specifically, this fits squarely into its enterprise security portfolio. A system that can authorize high-value actions — payments, access controls, digital signatures — using only a user's unique movement pattern could reduce friction without weakening security. The practical challenge will be accuracy in real-world conditions: fatigue, injury, or environmental noise can all change how a person moves.

This is a competent, clearly reasoned patent that addresses a real gap: most gesture systems authenticate the *action* but not the *actor*. The dual-verification framing is the genuinely interesting piece here. That said, behavioral biometrics for gesture dynamics is not a new research area, and IBM will need to show strong prior-art differentiation to get broad claims through examination.

Editorial commentary on a publicly published patent application. Not legal advice.