IBM Patents a Context-Expanding System That Makes AI Database Queries Smarter

When you ask an AI a question about a database, it often grabs only the obvious fields — missing crucial related data sitting just one column over. IBM's new patent tries to fix that by teaching the AI to look around the neighborhood before it answers.

How IBM's query expansion feeds AI better database context

Imagine asking your company's AI assistant, "What's our ESG governance score?" A basic system finds the one database column labeled 'governance score' and hands that to the AI. But the score is meaningless without knowing the peer average, the percentile group, or the methodology behind it — all of which live in nearby columns that the simple lookup missed.

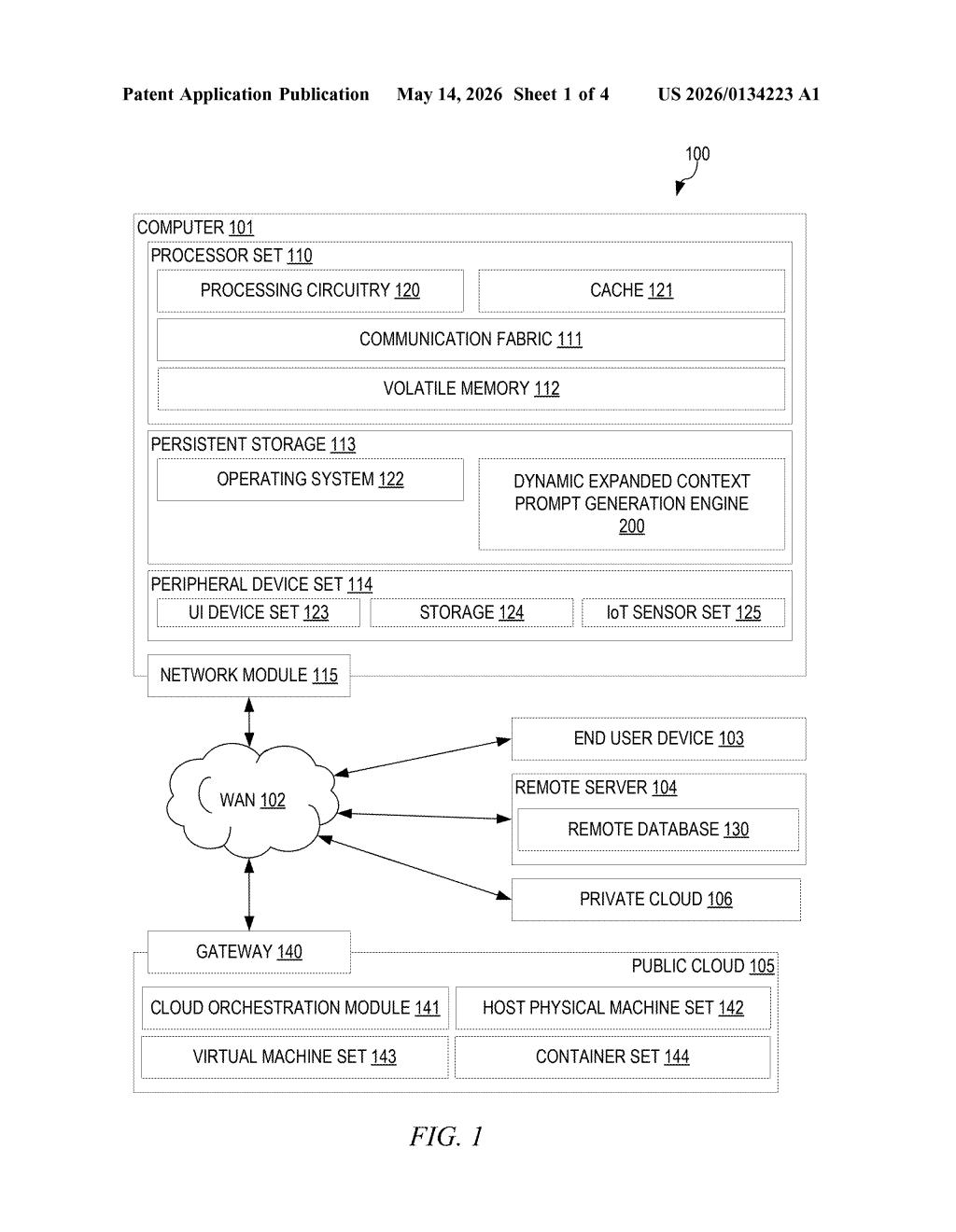

IBM's patent describes a smarter approach: after the system finds the direct matches to your question, it automatically scans adjacent fields in the database's data dictionary — a kind of index that describes what every column means and how they relate. It then pulls in the relevant neighboring fields and includes all of that enriched data in the prompt it sends to the AI.

The result is that the AI gets a fuller picture before it writes its answer — less like handing a chef one ingredient and more like giving them the whole pantry inventory for that dish.

How IBM's data dictionary traversal builds richer prompts

The patent describes a pipeline with a few distinct stages:

- Field matching: The system parses your natural-language input and maps keywords to specific fields in a data source schema (think: the structural blueprint of a database or data warehouse). These become the "first fields" — the direct hits.

- Adjacency analysis: For each matched field, the system inspects the data dictionary — metadata that describes each column's name, type, and relationships — and identifies "second fields" sitting nearby. Nearness here is defined semantically or structurally (neighboring columns in the same table, or fields linked by schema relationships), not just physical proximity.

- Expanded context assembly: The relevant second fields get merged into an expanded field listing, and actual data values from all those fields are pulled and packed into the context portion of a prompt sent to a generative AI model.

- AI response generation: The generative model (think a large language model) processes that enriched prompt and returns a response grounded in the fuller dataset.
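
The four stages above can be sketched in a few lines of Python. This is a toy illustration, not IBM's implementation: the `FieldInfo` structure, the `DATA_DICTIONARY` contents, the `ROW` values, and the keyword-matching rule are all assumptions made up for this example, and adjacency is reduced to an explicit `related` list in the dictionary.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class FieldInfo:
    """One data dictionary entry (hypothetical structure)."""
    description: str
    related: list[str] = field(default_factory=list)  # schema-linked fields


# Hypothetical data dictionary for a single ESG table.
DATA_DICTIONARY = {
    "governance_score": FieldInfo(
        "Company governance score",
        related=["peer_average", "percentile_group", "methodology"],
    ),
    "peer_average": FieldInfo("Average governance score across peers"),
    "percentile_group": FieldInfo("Percentile band within the peer set"),
    "methodology": FieldInfo("Scoring methodology version"),
    "employee_count": FieldInfo("Number of employees"),
}

# Made-up row of values, keyed by field name.
ROW = {
    "governance_score": 72,
    "peer_average": 65,
    "percentile_group": "top quartile",
    "methodology": "v2",
    "employee_count": 1200,
}


def match_fields(question: str) -> list[str]:
    """Stage 1: naive keyword match of the question against field names."""
    words = set(re.findall(r"[a-z]+", question.lower()))
    return [n for n in DATA_DICTIONARY if set(n.split("_")) <= words]


def expand_fields(first_fields: list[str]) -> list[str]:
    """Stage 2: add the 'second fields' linked to each match in the dictionary."""
    expanded = list(first_fields)
    for name in first_fields:
        for neighbor in DATA_DICTIONARY[name].related:
            if neighbor not in expanded:
                expanded.append(neighbor)
    return expanded


def build_prompt(question: str, fields: list[str]) -> str:
    """Stage 3: pack field descriptions and values into the prompt context."""
    context = "\n".join(
        f"- {name} ({DATA_DICTIONARY[name].description}): {ROW[name]}"
        for name in fields
    )
    return f"Context:\n{context}\n\nQuestion: {question}"


question = "What's our ESG governance score?"
first = match_fields(question)
expanded = expand_fields(first)
prompt = build_prompt(question, expanded)
print(prompt)  # Stage 4 would send this prompt to a generative model
```

In this sketch the question matches only `governance_score` directly, but the prompt also carries the peer average, percentile group, and methodology, while the unrelated `employee_count` stays out.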

The "learned context" in the patent's title suggests the adjacency relevance scoring isn't purely rule-based: the system presumably trains on query-response pairs to get better at knowing which neighboring fields actually help.
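
To make that speculation concrete, here is one very simple way such relevance could be learned. Everything in it is an assumption for illustration: the `FEEDBACK` log, the field names, the add-one smoothing, and the 0.5 threshold are invented here and are not described in the patent.

```python
from collections import defaultdict

# Hypothetical feedback log: (matched field, neighboring field, did it help?)
FEEDBACK = [
    ("governance_score", "peer_average", True),
    ("governance_score", "peer_average", True),
    ("governance_score", "peer_average", False),
    ("governance_score", "methodology", True),
    ("governance_score", "last_updated_by", False),
    ("governance_score", "last_updated_by", False),
]


def learn_relevance(feedback):
    """Estimate how often a neighbor helped, with add-one smoothing."""
    helped = defaultdict(int)
    seen = defaultdict(int)
    for first, neighbor, was_helpful in feedback:
        seen[(first, neighbor)] += 1
        helped[(first, neighbor)] += int(was_helpful)
    return {pair: (helped[pair] + 1) / (seen[pair] + 2) for pair in seen}


def select_neighbors(first, candidates, scores, threshold=0.5):
    """Keep neighbors scoring at or above the threshold.

    Pairs with no feedback default to 0.5, so they are still included.
    """
    return [c for c in candidates if scores.get((first, c), 0.5) >= threshold]


scores = learn_relevance(FEEDBACK)
chosen = select_neighbors(
    "governance_score",
    ["peer_average", "methodology", "last_updated_by", "percentile_group"],
    scores,
)
print(chosen)
```

With this made-up log, `last_updated_by` is dropped because it never helped, while `percentile_group` is kept because there is no evidence against it yet.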

What this means for enterprise AI querying structured data

Enterprise AI assistants querying structured data — think Salesforce analytics, SAP reports, or internal BI tools — constantly suffer from incomplete context. A single matched field is rarely enough to answer a business question correctly, which leads to confidently wrong AI answers. IBM's approach is a practical fix for that brittleness.

For you as an end user, this means fewer follow-up questions and less "the AI gave me a number with no context" frustration. For IBM, it fits squarely into its push to make watsonx and related enterprise AI platforms genuinely useful on top of real corporate databases, since structured data is one of the hardest places to deploy LLMs reliably.

This is solid, unsexy infrastructure work that addresses a real and underappreciated failure mode in enterprise AI — the context starvation problem. It's not a flashy foundation-model patent; it's the kind of plumbing that makes the difference between a demo that works and a product that doesn't embarrass you in front of a client. Worth paying attention to if you build or buy AI tools that touch databases.

Editorial commentary on a publicly published patent application. Not legal advice.