Samsung Patents an AI Assistant That Chains Multi-Domain Tasks in One Request

Asking your phone how long it takes to get somewhere and having it automatically set an alarm for your arrival is the exact scenario Samsung's new patent is built around. The filing describes a system that lets a voice assistant chain tasks across completely different app domains in a single request.

How Samsung's chained-intent assistant handles complex requests

Imagine you say to your phone: "Tell me how long it takes to get to the restaurant and set an alarm for when I arrive." That's actually two separate jobs — one for Maps, one for Clock — and today most assistants make you ask for each one individually, or fumble the handoff between them.

Samsung's patent describes a smarter way to handle this. The assistant parses your single request into two distinct intents, runs the first one (navigation), grabs the result (estimated arrival time), and automatically feeds that result into the second task (alarm creation) — without you having to repeat yourself or copy-paste anything.

The key insight is that the output of the first task becomes the input to the second. The system figures out on its own what information needs to flow between the two, using trained models to bridge the gap. It's less like asking two separate apps and more like giving instructions to a capable assistant who just figures out the rest.

How the device links first-domain output to second-domain input

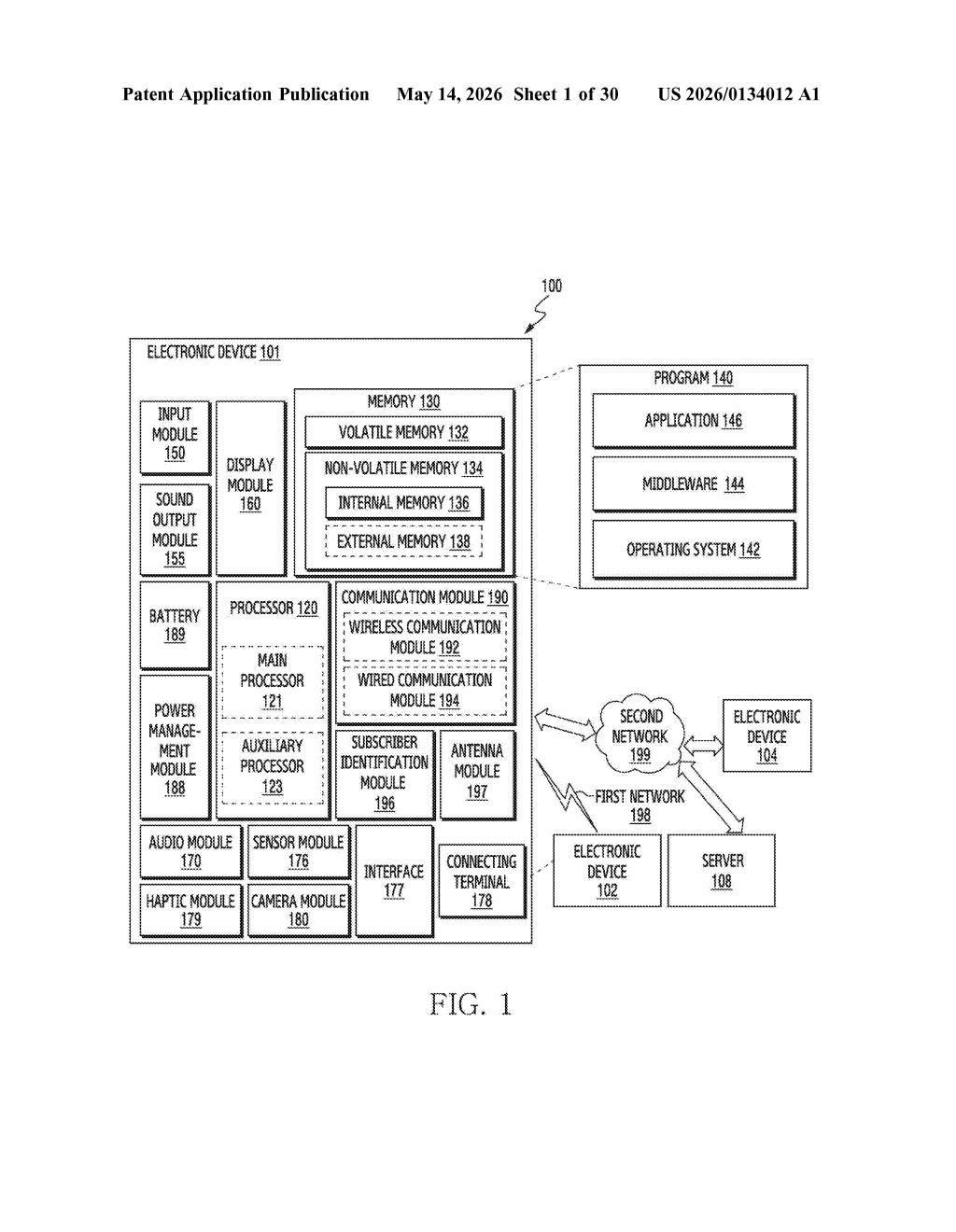

The patent describes an intent chaining architecture running on a device with at least one processor and memory. When you give a language-based input, the system identifies multiple intents buried in that single utterance and assigns each to a domain (think: navigation, calendar, messaging, alarms).
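To make the "multiple intents, each assigned to a domain" idea concrete, here is a minimal sketch. The patent describes trained models doing this work; the keyword table and the `split_clauses`, `assign_domain`, and `parse` helpers below are hypothetical stand-ins chosen only so the example runs on its own, not anything taken from the filing.

```python
import re
from typing import List, Tuple

# Hypothetical keyword table. The filing describes trained models for this
# step; plain keyword matching is used here only as an illustrative stand-in.
DOMAIN_KEYWORDS = {
    "navigation": ("how long", "get to", "directions"),
    "alarm": ("alarm", "wake me"),
    "messaging": ("send a text", "send a message"),
}


def split_clauses(utterance: str) -> List[str]:
    """Split one utterance into candidate clauses on 'and' / 'then'."""
    cleaned = utterance.strip().rstrip(".")
    parts = re.split(r"\s+and\s+|\s+then\s+", cleaned)
    return [p.strip() for p in parts if p.strip()]


def assign_domain(clause: str) -> str:
    """Return the first domain whose keywords appear in the clause."""
    lowered = clause.lower()
    for domain, keywords in DOMAIN_KEYWORDS.items():
        if any(keyword in lowered for keyword in keywords):
            return domain
    return "unknown"


def parse(utterance: str) -> List[Tuple[str, str]]:
    """Turn one utterance into a list of (domain, clause) pairs."""
    return [(assign_domain(c), c) for c in split_clauses(utterance)]


utterance = (
    "Tell me how long it takes to get to the restaurant "
    "and set an alarm for when I arrive."
)
for domain, clause in parse(utterance):
    print(f"{domain}: {clause}")
# navigation: Tell me how long it takes to get to the restaurant
# alarm: set an alarm for when I arrive
```

A real system would need far more than string matching (the second clause only makes sense once "when I arrive" is resolved against the first), which is the gap the chaining steps are meant to close.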

Here's the sequence the patent lays out:

- Your input is parsed into first input data (intent #1, e.g., get travel time) and second input data (intent #2, e.g., set an alarm).

- The first input is sent to an application via one or more trained models — these act as the translation layer between natural language and app-level API calls.

- The app returns first response data (e.g., "arrival at 1:27 PM").

- The system then generates third input data by combining that response with the second intent — essentially constructing a new, context-aware instruction like "set alarm for 1:27 PM."

- That third input goes back to the application layer to produce the second response and deliver the final service.
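The steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the patent's implementation: the `Intent` class, the two stub apps, `dispatch`, and `build_third_input` are all hypothetical names, and the arrival time is hard-coded from the filing's example rather than computed.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Intent:
    domain: str            # e.g. "navigation" or "alarm"
    action: str            # e.g. "get_travel_time"
    slots: Dict[str, str]  # parameters the app needs


# Hypothetical stand-ins for the apps behind the application interface.
def navigation_app(intent: Intent) -> Dict[str, str]:
    # A real app would compute this; the value is the filing's example.
    return {"destination": intent.slots["destination"], "arrival_time": "1:27 PM"}


def alarm_app(intent: Intent) -> Dict[str, str]:
    return {"status": "alarm set", "time": intent.slots["time"]}


APPS: Dict[str, Callable[[Intent], Dict[str, str]]] = {
    "navigation": navigation_app,
    "alarm": alarm_app,
}


def dispatch(intent: Intent) -> Dict[str, str]:
    """Route an intent to the app registered for its domain."""
    return APPS[intent.domain](intent)


def build_third_input(first_response: Dict[str, str], second: Intent) -> Intent:
    """Combine the first response with the second intent into a new input."""
    slots = dict(second.slots)  # copy so the original intent is untouched
    slots["time"] = first_response["arrival_time"]
    return Intent(second.domain, second.action, slots)


def run_chain(first: Intent, second: Intent) -> Dict[str, str]:
    first_response = dispatch(first)                   # first response data
    third = build_third_input(first_response, second)  # third input data
    return dispatch(third)                             # second response data


first = Intent("navigation", "get_travel_time", {"destination": "Sequel"})
second = Intent("alarm", "set_alarm", {"time": "<on arrival>"})
result = run_chain(first, second)
print(result)  # {'status': 'alarm set', 'time': '1:27 PM'}
```

In this sketch the hand-written `build_third_input` knows exactly which field to copy. The patent's claim is that trained models work out that mapping, so the step that is one line here is where most of the real difficulty sits.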

The phrase "third input data" is the clever bit: the system is not just passing along raw output. It synthesizes a new, coherent instruction from the first task's response and what you originally asked for. The patent is framed around a single unified application interface, suggesting this could run through one central AI layer (like Bixby) rather than requiring direct integrations between every pair of apps.

What this means for Samsung's Bixby and on-device AI strategy

For Samsung, this is squarely in Bixby territory. One of the persistent criticisms of Samsung's assistant is that it struggles with compound, multi-step requests — especially when those requests span different app categories. A patent like this signals Samsung is thinking architecturally about how to fix that, building a pipeline where context flows between tasks rather than resetting after each one.

For you as a user, the practical win is fewer back-and-forth exchanges with your assistant. The embedded example in the patent filing, travel time to "Sequel" triggering an alarm set for 1:27 PM, is mundane on purpose: it's the kind of thing people actually try to do and currently can't do smoothly. Getting the boring everyday stuff right is where assistant credibility is won or lost.

This is a solid, focused patent on a real usability gap — nobody enjoys manually copying an ETA from Maps into Clock. The intent-chaining approach is well-scoped and the worked example in the filing makes the value proposition unusually clear. It's not a moonshot, but it's exactly the kind of plumbing work that separates assistants people actually use from ones they give up on.

Editorial commentary on a published patent application. Not legal advice.