Adobe Patents a One-Step Transformer Prior for Text-to-Image Synthesis

Adobe is patenting a shortcut in the text-to-image pipeline — a transformer-based model that converts a text prompt into an image embedding in a single forward pass, rather than the usual multi-step diffusion process.

What Adobe's one-step image embedding actually does

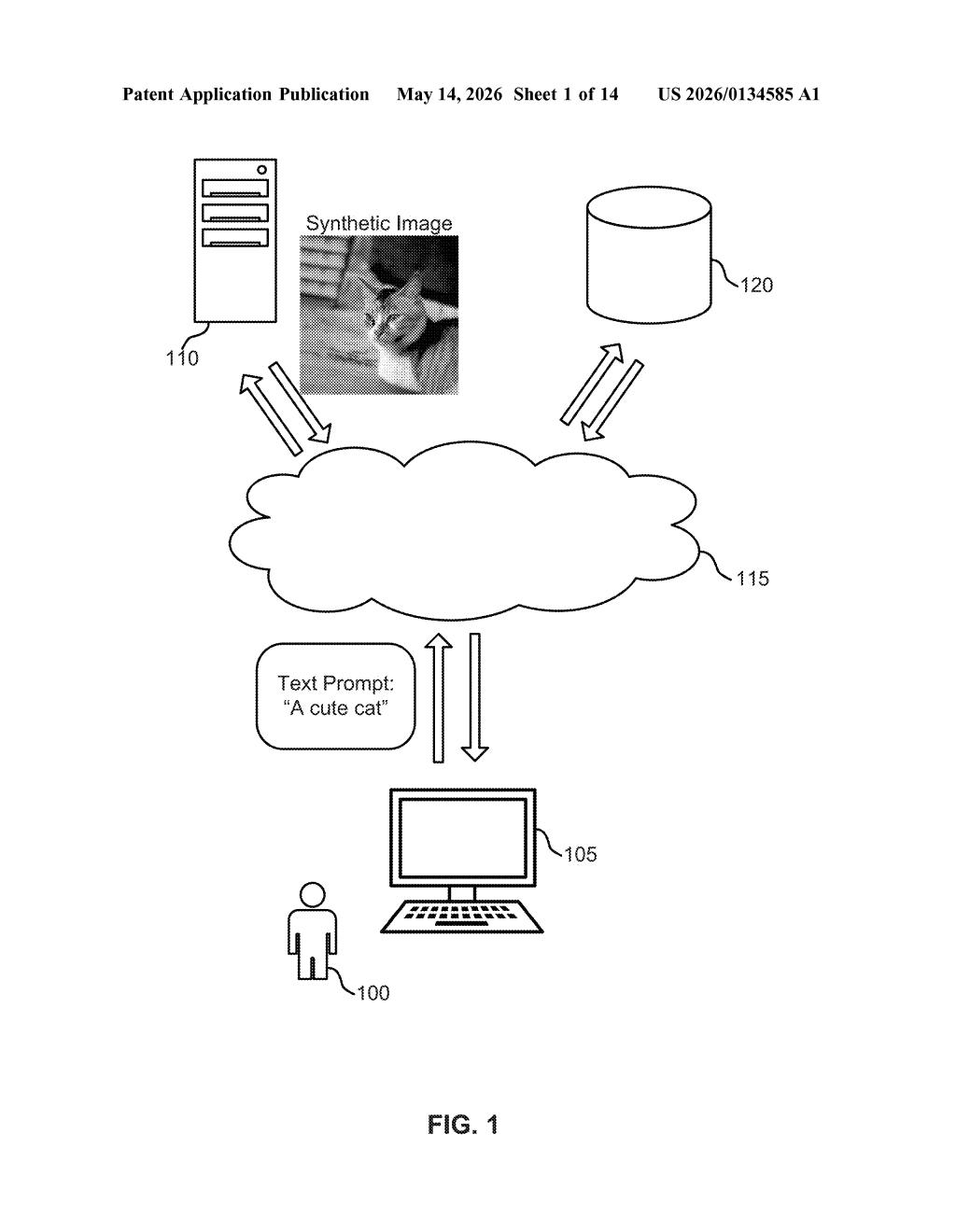

Imagine you type "a cute cat" into an AI image generator. Normally, the system has to go through a long, iterative back-and-forth process to figure out what visual features — fur texture, ear shape, color — that phrase implies before it even starts drawing. That's slow and compute-heavy.

Adobe's patent describes a shortcut: a prior model built on transformer architecture that jumps straight from your text prompt to a compact bundle of visual information (called an image embedding) in one single step. That embedding then hands off to a separate image generation model to produce the final picture.

The result is a leaner, faster pipeline where the "what should this look like?" question gets answered almost instantly. For a tool like Adobe Firefly, where users iterate quickly on creative prompts, shaving off that inference time could make the experience feel noticeably snappier.

How the transformer prior skips iterative diffusion steps

The patent describes a three-stage pipeline:

- Text prompt input: A user provides a natural-language description of an image element (e.g., "a cute cat").

- Transformer prior model: This model takes the text prompt and generates an image embedding — a dense numerical representation of the visual features implied by the prompt. Crucially, this happens in one inference step, not through iterative denoising like a standard diffusion model.

- Image generation model: The embedding is passed to a separate generative model, which renders the final synthetic image.

The key innovation is in that middle stage. Traditional text-to-image systems often rely on diffusion-based prior models (think DALL-E's CLIP prior), which require many sequential denoising steps to converge on a good image embedding — each step is a separate model call, adding latency.

A transformer prior (a neural network that processes sequences using attention mechanisms — essentially, the same core tech behind GPT) can be trained to predict the target embedding directly, collapsing that multi-step process into one. The claim is specifically about one-step inference, which is the computationally expensive bottleneck being targeted here.

What this means for Adobe's Firefly pipeline speed

For Adobe Firefly users, faster prior inference means shorter wait times between typing a prompt and seeing a result — which matters a lot when you're iterating through dozens of creative variations in a single session. Cloud inference costs also drop when you're running fewer model calls per image.

More broadly, this patent reflects a competitive pressure across the industry to make generative AI pipelines leaner. As rivals like Midjourney, OpenAI, and Stability AI race to cut latency, Adobe needs Firefly to keep pace — especially as it integrates deeper into Photoshop, Illustrator, and Express, where users expect near-real-time feedback.

This is a focused, incremental optimization patent rather than a conceptual leap — Adobe is essentially patenting a specific architectural choice (transformer prior, single-step inference) in a well-understood pipeline. It's not flashy, but latency reduction in generative AI is a real competitive battlefield right now, and owning prior art on this approach in a creative tools context is a reasonable defensive move.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.