Adobe Patents a Single Neural Network That Runs Multiple Segmentation Tasks at Once

Cutting out a subject, isolating a sky, and detecting every person in a photo usually means running three separate AI models. Adobe's new patent describes a way to do all of that in one pass.

What Adobe's multi-task segmentation model actually does

Imagine you're editing a photo in Photoshop and you want to do three things: remove the background, select just the people, and isolate the sky. Right now, tools like that typically run separate AI models for each job — which takes time and computing power.

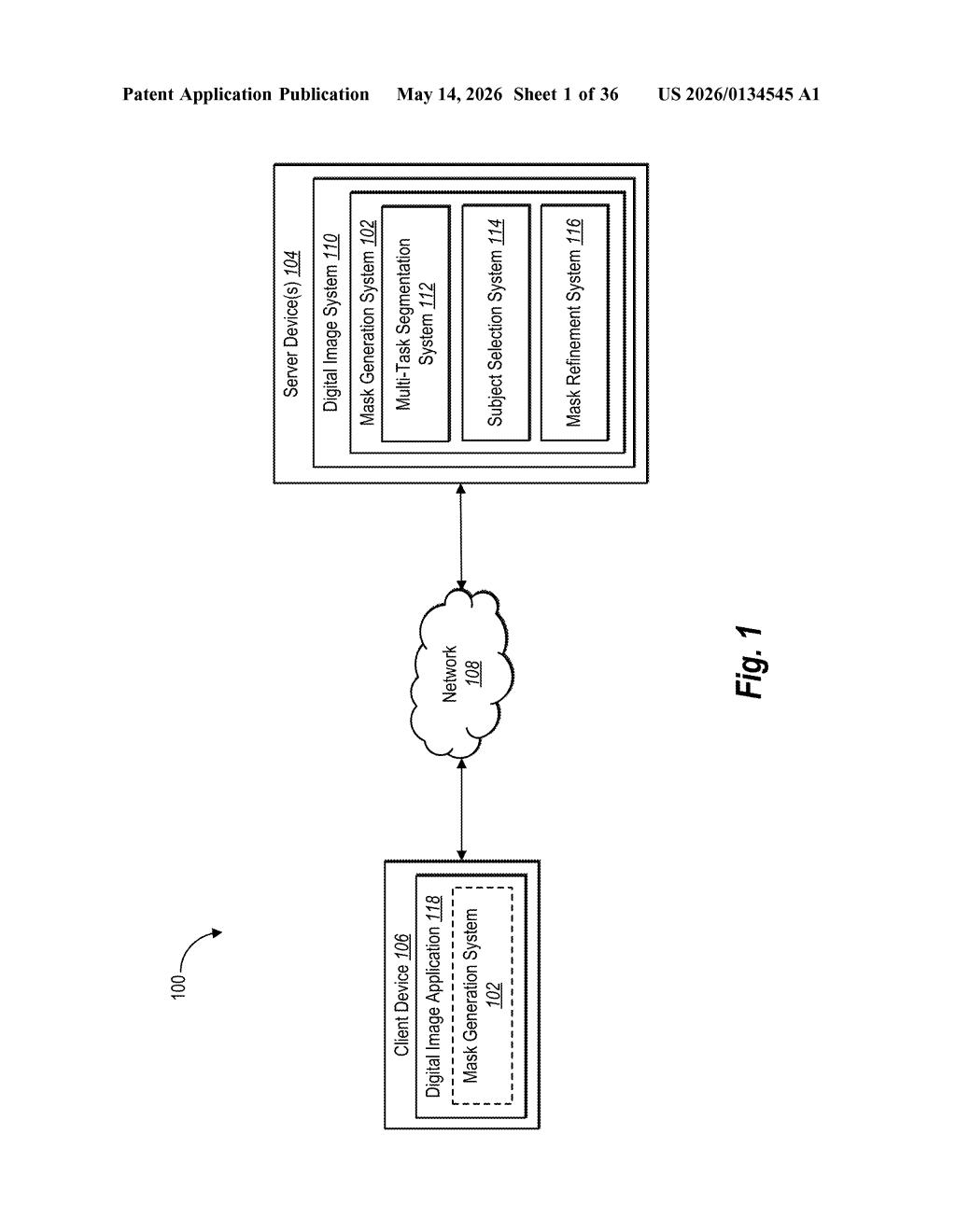

Adobe's patent describes a smarter approach: one neural network that handles all those segmentation tasks at the same time. You feed it a photo, and it produces multiple different selection masks — one for subjects, one for objects, one for whatever else you need — in a single model run.

The trick is a modular design where each task gets its own specialized "query decoder" that learns what to look for, while the heavy lifting of understanding the image is shared. It's like one very well-read analyst briefing multiple specialists, rather than each specialist reading the whole report from scratch.

How Adobe's query decoders split one model into many tasks

The system has three main components working in sequence:

- Image encoder: Takes the input photo and compresses it into encoded feature maps — rich numerical representations of what's in the image, where things are, and how they relate to each other.

- Pixel decoder: Takes those feature maps and produces a set of mask features — a lower-level spatial representation that's useful for drawing precise outlines around things.

- Query decoder neural networks (plural): This is the key innovation. Instead of one decoder, there are multiple query decoders, each associated with a different segmentation task. Each decoder has its own "learned queries" — trainable vectors that essentially encode "what does an instance segmentation mask look like?" versus "what does a semantic segmentation mask look like?"

Because the image encoder and pixel decoder are shared across all tasks, the system avoids redundant computation. The task-specific query decoders then interpret the shared mask features through their own learned lenses, producing distinct segmentation outputs simultaneously.

This architecture resembles the Mask2Former family of transformer-based segmentation models, extended to a multi-task regime — which is consistent with Adobe's published research in this space.

What this means for Photoshop's AI selection tools

For Photoshop and Adobe Express users, faster and more accurate selections are the most visible payoff. If Adobe can run subject selection, object detection, and semantic segmentation in one model call rather than three, that means quicker results and less battery drain — especially important on-device or in the browser.

For Adobe's broader AI platform strategy, this matters too. A single unified segmentation backbone that serves multiple tools — Remove Background, Generative Fill, Select Subject — is far more efficient to maintain and fine-tune than a zoo of separate models. That kind of architectural consolidation is what separates mature AI product pipelines from early-stage demos.

This is genuinely interesting systems-level work, not a flashy consumer feature patent. Adobe has published academic research closely aligned with this architecture (their work on multi-task segmentation is well-regarded), so this filing looks like the IP layer being put around real shipping infrastructure. If you care about how creative AI tools get faster and cheaper to run, this is worth tracking.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.