Sony Patents a Way to Stream 3D Scans at Any Detail Level from One File

Sony is working on a way to store 3D scans of real-world objects so that a weak device can load a low-detail version while a powerful one loads the full-resolution model — all from the same file.

What Sony's layered 3D point cloud format actually does

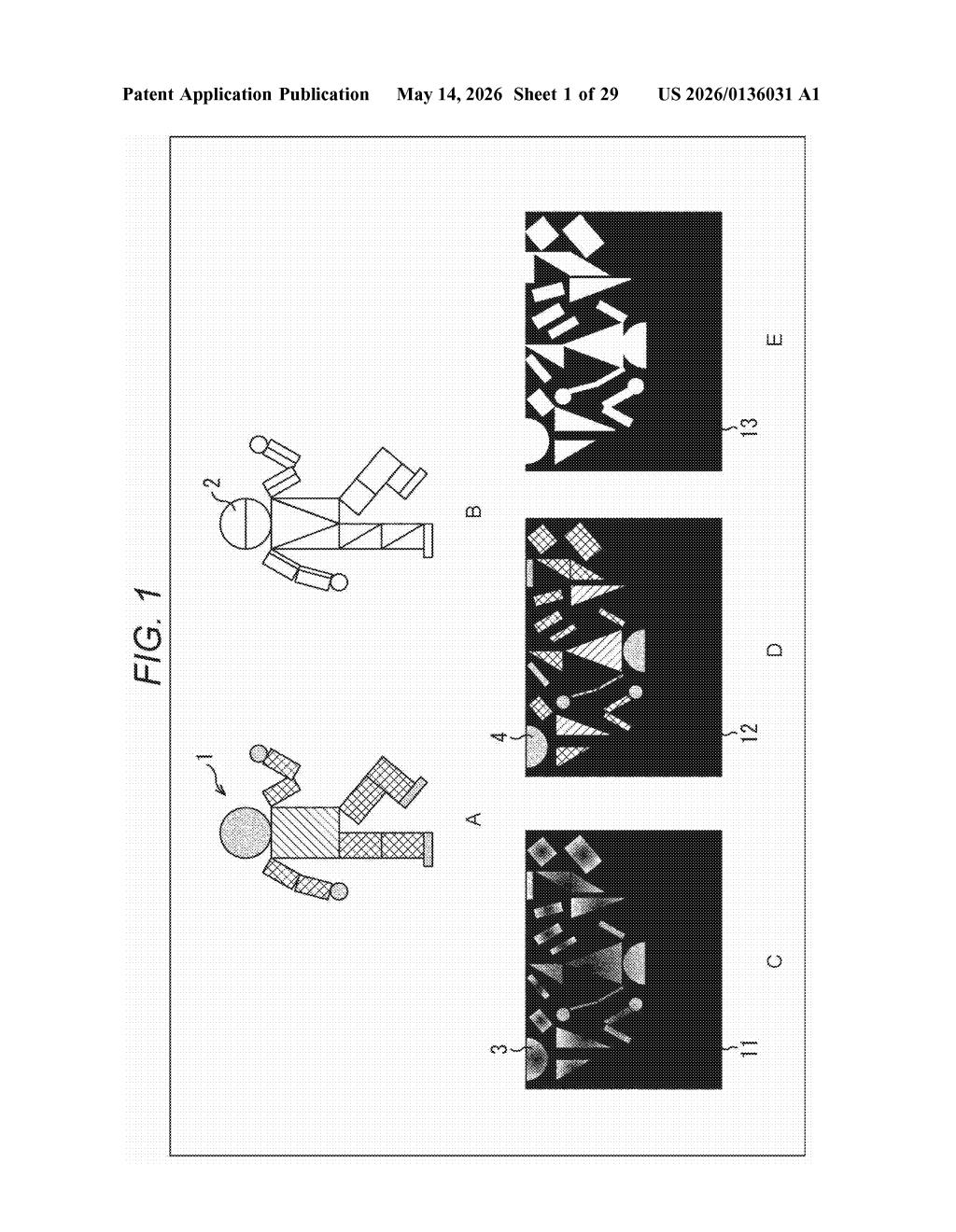

Imagine you're wearing a mixed-reality headset and you want to see a 3D scan of a car. Your headset is powerful, so you want every tiny surface detail. But your friend's phone, viewing the same scene, can only handle a rougher version. Without a scalable format, you'd typically need a separate file for each quality level. Sony's patent describes a single file format that contains multiple levels of detail, stacked as layers.

The underlying data is a point cloud — basically a 3D object described as millions of tiny dots in space, like a laser scan. Sony's system converts that into a 2D format (easier to compress), encodes it in layers of increasing detail, and bundles it all into one file with a map explaining what's inside.

When you go to play it back, your device reads that map, picks the layer that matches its capabilities, and only decodes what it needs. You get the right quality for your hardware without wasting bandwidth or processing power.

How Sony encodes and extracts spatial scalability layers

The patent describes a pipeline with two main sides: encoding (creating the file) and decoding (reading it back).

On the encoding side, a point cloud — a 3D object represented as a dense set of XYZ-coordinate points — is first flattened into a 2D image-like format. This makes it compatible with existing video compression tools. The system then encodes this 2D data using spatial scalability (a technique borrowed from video coding where one bitstream contains multiple resolution layers, each building on the previous one). The output is a sub-bitstream per layer, all bundled into a single file alongside spatial scalability information — essentially a metadata map describing which layer lives where.
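To make the encoding side concrete, here is a minimal sketch in Python. It is not Sony's method: the flattening step is a simple top-down projection, the layers are plain lossless residuals, and every function name is hypothetical. Real scalable codecs also quantize and entropy-code each layer, which this toy skips. What it does show is the "each layer builds on the previous one" idea.

```python
from typing import List, Tuple

Point = Tuple[float, float, float]
Grid = List[List[int]]


def project_to_depth_map(points: List[Point], size: int) -> Grid:
    """Flatten points onto the XY plane; each pixel keeps the nearest depth.

    Assumes x and y lie in [0, 1] and z in [0, 1]. Depth is quantized to
    1..255, and 0 marks an empty pixel.
    """
    grid = [[0] * size for _ in range(size)]
    for x, y, z in points:
        col = min(int(x * size), size - 1)
        row = min(int(y * size), size - 1)
        depth = 1 + int(z * 254)
        if grid[row][col] == 0 or depth < grid[row][col]:
            grid[row][col] = depth
    return grid


def downsample(grid: Grid) -> Grid:
    """Halve the resolution by keeping the top-left sample of each 2x2 block."""
    return [row[::2] for row in grid[::2]]


def upsample(grid: Grid) -> Grid:
    """Double the resolution by repeating each sample (nearest neighbour)."""
    out: Grid = []
    for row in grid:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def encode_layers(grid: Grid, num_layers: int) -> List[Grid]:
    """Return [base, residual_1, ...], coarsest first.

    The grid size must be divisible by 2 ** (num_layers - 1).
    """
    pyramid = [grid]
    for _ in range(num_layers - 1):
        pyramid.append(downsample(pyramid[-1]))
    pyramid.reverse()  # coarsest resolution first
    layers = [pyramid[0]]
    for coarse, fine in zip(pyramid, pyramid[1:]):
        predicted = upsample(coarse)
        # An enhancement layer stores only what the prediction got wrong.
        layers.append([[f - p for f, p in zip(fine_row, pred_row)]
                       for fine_row, pred_row in zip(fine, predicted)])
    return layers


def reconstruct(layers: List[Grid], up_to: int) -> Grid:
    """Rebuild the 2D image from layers 0..up_to (inclusive)."""
    image = layers[0]
    for residual in layers[1:up_to + 1]:
        predicted = upsample(image)
        image = [[p + r for p, r in zip(pred_row, res_row)]
                 for pred_row, res_row in zip(predicted, residual)]
    return image


points = [(0.1, 0.1, 0.5), (0.9, 0.2, 0.3), (0.5, 0.5, 0.9),
          (0.52, 0.5, 0.1), (0.99, 0.99, 1.0)]
depth_map = project_to_depth_map(points, size=8)
layers = encode_layers(depth_map, num_layers=3)  # 2x2 base, then 4x4 and 8x8 residuals
```

Calling `reconstruct(layers, 0)` gives the coarse 2x2 image a weak device might settle for, while `reconstruct(layers, 2)` adds both enhancement layers and recovers the full 8x8 depth map.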

On the decoding side, the patent's independent claim covers a device with three units:

- Selection unit: reads the metadata map and picks the appropriate layer based on device capability or user preference

- Extraction unit: pulls only the relevant sub-bitstream from the file, skipping data the device doesn't need

- Decoding unit: decompresses that sub-bitstream into usable 3D geometry
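The three units above can be sketched as three small Python functions. Everything here is an assumption for illustration: the `LayerInfo` and `Container` types, the pixel-budget rule for choosing a layer, and `zlib` standing in for a real video decoder. The sketch also assumes each enhancement layer depends on the layers below it, so "the relevant sub-bitstream" means layers 0 through the selected one.

```python
import zlib
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class LayerInfo:
    """One entry in the (hypothetical) spatial scalability map."""
    layer_id: int  # 0 is the base layer
    width: int     # resolution of the 2D image this layer reconstructs
    height: int


@dataclass
class Container:
    scalability_info: List[LayerInfo]
    sub_bitstreams: Dict[int, bytes]  # layer_id -> compressed data


def select_layer(info: List[LayerInfo], max_pixels: int) -> LayerInfo:
    """Selection unit: pick the highest layer that fits the device's budget."""
    affordable = [layer for layer in info
                  if layer.width * layer.height <= max_pixels]
    if not affordable:
        # Nothing fits, so fall back to the base layer.
        return min(info, key=lambda layer: layer.layer_id)
    return max(affordable, key=lambda layer: layer.layer_id)


def extract(container: Container, target: LayerInfo) -> List[bytes]:
    """Extraction unit: take layers 0..target and leave the rest untouched."""
    return [container.sub_bitstreams[i] for i in range(target.layer_id + 1)]


def decode(sub_bitstreams: List[bytes]) -> List[bytes]:
    """Decoding unit: zlib is a stand-in for the actual codec."""
    return [zlib.decompress(data) for data in sub_bitstreams]


def play(container: Container, max_pixels: int) -> List[bytes]:
    target = select_layer(container.scalability_info, max_pixels)
    return decode(extract(container, target))


raw = [b"base" * 4, b"detail-1" * 4, b"detail-2" * 4]
container = Container(
    scalability_info=[LayerInfo(0, 64, 64), LayerInfo(1, 128, 128),
                      LayerInfo(2, 256, 256)],
    sub_bitstreams={i: zlib.compress(data) for i, data in enumerate(raw)},
)
phone = play(container, max_pixels=128 * 128)      # base plus one enhancement layer
headset = play(container, max_pixels=1024 * 1024)  # all three layers
```

Keeping selection separate from extraction means the choice could just as easily come from a user setting as from a hardware check, which matches the "device capability or user preference" wording in the claim.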

The key insight is that the metadata map (spatial scalability information) is stored inside the file container, so a decoder doesn't have to parse the entire bitstream to figure out what's available — it can navigate directly to the right layer.
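A rough way to picture that navigation: put a small index at the front of the file listing where each layer's bytes start and how long they are. The byte layout below (the `PCL0` magic value, the field sizes, the function names) is invented for this sketch and is not taken from the patent or from any real container standard.

```python
import io
import struct
from typing import BinaryIO, List

MAGIC = b"PCL0"                 # hypothetical file signature
HEADER = struct.Struct("<4sH")  # magic, number of layers
ENTRY = struct.Struct("<HQQ")   # layer id, byte offset, byte length


def write_container(sub_bitstreams: List[bytes]) -> bytes:
    """Write the index first, then the layer payloads back to back."""
    out = io.BytesIO()
    out.write(HEADER.pack(MAGIC, len(sub_bitstreams)))
    offset = HEADER.size + ENTRY.size * len(sub_bitstreams)
    for layer_id, data in enumerate(sub_bitstreams):
        out.write(ENTRY.pack(layer_id, offset, len(data)))
        offset += len(data)
    for data in sub_bitstreams:
        out.write(data)
    return out.getvalue()


def read_layer(f: BinaryIO, layer_id: int) -> bytes:
    """Read index entries until the layer is found, then seek straight to it."""
    magic, count = HEADER.unpack(f.read(HEADER.size))
    if magic != MAGIC:
        raise ValueError("not a layered point cloud container")
    for _ in range(count):
        entry_id, offset, length = ENTRY.unpack(f.read(ENTRY.size))
        if entry_id == layer_id:
            f.seek(offset)  # jump over every other layer's payload
            return f.read(length)
    raise KeyError(layer_id)


blob = write_container([b"base", b"enh-1-data", b"enh-2"])
layer_one = read_layer(io.BytesIO(blob), 1)
```

The reader touches only the index entries and the one payload it asked for. Without the index, it would have to scan through the payloads themselves to learn where each layer begins.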

What this means for immersive video and spatial computing

Point clouds are a core data format for LiDAR scans, volumetric video, and spatial computing: exactly the content pipeline that powers AR/VR, autonomous vehicle mapping, and next-generation streaming. As headsets and spatial displays become more mainstream, content delivery needs to handle wildly different device capabilities gracefully. Sony's approach borrows a well-proven idea from video streaming (adaptive bitrate delivery, scalable video coding) and applies it to 3D geometry.

For you as a developer or end user, this could mean smoother experiences across devices: a phone, a standalone headset, and a high-end PC could all consume the same 3D asset file at the quality level each can handle. Sony's position in both professional imaging hardware and PlayStation VR gives this patent a plausible path to real products.

This is solid infrastructure work in a space that genuinely needs it — 3D point cloud delivery is still a mess of proprietary formats and bespoke pipelines. Sony isn't reinventing compression here; they're applying mature scalable-video ideas to geometry data and, critically, solving the file-container problem so decoders can navigate layers efficiently. It's not flashy, but it's the kind of unsexy spec work that shipping products actually depend on.

Editorial commentary on a publicly published patent application. Not legal advice.