Sony Patents a Way to Teach AI by Showing It the Easy Problem First

What if you could teach an AI to solve a hard problem by first having it practice the easy problem that runs in the opposite direction? That's the core idea behind Sony's latest language model patent, and it has direct implications for how game characters talk to you.

How Sony trains its NPC dialogue AI backwards

Imagine trying to teach someone to read a map by quizzing them on arbitrary routes. It's hard. But ask them to draw a route given a start and end point, and suddenly it's easy. Sony's patent uses the same logic for AI training.

Supervised AI training usually works by showing a model example inputs paired with the outputs it should produce: input goes in, output comes out. Sony's approach flips the order. Instead of starting by teaching the model to extract meaning from sentences, it first asks the model to generate sentences from meaning. The AI creates thousands of example sentences from simple word pairs, and those examples are then used to train it on the harder reverse task.

The goal is to power NPC dialogue: the conversations non-player characters have with you in video games. Trained this way, Sony's system is meant to better understand what a player says and to respond in a way that feels natural and in-character.

Inside Sony's reverse input-output fine-tuning loop

The patent describes a technique called reverse-order data generation for fine-tuning a large language model (LLM). Instead of feeding the model a pile of real-world sentence-to-meaning examples — which are hard to collect and label — the system bootstraps its own training data.

Here's the loop:

- Start with simple object-action pairs (e.g., "sword, attack" or "dragon, fly").

- Feed those pairs into the LLM and ask it to generate natural sentences that use them — the easy direction.

- Take those generated sentences and pair them back with their source object-action labels to create a synthetic training dataset.

- Fine-tune the LLM on that reversed dataset so it learns to extract object-action meaning from sentences — the hard direction.
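The four steps can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not Sony's implementation: `generate_sentences` is a hypothetical template-based stand-in for prompting the LLM, the extra seed pair is invented for illustration, and the prompt/completion record layout is an assumed, generic shape for supervised fine-tuning data. The fine-tuning run itself is out of scope; the sketch stops once the reversed dataset is ready.

```python
import json

# Step 1: seed object-action pairs (the first two are this article's examples;
# "potion, drink" is a hypothetical extra).
SEED_PAIRS = [("sword", "attack"), ("dragon", "fly"), ("potion", "drink")]

# Hypothetical stand-in for the LLM. A real pipeline would prompt the model for
# varied sentences; fixed templates keep this sketch runnable offline.
TEMPLATES = [
    "Let me {action} with the {obj}.",
    "Can the {obj} help me {action}?",
    "I need the {obj} to {action} now.",
]


def generate_sentences(obj: str, action: str) -> list[str]:
    """Step 2 (easy direction): meaning in, sentences out."""
    return [t.format(obj=obj, action=action) for t in TEMPLATES]


def build_reversed_dataset(pairs: list[tuple[str, str]]) -> list[dict]:
    """Step 3: each generated sentence becomes an input; its seed pair becomes the label."""
    records = []
    for obj, action in pairs:
        for sentence in generate_sentences(obj, action):
            records.append({"sentence": sentence, "object": obj, "action": action})
    return records


def to_sft_jsonl(records: list[dict]) -> str:
    """Prepare step 4 (hard direction): sentence in, object-action pair out.

    The prompt/completion layout is an assumed, generic format; adapt it to
    whatever fine-tuning tool is actually used.
    """
    lines = []
    for r in records:
        prompt = f"Extract the object and action from: {r['sentence']}"
        completion = json.dumps({"object": r["object"], "action": r["action"]})
        lines.append(json.dumps({"prompt": prompt, "completion": completion}))
    return "\n".join(lines)


dataset = build_reversed_dataset(SEED_PAIRS)
jsonl = to_sft_jsonl(dataset)
print(len(dataset))  # 9 records: 3 pairs x 3 templates
print(jsonl.splitlines()[0])
```

The key move is in `build_reversed_dataset`: nothing is labelled by hand, because the label for every sentence is simply the input that produced it.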

This is a form of synthetic data augmentation — using the model's own generative ability to produce labeled examples it couldn't otherwise get at scale. The technical insight is that generation (easy) and extraction (hard) are asymmetric problems, and you can exploit that gap.
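To make the asymmetry concrete, here is a toy version of the hard direction learned purely from generated examples. It is a sketch, not Sony's method: a small multinomial naive Bayes classifier (a swapped-in technique) stands in for fine-tuning an LLM, and the seed pairs and sentence templates are hypothetical. No hand-labelled player sentence is used anywhere; every training label is the pair that produced the sentence.

```python
import math
from collections import Counter, defaultdict

# Hypothetical seed pairs; "sword" appears with two actions so labels are not 1:1.
PAIRS = [("sword", "attack"), ("sword", "block"), ("shield", "block"),
         ("dragon", "fly"), ("potion", "drink")]
# Hypothetical templates standing in for LLM generation (the easy direction).
TEMPLATES = ["Let me {action} with the {obj}.",
             "Can the {obj} help me {action}?",
             "I need the {obj} to {action} now."]


def tokenize(text: str) -> list[str]:
    return [w.strip(".,?!").lower() for w in text.split()]


class NaiveBayes:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def fit(self, docs, labels):
        self.label_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        self.vocab = set()
        for tokens, label in zip(docs, labels):
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, tokens):
        total_docs = sum(self.label_counts.values())
        best_label, best_score = "", -math.inf
        for label, n_docs in self.label_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / total_docs)  # log prior
            for t in tokens:
                score += math.log((counts[t] + 1) / denom)  # smoothed log likelihood
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Easy direction: generate labelled sentences from the seed pairs.
synthetic = [(tokenize(t.format(obj=o, action=a)), o, a)
             for o, a in PAIRS for t in TEMPLATES]
docs = [tokens for tokens, _, _ in synthetic]

# Hard direction: learn sentence -> object and sentence -> action from that data.
object_model = NaiveBayes().fit(docs, [o for _, o, _ in synthetic])
action_model = NaiveBayes().fit(docs, [a for _, _, a in synthetic])

player_line = tokenize("I want to attack the goblin with my sword")
print(object_model.predict(player_line), action_model.predict(player_line))  # sword attack
```

A bag-of-words classifier like this only recognizes words it saw during generation, which is close to keyword matching. Fine-tuning an LLM on the same kind of reversed data is what would let the extractor generalize to phrasings the generator never produced.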

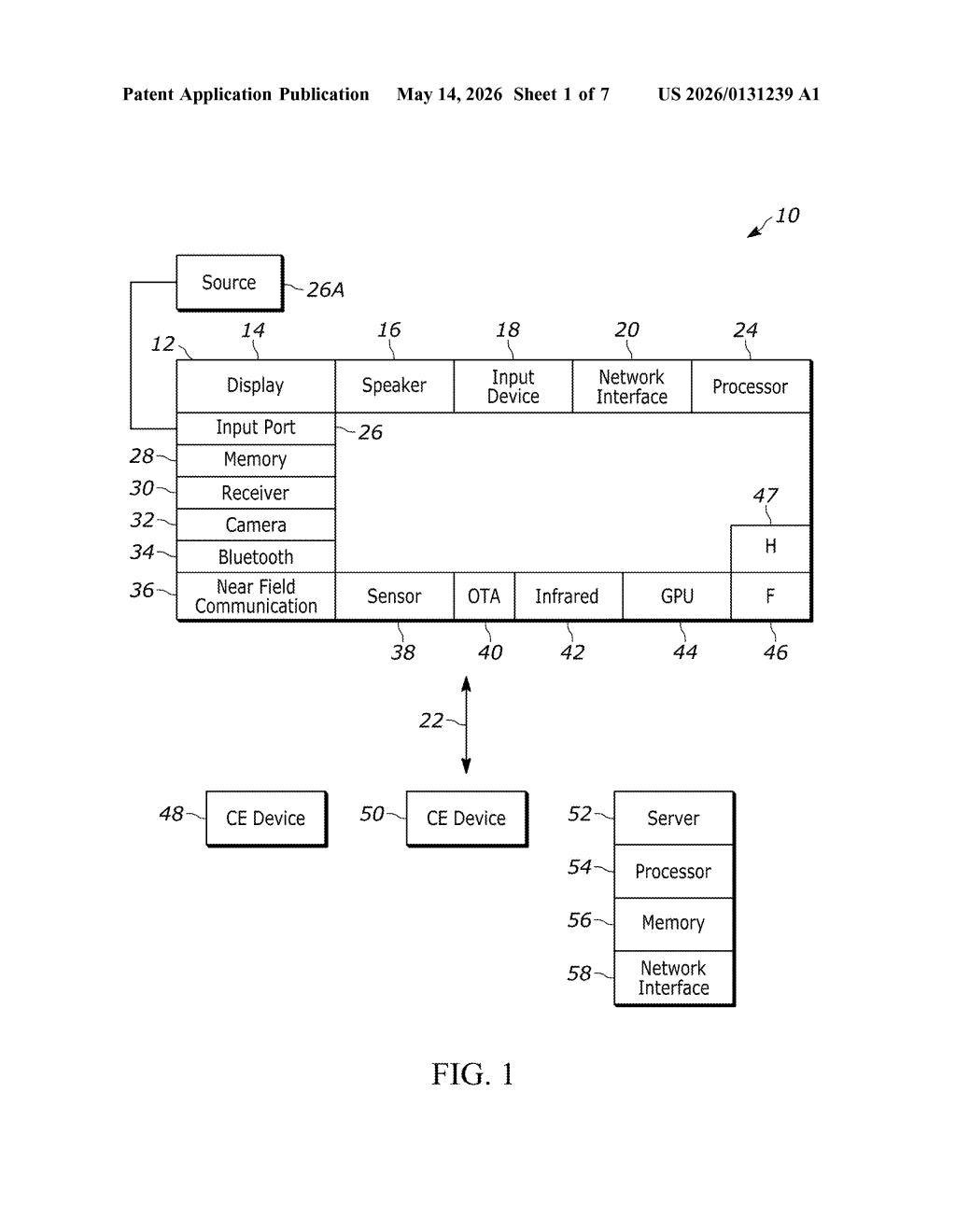

The trained model is then deployed on a game server or console to interpret player speech in real time and generate contextually appropriate NPC dialogue responses.
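One way the deployed pieces could fit together is sketched below. This is a hypothetical design, not something described in the patent as summarized here: `build_npc_prompt`, the persona string, and the prompt wording are illustrative, and `extract` is a placeholder for the fine-tuned extraction model. The idea is that the structured object-action pair gives the response step an explicit reading of the player's intent rather than raw text alone.

```python
from typing import Callable, Optional, Tuple

Pair = Tuple[str, str]  # (object, action)


def build_npc_prompt(persona: str, utterance: str,
                     extract: Callable[[str], Optional[Pair]]) -> str:
    """Turn a player utterance into a prompt for an in-character NPC reply.

    `extract` stands in for the fine-tuned model; it returns None when it
    cannot find an object-action pair.
    """
    pair = extract(utterance)
    if pair is None:
        intent = "unclear; ask the player to rephrase"
    else:
        obj, action = pair
        intent = f"object={obj}, action={action}"
    return (
        f"You are {persona}. Stay in character.\n"
        f"Player said: {utterance}\n"
        f"Interpreted intent: {intent}\n"
        "Reply in one or two sentences."
    )


# Usage with a hypothetical stub extractor in place of the real model.
def stub_extract(text: str) -> Optional[Pair]:
    return ("sword", "attack") if "sword" in text.lower() else None


print(build_npc_prompt("a gruff village blacksmith",
                       "Can I attack with this sword?", stub_extract))
```

The returned string would then go to whatever model writes the NPC's line; keeping extraction and response as separate steps means each can be swapped or tuned independently.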

What this means for smarter NPCs in PlayStation games

For game developers, this is a pragmatic solution to a real bottleneck: getting enough labeled dialogue data to train a responsive NPC AI is expensive and slow. If Sony can bootstrap that data from simple word pairs, it lowers the barrier to shipping AI-driven characters that actually understand what you're saying — not just matching keywords.

More broadly, the reverse-generation trick is a transferable idea. Any domain where annotated input-output pairs are scarce but the reverse direction is easy to generate could benefit from this approach. Sony's specific application is games, but the underlying method is general enough that it could show up in other interactive AI contexts too.

This is a genuinely clever training trick, not just an incremental tweak. The asymmetry insight — that generation is easier than extraction — is the kind of practical ML intuition that tends to age well and get reused. Sony's application to NPC dialogue is concrete and believable, which makes this one of the more grounded game-AI patents in recent memory.


Editorial commentary on a published patent application. Not legal advice.