Sony Patents Angle-Aware Pixel Design to Fix CMOS Sensor Sensitivity Roll-Off

Light doesn't always hit a camera sensor straight on, and that angle matters more than most people realize. Sony is patenting a way to physically tune each pixel's internal structure to the angle at which light is expected to arrive, potentially smoothing out the sensitivity differences that plague sensors paired with wide-angle and large-aperture lenses.

What Sony's angle-tuned pixel diffusion actually does

Imagine taking a photo with a wide-angle lens. The pixels in the center of your sensor receive light almost straight on, but pixels near the edges get light coming in at a steep angle. Those edge pixels collect light less efficiently as a result, which is part of why photos can look dimmer or slightly softer toward the corners. The brightness loss is known as vignetting or sensitivity roll-off.
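To put rough numbers on that center-versus-edge difference, here is a minimal sketch. It is not from the patent: it assumes a simple model in which light reaches each pixel from the center of the lens's exit pupil, and it uses the textbook cos^4 approximation for how illumination falls off with angle. The sensor size, the 30 mm exit pupil distance, and the function names are all hypothetical.

```python
import math

def chief_ray_angle_deg(image_height_mm: float, exit_pupil_distance_mm: float) -> float:
    """Angle between the sensor normal and a ray from the exit pupil center."""
    return math.degrees(math.atan2(image_height_mm, exit_pupil_distance_mm))

def cos4_relative_illumination(angle_deg: float) -> float:
    """cos^4 approximation of illumination relative to the on-axis value."""
    return math.cos(math.radians(angle_deg)) ** 4

# Hypothetical setup: 36 x 24 mm sensor, exit pupil 30 mm in front of it.
HALF_DIAGONAL_MM = math.hypot(36.0, 24.0) / 2
EXIT_PUPIL_MM = 30.0

for label, r in (("center", 0.0),
                 ("mid-field", HALF_DIAGONAL_MM / 2),
                 ("corner", HALF_DIAGONAL_MM)):
    angle = chief_ray_angle_deg(r, EXIT_PUPIL_MM)
    rel = cos4_relative_illumination(angle)
    print(f"{label:9s} {angle:5.1f} deg  relative illumination {rel:.2f}")
```

Under these assumed numbers the corner ray arrives at roughly 36 degrees off the normal, and the cos^4 model puts corner illumination at under half the on-axis value. That model only covers the optical falloff; the pixel-level collection loss the patent targets comes on top of it.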

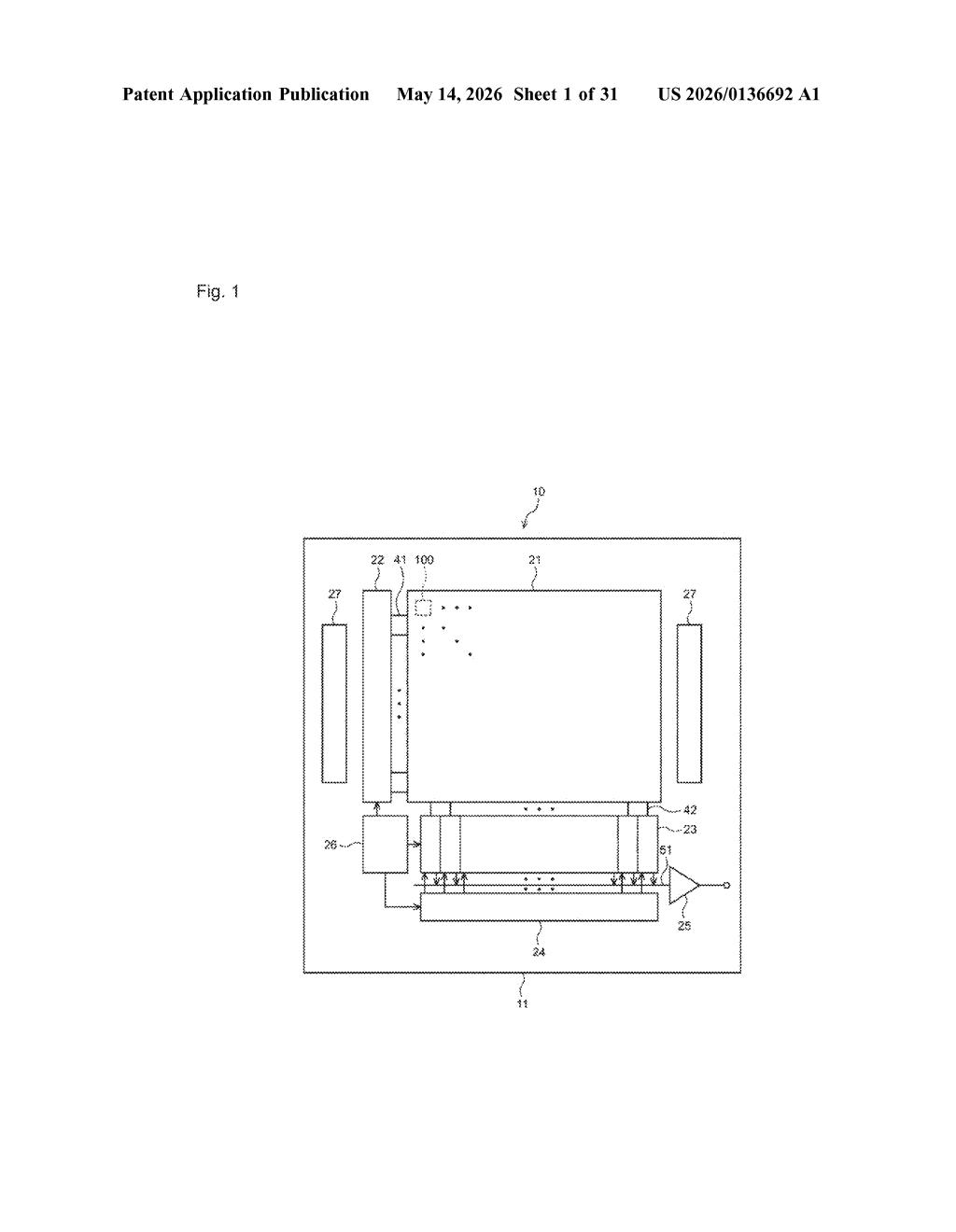

Sony's patent proposes a fix baked directly into the silicon. Instead of every pixel being built identically, the diffusion regions — tiny doped areas inside each pixel that help move captured electrons toward the readout circuit — would be shaped and positioned differently depending on the angle of light expected at that pixel's location on the sensor.

The idea is that if each pixel's internal geometry is already optimized for the light angle it's actually going to receive, the whole sensor behaves more uniformly. You get more consistent sensitivity from center to edge without relying entirely on software correction after the fact.

How diffusion regions are mapped to incoming light angles

Inside every pixel on a CMOS image sensor, there's a photoelectric conversion region, typically a photodiode, that turns incoming photons into electrical charge. Getting that charge out cleanly requires carefully designed diffusion regions: areas of the semiconductor substrate that are doped (implanted with impurity atoms) to create an electric field that sweeps electrons toward the collection point.

In a conventional sensor, these diffusion regions are laid out the same way for every pixel across the array. That works fine when light is perpendicular, but real-world optics — especially wide-angle lenses or fast (low f-number) lenses — send light toward the sensor at varying oblique angles depending on where a pixel sits relative to the optical axis.

Sony's patent claims a design where the geometry and placement of diffusion regions vary across the pixel array based on the expected incident angle at each pixel's location. In practice this means:

- Center pixels, which receive near-perpendicular light, get one diffusion configuration
- Edge and corner pixels, which receive more oblique light, get a shifted or reshaped diffusion layout tuned to that angle
- The two-dimensional pixel arrangement is used as a map to determine which diffusion profile each pixel gets

The goal is to equalize charge collection efficiency across the sensor, reducing pixel-to-pixel sensitivity differences that arise purely from geometry.
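The position-to-profile mapping described above can be sketched in a few lines. This is an illustration under stated assumptions, not Sony's method: the exit-pupil angle model, the 5-degree zone width, the 6 µm pixel pitch, and the `DiffusionProfile` and `profile_for_pixel` names are all hypothetical.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class DiffusionProfile:
    zone: int           # 0 = near-perpendicular light; higher = more oblique
    angle_deg: float    # expected incident angle at this pixel
    azimuth_deg: float  # direction from the array center to the pixel

def profile_for_pixel(row: int, col: int, rows: int, cols: int,
                      pitch_um: float, exit_pupil_mm: float,
                      zone_step_deg: float = 5.0) -> DiffusionProfile:
    """Pick a (hypothetical) diffusion profile from a pixel's array position."""
    # Offset of the pixel center from the array center, in millimetres.
    dy = (row - (rows - 1) / 2) * pitch_um / 1000.0
    dx = (col - (cols - 1) / 2) * pitch_um / 1000.0
    radius = math.hypot(dx, dy)
    # Assumed model: light arrives from the center of the exit pupil.
    angle = math.degrees(math.atan2(radius, exit_pupil_mm))
    azimuth = math.degrees(math.atan2(dy, dx)) if radius else 0.0
    # Bucket the angle into a small number of discrete layout zones.
    return DiffusionProfile(int(angle // zone_step_deg), angle, azimuth)

# Hypothetical 6000 x 4000 array with 6 um pixels (36 x 24 mm).
ROWS, COLS, PITCH_UM, EXIT_PUPIL_MM = 4000, 6000, 6.0, 30.0
center = profile_for_pixel(2000, 3000, ROWS, COLS, PITCH_UM, EXIT_PUPIL_MM)
corner = profile_for_pixel(0, 0, ROWS, COLS, PITCH_UM, EXIT_PUPIL_MM)
print(center.zone, corner.zone)
```

The azimuth is kept alongside the zone because any shift of the diffusion layout would presumably need a direction as well as a magnitude. Whether the patent uses discrete zones or a continuous variation is not something the description above settles.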

What this means for edge-of-frame sharpness in cameras

For consumers, this kind of fix could mean cameras — whether in smartphones, mirrorless bodies, or surveillance systems — produce images with more uniform brightness and sharpness across the whole frame, especially with wide-angle or large-aperture lenses where oblique light angles are most extreme. Right now, a lot of that correction happens in software or firmware, which works but costs processing time and can introduce artifacts.
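A small sketch shows one cost of the software route. It assumes the correction is a simple per-pixel gain equal to the inverse of that pixel's relative sensitivity, and that noise is dominated by photon shot noise (the square root of the collected electron count). The electron counts and the `corrected_pixel` name are hypothetical.

```python
import math

def corrected_pixel(scene_electrons: float, relative_sensitivity: float):
    """Return (signal, noise, snr) after gain-based shading correction."""
    collected = scene_electrons * relative_sensitivity  # what the pixel captures
    gain = 1.0 / relative_sensitivity                   # software correction gain
    signal = collected * gain                           # brightness is restored
    noise = math.sqrt(collected) * gain                 # shot noise is scaled too
    return signal, noise, signal / noise

# Hypothetical: the same scene brightness at a center pixel (100% sensitivity)
# and at a corner pixel that collects only half as much light.
center_signal, center_noise, center_snr = corrected_pixel(1000.0, 1.0)
corner_signal, corner_noise, corner_snr = corrected_pixel(1000.0, 0.5)

print(f"center: signal {center_signal:.0f}, noise {center_noise:.1f}, SNR {center_snr:.1f}")
print(f"corner: signal {corner_signal:.0f}, noise {corner_noise:.1f}, SNR {corner_snr:.1f}")
```

In this model the gain makes the corner as bright as the center, but it cannot recover the light that was never collected, so the corner stays noisier. A pixel that collects more of the light in the first place needs less gain, which is the case for fixing the problem in silicon.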

For Sony specifically, this matters because Sony Semiconductor Solutions supplies image sensors to a huge portion of the industry, including Apple's iPhone lineup. A sensor-level solution to angle-dependent sensitivity is the kind of low-level manufacturing advantage that's hard to replicate and easy to embed into every device a sensor ships in — quietly raising the floor for image quality across millions of cameras.

This is a quiet but genuinely clever piece of sensor engineering. Fixing optical problems in silicon rather than software is usually the cleaner solution, and Sony doing this at the diffusion-region level, below where most competitors even look, is the kind of deep fabrication IP that compounds over time. It's not flashy, but it's exactly the sort of thing that keeps Sony's sensor business ahead.

Editorial commentary on a publicly published patent application. Not legal advice.