Nvidia Seeks to Patent an AI Pipeline for Generating Animatable 3D Characters

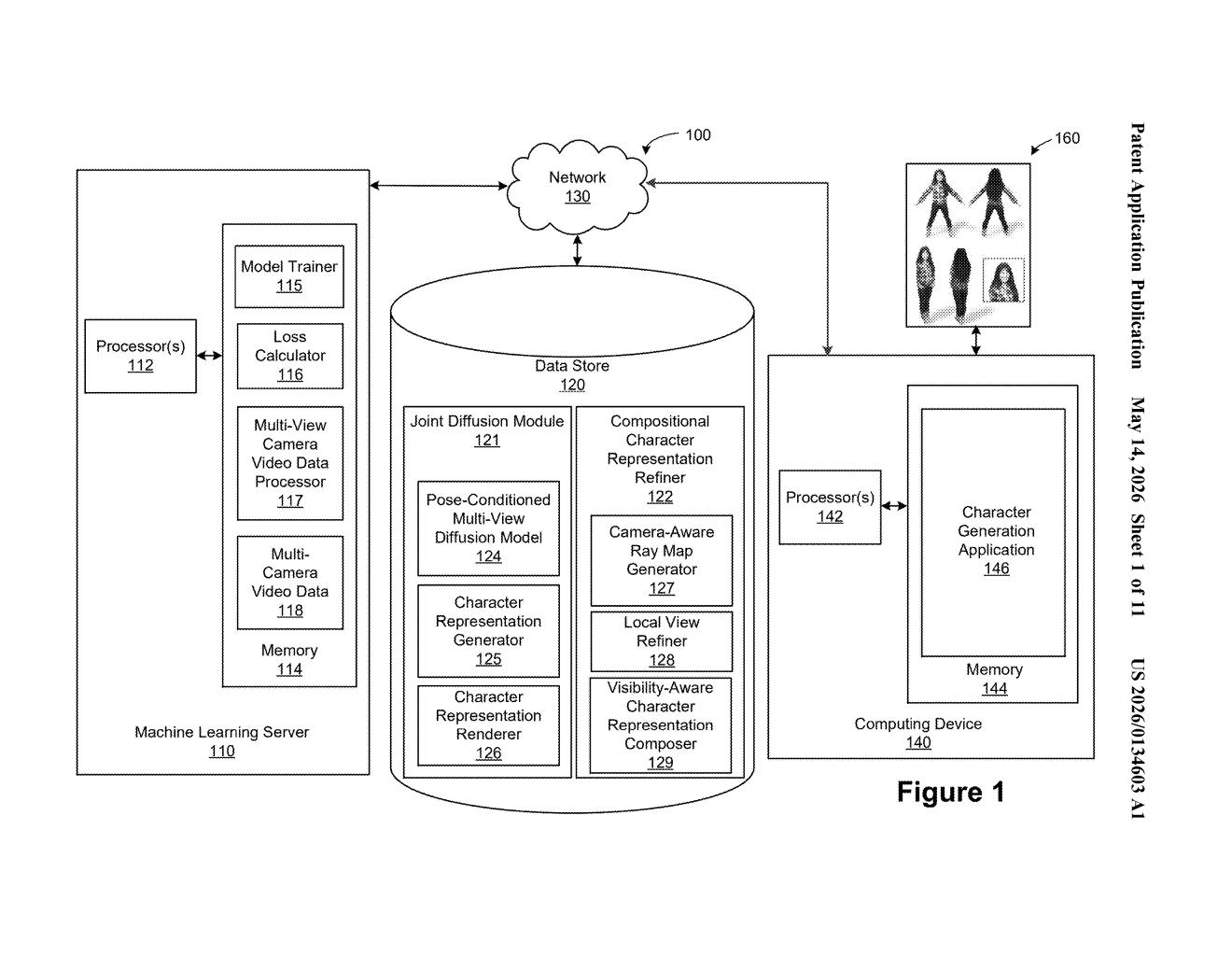

Creating a fully animatable 3D character can take a team of artists weeks. Nvidia's new patent application describes a way to hand most of that work to a diffusion model in a single automated pipeline and, crucially, to produce a result that actually moves.

How Nvidia builds a 3D character you can actually animate

Imagine you want to put a custom character in a video game or a virtual world. Today, making that character look good and move naturally (walking, running, gesturing) is a painstaking, expensive process that requires skilled 3D artists. Nvidia's filing describes a system that automates most of it.

The idea is to start with a rough, coarse 3D version of a character, then zoom into specific body parts from multiple camera angles. A trained AI model fills in the detail for each part (arms, legs, torso), and the system stitches those detailed close-ups back into one coherent, high-quality 3D model. The result isn't just a static mesh; it's a representation built for animation from the start.

Think of it like a portrait artist who first sketches the whole figure, then meticulously renders each hand, then reassembles everything into a finished painting, except that the pipeline does all of it automatically.

How the multi-view diffusion pipeline assembles body parts

The system works in four main stages, each building on the last:

- Global-to-local view generation: A coarse global representation of the character (think of a rough 3D sketch) is used to generate focused "local views": close-up camera frames centered on specific body regions.

- Local ray map generation: For each local view, the system computes a ray map, a per-pixel guide that records the direction (and typically the origin) of the camera ray passing through each pixel of that crop. This keeps the spatial geometry consistent when the view zooms into a body part.

- Multi-part local view synthesis via diffusion: A diffusion model (the same class of generative model behind image generators like Stable Diffusion) takes those local views and ray maps and generates multi-part local views: high-fidelity renderings that respect the underlying skeleton and pose. A secondary ML model conditions the output on body-part visibility, so overlapping regions, such as an arm crossing the torso, don't get confused.

- Representation refinement: Finally, all the enriched local views are composed back onto the global character model to produce a refined animatable representation — a 3D asset that retains full pose-driven deformation capability.
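To make the "local view" idea concrete, here is a minimal sketch of one simple way to build a close-up camera for a body part: keep the global camera's pose and change only its intrinsics, as a digital zoom onto a 2D bounding box around the part. This is an assumption for illustration; the filing, as summarized here, does not say how its local cameras are placed, and `Intrinsics`, `local_view_intrinsics`, and `project` are hypothetical names. The box could, for example, come from projecting the coarse model's joints for that body part into the global view.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole intrinsics in pixels (no skew). Hypothetical helper type."""
    fx: float
    fy: float
    cx: float
    cy: float


def project(k, point):
    """Project a camera-space point (X, Y, Z), Z > 0, to pixel coordinates."""
    x, y, z = point
    return (k.fx * x / z + k.cx, k.fy * y / z + k.cy)


def local_view_intrinsics(global_k, box, out_size):
    """Intrinsics for a square out_size x out_size close-up of a 2D box.

    box = (x0, y0, x1, y1) in global-image pixels. The camera pose is
    unchanged; cropping shifts the principal point and resizing scales
    both the focal lengths and the shifted principal point.
    """
    x0, y0, x1, y1 = box
    side = max(x1 - x0, y1 - y0)         # square crop that covers the box
    left = (x0 + x1) / 2 - side / 2      # crop's top-left corner
    top = (y0 + y1) / 2 - side / 2
    scale = out_size / side              # zoom factor from crop to output
    return Intrinsics(
        fx=global_k.fx * scale,
        fy=global_k.fy * scale,
        cx=(global_k.cx - left) * scale,
        cy=(global_k.cy - top) * scale,
    )


# Usage: zoom a 1024x1024 global view onto a (hypothetical) hand box.
global_k = Intrinsics(fx=1000.0, fy=1000.0, cx=512.0, cy=512.0)
hand_box = (600.0, 300.0, 700.0, 380.0)
local_k = local_view_intrinsics(global_k, hand_box, out_size=512)
# A point that projects to the box centre (650, 340) in the global view
# lands at roughly (256, 256), the centre of the 512x512 close-up.
print(project(local_k, (0.138, -0.172, 1.0)))
```

Because cropping and resizing only rescale the focal lengths and shift the principal point, the close-up stays geometrically tied to the global view, which is the property the ray maps then make explicit. Half-pixel conventions are ignored for brevity.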

A key insight here is the camera-aware ray map approach: by baking camera geometry directly into the conditioning signal, the diffusion model can hallucinate fine detail for each body part without losing track of where that part sits in 3D space.
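The ray map itself is straightforward to compute for a pinhole camera. The sketch below builds one under common assumptions: a world-to-camera rotation `rot` and translation `trans` (x_cam = R·x_world + t), pixel centres at half-integer offsets, and an encoding of one world-space origin plus one unit direction per pixel. That origin-plus-direction layout is one encoding used by camera-conditioned diffusion models (Plücker coordinates are another); the patent's exact format is not described here, and `ray_map` is a hypothetical name.

```python
import math


def ray_map(fx, fy, cx, cy, rot, trans, width, height):
    """Return a height x width grid of (origin, direction) camera rays.

    Hypothetical helper. rot is a 3x3 world-to-camera rotation (nested lists)
    and trans a length-3 translation, so x_cam = rot @ x_world + trans.
    Origins and unit-length directions are expressed in world space.
    """
    # Camera centre in world space: C = -R^T t.
    origin = tuple(-sum(rot[r][c] * trans[r] for r in range(3)) for c in range(3))
    grid = []
    for v in range(height):
        row = []
        for u in range(width):
            # Back-project the pixel centre with K^-1 into camera space (z = 1).
            d_cam = ((u + 0.5 - cx) / fx, (v + 0.5 - cy) / fy, 1.0)
            # Rotate the direction into world space with R^T.
            d_world = [sum(rot[r][c] * d_cam[r] for r in range(3)) for c in range(3)]
            norm = math.sqrt(sum(x * x for x in d_world))
            row.append((origin, tuple(x / norm for x in d_world)))
        grid.append(row)
    return grid


# Usage: a camera at world (0, 0, -2) looking down +z with identity rotation.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
rays = ray_map(500.0, 500.0, 64.0, 64.0, identity, (0.0, 0.0, 2.0), 128, 128)
origin, direction = rays[64][64]  # ray through a pixel next to the image centre
```

Every pixel in a local view gets a ray that says exactly where it looks in 3D, so a close-up of a hand carries its position relative to the whole body. Feeding such a grid to a network usually means resizing it to the model's working resolution and supplying it as extra input channels alongside the image; that step is omitted here.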

What this means for game dev and real-time character creation

The bottleneck in real-time character creation — for games, film, virtual avatars, or robotics simulation — has long been the gap between what AI can generate (impressive-looking 2D images) and what engines actually need (rigged, animatable 3D meshes). This patent directly targets that gap. If the pipeline works at scale, it could let small teams or even individual creators generate production-quality animated characters without a full art department.

For Nvidia specifically, this fits squarely into its Omniverse and simulation strategy. Animatable characters are core to synthetic data generation: training robots and autonomous systems requires vast libraries of human motion, and hand-crafting every character in those libraries is impractical. A diffusion-driven pipeline that spits out animatable humans on demand is exactly the kind of infrastructure that makes that work tractable.

This is a genuinely interesting technical filing, not just an incremental patent. The compositional multi-view approach — zoom into parts, enrich them independently, stitch back — is a smart architectural choice that sidesteps one of the hardest problems in 3D generation: keeping global consistency while generating fine local detail. Whether it performs as well in practice as it does on paper is another question, but the problem being solved is real and the approach is thoughtful.

Editorial commentary on a publicly published patent application. Not legal advice.