Nvidia Patents a System That Amplifies Robot Training Data 100x Automatically

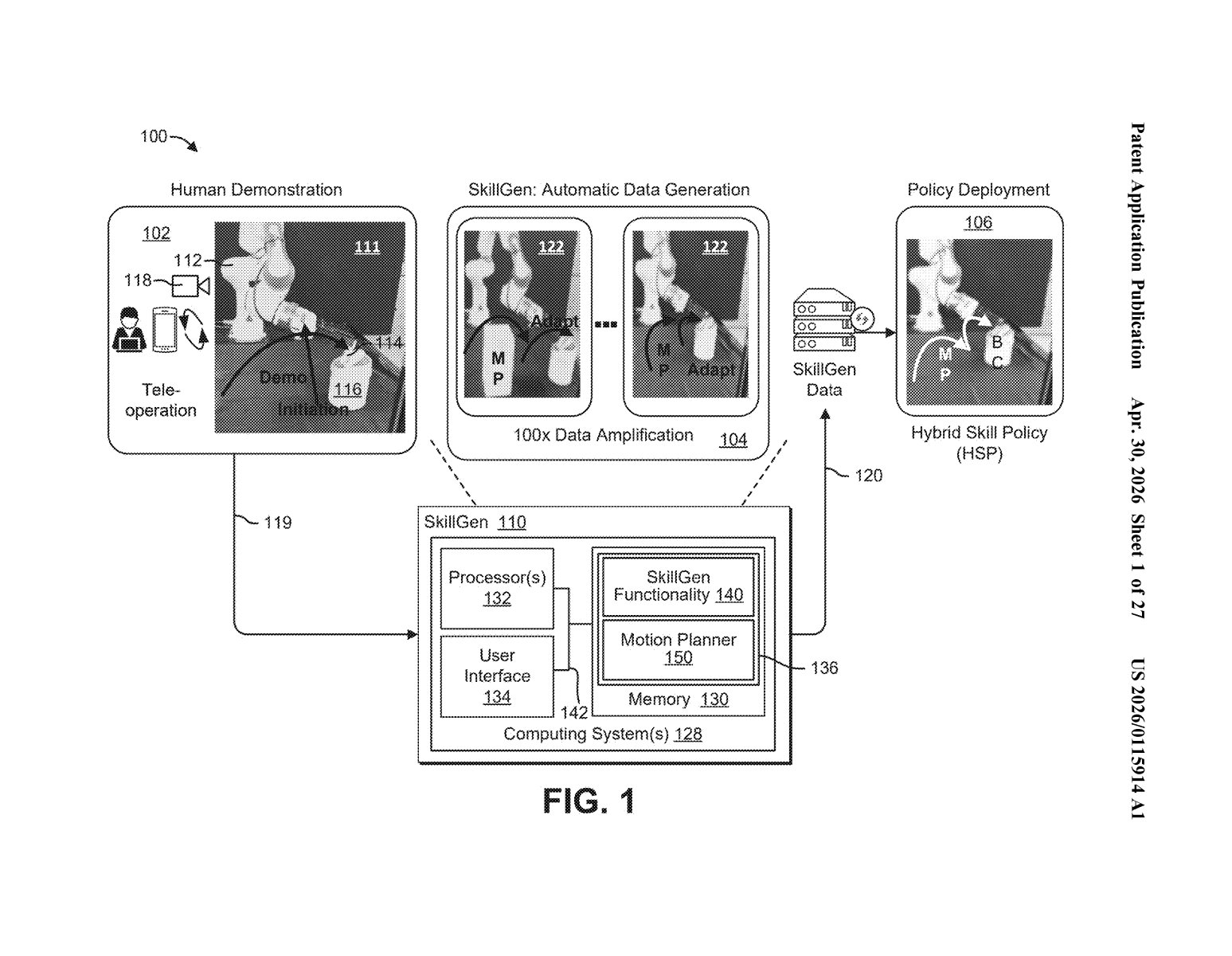

Teaching a robot to do something useful requires enormous amounts of demonstration data — and collecting that data by hand is slow, expensive, and exhausting. Nvidia's new patent describes a system called SkilGen that could turn a single human demonstration into a hundred usable training examples, automatically.

How Nvidia turns one robot demo into a hundred

Imagine you want to teach a robot to pick up a coffee mug and place it on a coaster. Normally, you'd need a human operator to physically guide the robot through that task dozens or hundreds of times, from slightly different starting positions, angles, and speeds — just so the robot gets enough varied examples to learn from. That's a lot of tedious work.

Nvidia's SkilGen system tries to solve that problem by automatically generating new variations of a demonstration you've already recorded. It breaks a single recorded robot movement into segments — the moments where the robot touches or interacts with an object, and the moments where it's just moving through space — and then treats each type differently.

For the "just moving" segments, it uses a classical motion planner (basically a navigation algorithm) to compute new paths. For the tricky "touching the object" segments, it uses a neural network to figure out how the robot's grip and approach should change. The system then stitches those pieces back together into brand-new, realistic demonstrations. The result, according to Nvidia, is a roughly 100x expansion of your training dataset from a single human recording.

How SkilGen splits, mutates, and stitches trajectories

At its core, SkilGen is a hybrid data-generation pipeline that exploits a key insight: not all parts of a robot's movement are equally hard to vary.

A typical robot task has two fundamentally different kinds of motion segments:

- Interaction segments — where the robot is actively grasping, pushing, or manipulating an object. These require contact-aware reasoning and are hard to generalize automatically.

- Transit segments — where the robot is just moving its arm from point A to point B without touching anything. These are much easier to replan with standard algorithms.
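To make that split concrete, here is a minimal sketch of how one recorded demonstration could be cut into the two segment types. It assumes each timestep already carries a contact flag, and it reduces a pose to an x, y, z position. `Segment`, `split_demo`, and `in_contact` are hypothetical names for illustration, not anything the patent specifies.

```python
from dataclasses import dataclass
from itertools import groupby
from typing import List, Tuple

Pose = Tuple[float, float, float]  # assumption: end-effector x, y, z only


@dataclass
class Segment:
    kind: str          # "interaction" or "transit"
    poses: List[Pose]


def split_demo(poses: List[Pose], in_contact: List[bool]) -> List[Segment]:
    """Group consecutive timesteps that share the same contact flag."""
    if len(poses) != len(in_contact):
        raise ValueError("poses and in_contact must have the same length")
    segments = []
    steps = zip(in_contact, poses)
    for flag, group in groupby(steps, key=lambda step: step[0]):
        kind = "interaction" if flag else "transit"
        segments.append(Segment(kind, [pose for _, pose in group]))
    return segments


# One toy demonstration: approach, touch the object for two steps, move away.
demo = [(0.0, 0.0, 0.3), (0.1, 0.0, 0.3), (0.2, 0.0, 0.1), (0.2, 0.0, 0.1), (0.3, 0.1, 0.3)]
contact = [False, False, True, True, False]
segments = split_demo(demo, contact)
print([(s.kind, len(s.poses)) for s in segments])
# [('transit', 2), ('interaction', 2), ('transit', 1)]
```

How a real system decides which timesteps count as interaction is the hard part, and this sketch simply takes that labeling as given.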

SkilGen handles each segment type with the right tool. For interaction segments, it uses one or more neural networks trained to generate new trajectories when the starting or ending conditions are slightly modified (say, the object is moved a few centimeters). For transit segments, it hands off to a classical motion planner: an algorithm that computes a collision-free path given a start and end pose. Think of it like GPS rerouting the drive between two fixed stops.
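The two-tool idea can be sketched with deliberately simple stand-ins. In the code below, a rigid shift of the recorded contact motion takes the place of the neural network, and a straight-line interpolation takes the place of the motion planner (it does no collision checking). Both functions are hypothetical simplifications meant to show the shape of the pipeline, not the patented method.

```python
from typing import List, Tuple

Pose = Tuple[float, float, float]  # assumption: end-effector x, y, z only


def shift_interaction(poses: List[Pose], object_offset: Pose) -> List[Pose]:
    """Stand-in for the learned model: move the contact motion with the object."""
    dx, dy, dz = object_offset
    return [(x + dx, y + dy, z + dz) for x, y, z in poses]


def plan_transit(start: Pose, goal: Pose, steps: int) -> List[Pose]:
    """Stand-in for a motion planner: straight line, no collision checking."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [
        tuple(s + (g - s) * i / (steps - 1) for s, g in zip(start, goal))
        for i in range(steps)
    ]


# The object moved 3 cm in x and -2 cm in y, so the grasp moves with it...
grasp = [(0.2, 0.0, 0.1), (0.2, 0.0, 0.05)]
new_grasp = shift_interaction(grasp, (0.03, -0.02, 0.0))

# ...and the approach is replanned to end where the new grasp begins.
path = plan_transit((0.0, 0.0, 0.3), new_grasp[0], steps=5)
print(len(path), path[0])
```

The point of the split shows up here: the transit path is computed fresh from its endpoints, while the contact motion is adapted from what the human actually demonstrated.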

The system then combines these separately generated pieces into a coherent new demonstration. A policy, the "Hybrid SkilGen Policy" (HSP), is trained on this amplified dataset and deployed to control a second robot agent, which can be real or simulated.
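The stitching step is easy to picture in code. This sketch assumes each generated segment is a list of x, y, z poses and that a valid demonstration needs every segment to start where the previous one ended. The `stitch` function and its `max_gap` tolerance are hypothetical, chosen only to illustrate the idea.

```python
import math
from typing import List, Tuple

Pose = Tuple[float, float, float]  # assumption: end-effector x, y, z only


def stitch(segments: List[List[Pose]], max_gap: float = 1e-6) -> List[Pose]:
    """Concatenate segments, requiring each to start where the last one ended."""
    trajectory: List[Pose] = []
    for segment in segments:
        if not segment:
            continue
        if trajectory:
            gap = math.dist(trajectory[-1], segment[0])
            if gap > max_gap:
                raise ValueError(f"segments do not meet: gap of {gap:.4f}")
            segment = segment[1:]  # drop the duplicated seam pose
        trajectory.extend(segment)
    return trajectory


transit_in = [(0.0, 0.0, 0.3), (0.1, 0.0, 0.2), (0.2, 0.0, 0.1)]
grasp = [(0.2, 0.0, 0.1), (0.2, 0.0, 0.05)]
transit_out = [(0.2, 0.0, 0.05), (0.3, 0.1, 0.3)]

new_demo = stitch([transit_in, grasp, transit_out])
print(len(new_demo))  # 5
```

Rejecting segments that do not line up is one simple way to keep a generated demonstration physically plausible. Repeating the generate-and-stitch cycle with different object placements is what would turn one recording into many.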

What this means for teaching robots new tasks cheaply

The bottleneck in robot learning isn't algorithms — it's data. Getting a robot to generalize to new object positions, lighting conditions, or table heights usually means collecting hundreds of new demonstrations for every small variation. That's expensive at scale and a real barrier to deploying robots in uncontrolled real-world environments like homes or warehouses.

If SkilGen works as described, it could dramatically reduce the human teleoperation time required to train a capable robot policy. That has direct implications for Nvidia's robotics ambitions — including its Isaac robotics platform and its partnerships with manufacturers. It also lines up with a broader industry push (from Figure, 1X, Google DeepMind, and others) to make imitation learning practical without requiring industrial-scale data collection operations.

This is one of the more practically motivated robot-learning patents to come out of a big tech company recently. It doesn't try to solve robot intelligence from first principles — it solves a very specific, unglamorous problem (we don't have enough training data) with a clever hybrid approach. The 100x amplification claim is eye-catching, and if it holds up in real-world benchmarks, SkilGen could become a meaningful part of Nvidia's pitch to robotics customers.

Editorial commentary on a publicly published patent application. Not legal advice.