Nvidia Patents an Intelligent Two-Phase Cooling System for AI Datacenters

As AI chips push heat density to extremes, Nvidia is patenting a smarter cooling system that can bring a second coolant loop online alongside its refrigerant loop on the fly, automatically ramping up when your GPUs start running hot.

How Nvidia's dual-mode refrigerant cooling works

Imagine your datacenter's cooling system is like a car with both a regular engine and a turbocharger. Under normal conditions, you cruise on the base system. But when you floor the accelerator, the turbo kicks in. That's roughly what Nvidia is describing here.

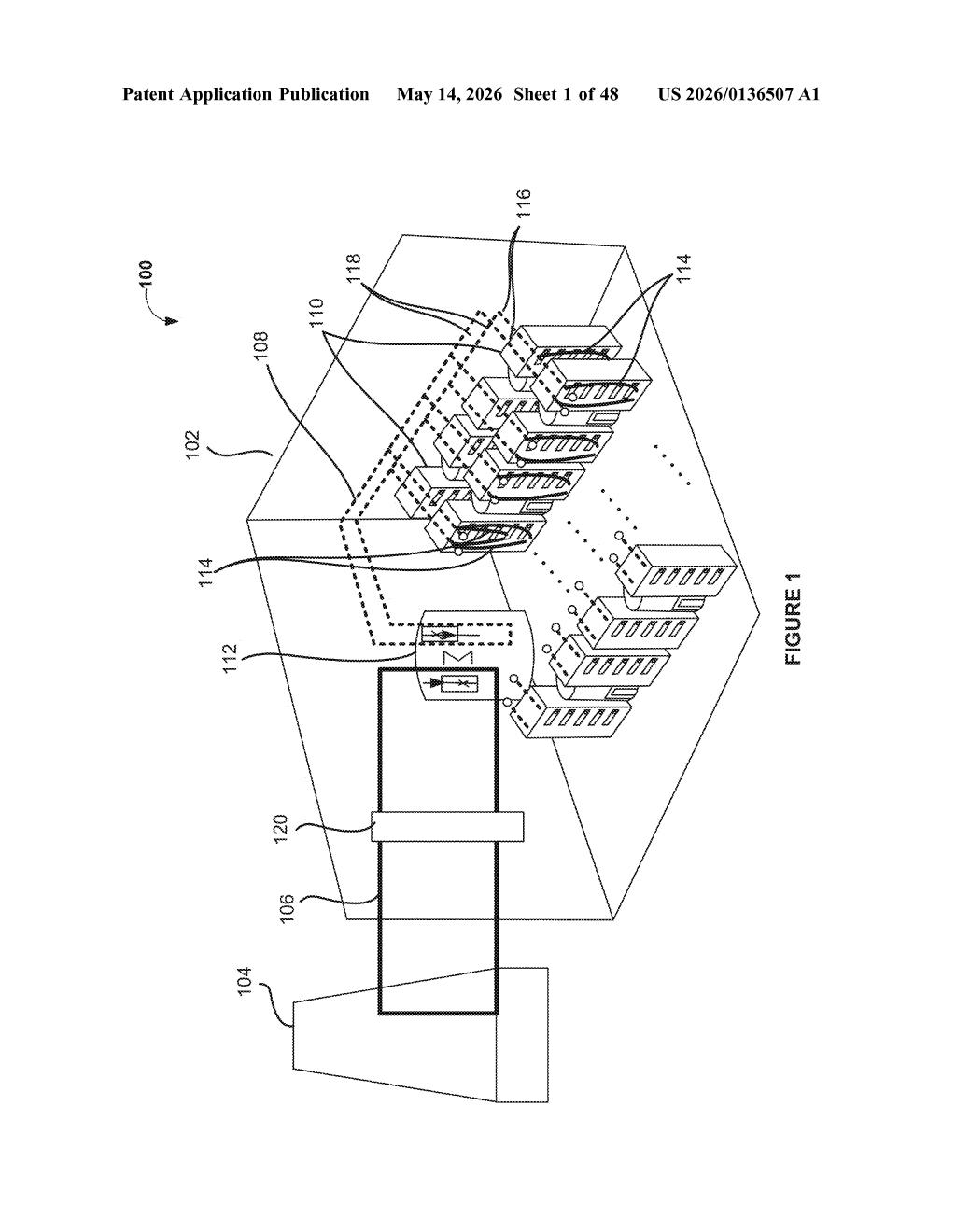

The patent covers a cooling setup with two separate loops: a primary refrigerant-to-air loop (using a fluid that changes between liquid and gas to carry heat away) and a secondary loop with a different coolant. Under typical workloads, the refrigerant loop handles everything on its own. But when a server's heat output spikes above a certain threshold, or the secondary coolant gets too warm, a processor automatically activates both loops together.

The whole thing is designed to keep cold plates (metal blocks bolted directly to chips) at safe temperatures even when AI training jobs or inference workloads push hardware to its limits. It's plumbing intelligence, essentially.

How the R2A loop switches between cooling modes

The system centers on a refrigerant-to-air (R2A) heat exchanger — a device that uses a two-phase fluid (a refrigerant that absorbs heat by evaporating from liquid to gas, then releases it by condensing back) to pull heat from cold plates attached directly to computing hardware.

The patent describes two distinct operating modes:

- Mode 1 (baseline): Only the R2A refrigerant loop runs. The two-phase fluid circulates through cold plates, picks up heat, moves through the heat exchanger, and dissipates that heat into the datacenter air via a compressor or condensing unit.

- Mode 2 (augmented): A second, independent cooling loop — carrying a different fluid — is activated alongside the R2A loop. Both loops run in parallel through the same cold plates.

The switch between modes is controlled by at least one processor and is triggered by either of two conditions: the heat load from the computing device exceeds a preset threshold, or the secondary coolant's inlet temperature rises too high. This is essentially a closed-loop control system (one that monitors its own outputs and adjusts behavior accordingly).
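The switching rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the patent's implementation: the names `Mode`, `Thresholds`, and `select_mode` are hypothetical, and the threshold values are placeholders, since no actual numbers are given here.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    R2A_ONLY = 1   # Mode 1: refrigerant-to-air loop runs alone
    AUGMENTED = 2  # Mode 2: refrigerant loop plus the secondary loop


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical placeholder values, chosen only for illustration.
    heat_load_w: float = 80_000.0
    coolant_inlet_c: float = 40.0


def select_mode(heat_load_w: float, coolant_inlet_c: float,
                limits: Thresholds = Thresholds()) -> Mode:
    """Return AUGMENTED if either trigger condition is met, else R2A_ONLY."""
    over_heat_load = heat_load_w > limits.heat_load_w
    inlet_too_warm = coolant_inlet_c > limits.coolant_inlet_c
    if over_heat_load or inlet_too_warm:
        return Mode.AUGMENTED
    return Mode.R2A_ONLY


print(select_mode(50_000.0, 30.0))  # Mode.R2A_ONLY
print(select_mode(90_000.0, 30.0))  # Mode.AUGMENTED
print(select_mode(50_000.0, 45.0))  # Mode.AUGMENTED
```

Note the `or`: either condition alone is enough to bring the second loop online. A production controller would probably also add hysteresis so it doesn't flip modes on every small fluctuation, though that is an assumption rather than something described here.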

The architecture is notable because it treats the refrigerant loop as the primary workhorse rather than a supplemental system, which inverts the typical datacenter approach where chilled water is primary and refrigerant is reserved for spot cooling.

What this means for high-density GPU datacenter cooling

Modern AI accelerators (think H100- or Blackwell-class GPUs) can push 700 W or more per chip, and racks can exceed 100 kW total. Traditional air cooling and even standard liquid cooling struggle at those densities. A system that intelligently combines two different cooling mechanisms and escalates automatically means you can keep hardware running at peak performance without manual intervention or conservative thermal throttling.
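To put those figures in proportion, here is a quick back-of-the-envelope calculation. It uses only the 700 W and 100 kW numbers quoted above and counts accelerators alone, ignoring CPUs, memory, networking, and power-conversion losses, which is a simplifying assumption.

```python
import math

CHIP_POWER_W = 700       # per-chip figure quoted above
RACK_POWER_W = 100_000   # 100 kW rack figure quoted above

# Number of 700 W chips whose combined heat output reaches 100 kW,
# counting the accelerators only (simplifying assumption).
chips_needed = math.ceil(RACK_POWER_W / CHIP_POWER_W)
print(chips_needed)  # 143
```

Real racks hit that ceiling with far fewer chips once the rest of the hardware is counted, which is why the cooling budget gets tight so quickly.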

For hyperscale datacenter operators, this kind of adaptive two-phase cooling could also improve energy efficiency: run the refrigerant loop alone when loads are modest, and spend the extra energy on the second loop only when the workload actually demands it. That's a meaningful operational cost consideration when you're running thousands of racks.
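The efficiency argument comes down to a duty-cycle average: the extra power is only paid for the fraction of time the system spends in augmented mode. Here is a rough sketch of that arithmetic. The function name and every number in it are hypothetical placeholders, not measurements or figures from the patent.

```python
def average_cooling_power_kw(base_kw: float, extra_kw: float,
                             augmented_fraction: float) -> float:
    """Time-averaged cooling power when the extra loop runs part of the time."""
    if not 0.0 <= augmented_fraction <= 1.0:
        raise ValueError("augmented_fraction must be between 0 and 1")
    return base_kw + extra_kw * augmented_fraction


# Placeholder inputs: 10 kW baseline, 4 kW extra in augmented mode.
adaptive = average_cooling_power_kw(10.0, 4.0, 0.25)   # augmented 25% of the time
always_on = average_cooling_power_kw(10.0, 4.0, 1.0)   # both loops always running
print(adaptive, always_on)  # 11.0 14.0
```

With these made-up inputs, the adaptive setup averages 3 kW less than running both loops continuously. The actual saving depends entirely on how often real workloads cross the thresholds.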

This is genuinely useful infrastructure engineering, not a flashy AI trick. Nvidia building cooling IP makes strategic sense — they design the chips that create the heat problem, and controlling the full thermal stack gives them more credibility (and potentially more margin) when selling turnkey datacenter solutions. The two-mode switching logic is the interesting bit here; the rest is solid mechanical engineering.

Editorial commentary on a publicly published patent application. Not legal advice.