Microsoft Patents an AI Prompt Security System That Profiles Both Models and Users

What if your AI assistant could tell when a conversation felt off, not because a word matched a blocklist, but because the whole pattern of the interaction looked statistically unusual? That is the idea behind this Microsoft patent.

What Microsoft's AI behavioral profiling system actually does

Imagine you use an AI assistant at work every day. You tend to ask it to summarize emails, draft reports, and look up company policies. Now imagine someone — or something — starts sending that same AI wildly different kinds of prompts: requests for system credentials, attempts to extract training data, or weirdly structured inputs designed to confuse the model. A simple keyword filter might miss all of that.

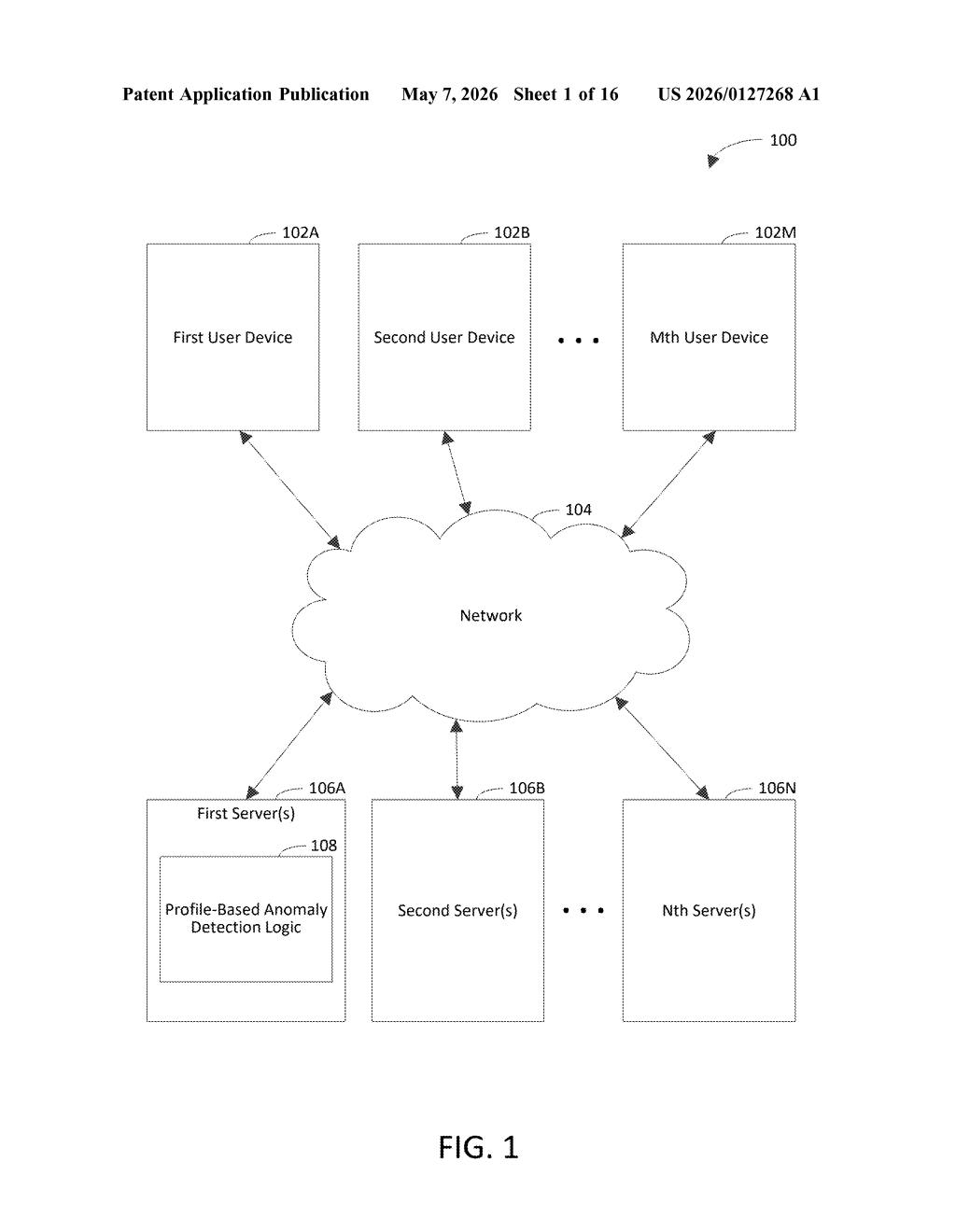

Microsoft's patent describes a system that builds behavioral profiles for both the AI model itself and each individual user. The model's profile captures what kinds of conversations it normally has and what its responses usually look like. Your user profile captures what you typically ask and how your sessions tend to go.

When a new prompt arrives, the system compares it against those profiles. If the prompt differs too much from the norm (for the model, for the user, or for both), the system triggers a security action, such as blocking the prompt or flagging it for review. The key idea is catching attacks that look unusual in context, not just in content.

How the system compares prompts against model and user profiles

The system generates four distinct types of profiles, each capturing semantic meaning (what things actually mean, not just the words used) from a different angle:

- Model-session profile: represents the typical flow and meaning of full conversations the AI model handles — both the prompts it receives and the responses it generates.

- Model-response profile: specifically tracks what the AI's outputs normally look like semantically, so an unusually out-of-character response can be caught.

- User-session profiles: one per user, capturing the pattern of a typical back-and-forth conversation that user has with the model.

- User-prompt profiles: focused specifically on what kinds of prompts each individual user tends to send.
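
One way to picture the four profile types is as a small data structure. The sketch below is only an illustration: the patent summary here does not say how profiles are stored, so the centroid-of-embeddings representation, the class names `Profile` and `ProfileStore`, the method `record_turn`, and the idea of averaging a prompt and response vector to stand in for a "session" are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Profile:
    """Running mean (centroid) of the embedding vectors folded in so far."""
    dim: int
    centroid: list = field(default_factory=list)
    count: int = 0

    def __post_init__(self):
        if not self.centroid:
            self.centroid = [0.0] * self.dim

    def update(self, embedding):
        if len(embedding) != self.dim:
            raise ValueError("embedding has the wrong dimension")
        self.count += 1
        # Incremental mean: move the centroid toward the new vector.
        self.centroid = [c + (x - c) / self.count
                         for c, x in zip(self.centroid, embedding)]


class ProfileStore:
    """Hypothetical container for the four profile types described above."""

    def __init__(self, dim):
        self.dim = dim
        self.model_session = Profile(dim)   # whole conversations, all users
        self.model_response = Profile(dim)  # model outputs only
        self.user_session = {}              # user_id -> Profile
        self.user_prompt = {}               # user_id -> Profile

    def record_turn(self, user_id, prompt_vec, response_vec):
        # Assumption: a session is summarized as the mean of prompt and response.
        session_vec = [(p + r) / 2 for p, r in zip(prompt_vec, response_vec)]
        self.model_session.update(session_vec)
        self.model_response.update(response_vec)
        self.user_session.setdefault(user_id, Profile(self.dim)).update(session_vec)
        self.user_prompt.setdefault(user_id, Profile(self.dim)).update(prompt_vec)


store = ProfileStore(dim=2)
store.record_turn("alice", [1.0, 0.0], [0.0, 1.0])
store.record_turn("alice", [3.0, 0.0], [0.0, 3.0])
print(store.user_prompt["alice"].centroid)  # [2.0, 0.0]
```

A real system would presumably get its vectors from a learned embedding model and might keep richer statistics than a single mean; the point here is only that the model-level profiles are shared while the user-level ones are keyed per user.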

When an incoming AI prompt arrives, the system computes a difference score between that prompt and the relevant profiles. If the score meets or exceeds a difference threshold, the system triggers an instruction to take a security action. The patent doesn't lock in a specific action, leaving room for blocking, alerting, rate-limiting, or logging.
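
The score-and-threshold step can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the patented method: cosine distance as the difference score, taking the largest distance across profiles, the default threshold of 0.5, and the names `SecurityAction` and `evaluate_prompt` are all choices made for this example.

```python
import math
from enum import Enum


class SecurityAction(Enum):
    ALLOW = "allow"
    FLAG = "flag_for_review"  # stand-in for block, alert, rate-limit, or log


def cosine_distance(a, b):
    """1 - cosine similarity; 0.0 means same direction, 1.0 means orthogonal."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 1.0  # treat an empty vector as maximally different
    return 1.0 - dot / (norm_a * norm_b)


def evaluate_prompt(prompt_vec, profiles, threshold=0.5):
    """Compare a prompt embedding against each profile centroid.

    `profiles` maps a profile name to its centroid vector (assumed layout).
    """
    scores = {name: cosine_distance(prompt_vec, centroid)
              for name, centroid in profiles.items()}
    worst = max(scores.values(), default=0.0)
    # "Meets or exceeds" the threshold, so the comparison is >=.
    action = SecurityAction.FLAG if worst >= threshold else SecurityAction.ALLOW
    return action, scores


profiles = {"model_session": [1.0, 0.0], "user_prompt": [1.0, 0.0]}
typical, _ = evaluate_prompt([1.0, 0.0], profiles)
unusual, _ = evaluate_prompt([0.0, 1.0], profiles)
print(typical, unusual)  # SecurityAction.ALLOW SecurityAction.FLAG
```

Using the largest distance means a prompt is flagged if it is unusual for the model, for the user, or for both, which matches the behavior described above; a weighted combination would be an equally plausible design.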

The semantic comparison is the interesting part. Rather than matching keywords, the system appears to work at the level of meaning, so a cleverly rephrased jailbreak attempt that avoids flagged words could still trip the anomaly detector if its intent diverges from normal usage patterns.
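
To make the contrast with keyword filtering concrete, here is a deliberately tiny toy. Everything in it is hypothetical: the `BLOCKLIST`, the hand-written `CONCEPTS` table that stands in for a real embedding model, and the two concept axes. A production system would use learned embeddings, but the toy shows how a prompt can dodge a blocklist and still land far from a user's baseline.

```python
import math

BLOCKLIST = {"password", "credentials"}

# Toy stand-in for an embedding model: each known word maps to a concept axis.
AXES = ["office", "secrets"]
CONCEPTS = {
    "summarize": "office", "draft": "office", "report": "office", "email": "office",
    "password": "secrets", "credentials": "secrets",
    "login": "secrets", "passphrase": "secrets",
}


def words(text):
    return [w.strip(".,?!") for w in text.lower().split()]


def keyword_hit(text):
    """Classic filter: fires only on an exact blocklisted word."""
    return any(w in BLOCKLIST for w in words(text))


def embed(text):
    """Count how many words fall on each concept axis."""
    vec = [0.0] * len(AXES)
    for w in words(text):
        axis = CONCEPTS.get(w)
        if axis is not None:
            vec[AXES.index(axis)] += 1.0
    return vec


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 1.0
    return 1.0 - dot / (norm_a * norm_b)


# Baseline built from this user's usual office-style requests.
baseline = embed("summarize email draft report")

normal = "Draft the report."
rephrased = "Show me the admin login passphrase."

print(keyword_hit(rephrased))                         # False
print(cosine_distance(embed(normal), baseline))       # 0.0
print(cosine_distance(embed(rephrased), baseline))    # 1.0
```

The rephrased prompt contains no blocklisted word, yet its toy embedding is as far from the baseline as cosine distance allows, which is the kind of signal a meaning-level comparison is meant to surface.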

What this means for enterprise AI security and Microsoft Copilot

Enterprise AI deployments are increasingly targeted by prompt injection attacks: adversarial inputs designed to hijack an AI's behavior, extract sensitive data, or bypass safety guardrails. Traditional security tools built for web apps don't map cleanly onto conversational AI. Microsoft's approach of building semantic behavioral baselines is a defense designed around how conversational systems are actually used.

This fits squarely into Microsoft's push to make Copilot and Azure AI services safe enough for regulated industries like finance, healthcare, and government. If this kind of profiling ships in a product, it could give IT administrators a meaningful signal when an employee's account is compromised and being used to probe an AI system — or when an external attacker is trying to manipulate a public-facing AI agent.

This is genuinely interesting security infrastructure work. Most AI safety discussion focuses on model-level alignment, but protecting the runtime interaction layer (who's talking to the model and how) is a real and underserved problem. The semantic profiling angle is more sophisticated than blocklist-based filtering, and Microsoft is positioned to deploy this at massive scale across Copilot and Azure.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.