Nvidia Patents a Lightweight System for Converting Stereo Camera Data into Bird's Eye Views

Seeing the world from above is incredibly useful for self-driving cars, but doing it cheaply and efficiently on low-power hardware is the hard part. A new Nvidia patent application describes a clever shortcut that gets you to a bird's eye view without a beefy GPU.

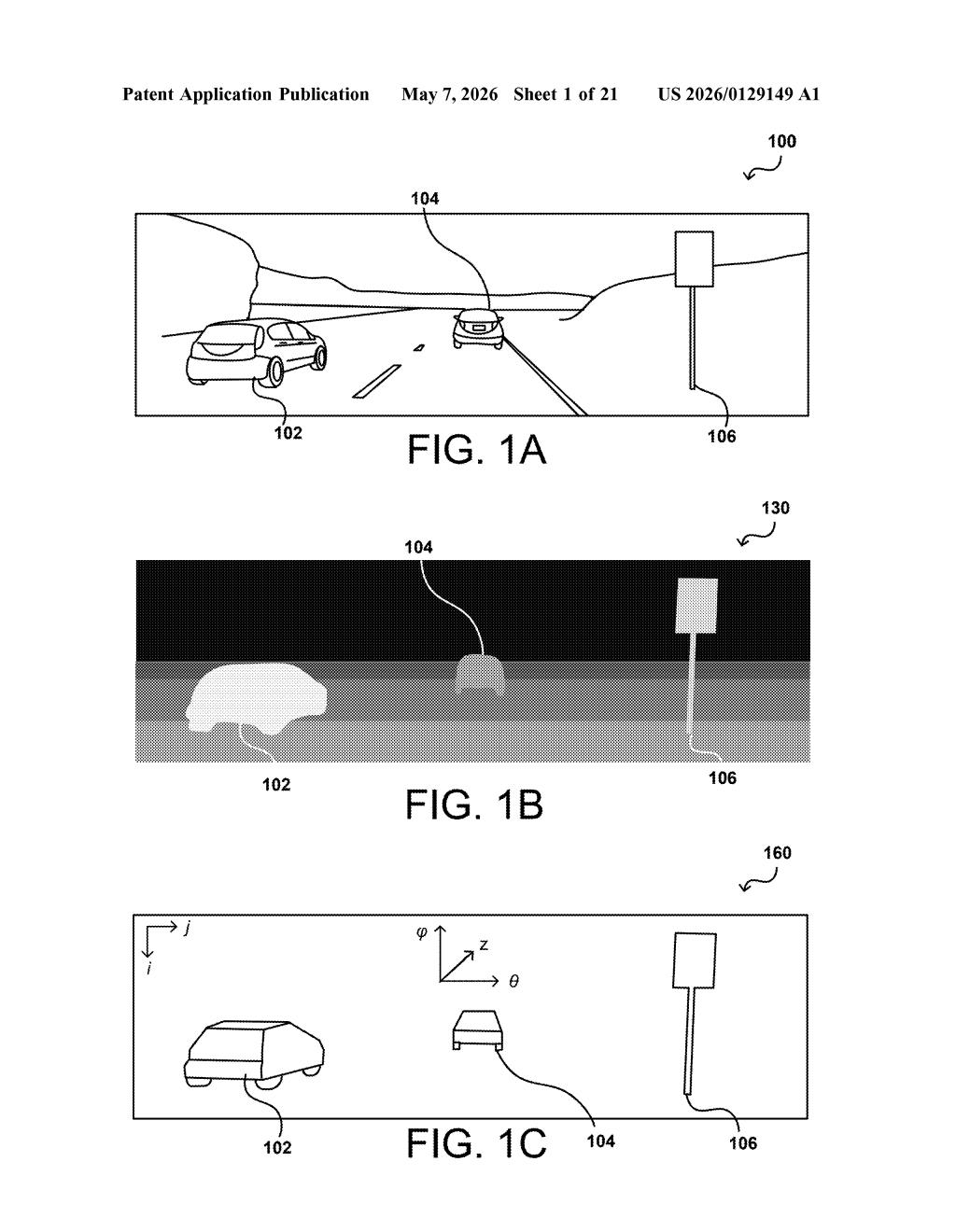

What Nvidia's stereo-to-overhead-view system actually does

Imagine you're driving and your car's cameras are trying to figure out where every pedestrian, cyclist, and parked car is sitting in 3D space around you. Stereo cameras — two lenses spaced apart, like your eyes — can estimate depth by comparing what each lens sees. But turning that depth map into a clean overhead picture that a self-driving system can reason about takes real computing power.
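To make "comparing what each lens sees" concrete, here is the textbook relation for a rectified stereo pair: depth Z = f·B/d. In it, f is the focal length in pixels, B is the distance between the two lenses (the baseline) in meters, and d is the disparity in pixels. This is the standard pinhole-camera formula, not anything specific to Nvidia's patent. The 1000 px focal length and 12 cm baseline below are made-up example values.

```python
def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth in meters for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        # Zero disparity means a point at infinity (or no valid match).
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical camera: 1000 px focal length, 12 cm baseline.
FOCAL_PX = 1000.0
BASELINE_M = 0.12

for d in (60.0, 12.0, 4.0):
    depth = disparity_to_depth(d, FOCAL_PX, BASELINE_M)
    print(f"disparity {d:>4.0f} px -> depth {depth:.1f} m")
```

With these numbers, 60 px of disparity works out to 2 m and 4 px to 30 m. Because depth is inversely proportional to disparity, each pixel of disparity covers far more distance for faraway objects than for nearby ones.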

Nvidia's patent describes a way to do that conversion on a small, low-power embedded processor, the kind of chip you'd find in a car's dashboard computer rather than a data center. The key trick is an intermediate step. Instead of going straight from raw depth data to a final image, the system first builds a compact 2D histogram that counts how many depth points fall into each cell of a grid laid out by angle and distance from the camera.
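Here's a minimal sketch of what an angle-and-distance histogram could look like. It assumes each depth point has already been converted to ground-plane coordinates, with x to the right and z straight ahead. The function name, the 90-degree field of view, the 20 m range, and the 8 x 10 bin layout are all hypothetical choices for illustration. The patent's actual bin sizes aren't described here.

```python
import math


def polar_histogram(points, num_angle_bins=8, num_range_bins=10,
                    fov_rad=math.radians(90), max_range_m=20.0):
    """Count depth points per (angle, distance) cell.

    points: iterable of (x, z) ground-plane coordinates in meters, with x to the
    right of the camera and z straight ahead (height is ignored in this sketch).
    Returns hist[angle_bin][range_bin] as a list of lists of ints.
    All default bin counts and limits are hypothetical example values.
    """
    hist = [[0] * num_range_bins for _ in range(num_angle_bins)]
    half_fov = fov_rad / 2
    for x, z in points:
        angle = math.atan2(x, z)   # 0 rad = straight ahead, positive = right
        dist = math.hypot(x, z)    # straight-line distance on the ground plane
        if not (-half_fov <= angle < half_fov and dist < max_range_m):
            continue               # outside the grid: drop the point
        # min() guards against float rounding pushing an index past the last bin.
        a = min(int((angle + half_fov) / fov_rad * num_angle_bins), num_angle_bins - 1)
        r = min(int(dist / max_range_m * num_range_bins), num_range_bins - 1)
        hist[a][r] += 1
    return hist


# Two points on one object ~5 m ahead, one point ~15 m ahead, one far off to the side.
points = [(0.0, 5.0), (0.1, 5.2), (0.0, 15.0), (30.0, 1.0)]
hist = polar_histogram(points)
for a, row in enumerate(hist):
    print(a, row)
```

However many raw depth points come in, the output is always a fixed 8 x 10 grid of counts. That fixed size is what makes this kind of representation friendly to memory-limited chips.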

From that compact summary, the system applies the same-sized filter to every object regardless of how far away it is — which is normally tricky because distant objects appear smaller in camera images. Then it converts the whole thing into a bird's eye view. The result is a computationally lean pipeline that can run on embedded hardware with limited memory, making it practical for real vehicles.
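The fixed-size filter idea can be sketched as a binary morphological closing on a thresholded histogram grid. Closing grows blobs and then shrinks them back, and this version uses the same 3x3 window for every cell, no matter which distance row the cell sits in. The patent summary doesn't say which morphological operations, window size, or occupancy threshold it uses, so `threshold`, `close`, and `radius=1` are assumptions.

```python
def threshold(hist, min_count):
    """Mark a cell occupied (1) if at least min_count points landed in it."""
    return [[1 if count >= min_count else 0 for count in row] for row in hist]


def _morph(grid, radius, combine):
    """Apply combine (max or min) over a fixed (2*radius+1)^2 window per cell.

    Windows are clipped at the grid border, so edge cells only look at
    neighbors that actually exist.
    """
    rows, cols = len(grid), len(grid[0])
    out = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            window = [
                grid[y][x]
                for y in range(max(0, i - radius), min(rows, i + radius + 1))
                for x in range(max(0, j - radius), min(cols, j + radius + 1))
            ]
            out[i][j] = combine(window)
    return out


def dilate(grid, radius=1):
    """Grow blobs: a cell becomes occupied if any neighbor is occupied."""
    return _morph(grid, radius, max)


def erode(grid, radius=1):
    """Shrink blobs: a cell stays occupied only if every neighbor is occupied."""
    return _morph(grid, radius, min)


def close(grid, radius=1):
    """Morphological closing: dilate, then erode with the same window size."""
    return erode(dilate(grid, radius), radius)


# Two detections in the same row with a one-cell gap between them.
grid = [[0] * 7 for _ in range(5)]
grid[2][2] = grid[2][4] = 1
for row in close(grid):
    print(row)
```

In this example grid, the two occupied cells and the empty cell between them merge into a single three-cell blob. That's the kind of cleanup that joins a fragmented detection into one object.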

How Nvidia's 2D histogram pipeline transforms depth into top-down images

The patent describes a processing pipeline running on an embedded processor with DMA (Direct Memory Access). DMA is a hardware feature that moves data between memory regions without the processor's compute cores having to copy it themselves. That keeps the cores free for the actual math, which matters a lot on constrained hardware.

Here's the sequence the system follows:

- Step 1 — Generate disparity data: A stereo camera pair captures a scene, and depth is inferred from the slight difference between what each camera sees (called disparity). Greater disparity = closer object.

- Step 2 — Build a 2D histogram view: Instead of keeping the full depth map, the system compresses it into a 2D histogram organized by angle and distance from the camera. Think of it like a radar sweep summarized in a grid — compact and structured.

- Step 3 — Apply uniform morphological filtering: Morphological filtering (a technique that cleans up shapes by expanding or shrinking blobs of pixels) is applied using a single fixed-size filter for all objects, regardless of how far away they are. Normally, distant objects need different-sized filters because they appear smaller in the image — but because the histogram is organized by real-world angle and distance, one filter size works everywhere.

- Step 4 — Transform to bird's eye view: The cleaned-up histogram is projected into a top-down overhead image, giving the system a flat map of the surroundings.
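Step 4 can be sketched as a resampling from the (angle, distance) grid into a Cartesian top-down grid. This version uses inverse mapping. It walks over every bird's eye view cell, works out which histogram cell sits underneath it, and copies that value. Working in that direction avoids leaving holes where the far-away histogram cells are wide. The grid size, the 0.5 m cell size, and the camera-at-bottom-center layout are assumptions, not details from the patent.

```python
import math


def polar_to_bev(polar, fov_rad, max_range_m, bev_size=40, cell_m=0.5):
    """Resample an (angle, distance) grid into a top-down Cartesian grid.

    Inverse mapping: for every BEV cell, compute its center (x, z), find the
    polar cell it falls in, and copy that value, so the output has no holes.
    Assumed layout: row 0 is farthest ahead, the camera sits at the bottom
    center, and columns run from left to right.
    """
    n_angle, n_range = len(polar), len(polar[0])
    half_fov = fov_rad / 2
    bev = [[0] * bev_size for _ in range(bev_size)]
    for row in range(bev_size):
        z = (bev_size - row - 0.5) * cell_m            # forward distance of cell center
        for col in range(bev_size):
            x = (col + 0.5 - bev_size / 2) * cell_m    # lateral offset of cell center
            angle, dist = math.atan2(x, z), math.hypot(x, z)
            if -half_fov <= angle < half_fov and dist < max_range_m:
                a = min(int((angle + half_fov) / fov_rad * n_angle), n_angle - 1)
                r = min(int(dist / max_range_m * n_range), n_range - 1)
                bev[row][col] = polar[a][r]
    return bev


# A single occupied histogram cell: just right of center, 4-6 m out.
polar = [[0] * 10 for _ in range(8)]
polar[4][2] = 1
bev = polar_to_bev(polar, math.radians(90), 20.0)
print(sum(map(sum, bev)), "BEV cells lit")
```

A single histogram cell lights up a wedge-shaped patch of BEV cells, because one angle-and-distance bin covers a small sector of the ground rather than a square.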

The whole pipeline is designed to run on embedded chips with limited compute and memory, which is where the DMA access and intermediate representation become critical — they avoid expensive data shuffling and repeated scaling operations.

What this means for autonomous driving on embedded hardware

Self-driving and advanced driver assistance systems (ADAS) need to process depth data in real time, but the most powerful compute is expensive, power-hungry, and physically large. Nvidia's approach targets the embedded processors that actually live inside production vehicles — not the cloud servers behind the scenes. A bird's eye view is extremely useful for path planning and obstacle detection, and being able to generate one efficiently on-device is a meaningful engineering win.

The uniform filter trick is the quietly clever part here. In standard camera-space processing, you'd need different filter sizes for near versus far objects, which adds complexity and compute. By working in a histogram space defined by real-world angle and distance, Nvidia sidesteps that problem entirely. That kind of simplification is exactly what makes the difference between a feature that runs at 30fps on embedded hardware and one that only works in simulation.
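Here's one way to see the scale problem, looking only at the distance axis. An object 1 m deep covers a lot of disparity when it's close and almost none when it's far, but it covers the same number of fixed-width distance bins either way. The camera numbers (1000 px focal length, 12 cm baseline) and the 0.5 m bin width are hypothetical. This sketch also says nothing about how the patent actually sizes its bins.

```python
def disparity_px(depth_m, focal_px=1000.0, baseline_m=0.12):
    """Disparity in pixels for a point at depth_m (rectified stereo: d = f*B/Z)."""
    return focal_px * baseline_m / depth_m


def depth_extent(near_m, far_m, range_bin_m=0.5):
    """Size of a depth interval in disparity pixels vs. fixed-width distance bins."""
    disparity_span = disparity_px(near_m) - disparity_px(far_m)
    range_bins = (far_m - near_m) / range_bin_m
    return disparity_span, range_bins


for near in (5.0, 30.0):
    span, bins = depth_extent(near, near + 1.0)
    print(f"object {near:.0f}-{near + 1:.0f} m: {span:.2f} px of disparity, {bins:.0f} range bins")
```

With these numbers, the 5-6 m object spans 4 px of disparity and the 30-31 m object spans about 0.13 px, yet each one occupies 2 distance bins. That's why a single filter size is reasonable along the distance axis.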

This is a solid, practical engineering patent — not flashy, but the kind of work that actually ships in production ADAS systems. The insight of using an intermediate histogram representation to normalize object scale is genuinely elegant, and it's clearly aimed at Nvidia's automotive ambitions with the Drive platform. Worth a look if you follow embedded vision or autonomous systems.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.