Adobe Patents a Neural Network That Trains Itself to Fix Messy Image Masks

Cutting out a subject from a photo is one of those tasks that looks easy until you zoom in on the hair or fur. Adobe's latest patent describes a neural network trained specifically to clean up imprecise selection masks — and the clever bit is how it learns.

What Adobe's mask refinement AI actually learns to do

Imagine you're trying to cut a curly-haired person out of a photo. Your first selection is close, but the edges are ragged — some hair is missing, some background is included. That initial rough outline is what's called an image mask, and polishing it has traditionally been painstaking manual work.

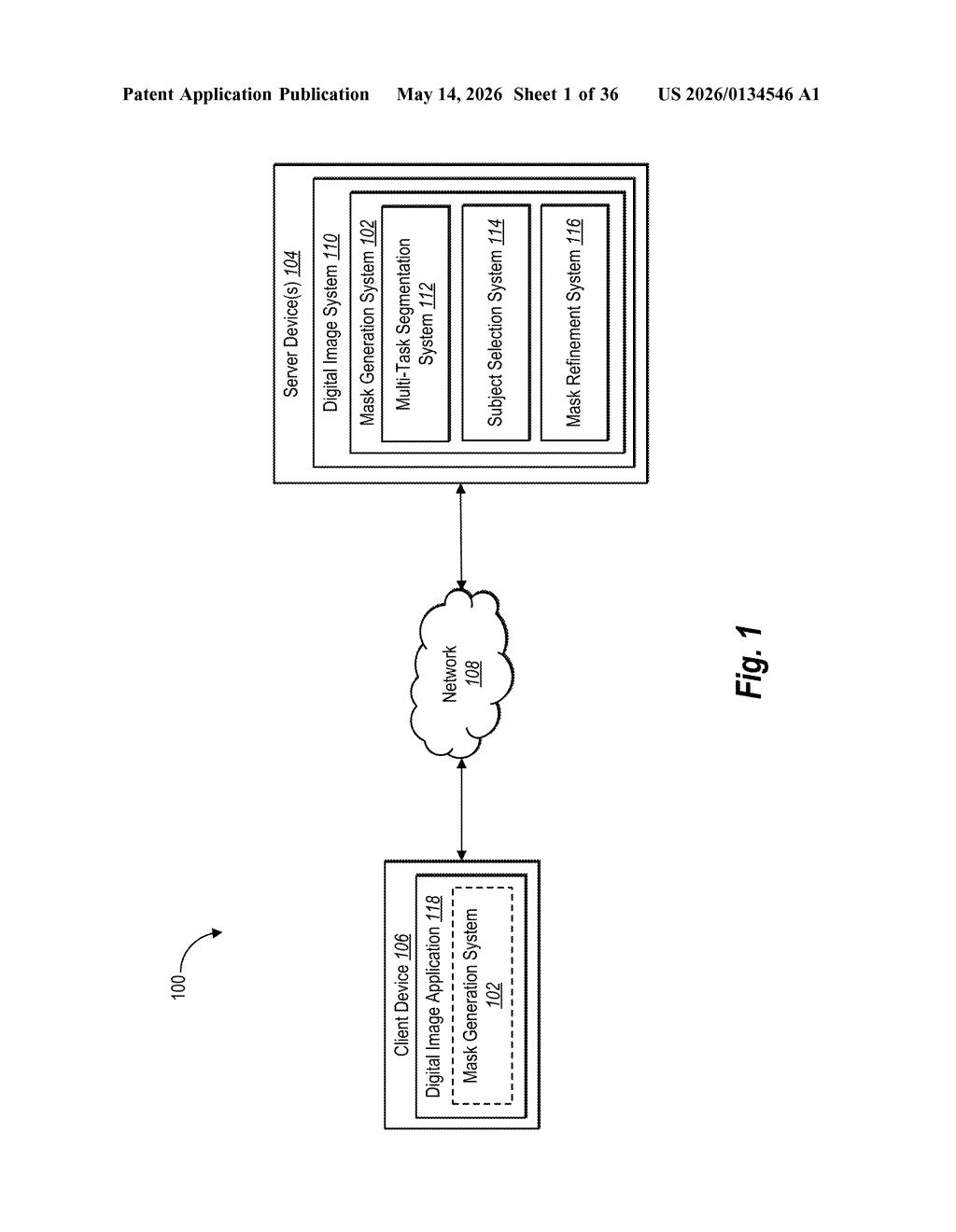

Adobe's patent describes an AI that's specifically trained to take those rough masks and refine them into clean, precise outlines. The interesting part isn't the refinement itself — it's how the AI learns to do it. Instead of needing thousands of perfectly labeled training examples, the system creates its own deliberately imperfect masks by messing up known-good ones, then teaches the AI to fix them.

This self-generated training data approach means Adobe can train a better mask-cleaner without an enormous hand-labeled dataset. The result, at least in theory, is a smarter tool that handles tricky edges — think flyaway hairs, transparent fabrics, or furry animals — more reliably than current selection methods.

How simulated masks and point-sampling train the network

The system has three main stages working together:

- Simulated mask generation: The system takes high-quality, hand-labeled ground-truth masks (the ideal outlines of objects) and intentionally degrades them using various "mask modification operations" — think eroding edges, adding blobs, or misaligning boundaries. These deliberately broken masks simulate the kind of rough output a real selection tool might produce.

- Mask refinement network: A neural network takes both the original image and one of these simulated rough masks as input, then tries to predict what the correct refined mask should look like. It learns to use image details — color gradients, textures, edge information — to decide where the true object boundary actually is.

- Point-sampling loss training: Rather than comparing the entire predicted mask against the ground truth all at once, the system evaluates accuracy by randomly sampling individual points across the mask and computing a matting loss (a measurement of how wrong the pixel's opacity assignment is) at each sample. Using multiple separate sampling operations gives the optimizer a richer signal about where the mask is still wrong.

The matting loss is key here — unlike a simple binary "inside or outside" comparison, matting loss treats edges as having fractional transparency values, which captures the subtlety of semi-transparent or soft-edged objects like hair.

What this means for Photoshop-style selection tools

For anyone who uses Photoshop, Firefly, or Adobe Express, this kind of background technology is what makes the difference between a "Select Subject" result you can actually use and one that still needs 20 minutes of manual cleanup. If Adobe ships this into its tools, you'd expect noticeably cleaner automatic selections on difficult subjects — the hairy, furry, or fine-detailed objects that have always caused problems.

More broadly, this is part of a longer trend of AI teams building self-supervised training pipelines — systems that generate their own training data rather than depending on expensive human annotation. Adobe's approach of deliberately corrupting good masks to train a fixer is a practical, scalable way to keep improving selection quality without an ever-growing labeling budget.

This is solid, unglamorous infrastructure work — exactly the kind of patent Adobe files regularly to protect improvements to its core editing pipeline. The simulated-mask training trick is genuinely clever and reflects real ML engineering sophistication. It probably won't make headlines, but it's the kind of thing that quietly makes 'Select Subject' go from decent to great over a few product cycles.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.