Intel Patents a GPU That Converts Textures Once and Remembers Them

Intel has filed a patent application for a GPU-side system that converts textures into the right format on the fly, then holds onto the results so it doesn't have to do the same work twice. It's a caching play buried in graphics pipeline infrastructure.

What Intel's texture transcoding cache actually does

Imagine your GPU is a chef who has to prep the same ingredient every time a dish is ordered, even if it just made that exact thing five minutes ago. That's roughly what happens when a GPU repeatedly converts texture data into a usable format during rendering. It's wasteful, and it adds up.

Intel's patent describes a system where the GPU transcodes textures — converting them from one compressed or encoded format into whatever the current execution environment needs — and then caches those converted textures so they can be reused on the next request without repeating the conversion.

This is the kind of quiet plumbing work that rarely makes headlines, but it can meaningfully reduce redundant computation during graphics-heavy workloads like gaming, video playback, or real-time rendering pipelines.

How Intel's GPU converts and stores texture formats

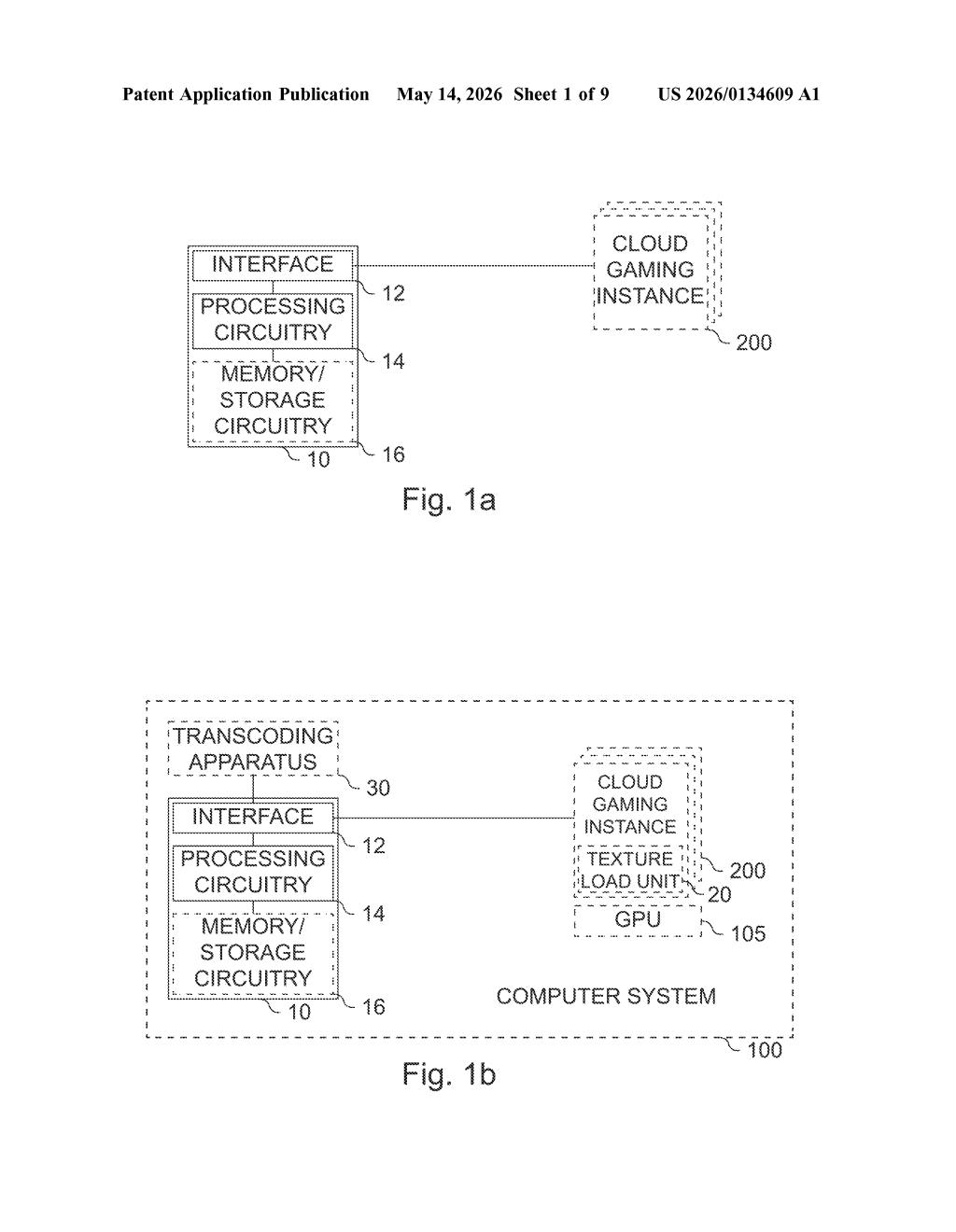

The patent describes a transcoding apparatus built around a graphics processing unit (GPU) paired with dedicated processing circuitry. The core job is two-step: transcode incoming textures into a format suited for execution, then cache those results for later reuse.

Texture transcoding is the process of converting compressed texture data from one encoding (such as BC7, ASTC, or ETC) into another that a particular GPU or execution context can consume efficiently. Different hardware and APIs often require different formats, so this conversion step is a common, and sometimes repeated, cost in graphics pipelines.
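To make the format-mismatch problem concrete, here is a minimal Python sketch of the decision that comes before any transcoding: given the format an asset ships in and the formats a device accepts, which target should it be converted to? Everything here is an illustrative assumption rather than anything taken from the filing: the `TextureFormat` enum, the `RGBA8` uncompressed fallback, and the `pick_target_format` helper are all hypothetical names.

```python
from enum import Enum


class TextureFormat(Enum):
    # Formats named in the article, plus a hypothetical uncompressed fallback.
    BC7 = "bc7"
    ASTC = "astc"
    ETC = "etc"
    RGBA8 = "rgba8"  # assumption: not mentioned in the patent coverage


def pick_target_format(source, supported, preference):
    """Return the format a texture should end up in on this device.

    source     -- format the asset is stored in
    supported  -- set of formats the device can sample directly
    preference -- ordered list of formats to try when conversion is needed
    """
    # No conversion needed if the device already understands the source format.
    if source in supported:
        return source
    # Otherwise take the first preferred format the device supports.
    for candidate in preference:
        if candidate in supported:
            return candidate
    raise ValueError("device supports none of the preferred formats")
```

If `pick_target_format` returns something other than the source format, a transcode is required, and that is the cost the cache described next is meant to avoid paying twice.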

The caching layer is where the real efficiency argument lives. Rather than reconverting the same texture every time it's needed, the apparatus stores the transcoded result and retrieves it on subsequent requests. The patent also references a driver apparatus and caching apparatus as supporting components, suggesting this is part of a broader system-level architecture involving driver software managing the cache lifecycle.
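The transcode-then-cache behavior can be sketched in a few lines of Python. This is a software analogy under stated assumptions, not Intel's design: the filing as summarized here does not say how entries are keyed, stored, or evicted, so the `(texture_id, target_format)` key, the `TranscodeCache` class, and the `fake_transcode` stand-in are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple


@dataclass
class TranscodeCache:
    # The conversion routine is injected so the cache stays format-agnostic.
    transcode: Callable[[bytes, str], bytes]
    _store: Dict[Tuple[str, str], bytes] = field(default_factory=dict)
    hits: int = 0
    misses: int = 0

    def get(self, texture_id: str, data: bytes, target_format: str) -> bytes:
        # Assumed cache key: the same texture may be needed in several formats.
        key = (texture_id, target_format)
        cached = self._store.get(key)
        if cached is not None:
            self.hits += 1
            return cached
        # First request for this texture/format pair: convert once and remember it.
        self.misses += 1
        result = self.transcode(data, target_format)
        self._store[key] = result
        return result


def fake_transcode(data: bytes, target_format: str) -> bytes:
    # Stand-in for a real transcoder: it only tags the payload with the format.
    return target_format.encode() + b":" + data


cache = TranscodeCache(transcode=fake_transcode)
for _ in range(3):
    cache.get("brick_wall", b"\x00\x01", "bc7")
print(cache.hits, cache.misses)  # 2 1
```

Three requests for the same texture trigger one conversion and two cache hits. A production cache would also need an eviction policy and invalidation when the source asset changes, which this sketch leaves out.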

The claim is broad at this stage, covering an interface, processing circuitry, and the transcode-then-cache behavior as a single apparatus.

What this means for GPU memory and rendering workloads

For game engines and real-time rendering pipelines, repeatedly transcoding the same texture assets is a known performance drain — especially when switching between rendering contexts or loading assets from disk. A GPU-native cache that short-circuits that repeated work could meaningfully reduce latency and free up compute cycles for actual rendering.

For Intel specifically, this kind of infrastructure patent fits neatly into its Arc GPU and Xe graphics roadmap, where driver-level optimization is a known competitive pressure point. Whether this ends up in shipping silicon or driver software, it signals Intel is thinking carefully about how texture formats and execution environments interact across its GPU lineup.

This is a narrowly focused, infrastructure-level patent application, not a flashy AI feature or a new rendering technique. But texture format management is a genuinely painful problem in cross-platform graphics, and a GPU-side caching layer for transcoded textures is a sensible engineering answer. It is worth noting as a signal of where Intel is investing in Arc/Xe driver optimization, even if the claim itself is light on detail.

Editorial commentary on a publicly published patent application. Not legal advice.