Intel Patents an AI That Teaches Robots to Imagine What's Around the Corner

Robots are usually blind to whatever their camera isn't pointed at. Intel's new patent uses the same AI image-filling technique behind generative photo editors to stitch together a robot's full surroundings in real time, with no GPS required.

How Intel's diffusion model gives robots full-room awareness

Imagine you're trying to navigate a room, but you can only see through a narrow flashlight beam. You'd have to constantly remember where the walls and furniture were from earlier glances, and mentally combine that with what you're seeing right now. That's basically the problem robots face.

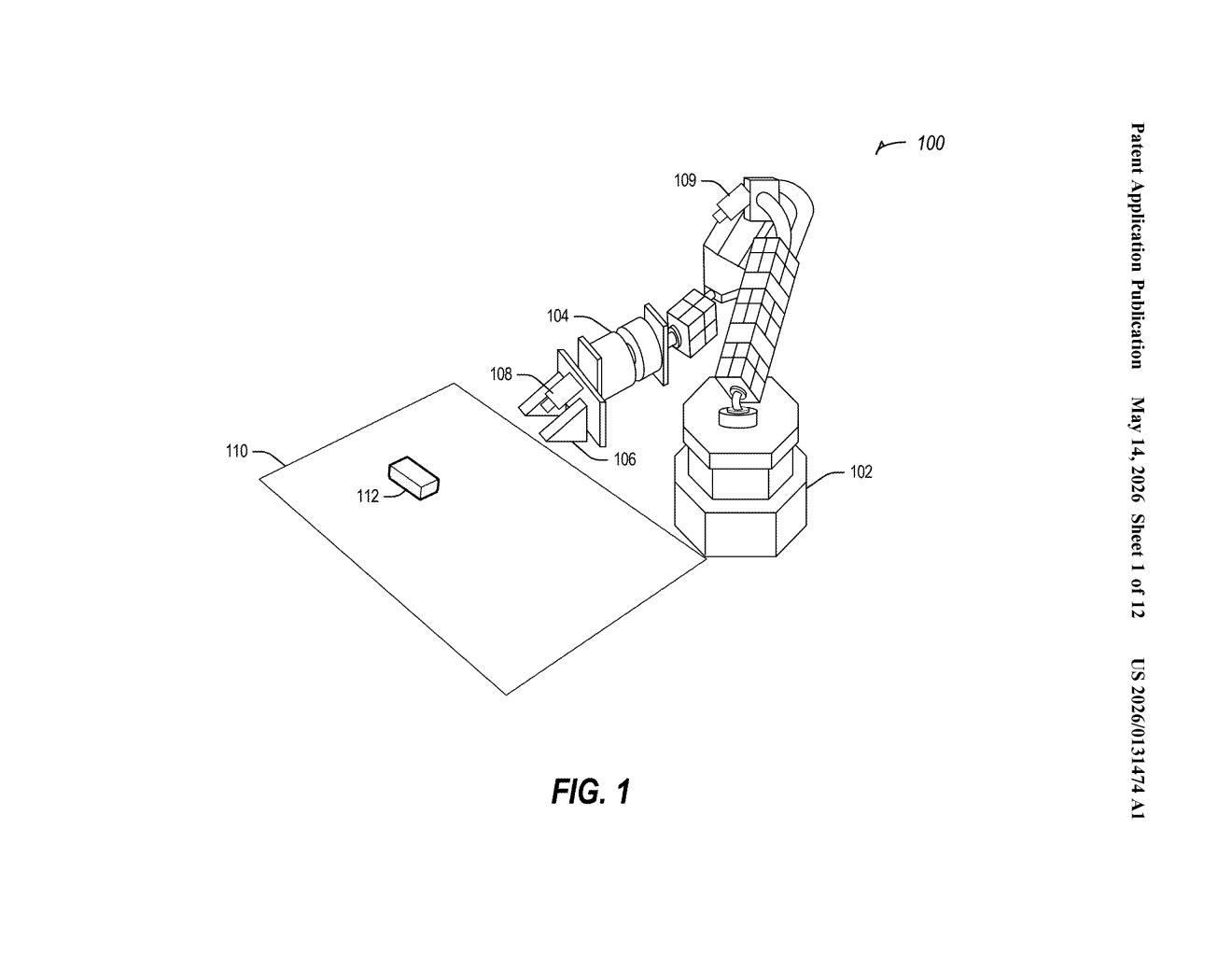

Intel's patent describes a system where a robot's camera captures its current narrow view, then blends that with a previously stored spherical image — a 360-degree snapshot of the whole environment — to build a continuously updated picture of everything around it. The clever part: gaps between the old panorama and the new camera frame are filled in using a generative diffusion model, the same family of AI behind tools like Stable Diffusion, which synthesizes plausible-looking pixels to bridge the two images seamlessly.
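
To make the "spherical image" idea concrete, here is a minimal sketch of how a viewing direction could map to a pixel in a 360-degree equirectangular panorama. The function name `direction_to_pixel` and the axis convention (y up, z forward) are assumptions for illustration, not details taken from the patent.

```python
import math

def direction_to_pixel(x, y, z, width, height):
    """Map a 3D viewing direction to (u, v) in an equirectangular image.

    Assumed convention (not from the patent): +z is forward, +y is up,
    and the image centre corresponds to looking straight ahead.
    """
    norm = math.sqrt(x * x + y * y + z * z)
    lon = math.atan2(x, z)        # longitude in [-pi, pi], 0 = forward
    lat = math.asin(y / norm)     # latitude in [-pi/2, pi/2], 0 = horizon
    u = (lon / (2 * math.pi) + 0.5) * width   # columns span 360 degrees
    v = (0.5 - lat / math.pi) * height        # rows span 180 degrees
    return u, v

# Looking straight ahead lands in the middle of a 2048x1024 panorama.
center = direction_to_pixel(0, 0, 1, 2048, 1024)
# Looking 90 degrees to the right lands three quarters of the way across.
right = direction_to_pixel(1, 0, 0, 2048, 1024)
```

Every direction the robot could face has a home somewhere in the panorama, which is why a narrow camera frame can be dropped into it at the right spot.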

The result is that the robot always has a coherent, full-surround view it can use to find its target and move its arm precisely — without needing any external GPS, motion-capture system, or localization hardware.

How spherical images and inpainting combine for robot guidance

The system works in three main stages that repeat as the robot moves:

- Capture the current view: The robot's onboard camera grabs a standard image of whatever portion of the environment it's currently facing.

- Retrieve the spherical reference: The system fetches a previously built spherical image (think: a full 360° equirectangular panorama) that encodes the robot's whole surrounding space.

- Merge via diffusion inpainting: The current camera frame is overlaid onto the spherical image. Where the two don't perfectly align — due to robot movement, parallax, or missing data — a generative diffusion model performs inpainting (AI-powered gap-filling) to produce a smooth, blended output called the modified spherical image.
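
The three stages above can be sketched roughly as follows. This is a heavily simplified illustration: the frame is pasted as a flat rectangle (a real system would reproject it onto the sphere), and `mean_fill` is a deliberately naive stand-in for the diffusion model, not an implementation of it. All function names here are hypothetical.

```python
import numpy as np

def overlay_frame(panorama, frame, top, left, seam=2):
    """Paste the camera frame into a copy of the panorama.

    Returns the merged image plus a boolean mask marking a thin band
    around the pasted border, i.e. the pixels we assume need inpainting.
    """
    merged = panorama.copy()
    h, w = frame.shape
    H, W = panorama.shape
    merged[top:top + h, left:left + w] = frame

    mask = np.zeros(panorama.shape, dtype=bool)
    # Mark the frame region grown by `seam` pixels...
    mask[max(top - seam, 0):min(top + h + seam, H),
         max(left - seam, 0):min(left + w + seam, W)] = True
    # ...then clear the interior so only the border band remains.
    mask[top + seam:top + h - seam, left + seam:left + w - seam] = False
    return merged, mask

def mean_fill(image, mask):
    """Placeholder inpainter: fills masked pixels with the unmasked mean.

    A diffusion model would synthesize plausible content here instead.
    """
    filled = image.copy()
    filled[mask] = image[~mask].mean()
    return filled

def update_panorama(panorama, frame, top, left, inpaint=mean_fill):
    """Capture, retrieve, merge: produce the modified spherical image."""
    merged, mask = overlay_frame(panorama, frame, top, left)
    return inpaint(merged, mask)

panorama = np.zeros((64, 128))   # stored spherical reference (grayscale)
frame = np.ones((16, 24))        # current narrow camera view
modified = update_panorama(panorama, frame, top=24, left=52)
```

Passing the inpainter in as a parameter keeps the sketch honest about what it leaves out: swapping `mean_fill` for a real generative model is where all the hard work would live.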

This modified spherical image feeds directly into visual servoing — a control technique where a robot continuously adjusts its movements by comparing what its camera sees against a desired target position, rather than relying on pre-programmed coordinates.
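
For a feel of what visual servoing looks like in code, here is a minimal proportional sketch. It assumes an idealized setup in which the tracked image feature moves exactly as commanded; a real arm would map the image error through a Jacobian to joint velocities. The `servo_step` function and the gain value are illustrative assumptions, not the patent's control law.

```python
import numpy as np

def servo_step(current, target, gain=0.5):
    """Return a correction proportional to the image-space error."""
    error = current - target
    return -gain * error

# Pixel position of the target as currently seen, and where we want it.
current = np.array([10.0, 200.0])
target = np.array([320.0, 240.0])

# Idealized loop: each iteration halves the remaining error.
for _ in range(50):
    current = current + servo_step(current, target)
```

The loop never consults a world coordinate. It only compares where the feature is in the image with where it should be, which is the property that lets the approach work without external localization.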

Critically, the patent specifies that the whole pipeline operates independently of any external positioning or localization system, meaning no motion-capture rigs, no GPS, no laser trackers. The robot figures out where it is and where to go purely from vision and the AI-synthesized panorama.

What this means for GPS-free industrial robotics

Most precision robotics in factories and labs today leans heavily on external infrastructure, such as laser trackers, motion-capture arrays, or carefully calibrated fixed cameras, to tell a robot arm exactly where it is. That infrastructure is expensive, fragile, and hard to redeploy. A robot that can localize and servo itself purely from onboard vision plus an AI-refreshed panorama could be significantly cheaper to operate and easier to move to new environments.

For Intel, this fits squarely into its push to make edge AI silicon relevant for robotics and industrial automation. The compute-heavy diffusion inference behind the inpainting would presumably run on Intel's own processors, giving the company a natural hardware angle. If the technique works reliably in noisy real-world conditions (lighting changes, occlusions, moving objects), it could meaningfully reduce what it costs to deploy a robot arm outside a tightly controlled lab.

This is genuinely interesting work at the intersection of generative AI and robotics control, not just an incremental tweak. The key bet is that a diffusion model can inpaint robot-panorama gaps fast enough and accurately enough to be useful in real-time servo loops — which is a meaningful unsolved problem. Whether Intel can make the inference latency practical on edge hardware is the real question, and the patent wisely sidesteps that by describing the architecture rather than the performance numbers.

Editorial commentary on a publicly published patent application. Not legal advice.