Google Patents a Source-Tracking System to Cut AI Hallucinations

AI hallucinations, where a model confidently makes things up, are one of the biggest trust problems in generative AI right now. Google's new patent describes a way to score every candidate response by how much it actually relies on the sources a user has selected, then filter out the candidates that stray too far from them.

How Google's source-scoring approach fights AI make-believe

Imagine you ask an AI assistant a question and also tell it: "only use information from this document." A typical model might still blend in material it learned during training, material that could be wrong or completely fabricated. Google's patent tries to fix that by having the model score each candidate answer by which sources it actually draws from.

Here's the idea: instead of generating one answer and hoping for the best, the system produces multiple candidate answers. Each one carries a kind of receipt — a score that says how much each information source contributed to it. If you told the system to stick to a particular source, it will pick the candidate whose score shows the strongest alignment with that source.
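
A minimal sketch of that "pick the best-aligned receipt" step, assuming each candidate already arrives with a dictionary of per-source scores. The `Candidate` type, the `pick_most_aligned` function, the source names, and the score values are all hypothetical illustrations, not details taken from the patent.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    # HYPOTHETICAL per-source scores: how much each source contributed (0.0 to 1.0).
    source_scores: dict[str, float]


def pick_most_aligned(candidates: list[Candidate], selected_source: str) -> Candidate:
    """Return the candidate with the highest score for the user's selected source."""
    # A source a candidate never drew on is treated as a score of 0.0.
    return max(candidates, key=lambda c: c.source_scores.get(selected_source, 0.0))


candidates = [
    Candidate("Answer A", {"user_doc": 0.35, "model_memory": 0.65}),
    Candidate("Answer B", {"user_doc": 0.90, "model_memory": 0.10}),
    Candidate("Answer C", {"user_doc": 0.60, "model_memory": 0.40}),
]

best = pick_most_aligned(candidates, "user_doc")
print(best.text)  # Answer B
```

Asking for `"model_memory"` instead would return Answer A, the candidate leaning hardest on internal knowledge, which is exactly the one the user's source preference is meant to push aside.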

The result is that answers that lean too heavily on the model's internal guesswork (instead of your chosen source) get filtered out before you ever see them. It's a practical, pipeline-level check on one of AI's most frustrating habits.

How candidate responses get scored and filtered by source

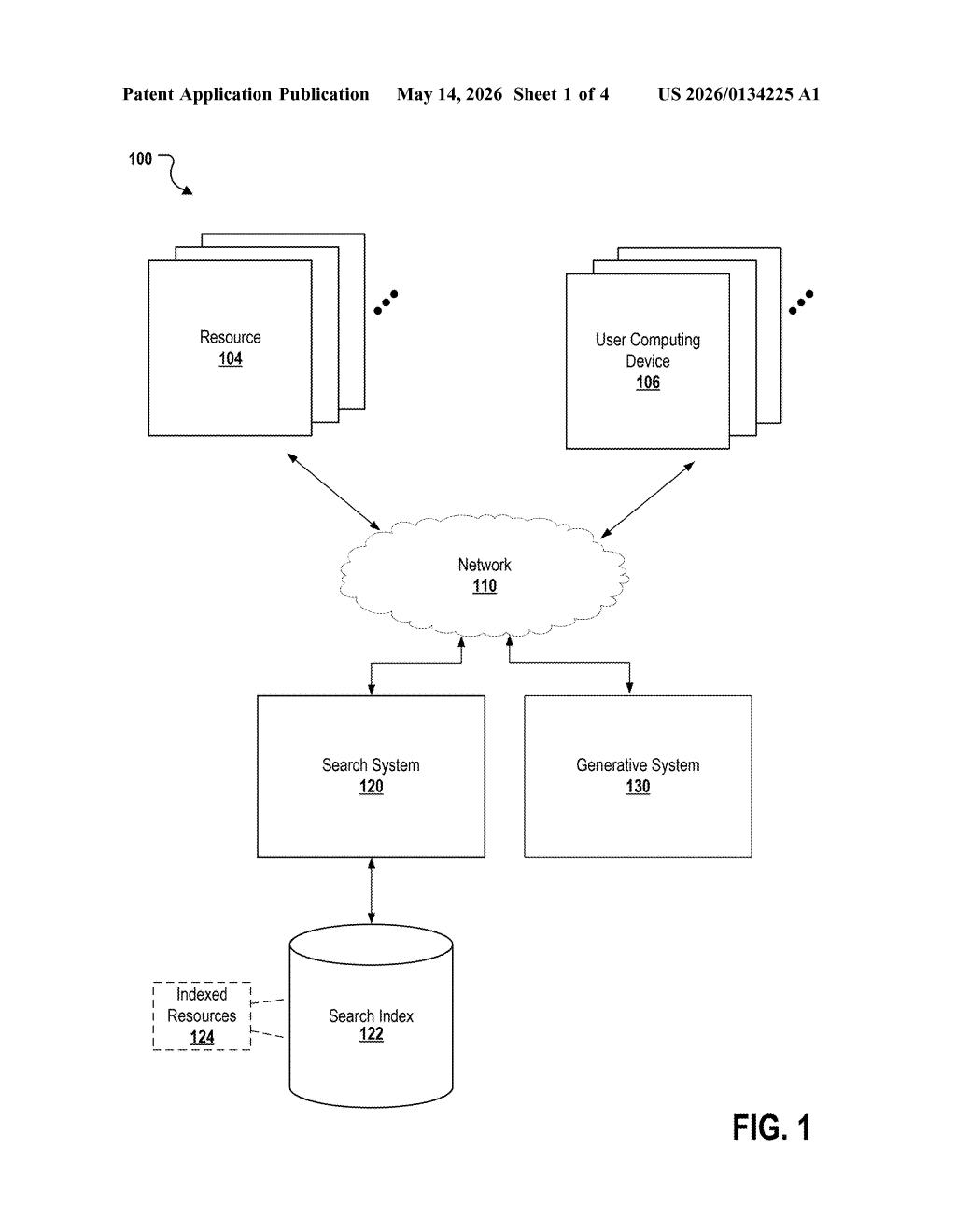

The patent describes a multi-candidate generation and selection pipeline built on top of a generative model. Here's how the pieces fit together:

- The user submits a query and explicitly selects a preferred source from a pool of available sources of information.

- The generative model produces several candidate responses at once, each computed using a weighted summation across all available sources. Think of it as a blended recipe that records how much of each source went into the answer.

- Each candidate also carries a per-source score — a numeric measure of how strongly that answer is grounded in each individual source.

- The system then selects the winning candidate by taking each candidate's score for the user's chosen source and comparing it against a threshold value (a minimum acceptable grounding level). Candidates that fall below the threshold, meaning they drift too far from the selected source, are discarded.
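
The four steps above can be sketched end to end as a toy. The patent's internals aren't spelled out here, so this rests on stated assumptions: "weighted summation" is modeled as mixing each source's answer distribution by a normalized weight, and a candidate's per-source score is taken to be that source's share of the total weight. `SOURCE_DISTRIBUTIONS`, `per_source_scores`, `blend`, `select`, and every number below are hypothetical.

```python
from typing import Optional

# HYPOTHETICAL: each source's probability distribution over a tiny answer vocabulary.
# A real system would get these by conditioning the model on each source.
SOURCE_DISTRIBUTIONS: dict[str, dict[str, float]] = {
    "user_doc": {"answer_from_doc": 0.9, "answer_from_memory": 0.1},
    "model_memory": {"answer_from_doc": 0.3, "answer_from_memory": 0.7},
}


def per_source_scores(weights: dict[str, float]) -> dict[str, float]:
    """Assumed per-source score: each source's share of the total weight."""
    total = sum(weights.values())
    return {source: w / total for source, w in weights.items()}


def blend(weights: dict[str, float]) -> dict[str, float]:
    """Weighted summation: mix every source's distribution by its normalized weight."""
    mixed: dict[str, float] = {}
    for source, share in per_source_scores(weights).items():
        for answer, p in SOURCE_DISTRIBUTIONS[source].items():
            mixed[answer] = mixed.get(answer, 0.0) + share * p
    return mixed


def select(
    candidates: list[dict[str, float]], selected_source: str, threshold: float
) -> Optional[tuple[str, float]]:
    """Discard candidates scoring below the threshold for the selected source, then
    return (answer, score) for the best-grounded survivor, or None if none survive."""
    best: Optional[tuple[str, float]] = None
    for weights in candidates:
        score = per_source_scores(weights).get(selected_source, 0.0)
        if score < threshold:
            continue  # drifts too far from the selected source
        if best is None or score > best[1]:
            mixed = blend(weights)
            best = (max(mixed, key=mixed.get), score)
    return best


# Three hypothetical candidates, described by their raw per-source mixing weights.
candidates = [
    {"user_doc": 1.0, "model_memory": 3.0},  # user_doc share 0.25
    {"user_doc": 4.0, "model_memory": 1.0},  # user_doc share 0.8
    {"user_doc": 1.0, "model_memory": 1.0},  # user_doc share 0.5
]

print(select(candidates, "user_doc", threshold=0.6))  # ('answer_from_doc', 0.8)
```

Blending the first candidate's weights would favor `answer_from_memory`; the threshold removes that candidate before it can compete. If no candidate clears the threshold, this sketch simply returns `None`.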

The threshold value is the key enforcement mechanism. It's essentially a dial for how strictly the system must stick to the designated source before presenting an answer. A higher threshold means more conservative, tightly sourced responses; a lower one gives the model more latitude.
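
To see the dial in action, here is a small sketch that sweeps the threshold over one fixed set of grounding scores. The candidate names, the scores, and the `surviving` helper are hypothetical; they only illustrate that raising the threshold shrinks the pool of acceptable answers.

```python
def surviving(scores: dict[str, float], threshold: float) -> list[str]:
    """Names of candidates whose grounding in the selected source meets the threshold."""
    return sorted(name for name, score in scores.items() if score >= threshold)


# HYPOTHETICAL grounding scores for the user's selected source.
scores = {"A": 0.35, "B": 0.90, "C": 0.60}

for threshold in (0.3, 0.5, 0.8, 0.95):
    print(threshold, surviving(scores, threshold))
# 0.3 ['A', 'B', 'C']
# 0.5 ['B', 'C']
# 0.8 ['B']
# 0.95 []
```

At 0.95 nothing survives, so a real system would need some fallback behavior; this sketch deliberately does not model one.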

Unlike post-hoc citation tagging (where a model answers first and finds citations afterward), this approach bakes source attribution into the selection logic itself.
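
The difference can be sketched in a few lines. Both functions below are hypothetical toys, and the candidate tuples (text, model likelihood, grounding score) are invented: the post-hoc version picks the most fluent answer and then stamps a citation on it, while the selection-time version filters on grounding before anything is chosen.

```python
from typing import Optional

# HYPOTHETICAL candidates: (text, model likelihood, grounding score for the selected source).
CANDIDATES: list[tuple[str, float, float]] = [
    ("Fluent answer mostly from memory", 0.9, 0.2),
    ("Answer drawn from the document", 0.7, 0.85),
]


def post_hoc(candidates: list[tuple[str, float, float]], source: str) -> tuple[str, float]:
    """Answer first (highest likelihood), then attach a citation afterwards.
    The citation is added whether or not the answer is actually grounded."""
    text, _likelihood, grounding = max(candidates, key=lambda c: c[1])
    return f"{text} [source: {source}]", grounding


def grounded_selection(
    candidates: list[tuple[str, float, float]], threshold: float
) -> Optional[tuple[str, float]]:
    """Filter on grounding before choosing, then keep the best-grounded survivor."""
    survivors = [c for c in candidates if c[2] >= threshold]
    if not survivors:
        return None
    text, _likelihood, grounding = max(survivors, key=lambda c: c[2])
    return text, grounding


print(post_hoc(CANDIDATES, "user_doc"))
# ('Fluent answer mostly from memory [source: user_doc]', 0.2)
print(grounded_selection(CANDIDATES, threshold=0.5))
# ('Answer drawn from the document', 0.85)
```

The post-hoc answer carries a citation but only 0.2 grounding; the selection-time version never lets that candidate through.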

What this means for AI reliability in Google products

Hallucinations aren't just embarrassing. In enterprise, legal, medical, or research contexts, they can be genuinely harmful. A system that lets users designate a trusted source and then enforces that choice algorithmically is a meaningful step toward making AI assistants auditable. If Google puts this technique to work, it would matter most in products like NotebookLM or Gemini with grounding, where the value proposition is specifically "AI that stays within your documents."

The approach also shifts some control back to the user. Rather than trusting a black-box model to cite correctly, you get a pipeline that is structurally biased toward your chosen source. That's a different — and arguably more honest — contract between the AI and the person using it.

This is a solid, practical patent that addresses a real problem rather than chasing a flashy capability. The weighted-summation-plus-threshold mechanism is the kind of unglamorous infrastructure work that actually makes AI products more trustworthy in production. It's not novel enough to be a research breakthrough, but it's exactly the kind of system you'd want quietly running inside a document-grounded AI tool.

Editorial commentary on a publicly published patent application. Not legal advice.