Google Patents an AI System That Shows You Wearing Clothes Without a Fitting Room

Imagine being able to see exactly how a jacket looks on your body before buying it online — no guesswork, no returns. Google is patenting an AI image-generation system designed to do exactly that, and the technical approach is more sophisticated than the simple "paste a shirt onto a photo" tricks you've seen before.

What Google's virtual try-on diffusion system actually does

Picture this: you're shopping online, you find a coat you love, and instead of squinting at a size chart, you upload a photo of yourself and the AI shows you wearing that exact coat. That's the core idea behind this Google patent.

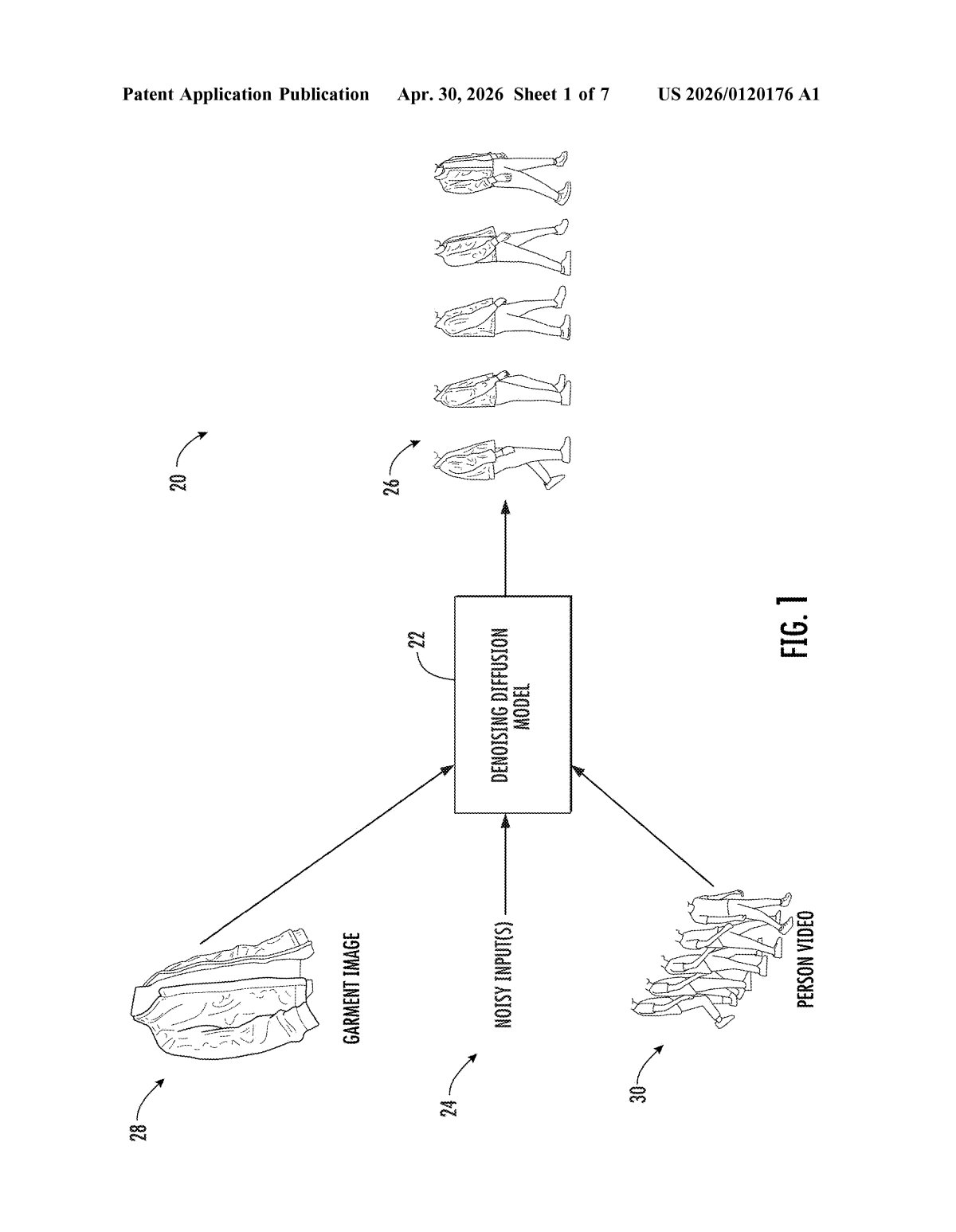

The system uses a type of AI called a diffusion model, the same family of technology behind image generators like Stable Diffusion and, reportedly, Midjourney, to synthesize a realistic photo of you in a specific garment. The clever part is how it balances multiple sources of information at once: what you look like, what the garment looks like, and how the two should fit together naturally.

Rather than just slapping a clothing image onto your photo, the model runs multiple rounds of refinement, each time incorporating different "conditioning" clues (like your body shape or the garment's texture) to build up a more accurate and realistic result. The goal is a final image that looks like you actually tried the item on.

How split classifier-free guidance builds the try-on image

The patent describes a method called split classifier-free guidance, a technique for controlling how a diffusion model generates its output image.

Here's the core loop: the model starts with a noisy input (think of it as a scrambled, static-filled image) and progressively cleans it up over many diffusion timesteps — each step nudging the image closer to something coherent. What makes this approach distinct is that instead of applying all the guidance signals at once, the system applies them sequentially across multiple update iterations within each timestep:

- It first generates an initial prediction from the noisy input with no conditioning.

- It then loops through several sets of conditioning inputs — these could include the person's appearance, the garment's visual details, or pose information.

- Each loop updates the working prediction, layering in new constraints one group at a time.
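To make those three steps concrete, here is a minimal Python sketch of a single timestep. Treat it as an illustration under stated assumptions, not the patent's actual implementation: the names `split_cfg_prediction` and `toy_denoiser`, the per-group guidance weights, and the exact update rule (add each newly included group's weighted change to the running prediction) are all hypothetical choices made for this example. A real system would call a trained neural network where the toy denoiser sits.

```python
from typing import Callable, Dict, List, Sequence

Vector = List[float]
Conditioning = Dict[str, Vector]
Denoiser = Callable[[Vector, Conditioning], Vector]


def split_cfg_prediction(
    denoiser: Denoiser,
    noisy_input: Vector,
    conditioning_groups: Sequence[Conditioning],
    weights: Sequence[float],
) -> Vector:
    """Build one timestep's prediction by adding conditioning one group at a time.

    ASSUMPTION: the update rule below is an illustrative guess at how the
    sequential updates could work, not the formula claimed in the patent.
    """
    if len(conditioning_groups) != len(weights):
        raise ValueError("need exactly one guidance weight per conditioning group")

    # Step 1: initial prediction from the noisy input with no conditioning.
    previous = denoiser(noisy_input, {})
    prediction = list(previous)

    # Steps 2 and 3: switch on one more conditioning group per iteration and
    # add the weighted change it causes to the working prediction.
    active: Conditioning = {}
    for group, weight in zip(conditioning_groups, weights):
        active = {**active, **group}
        current = denoiser(noisy_input, active)
        prediction = [
            p + weight * (c - q)
            for p, c, q in zip(prediction, current, previous)
        ]
        previous = current
    return prediction


def toy_denoiser(x: Vector, conditioning: Conditioning) -> Vector:
    """Hypothetical stand-in for a trained network.

    Halves the input, then adds every active conditioning vector.
    """
    out = [0.5 * v for v in x]
    for vec in conditioning.values():
        out = [o + c for o, c in zip(out, vec)]
    return out


noisy = [1.0, -2.0]
groups = [{"person": [1.0, 0.0]}, {"garment": [0.0, 1.0]}]
print(split_cfg_prediction(toy_denoiser, noisy, groups, weights=[2.0, 3.0]))
# prints [2.5, 2.0]
```

With every weight set to 1.0, the updates cancel out and you get back the fully conditioned prediction. Raising one group's weight pushes the result harder toward that group, which is the kind of per-input control the split approach is meant to offer.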

Classifier-free guidance (CFG) is a standard diffusion trick where you run the model with and without a prompt, then amplify the difference to steer the output. The "split" innovation here is doing that steering across multiple separate conditioning groups rather than one combined prompt, which in theory gives the model finer-grained control over how each input influences the final image.
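The "with and without a prompt" step boils down to one line of arithmetic. The sketch below shows the standard single-prompt form on plain Python lists. The function name and the example numbers are made up for illustration; in a real model both inputs would be predictions produced by the network.

```python
from typing import List


def classifier_free_guidance(
    unconditional: List[float],
    conditional: List[float],
    guidance_scale: float,
) -> List[float]:
    """Start from the unconditional prediction and amplify the difference
    that the prompt makes, scaled by guidance_scale."""
    return [
        u + guidance_scale * (c - u)
        for u, c in zip(unconditional, conditional)
    ]


# Hypothetical predictions for a two-value "image".
without_prompt = [0.5, -1.0]
with_prompt = [1.5, 0.0]

print(classifier_free_guidance(without_prompt, with_prompt, 1.0))  # prints [1.5, 0.0]
print(classifier_free_guidance(without_prompt, with_prompt, 3.0))  # prints [3.5, 2.0]
```

A scale of 1.0 simply returns the prompted prediction, while larger values overshoot it in the direction the prompt points. That overshoot is the "amplify the difference" part.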

The output is a photorealistic image showing a specific person wearing a specific garment.

What this means for online shopping and Google's retail ambitions

Online clothing retail has a massive returns problem. Commonly cited estimates put apparel return rates at roughly 20–30%, largely because items don't look the way shoppers imagined. A credible virtual try-on tool that works at scale could meaningfully cut those returns, and it's a space Google has been circling with its Shopping and Search products for years.

For you as a shopper, this would mean seeing yourself in a garment before committing to a purchase. For Google, embedding this into Shopping or Google Lens would deepen its position as the starting point for product discovery and give it a more durable edge over Amazon and dedicated fashion platforms. The diffusion-model approach is also, at least in principle, more flexible than older warping-based try-on methods: it can generate complex garments, varied poses, and realistic fabric drape without needing a 3D model of the clothing.

Virtual try-on is a crowded space — Snap, Amazon, and a dozen startups are all chasing the same problem — but Google's use of split classifier-free guidance is a real technical idea, not just a vague AI hand-wave. Whether this specific mechanism turns out to be the right approach is an open question, but the patent signals Google is investing engineering depth here, not just slapping "AI" on a feature roadmap slide.


Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.