Nvidia Patents Reinforcement Learning System for Designing Faster, Smaller Circuits

Chip design is one of the most painstaking jobs in engineering — and Nvidia thinks reinforcement learning can do it better than humans. This patent describes an AI system that iteratively improves the internal wiring of arithmetic circuits until it lands on designs that are smaller, faster, and more power-efficient than anything drawn by hand.

How Nvidia trains AI to lay out chip circuits

Imagine you're trying to find the fastest route through a city, but the city has millions of intersections and changes every time you reroute. That's roughly what chip designers face when laying out the logic circuits inside a processor. Every adder, multiplier, and comparator is a puzzle of gates and wires, and even small improvements in layout can shave nanoseconds off calculations or milliwatts off power draw.

Nvidia's patent describes a system where a machine learning model — trained using reinforcement learning — takes over that puzzle-solving job. Instead of a human engineer manually sketching out a circuit topology, the AI starts with an initial design, evaluates how good it is, makes targeted changes, and gradually works toward a layout that beats conventional designs on area (how much chip space it uses), power consumption, and delay (how fast signals travel through it).

The focus is on a class of circuits called parallel prefix circuits — the building blocks of fast adders inside every processor. These are mundane but critical components. Getting them even slightly more efficient, at the scale Nvidia operates, translates directly to faster GPUs and better performance per watt.

How the RL agent optimizes parallel prefix circuit states

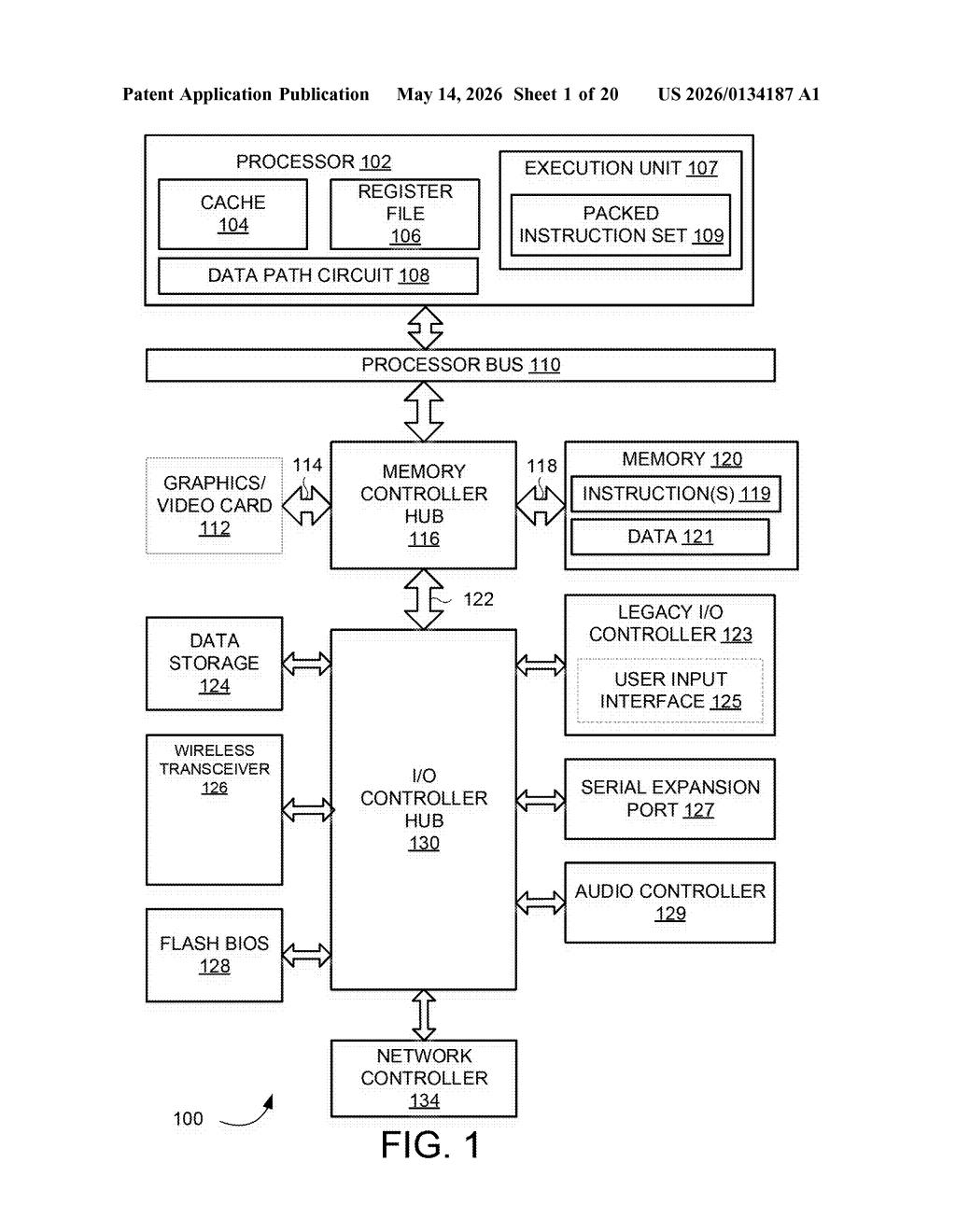

The patent centers on automating the design of data path circuits — the arithmetic and logic structures at the heart of any processor. The specific example highlighted is parallel prefix circuits, which are the structural backbone of high-speed binary adders (think: the circuitry that handles addition inside every arithmetic logic unit).

The process works like this:

- A design state — a representation of the current circuit topology — is fed into a machine learning model.

- The model, trained via reinforcement learning (a trial-and-error approach where an agent receives rewards for good outcomes and penalties for bad ones), outputs a sequence of modifications to the design.

- The system iterates, evaluating each new state against metrics like area, power, and timing delay, until it converges on a final design state that outperforms conventional hand-tuned or rule-based approaches.

The reward signal is what makes this work: instead of a human deciding whether a change is an improvement, the RL agent learns to predict which structural choices lead to better silicon-level outcomes. This is conceptually similar to how AlphaGo learned to play Go — not by memorizing rules, but by discovering strategies through repeated self-play against a measurable objective.

The patent names a team with deep roots in both GPU architecture and AI research, including Bryan Catanzaro and Stuart Oberman, signaling this isn't a theoretical exercise — it's tied to real chip design infrastructure at Nvidia.

What AI-designed circuits mean for Nvidia's GPU roadmap

Chip design is a bottleneck for the entire semiconductor industry. Even small improvements in arithmetic circuit efficiency compound across billions of transistors — a parallel prefix adder that uses 5% less area or runs 3% faster isn't a rounding error at GPU scale. Nvidia's ability to automate this with RL could mean faster design cycles and chips that push closer to physical limits without proportional increases in engineering headcount.

This also fits squarely into a broader industry trend: using AI to accelerate chip design itself (sometimes called "AI for EDA" — Electronic Design Automation). Google made waves with a similar approach for chip floorplanning. If Nvidia can apply RL to the more granular level of arithmetic circuit topology, you could eventually see the gains show up in GPU generations that arrive faster and with better power efficiency than the current pace suggests.

This is genuinely interesting work, not just a speculative filing. The combination of a credible inventor list — Catanzaro and Oberman have real GPU architecture credentials — and a concrete, measurable optimization target (area, power, delay on parallel prefix circuits) makes this feel like a real internal tool being formalized into IP. The fact that it mirrors Google's DeepMind floorplanning work but targets a lower, more granular level of abstraction is the key signal: Nvidia is building an AI-assisted design stack from the arithmetic primitives up.

Get one Big Tech patent every Sunday

Plain English, intelligent commentary, no hype. Free.

Editorial commentary on a publicly published patent application. Not legal advice.