Qualcomm's New Patent Patches Lost Packets Before You Ever See Them

When you stream a game or VR scene over Wi-Fi, dropped packets can tear apart the image in ways that feel jarring and wrong. Qualcomm's new patent describes a system that quietly patches those gaps using data from the frame you just saw.

What Qualcomm's split rendering error fix actually does

Imagine you're playing a cloud-streamed VR game and your Wi-Fi hiccups for a split second. Instead of a corrupted or frozen frame, the image just... looks fine. That's the goal of this Qualcomm patent.

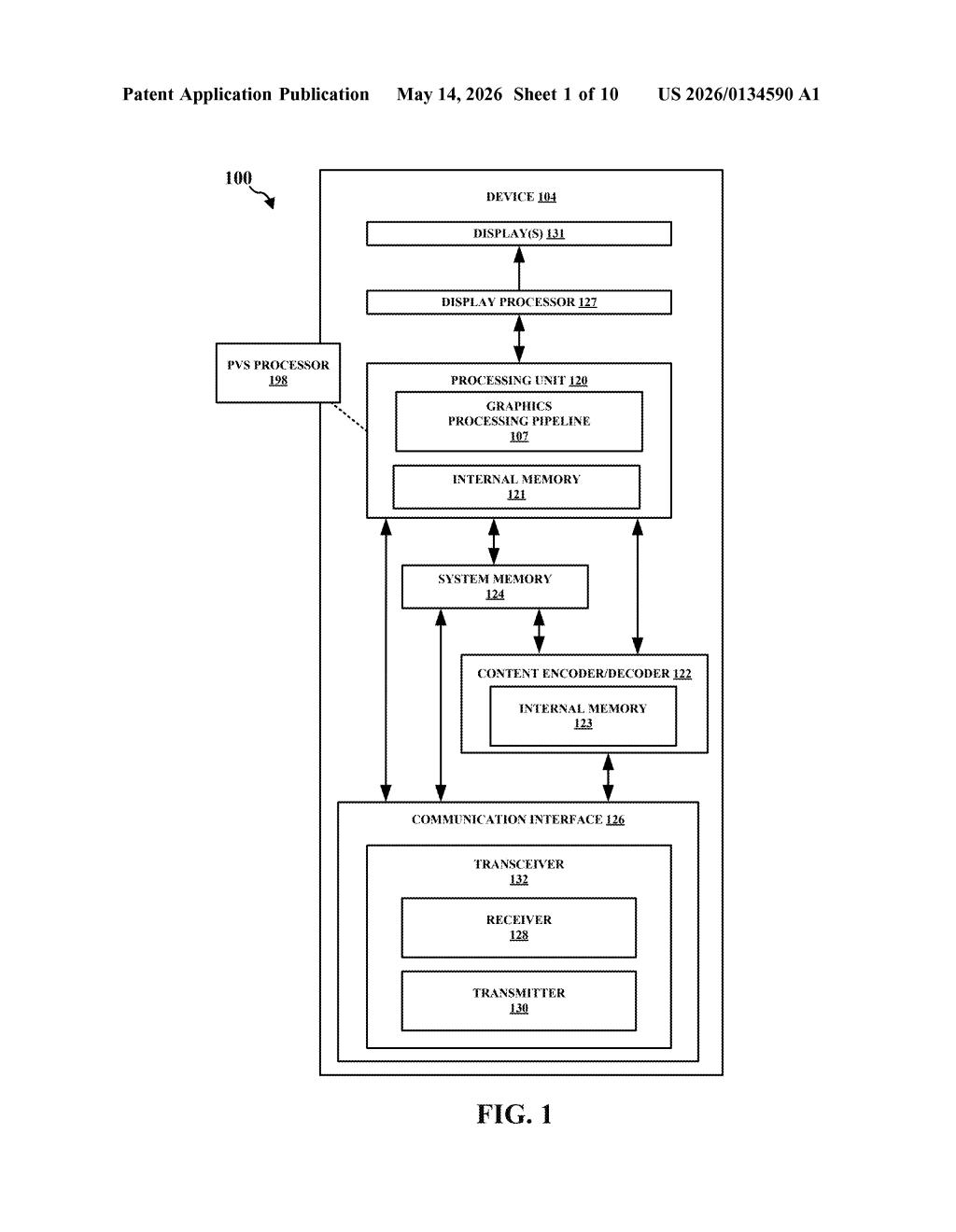

In split rendering, the heavy graphics work happens on a server, and only the results get sent to your device over the network: compressed video plus a map of which objects should be visible. That map is known as a Potentially Visible Set (PVS). The problem is that both streams are sent over UDP, a fast but unreliable protocol that doesn't guarantee every packet arrives.

This patent describes how the receiving device detects when PVS data or video packets go missing, then fills in the blanks using geometry information from the previous frame. The result is a smoother experience even when the network isn't cooperating, which is common in real-world wireless environments.

How the PVS concealment loop patches missing packets

The patent centers on the PVS: essentially a pre-computed list of 3D geometry chunks, called meshlets, that should be visible to the user from a given camera position. The server sends this list to the client device alongside compressed video packets.
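The article doesn't describe how the PVS is laid out on the wire. As a minimal sketch under a hypothetical layout (`PvsPacket`, `encode_pvs`, `ids_covered_by`, and the fixed `ids_per_packet` range are all illustrative, not taken from the patent), a server could split the visible-meshlet list so that each packet covers a fixed range of meshlet IDs. That choice means a lost packet maps to a known set of meshlets, which is exactly what the client needs in order to work out which meshlets a loss affects.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PvsPacket:
    """One packet of a hypothetical PVS wire format.

    Packet k carries visibility for meshlet IDs in the fixed range
    [k * ids_per_packet, (k + 1) * ids_per_packet).
    """
    frame_id: int
    packet_index: int
    visible_ids: frozenset[int]


def encode_pvs(frame_id: int, visible: set[int], total_meshlets: int,
               ids_per_packet: int) -> list[PvsPacket]:
    """Split one frame's visible-meshlet set into fixed ID-range packets."""
    packet_count = -(-total_meshlets // ids_per_packet)  # ceiling division
    packets = []
    for k in range(packet_count):
        lo, hi = k * ids_per_packet, (k + 1) * ids_per_packet
        # Ranges with nothing visible still get a packet, so a lost packet is
        # distinguishable from "no visible meshlets in this range".
        in_range = frozenset(i for i in visible if lo <= i < hi)
        packets.append(PvsPacket(frame_id, k, in_range))
    return packets


def ids_covered_by(packet_index: int, ids_per_packet: int,
                   total_meshlets: int) -> range:
    """Meshlet IDs a packet covered, known even if its payload never arrived."""
    lo = packet_index * ids_per_packet
    return range(lo, min(lo + ids_per_packet, total_meshlets))
```

For example, `encode_pvs(7, {1, 4, 5, 9}, total_meshlets=10, ids_per_packet=4)` yields three packets covering IDs 0 to 3, 4 to 7, and 8 to 9. If packet 1 is lost, `ids_covered_by(1, 4, 10)` tells the client that meshlets 4 through 7 have unknown visibility for this frame.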

Because both the PVS and video are transmitted over UDP (User Datagram Protocol — a "send and forget" transport that skips the delivery guarantees of TCP in exchange for low latency), packets can arrive out of order or not at all.
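The article doesn't say how the client notices a loss. UDP itself doesn't number datagrams, so a common approach is for the application to add its own sequence number (RTP, widely used for real-time media, carries one in its header). A minimal sketch, assuming each packet carries a hypothetical per-frame sequence number and the sender also announces how many packets the frame contains, so that losses at the tail of a frame are still caught:

```python
def classify_arrivals(arrivals: list[int],
                      expected_count: int) -> tuple[list[int], list[int]]:
    """Sort one frame's datagrams into missing and out-of-order sequence numbers.

    `arrivals` lists sequence numbers in the order the datagrams arrived.
    `expected_count` is the packet count announced by the sender (a
    hypothetical header field); sequence numbers run 0..expected_count-1.
    """
    seen: set[int] = set()
    out_of_order: list[int] = []
    highest = -1
    for seq in arrivals:
        if seq in seen:
            continue  # UDP may also deliver duplicates; ignore repeats
        seen.add(seq)
        if seq < highest:
            out_of_order.append(seq)  # arrived after a higher-numbered packet
        highest = max(highest, seq)
    missing = [seq for seq in range(expected_count) if seq not in seen]
    return missing, out_of_order
```

For example, arrivals `[0, 1, 3, 2, 5]` for a six-packet frame report packet 4 as missing and packet 2 as out of order. In a real-time renderer this check would run at a per-frame deadline rather than waiting indefinitely, since a packet that shows up after its frame has been displayed is effectively lost.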

When the client's graphics processor detects a loss, it:

- Identifies which specific meshlets in the current frame are affected by the missing data

- Looks up concealment information — geometry and visibility state — from those same meshlets in the previous frame

- Substitutes that prior-frame data to fill the gap and completes the render

The current frame (the patent's "second frame") is then rendered from three sources: the intact parts of the new PVS, the concealment data patched in from the previous frame, and any meshlets that arrived cleanly. The approach is similar in spirit to error concealment in video codecs, but it operates at the 3D geometry layer rather than the pixel layer.
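The three steps and the final blend can be sketched as follows. This is a minimal illustration, not Qualcomm's implementation: `MeshletState`, `find_affected`, `conceal_frame`, `draw_list`, and the packet-to-meshlet mapping are hypothetical, and for brevity the sketch folds "intact parts of the new PVS" and "meshlets that arrived cleanly" into a single `received` bucket. The article also doesn't say what happens when an affected meshlet has no previous-frame data; here it is left out of the frame and flagged `MISSING`, which is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Source(Enum):
    RECEIVED = "received"    # arrived intact for the current frame
    CONCEALED = "concealed"  # patched in from the previous frame
    MISSING = "missing"      # affected, and no prior-frame data to reuse (assumption)


@dataclass(frozen=True)
class MeshletState:
    """Hypothetical per-meshlet concealment info: visibility plus geometry."""
    visible: bool
    geometry: bytes


def find_affected(lost_packets: set[int],
                  packet_meshlets: dict[int, set[int]]) -> set[int]:
    """Step 1: every meshlet whose data travelled in a lost packet."""
    return set().union(*(packet_meshlets.get(p, set()) for p in lost_packets))


def conceal_frame(received: dict[int, MeshletState],
                  affected: set[int],
                  previous: dict[int, MeshletState]):
    """Steps 2-3: patch affected meshlets with their previous-frame state."""
    frame: dict[int, MeshletState] = {}
    provenance: dict[int, Source] = {}
    for mid, state in received.items():
        if mid not in affected:  # intact data for this frame is used as-is
            frame[mid] = state
            provenance[mid] = Source.RECEIVED
    for mid in affected:
        prior = previous.get(mid)  # step 2: same meshlet in the previous frame
        if prior is not None:
            frame[mid] = prior  # step 3: substitute the prior-frame data
            provenance[mid] = Source.CONCEALED
        else:
            provenance[mid] = Source.MISSING
    return frame, provenance


def draw_list(frame: dict[int, MeshletState]) -> list[int]:
    """Meshlet IDs the renderer would actually draw, in ID order."""
    return sorted(mid for mid, state in frame.items() if state.visible)
```

If meshlets 2, 3, and 6 rode in a lost packet, meshlets 2 and 3 are restored from the previous frame (keeping their prior visibility), meshlet 6 is flagged as missing, and clean arrivals pass through untouched. Tracking provenance separately from the frame data keeps the concealment decision inspectable; a real renderer would also need a policy for how many consecutive frames a meshlet can be patched before stale geometry becomes noticeable, which the article doesn't cover.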

What this means for wireless VR and cloud gaming streams

Split rendering is a leading architecture for wireless VR: your headset doesn't need a gaming PC inside it if a nearby server can do the heavy lifting. But the whole model falls apart if the wireless link isn't rock-solid. Recovering from packet loss at the geometry layer is a meaningful step toward making split rendering practical in real environments, not just controlled demos.

For you as a user, this is the kind of unglamorous infrastructure work that makes a product feel polished versus broken. Qualcomm makes the chips inside most major standalone VR headsets, so a patent like this is directly relevant to where that hardware goes next.

This is solid, focused engineering on a real problem, not a moonshot. Split rendering over lossy wireless links is a genuinely hard challenge, and applying frame-level concealment at the PVS/meshlet layer is a sensible and non-obvious approach. It's not a headline-grabbing AI patent, but it's the kind of groundwork wireless VR products need before they can leave the lab.

Editorial commentary on a publicly published patent application. Not legal advice.