Samsung Patents a Touch-Detection System That Listens and Feels for Taps

What if your device could figure out where you tapped — not just from the touchscreen itself, but from the sound of the tap and the tiny vibration it created? That's the core idea in Samsung's latest patent filing.

How Samsung's microphone-assisted touch detection works

Imagine tapping your finger on a screen that has several overlapping icons or interactive elements clustered closely together. The touchscreen alone might struggle to tell which one you meant to hit — a frustrating experience anyone with slightly larger fingers knows well.

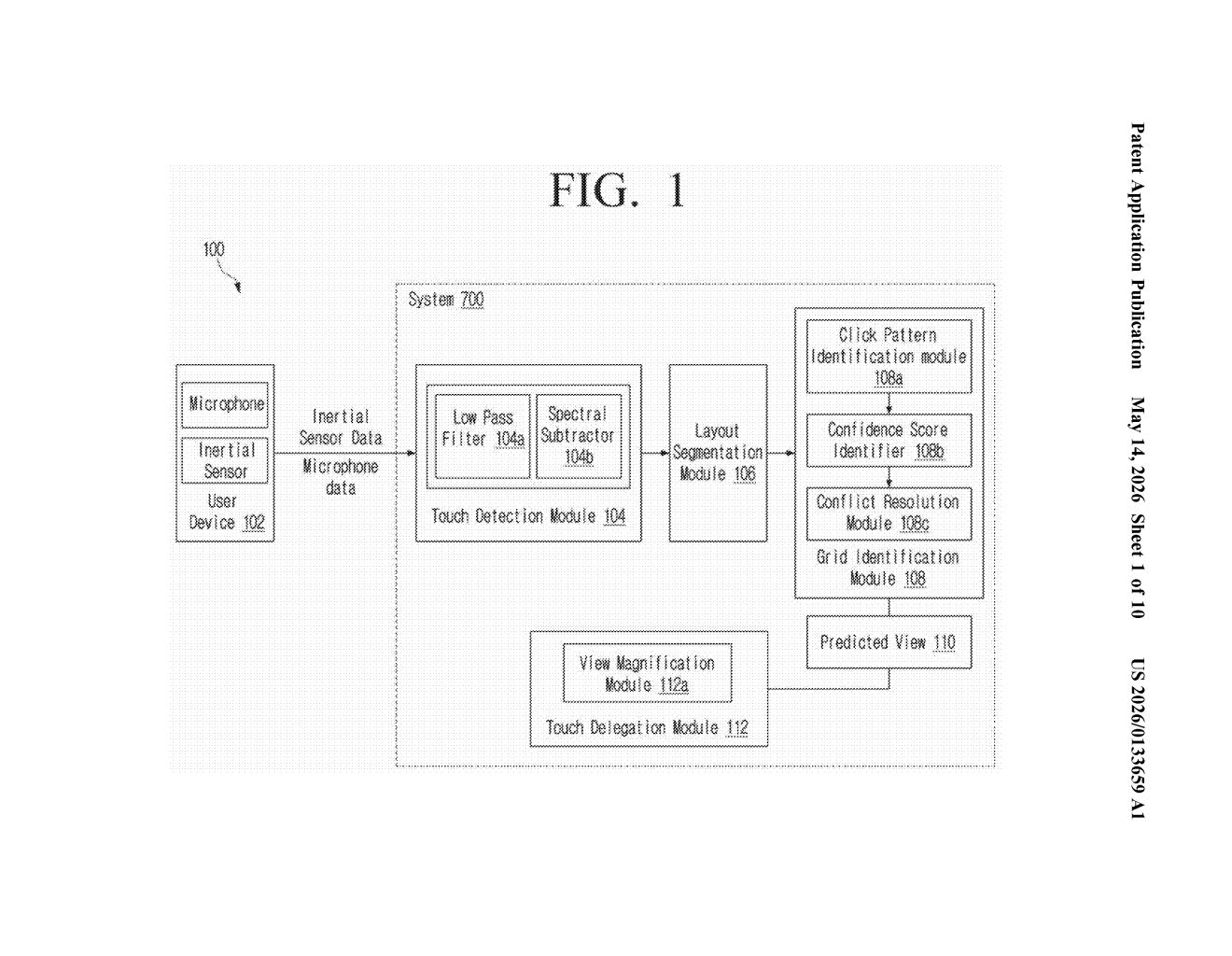

Samsung's patent describes a system that pulls in extra clues: microphone data (the tiny sound your finger makes when it contacts the glass) and inertial sensor data (the subtle physical vibration that travels through the device). By combining those signals with where the tap registered on screen, the system narrows down which specific object you were targeting.

The result is a more confident tap-recognition pipeline: one that doesn't rely solely on capacitive touch coordinates, but infers your intent from several sensor streams at once.
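To make the idea concrete, here is a minimal Python sketch of one way a device might confirm a tap from the two extra signals. It checks that the strongest microphone sample and the strongest accelerometer sample both clear a threshold and land within a few milliseconds of each other. The filing, as summarized here, does not spell out this logic, so the function names, the thresholds, and the 20 ms window are all illustrative assumptions. The sketch also requires both signals, whereas the patent allows either one.

```python
def strongest_peak(samples, sample_rate_hz):
    """Return (time_ms, amplitude) of the largest absolute sample.

    Hypothetical helper: real tap detection would filter the signal first.
    """
    if not samples:
        return 0.0, 0.0
    idx = max(range(len(samples)), key=lambda i: abs(samples[i]))
    return idx * 1000.0 / sample_rate_hz, abs(samples[idx])


def tap_detected(mic, mic_rate_hz, accel, accel_rate_hz,
                 mic_threshold=0.3, accel_threshold=0.5, max_gap_ms=20.0):
    """Report a tap when the acoustic and inertial peaks roughly coincide.

    All thresholds are assumed values on normalized signals, not figures
    taken from the patent.
    """
    mic_t, mic_amp = strongest_peak(mic, mic_rate_hz)
    acc_t, acc_amp = strongest_peak(accel, accel_rate_hz)
    return (mic_amp >= mic_threshold
            and acc_amp >= accel_threshold
            and abs(mic_t - acc_t) <= max_gap_ms)


# Usage: a click at 48 ms in the audio and a jolt at 50 ms in the accelerometer.
mic = [0.0] * 100
mic[48] = 0.8
accel = [0.0] * 10
accel[5] = -0.9
print(tap_detected(mic, 1000, accel, 100))  # True
```

Requiring the two peaks to coincide is one simple way to reject a loud noise with no jolt, or a bump with no tap sound. A shipping system would almost certainly need something more robust.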

How the sensor fusion pipeline resolves the target object

The patent describes an electronic apparatus — think a phone, tablet, or smart display — that uses at least one processor to fuse sensor inputs and resolve ambiguous touch events.

Here's the pipeline the patent lays out:

- Sensing data acquisition: The device collects data from a microphone (the acoustic tap signature) and/or an inertial measurement unit, or IMU (the physical jolt when you press the screen).

- Touch identification: Using that sensor data, the system confirms a touch input has occurred — independently of or in addition to the capacitive layer's own reading.

- Region mapping: The display is divided into regions, and the system identifies which region the touch falls into.

- Target object resolution: Among all the interactive objects associated with that touch event, the system picks the most likely target object based on the touch region — essentially disambiguating which UI element you meant to activate.
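The last two steps can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the patent's method: it divides the display into a fixed grid, maps an estimated touch point to a grid cell, and picks the candidate whose bounding box overlaps that cell the most. The `UiObject` type, the 4x8 grid, and the overlap-area scoring rule are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UiObject:
    """A hypothetical interactive element with a bounding box in pixels."""
    name: str
    left: float
    top: float
    right: float
    bottom: float


def region_of(x, y, width, height, cols=4, rows=8):
    """Map a touch point to a (col, row) cell of an assumed cols x rows grid."""
    col = max(0, min(int(x / width * cols), cols - 1))
    row = max(0, min(int(y / height * rows), rows - 1))
    return col, row


def region_bounds(region, width, height, cols=4, rows=8):
    """Return the (left, top, right, bottom) rectangle of a grid cell."""
    col, row = region
    cell_w, cell_h = width / cols, height / rows
    return col * cell_w, row * cell_h, (col + 1) * cell_w, (row + 1) * cell_h


def overlap_area(a, b):
    """Area shared by two (left, top, right, bottom) rectangles."""
    w = min(a[2], b[2]) - max(a[0], b[0])
    h = min(a[3], b[3]) - max(a[1], b[1])
    return max(w, 0.0) * max(h, 0.0)


def resolve_target(candidates, region, width, height, cols=4, rows=8):
    """Pick the candidate that overlaps the touch region most, or None."""
    bounds = region_bounds(region, width, height, cols, rows)
    scored = [(overlap_area((o.left, o.top, o.right, o.bottom), bounds), o)
              for o in candidates]
    best_area, best = max(scored, key=lambda pair: pair[0], default=(0.0, None))
    return best if best_area > 0 else None


# Usage: two overlapping buttons on a 400x800 display, touched at (150, 250).
archive = UiObject("archive", 90, 190, 160, 260)
reply = UiObject("reply", 120, 220, 210, 310)
region = region_of(150, 250, 400, 800)
print(region)                                                  # (1, 2)
print(resolve_target([archive, reply], region, 400, 800).name)  # reply
```

Both buttons contain the touch point, so coordinates alone cannot separate them. Scoring by overlap with the region is just one plausible tie-breaker, and the patent's claim language leaves the exact rule open.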

The claim is deliberately broad, covering any operation performed on the resolved target. The sensor fusion approach is what's novel here: using acoustic and motion signals as first-class inputs to touch interpretation, rather than as mere fallback or accessibility aids.

What this means for future Samsung display accuracy

Touch accuracy has been a persistent pain point on densely packed UIs — think crowded notification panels, small keyboard keys, or tightly spaced app icons on a large Samsung tablet. If this system works as described, it could reduce mis-taps without requiring the user to do anything differently.

For Samsung, this is also strategically interesting. As foldables and large-screen Galaxy devices become a bigger part of the lineup, multi-object disambiguation becomes a real UX problem worth solving. Because the approach relies on hardware most phones already ship with (microphones and inertial sensors), it should add little or no per-unit hardware cost if it proves reliable.

This is a solid incremental patent — not flashy, but it addresses a real and annoying problem. Using the tap sound and vibration to help resolve which UI element you meant is clever reuse of existing hardware. Whether Samsung can make this reliable enough to ship without introducing new false-positive headaches is the real engineering challenge.

Editorial commentary on a publicly published patent application. Not legal advice.