Google Patents a System That Edits Each Part of Your Photo Separately — Automatically

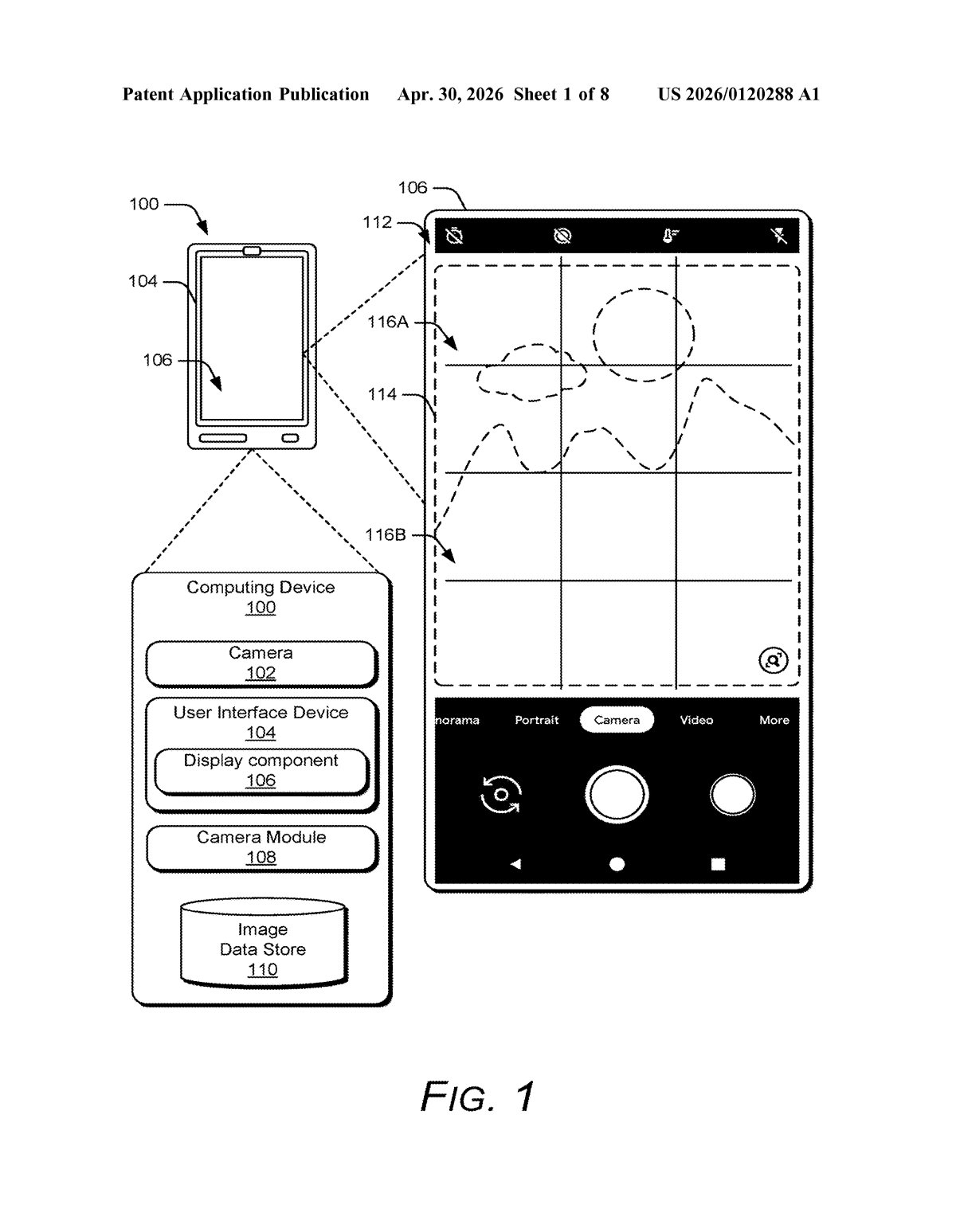

Your phone's camera already does a lot of invisible processing — but Google is patenting a system that goes further, splitting your photo into distinct zones and applying different edits to each one, all without you touching a slider.

What Google's auto region-editing actually does to your photos

Imagine taking a photo where the sky is perfectly exposed but your subject's face looks washed out, or the background is sharp but the shadows are a muddy mess. Fixing that manually means opening an editor, masking regions by hand, and tweaking settings zone by zone — the kind of work that takes a professional editor several minutes. Google's new patent describes a system that does all of that automatically, the moment you snap a photo.

Here's how it works in plain terms: the system looks at your image and divides it into distinct regions (think sky, faces, shadows, mid-tones). It then works out what type each region is and applies the adjustments suited to that type. One region might be brightened, another denoised, a third white-balance corrected. All the pieces are then stitched back together into a single image that looks better than any single global edit could produce.

The goal, as the patent puts it, is to produce pictures that exceed expectations for the kind of camera hardware you're actually using — and in some cases even rival shots taken with professional gear and edited in software like Lightroom.

How Google's masks isolate and adjust each photo region

The system starts by running a machine-learned segmentation model on the original image. Segmentation means the model draws boundaries around different parts of the scene — sky, skin, foliage, shadows — and assigns each cluster of pixels to a labeled region type. Think of it like a coloring-book outline drawn automatically over your photo.
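To make the "labeled region type" idea concrete, here is a minimal sketch of the last step of segmentation: turning per-pixel scores into a label map and a stencil for one region. The region names, the `scores` values, and the helper names `label_map` and `binary_mask` are all illustrative assumptions; a real model would produce the scores, and the article does not give the patent's actual label set.

```python
# Hypothetical region types; the patent's actual label set is not given here.
REGION_TYPES = ["sky", "skin", "foliage", "shadow"]


def label_map(scores):
    """Pick the highest-scoring region type for every pixel.

    scores[y][x] holds one score per entry in REGION_TYPES and stands in
    for the output of a trained segmentation model.
    """
    return [
        [max(range(len(px)), key=px.__getitem__) for px in row]
        for row in scores
    ]


def binary_mask(labels, region):
    """Return a 0/1 stencil marking the pixels assigned to `region`."""
    idx = REGION_TYPES.index(region)
    return [[1 if lab == idx else 0 for lab in row] for row in labels]


# Toy 2x3 "image": the top row scores as sky, the bottom row as skin and shadow.
scores = [
    [[0.9, 0.0, 0.1, 0.0], [0.8, 0.1, 0.1, 0.0], [0.7, 0.1, 0.1, 0.1]],
    [[0.1, 0.8, 0.0, 0.1], [0.1, 0.7, 0.1, 0.1], [0.0, 0.1, 0.1, 0.8]],
]
labels = label_map(scores)
sky_mask = binary_mask(labels, "sky")
```

Each number in `labels` is an index into `REGION_TYPES`, so the whole top row of this toy image ends up in the sky stencil.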

Each region then gets a mask, essentially a stencil that tells the processor exactly which pixels belong to that region. Raw segmentation masks can have fuzzy or jagged edges that don't line up with real object boundaries, so the patent describes refining them with a guided filter: an edge-preserving filter that uses the photo itself as a reference, so mask edges follow the actual contours of objects rather than bleeding across them.
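For readers curious what a guided filter does mechanically, the following is a rough sketch of the standard grayscale guided filter, written in plain Python for clarity rather than speed. The radius `r`, the smoothing term `eps`, and the toy one-row image are assumptions chosen for illustration; the article does not say which parameters or variant the patent uses.

```python
def box_mean(img, r):
    """Mean of each pixel's (2r+1)x(2r+1) neighbourhood, clipped at the borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ys = range(max(0, y - r), min(h, y + r + 1))
            xs = range(max(0, x - r), min(w, x + r + 1))
            total = sum(img[j][i] for j in ys for i in xs)
            out[y][x] = total / (len(ys) * len(xs))
    return out


def mul(a, b):
    """Element-wise product of two equally sized images."""
    return [[p * q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def guided_filter(guide, mask, r=1, eps=1e-3):
    """Refine `mask` so that its edges follow the edges in `guide`."""
    h, w = len(guide), len(guide[0])
    mean_i = box_mean(guide, r)
    mean_p = box_mean(mask, r)
    corr_ii = box_mean(mul(guide, guide), r)
    corr_ip = box_mean(mul(guide, mask), r)
    a = [[0.0] * w for _ in range(h)]
    b = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            var_i = corr_ii[y][x] - mean_i[y][x] ** 2
            cov_ip = corr_ip[y][x] - mean_i[y][x] * mean_p[y][x]
            # Local linear model: mask is approximated by a * guide + b.
            a[y][x] = cov_ip / (var_i + eps)
            b[y][x] = mean_p[y][x] - a[y][x] * mean_i[y][x]
    mean_a = box_mean(a, r)
    mean_b = box_mean(b, r)
    return [
        [mean_a[y][x] * guide[y][x] + mean_b[y][x] for x in range(w)]
        for y in range(h)
    ]


# One-row toy example: the photo's real edge sits between pixels 2 and 3,
# but the coarse mask overshoots by one pixel.
guide = [[0.9, 0.9, 0.9, 0.1, 0.1, 0.1]]
coarse_mask = [[1.0, 1.0, 1.0, 1.0, 0.0, 0.0]]
refined = guided_filter(guide, coarse_mask, r=1, eps=1e-3)
```

In this toy case the overshooting pixel (index 3) is pulled below 1 while the pixels on the bright side of the real edge stay close to 1, which is the "follow the contours" behaviour described above.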

With clean masks in hand, the processor applies region-specific adjustments independently. The patent lists several:

- Exposure — darkening or lightening each region separately

- Auto white balance (AWB) — correcting color temperature per region, so a warm-lit face and a cool blue sky can both look natural in the same frame

- Denoising — reducing grain in each zone at a level appropriate for that region's content

- Other characteristics — the claim is written broadly enough to cover sharpness, saturation, and similar adjustments
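The adjustments in this list can be sketched as a small lookup of settings per region type. Everything specific here is an assumption made for illustration: the `SETTINGS` values, the function names, the simple box blur standing in for a real denoiser, and the order in which the steps run. Pixels are `(r, g, b)` tuples in the range 0 to 1.

```python
# Hypothetical per-region settings; the article does not give the patent's values.
SETTINGS = {
    "sky": {"exposure_stops": -0.5, "wb_gains": (1.0, 1.0, 1.0), "denoise_radius": 1},
    "skin": {"exposure_stops": 0.5, "wb_gains": (0.95, 1.0, 1.08), "denoise_radius": 0},
}


def clamp(v):
    """Keep a channel value inside the displayable 0..1 range."""
    return min(1.0, max(0.0, v))


def apply_exposure(image, stops):
    """Brighten or darken: each stop doubles or halves the pixel values."""
    gain = 2.0 ** stops
    return [[tuple(clamp(c * gain) for c in px) for px in row] for row in image]


def apply_white_balance(image, gains):
    """Scale the red, green and blue channels by separate gains."""
    return [
        [tuple(clamp(c * g) for c, g in zip(px, gains)) for px in row]
        for row in image
    ]


def apply_denoise(image, radius):
    """Box blur as a stand-in for a real denoiser; radius 0 leaves the image alone."""
    if radius == 0:
        return image
    h, w = len(image), len(image[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            window = [
                image[j][i]
                for j in range(max(0, y - radius), min(h, y + radius + 1))
                for i in range(max(0, x - radius), min(w, x + radius + 1))
            ]
            row.append(tuple(sum(p[k] for p in window) / len(window) for k in range(3)))
        out.append(row)
    return out


def adjust_for_region(image, region):
    """Produce a copy of the image tuned for one region type."""
    s = SETTINGS[region]
    out = apply_denoise(image, s["denoise_radius"])
    out = apply_white_balance(out, s["wb_gains"])
    return apply_exposure(out, s["exposure_stops"])


image = [[(0.5, 0.5, 0.5), (0.5, 0.5, 0.5)]]
sky_version = adjust_for_region(image, "sky")
skin_version = adjust_for_region(image, "skin")
```

This sketch adjusts a full copy of the image for each region type and leaves it to the region's mask to decide which pixels of that copy are actually used. That is one possible design, not necessarily the one the patent claims.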

Finally, all the adjusted regions are merged back into a single output image and displayed. The whole pipeline runs on-device, triggered automatically when you capture a photo.
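One plausible way to do that merge is a per-pixel weighted blend, with each region's mask acting as the weight. The sketch below assumes soft masks with values between 0 and 1 and falls back to the original pixel wherever no region has any weight; the function name `merge_regions` and the toy values are hypothetical, and the patent may combine regions differently.

```python
def merge_regions(original, versions, masks):
    """Blend per-region images using their masks as per-pixel weights.

    versions[name][y][x] is an (r, g, b) tuple adjusted for that region.
    masks[name][y][x] is that region's weight in [0, 1] at the same pixel.
    Weights are normalised per pixel, so overlapping soft edges blend smoothly.
    """
    h, w = len(original), len(original[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            total = sum(masks[name][y][x] for name in versions)
            if total == 0:
                # No region claims this pixel, so keep it unchanged.
                row.append(original[y][x])
                continue
            row.append(tuple(
                sum(masks[name][y][x] * versions[name][y][x][k] for name in versions) / total
                for k in range(3)
            ))
        out.append(row)
    return out


# Toy 1x3 image: pixel 0 is pure sky, pixel 1 sits on a soft sky/skin edge,
# and pixel 2 belongs to no region.
original = [[(0.5, 0.5, 0.5)] * 3]
versions = {
    "sky": [[(0.2, 0.2, 0.2)] * 3],
    "skin": [[(0.8, 0.8, 0.8)] * 3],
}
masks = {
    "sky": [[1.0, 0.5, 0.0]],
    "skin": [[0.0, 0.5, 0.0]],
}
merged = merge_regions(original, versions, masks)
```

Blending with soft weights, rather than cutting hard along mask edges, is what keeps the seams between regions from showing.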

What this means for Pixel camera processing and rivals

Smartphone cameras have long used computational photography (HDR merging, Night Sight, portrait blur) to punch above their optical weight, but many of those techniques apply one adjustment across the whole frame or rely on bracketed exposures. A system that classifies regions by type and edits them with type-specific logic is a meaningful step toward the kind of local, context-aware editing that professional photo editors do manually in Lightroom or Photoshop. If this ships in a Pixel, it could noticeably narrow the gap between a quick phone snap and a carefully edited DSLR shot for everyday users.

For the broader industry, this is also a competitive signal. Apple, Samsung, and others are all pushing computational photography hard. A robust region-classification pipeline baked into the camera stack — not just a post-processing filter you apply later — could quietly become one of those features that makes users feel like their photos just look better without knowing exactly why.

This is one of those patents that describes something genuinely useful rather than speculative. Region-aware, automatic photo editing is a hard problem — getting mask edges clean enough that the seams are invisible is non-trivial — and the fact that Google is patenting the full pipeline from segmentation through per-region adjustment to merge suggests real engineering investment, not a concept sketch. If this ends up in Pixel's camera stack, it's the kind of quiet improvement that earns strong camera benchmark scores without needing a flashy marketing name.

Editorial commentary on a publicly published patent application. Not legal advice. Patentlyze may earn a commission if you click an affiliate link and make a purchase. This doesn't affect what we cover or how we cover it.